This repository contains the implementation of the paper:

Learning-Based Dimensionality Reduction for Computing Compact and Effective Local Feature Descriptors (ICRA2023)

Hao Dong, Xieyuanli Chen, Mihai Dusmanu, Viktor Larsson, Marc Pollefeys and Cyrill Stachniss

Link to the arXiv version of the paper is available.

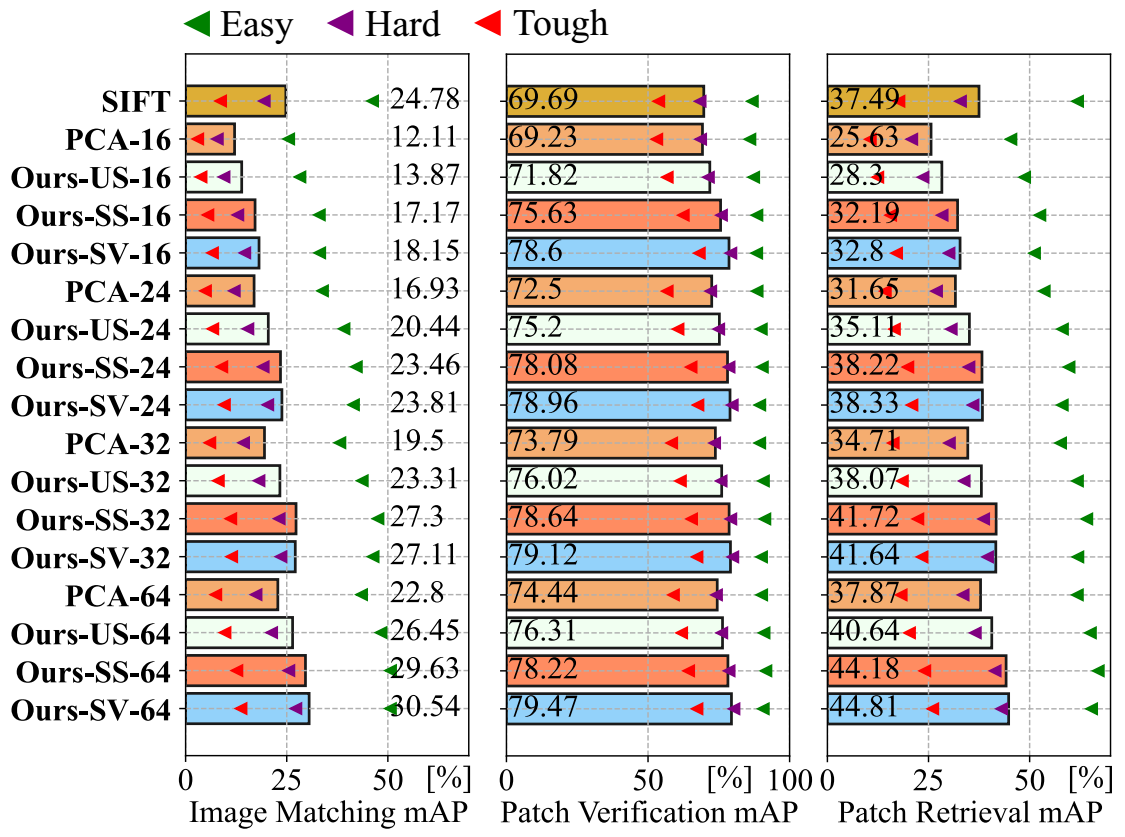

We propose and evaluate an MLP-based network for descriptor dimensionality reduction and show its superiority over PCA on multiple descriptors in various tasks.

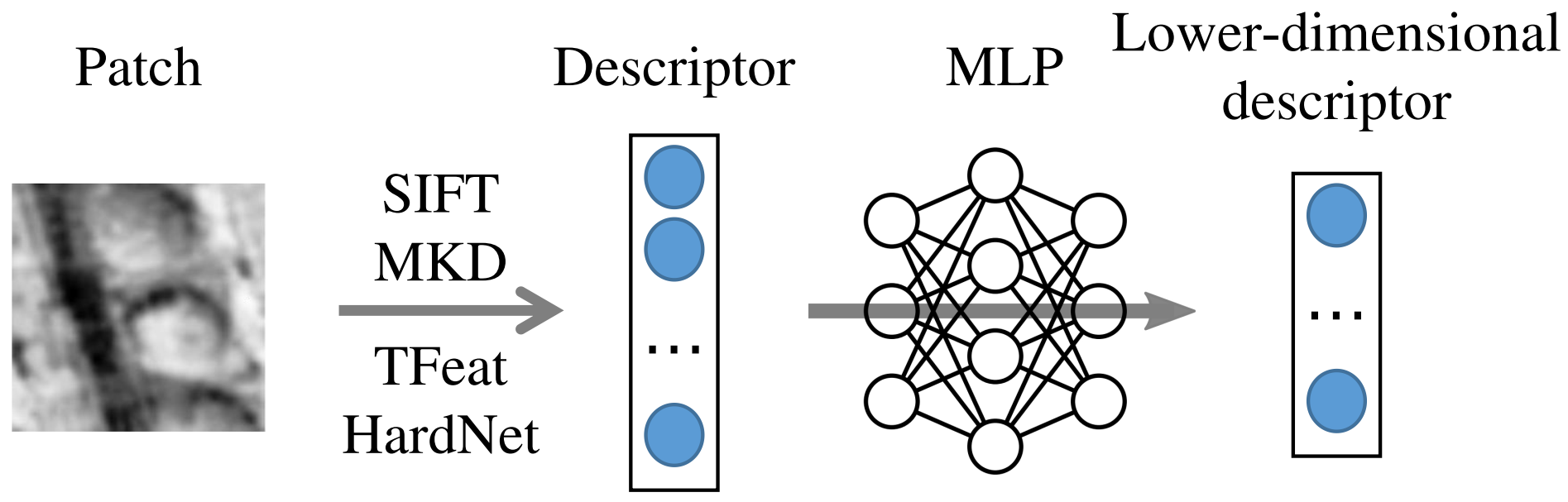

Overview of our approach. We first compute descriptors of the given image patches. An MLP-based network is then used for dimensionality reduction. We aim to learn an MLP-based projection that generates better lower-dimensional descriptors than PCA.

A distinctive representation of image patches in the form of features is a key component of many computer vision and robotics tasks, such as image matching, image retrieval, and visual localization. State-of-the-art descriptors, from hand-crafted descriptors such as SIFT to learned ones such as HardNet, are usually high-dimensional, with 128 dimensions or more. The higher the dimensionality, the larger the memory consumption and computational time for approaches using such descriptors. In this paper, we investigate multi-layer perceptrons (MLPs) to extract low-dimensional but high-quality descriptors. We thoroughly analyze our method in unsupervised, self-supervised, and supervised settings, and evaluate the dimensionality reduction results on four representative descriptors. We consider different applications, including visual localization, patch verification, image matching, and retrieval. The experiments show that our lightweight MLPs achieve better dimensionality reduction than PCA. The lower-dimensional descriptors generated by our approach outperform the original higher-dimensional descriptors in downstream tasks, especially for the hand-crafted ones.
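To make the comparison in the overview concrete, here is a minimal sketch of the two kinds of projection: a PCA baseline fitted with an SVD, and a small MLP that maps 128-D descriptors to 64-D. It is not the code in this repository. The function names, the two-layer architecture, the hidden width of 256, and the random (untrained) weights are all assumptions made for illustration, and NumPy is used only to keep the sketch dependency-light.

```python
import numpy as np

def l2_normalize(x, eps=1e-8):
    """Scale each row to (approximately) unit L2 norm."""
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)

def fit_pca(descs, out_dim):
    """Return (mean, components) for a PCA projection to out_dim dimensions."""
    mean = descs.mean(axis=0)
    # Rows of vt are principal directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(descs - mean, full_matrices=False)
    return mean, vt[:out_dim]

def pca_project(descs, mean, components):
    """Linear projection onto the leading principal directions."""
    return (descs - mean) @ components.T

def init_mlp(in_dim, hidden_dim, out_dim, rng):
    """Hypothetical two-layer MLP parameters (random, i.e. untrained)."""
    return {
        "w1": rng.standard_normal((in_dim, hidden_dim)) / np.sqrt(in_dim),
        "b1": np.zeros(hidden_dim),
        "w2": rng.standard_normal((hidden_dim, out_dim)) / np.sqrt(hidden_dim),
        "b2": np.zeros(out_dim),
    }

def mlp_project(descs, params):
    """Non-linear projection: linear -> ReLU -> linear -> L2 normalization."""
    hidden = np.maximum(descs @ params["w1"] + params["b1"], 0.0)
    return l2_normalize(hidden @ params["w2"] + params["b2"])

rng = np.random.default_rng(0)
# Random stand-in for 128-D descriptors such as SIFT or HardNet.
descs = l2_normalize(rng.standard_normal((1000, 128)))

mean, components = fit_pca(descs, out_dim=64)
pca_64 = pca_project(descs, mean, components)
mlp_64 = mlp_project(descs, init_mlp(128, 256, 64, rng))
print(pca_64.shape, mlp_64.shape)  # (1000, 64) (1000, 64)
```

Both functions produce 64-D descriptors. The difference is that the PCA projection is fixed by the data covariance, whereas the MLP weights would be learned with one of the training scripts.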

pip install numpy kornia tqdm torch torchvision scipy faiss tensorboard_logger tabulate

A simple example for SIFT-SV-64 with a pre-trained model:

python example.py

For SIFT (PCA):

python dr_pca.py --descriptor SIFT

For HardNet (PCA):

python dr_pca.py --descriptor HardNet

For SIFT (AE):

python dr_ae_SIFT.py

For HardNet (AE):

python dr_ae_HardNet.py

For SIFT (SS):

python dr_ss_SIFT.py

For HardNet (SS):

python dr_ss_HardNet.py

For SIFT (SV):

python dr_sv_SIFT.py

For HardNet (SV):

python dr_sv_HardNet.py

Download the HPatches dataset and benchmark:

git clone https://github.com/hpatches/hpatches-benchmark.git

cd hpatches-benchmark/

sh download.sh hpatches

Extract descriptors on the HPatches dataset and evaluate:

cd ..

python hpatches_extract_SIFT_64.py /path/to/HPatches/dataset

mkdir hpatches-benchmark/data/descriptors

mv SIFT_sv_dim64/ hpatches-benchmark/data/descriptors/

cd hpatches-benchmark/python/

python hpatches_eval.py --descr-name=SIFT_sv_dim64 --task=matching --delimiter=","

python hpatches_results.py --descr=SIFT_sv_dim64 --results-dir=results/ --task=matching

python hpatches_eval.py --descr-name=SIFT_sv_dim64 --task=verification --delimiter=","

python hpatches_results.py --descr=SIFT_sv_dim64 --results-dir=results/ --task=verification

python hpatches_eval.py --descr-name=SIFT_sv_dim64 --task=retrieval --delimiter=","

python hpatches_results.py --descr=SIFT_sv_dim64 --results-dir=results/ --task=retrieval

If you use our implementation in your academic work, please cite the corresponding paper:

@inproceedings{dong2022dr,

title={Learning-Based Dimensionality Reduction for Computing Compact and Effective Local Feature Descriptors},

author={Dong, Hao and Chen, Xieyuanli and Dusmanu, Mihai and Larsson, Viktor and Pollefeys, Marc and Stachniss, Cyrill},

booktitle={Proceedings of the IEEE International Conference on Robotics and Automation (ICRA)},

year={2023}

}

t-SNE visualization panels (one row per descriptor):

SIFT, SIFT-PCA-64, SIFT-Ours-SV-64

MKD, MKD-PCA-64, MKD-Ours-SV-64

TFeat, TFeat-PCA-64, TFeat-Ours-SV-64

HardNet, HardNet-PCA-64, HardNet-Ours-SV-64

We provide t-SNE embedding visualizations of the descriptors on UBC Phototour Liberty. We visualize the embeddings of SIFT, MKD, TFeat, and HardNet, together with their versions reduced by PCA and by our supervised method, by mapping the high-dimensional descriptors (128 and 64 dimensions) into 2D with t-SNE. We pass the image patches through the descriptor extractor, followed by PCA or the MLPs, to obtain lower-dimensional descriptors, and determine their 2D locations with t-SNE. Finally, we draw the entire patch at each location.

The visualization shows results similar to those discussed in the paper. For SIFT and MKD, the original descriptor space is irregular, and similar and dissimilar features overlap. A PCA projection therefore preserves this irregular structure of the descriptor space. After learning a more discriminative representation with a triplet loss, however, similar image patches are close to each other in the descriptor space, while dissimilar ones are far apart. For TFeat and HardNet, since the output space is already optimized for the
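
The triplet loss mentioned above can be sketched as follows. This is a generic margin-based formulation written in NumPy for illustration, not the loss code of this repository. The function name, the Euclidean distance, and the margin of 1.0 are assumptions, and the actual training scripts may use a different margin or sampling strategy.

```python
import numpy as np

def triplet_margin_loss(anchor, positive, negative, margin=1.0):
    """Mean of max(d(a, p) - d(a, n) + margin, 0) over a batch of triplets."""
    d_pos = np.linalg.norm(anchor - positive, axis=1)  # anchor-to-positive distance
    d_neg = np.linalg.norm(anchor - negative, axis=1)  # anchor-to-negative distance
    return np.maximum(d_pos - d_neg + margin, 0.0).mean()

anchor = np.array([[1.0, 0.0], [0.0, 1.0]])
positive = np.array([[1.0, 0.0], [0.0, 1.0]])    # identical to the anchors
negative = np.array([[-1.0, 0.0], [0.0, 1.0]])   # second negative collides with its anchor

loss = triplet_margin_loss(anchor, positive, negative, margin=1.0)
print(loss)  # 0.5
```

The first triplet is already separated by more than the margin and contributes zero. The second, where the negative sits on top of the anchor, contributes the full margin, so minimizing the loss pushes such negatives away.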

We greatly thank the authors of the following open-source projects: