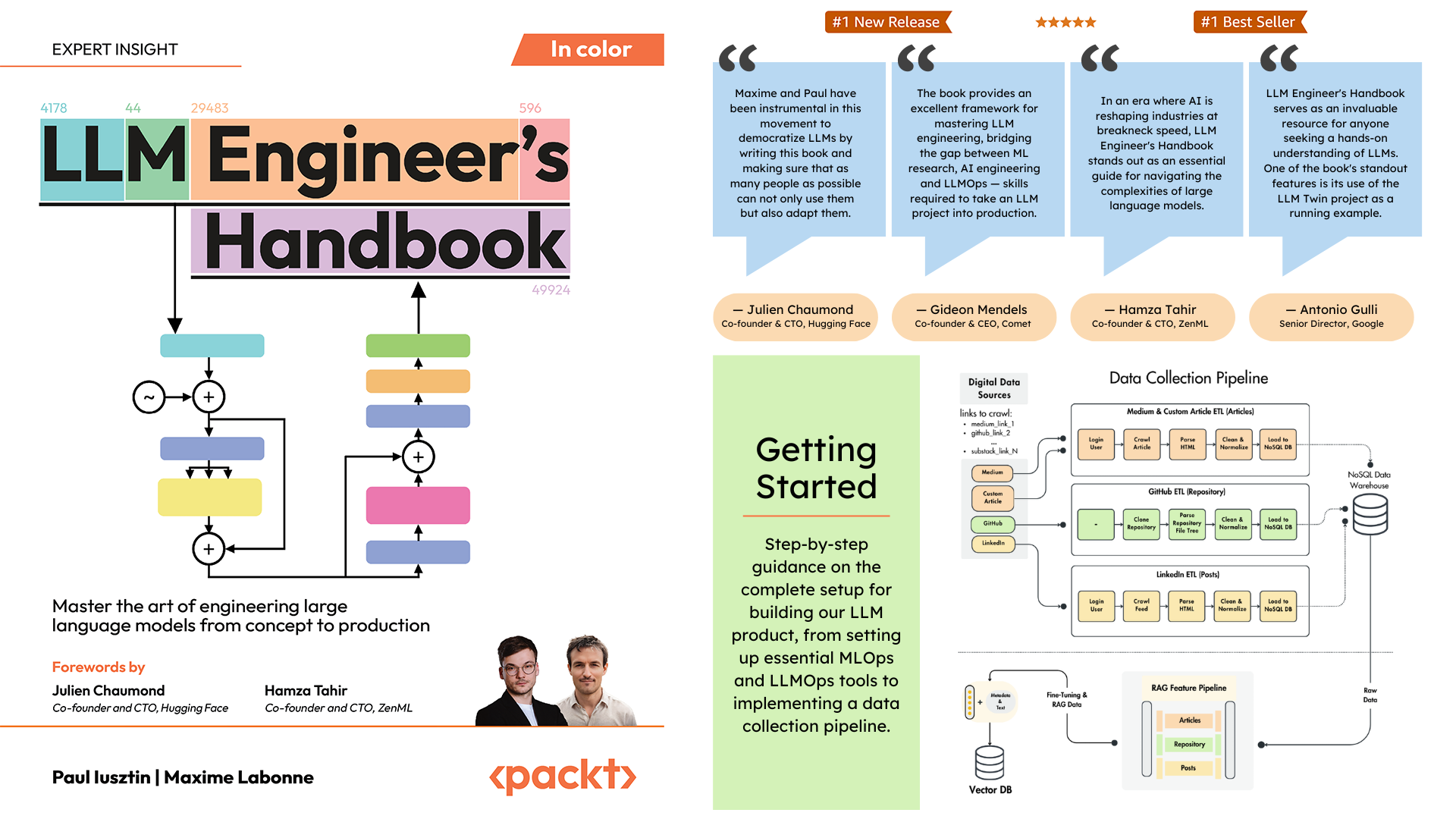

Official repository of the LLM Engineer's Handbook by Paul Iusztin and Maxime Labonne

Find the book on Amazon or Packt

The goal of this book is to create your own end-to-end LLM-based system using best practices:

- 📝 Data collection & generation

- 🔄 LLM training pipeline

- 📊 Simple RAG system

- 🚀 Production-ready AWS deployment

- 🔍 Comprehensive monitoring

- 🧪 Testing and evaluation framework

You can download and use the final trained model on Hugging Face.

Important

The code in this GitHub repository is actively maintained and may contain updates not reflected in the book. Always refer to this repository for the latest version of the code.

To install and run the project locally, you need the following dependencies.

| Tool | Version | Purpose | Installation Link |
|---|---|---|---|
| pyenv | ≥2.3.36 | Multiple Python versions (optional) | Install Guide |
| Python | 3.11 | Runtime environment | Download |
| Poetry | ≥1.8.3 and <2.0 | Package management | Install Guide |
| Docker | ≥27.1.1 | Containerization | Install Guide |
| AWS CLI | ≥2.15.42 | Cloud management | Install Guide |
| Git | ≥2.44.0 | Version control | Download |

The code also uses and depends on the following cloud services. For now, you don't have to do anything. We will guide you in the installation and deployment sections on how to use them:

| Service | Purpose |
|---|---|
| HuggingFace | Model registry |
| Comet ML | Experiment tracker |
| Opik | Prompt monitoring |
| ZenML | Orchestrator and artifacts layer |
| AWS | Compute and storage |
| MongoDB | NoSQL database |
| Qdrant | Vector database |
| GitHub Actions | CI/CD pipeline |

In the LLM Engineer's Handbook, Chapter 2 will walk you through each tool. Chapters 10 and 11 provide step-by-step guides on how to set up everything you need.

Here is the directory overview:

.

├── code_snippets/ # Standalone example code

├── configs/ # Pipeline configuration files

├── llm_engineering/ # Core project package

│ ├── application/

│ ├── domain/

│ ├── infrastructure/

│ ├── model/

├── pipelines/ # ML pipeline definitions

├── steps/ # Pipeline components

├── tests/ # Test examples

├── tools/ # Utility scripts

│ ├── run.py

│ ├── ml_service.py

│ ├── rag.py

│ ├── data_warehouse.py

llm_engineering/ is the main Python package implementing LLM and RAG functionality. It follows Domain-Driven Design (DDD) principles:

- domain/: Core business entities and structures

- application/: Business logic, crawlers, and RAG implementation

- model/: LLM training and inference

- infrastructure/: External service integrations (AWS, Qdrant, MongoDB, FastAPI)

The code logic and imports flow as follows: infrastructure → model → application → domain
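The flow above can be sketched as a small check. This is only an illustration: it assumes the arrow means a layer may import from its own layer or from any layer to its right, and never from a layer to its left. The module names in the example calls (`rag`, `documents`, `db`) are hypothetical and not taken from the repository.

```python
from typing import Optional

# Layer order taken from the README: infrastructure -> model -> application -> domain.
LAYERS = ["infrastructure", "model", "application", "domain"]


def layer_of(module_name: str) -> Optional[str]:
    """Return the llm_engineering layer a dotted module path belongs to, if any."""
    parts = module_name.split(".")
    if len(parts) >= 2 and parts[0] == "llm_engineering" and parts[1] in LAYERS:
        return parts[1]
    return None


def import_allowed(importer: str, imported: str) -> bool:
    """Check one import against the assumed rule: same-layer or rightward imports only."""
    src, dst = layer_of(importer), layer_of(imported)
    if src is None or dst is None:
        return True  # Outside the four layers: not covered by this rule.
    return LAYERS.index(src) <= LAYERS.index(dst)


# Hypothetical module names, used only to exercise the rule.
print(import_allowed("llm_engineering.application.rag", "llm_engineering.domain.documents"))
print(import_allowed("llm_engineering.domain.documents", "llm_engineering.infrastructure.db"))
```

Under this reading, the first call is allowed (application depends on domain) and the second is not (domain would depend on infrastructure).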

pipelines/: Contains the ZenML ML pipelines, which serve as the entry point for all the ML pipelines. Coordinates the data processing and model training stages of the ML lifecycle.

steps/: Contains individual ZenML steps, which are reusable components for building and customizing ZenML pipelines. Steps perform specific tasks (e.g., data loading, preprocessing) and can be combined within the ML pipelines.

tests/: Covers a few sample tests used as examples within the CI pipeline.

tools/: Utility scripts used to call the ZenML pipelines and inference code:

- run.py: Entry point script to run ZenML pipelines.

- ml_service.py: Starts the REST API inference server.

- rag.py: Demonstrates usage of the RAG retrieval module.

- data_warehouse.py: Used to export or import data from the MongoDB data warehouse through JSON files.

configs/: ZenML YAML configuration files to control the execution of pipelines and steps.

code_snippets/: Standalone code examples that can be run independently of the rest of the project.

Note

If you are experiencing issues while installing and running the repository, consider checking the Issues GitHub section for other people who solved similar problems or directly asking us for help.

Start by cloning the repository and navigating to the project directory:

git clone https://github.com/PacktPublishing/LLM-Engineers-Handbook.git

cd LLM-Engineers-Handbook

Next, we have to prepare your Python environment and its adjacent dependencies.

The project requires Python 3.11. You can either use your global Python installation or set up a project-specific version using pyenv.

Verify your Python version:

python --version # Should show Python 3.11.x

- Verify pyenv installation:

pyenv --version # Should show pyenv 2.3.36 or later

- Install Python 3.11.8:

pyenv install 3.11.8

- Verify the installation:

python --version # Should show Python 3.11.8

- Confirm Python version in the project directory:

python --version

# Output: Python 3.11.8

Note

The project includes a .python-version file that automatically sets the correct Python version when you're in the project directory.
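If you prefer to check the interpreter from Python itself, a minimal sketch could look like this. The function name `is_supported_python` is made up for this example; it only compares the major and minor version against the 3.11 requirement stated above.

```python
import sys


def is_supported_python(version_info=sys.version_info) -> bool:
    """Return True when the interpreter is a Python 3.11.x release."""
    return tuple(version_info[:2]) == (3, 11)


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "OK" if is_supported_python() else "expected 3.11.x"
    print(f"Python {current}: {status}")
```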

The project uses Poetry for dependency management.

- Verify Poetry installation:

poetry --version # Should show Poetry version 1.8.3 or later (and below 2.0)

- Set up the project environment and install dependencies:

poetry env use 3.11

poetry install --without aws

poetry run pre-commit install

This will:

- Configure Poetry to use Python 3.11

- Install project dependencies (excluding AWS-specific packages)

- Set up pre-commit hooks for code verification

As our task manager, we run all the scripts using Poe the Poet.

- Start a Poetry shell:

poetry shell

- Run project commands using Poe the Poet:

poetry poe ...

🔧 Troubleshooting Poe the Poet Installation

If you're experiencing issues with poethepoet, you can still run the project commands directly through Poetry. Here's how:

- Look up the command definition in pyproject.toml

- Use poetry run with the underlying command

Instead of:

poetry poe local-infrastructure-up

Use the direct command from pyproject.toml:

poetry run <actual-command-from-pyproject-toml>

Note: All project commands are defined in the [tool.poe.tasks] section of pyproject.toml.

Now, let's configure our local project with all the necessary credentials and tokens to run the code locally.

After you have installed all the dependencies, you must create and fill a .env file with your credentials to appropriately interact with other services and run the project. Setting your sensitive credentials in a .env file is a good security practice, as this file won't be committed to GitHub or shared with anyone else.

- First, copy our example by running the following:

cp .env.example .env # The file must be at your repository's root!

- Now, let's understand how to fill in all the essential variables within the .env file to get you started. The following are the mandatory settings we must complete when working locally:

To authenticate to OpenAI's API, you must fill out the OPENAI_API_KEY env var with an authentication token.

OPENAI_API_KEY=your_api_key_here

→ Check out this tutorial to learn how to provide one from OpenAI.

To authenticate to Hugging Face, you must fill out the HUGGINGFACE_ACCESS_TOKEN env var with an authentication token.

HUGGINGFACE_ACCESS_TOKEN=your_token_here

→ Check out this tutorial to learn how to provide one from Hugging Face.

To authenticate to Comet ML (required only during training) and Opik, you must fill out the COMET_API_KEY env var with your authentication token.

COMET_API_KEY=your_api_key_here

→ Check out this tutorial to learn how to get started with Opik. You can also access Opik's dashboard using 🔗this link.

When deploying the project to the cloud, we must set additional settings for Mongo, Qdrant, and AWS. If you are just working locally, the default values of these env vars will work out of the box. Detailed deployment instructions are available in Chapter 11 of the LLM Engineer's Handbook.

We must change the DATABASE_HOST env var with the URL pointing to your cloud MongoDB cluster.

DATABASE_HOST=your_mongodb_url

→ Check out this tutorial to learn how to create and host a MongoDB cluster for free.

Set USE_QDRANT_CLOUD to true, QDRANT_CLOUD_URL to the URL pointing to your cloud Qdrant cluster, and QDRANT_APIKEY to its API key.

USE_QDRANT_CLOUD=true

QDRANT_CLOUD_URL=your_qdrant_cloud_url

QDRANT_APIKEY=your_qdrant_api_key

→ Check out this tutorial to learn how to create a Qdrant cluster for free.

For your AWS set-up to work correctly, you need the AWS CLI installed on your local machine and properly configured with an admin user (or a user with enough permissions to create new SageMaker, ECR, and S3 resources; using an admin user will make everything more straightforward).

Chapter 2 provides step-by-step instructions on how to install the AWS CLI, create an admin user on AWS, and get an access key to set up the AWS_ACCESS_KEY and AWS_SECRET_KEY environment variables. If you already have an AWS admin user in place, you have to configure the following env vars in your .env file:

AWS_REGION=eu-central-1 # Change it with your AWS region.

AWS_ACCESS_KEY=your_aws_access_key

AWS_SECRET_KEY=your_aws_secret_key

AWS credentials are typically stored in ~/.aws/credentials. You can view this file directly using cat or similar commands:

cat ~/.aws/credentials

Important

Additional configuration options are available in settings.py. Any variable in the Settings class can be configured through the .env file.
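Before running anything, it can be useful to confirm that the mandatory variables are present in your .env file. The following is a rough, standard-library-only sketch under simplifying assumptions: it handles plain KEY=VALUE lines only, treats placeholder values such as your_api_key_here as set, and does not strip inline comments. The helper names are invented for this example and are not part of the project's Settings class.

```python
from pathlib import Path

# Variables the README lists as mandatory for local work.
REQUIRED_KEYS = ("OPENAI_API_KEY", "HUGGINGFACE_ACCESS_TOKEN", "COMET_API_KEY")


def parse_env(text: str) -> dict:
    """Parse simple KEY=VALUE lines, skipping blanks and full-line comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip("'\"")
    return values


def missing_keys(text: str, required=REQUIRED_KEYS) -> list:
    """Return the required keys that are absent or empty."""
    values = parse_env(text)
    return [key for key in required if not values.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    if env_path.exists():
        print("Missing:", missing_keys(env_path.read_text(encoding="utf-8")) or "none")
    else:
        print("No .env file found in the current directory")
```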

When running the project locally, we host a MongoDB and Qdrant database using Docker. Also, a testing ZenML server is made available through their Python package.

Warning

You need Docker installed (>= v27.1.1)

For ease of use, you can start the whole local development infrastructure with the following command:

poetry poe local-infrastructure-up

Also, you can stop the ZenML server and all the Docker containers using the following command:

poetry poe local-infrastructure-down

Warning

When running on macOS, before starting the server, export the following environment variable:

export OBJC_DISABLE_INITIALIZE_FORK_SAFETY=YES

Otherwise, the connection between the local server and pipeline will break. 🔗 More details in this issue.

This is done by default when using Poe the Poet.

Start the inference real-time RESTful API:

poetry poe run-inference-ml-service

Important

The LLM microservice, called by the RESTful API, will work only after deploying the LLM to AWS SageMaker.

ZenML dashboard URL: localhost:8237

Default credentials:

- username: default

- password:

→ Find out more about using and setting up ZenML.

Qdrant REST API URL: localhost:6333

Qdrant dashboard URL: localhost:6333/dashboard

→ Find out more about using and setting up Qdrant with Docker.

MongoDB database URI: mongodb://llm_engineering:llm_engineering@127.0.0.1:27017

Database name: twin

Default credentials:

- username: llm_engineering

- password: llm_engineering

→ Find out more about using and setting up MongoDB with Docker.
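To confirm that the local services are actually listening after starting the infrastructure, a small TCP probe like the one below can help. It is a sketch that only checks whether the ports listed above accept connections on 127.0.0.1; it does not verify that the services behind them are healthy. The names `LOCAL_SERVICES` and `is_listening` are made up for this example.

```python
import socket

# Ports as listed in the README for the local setup; 127.0.0.1 stands in for localhost.
LOCAL_SERVICES = {
    "ZenML dashboard": ("127.0.0.1", 8237),
    "Qdrant": ("127.0.0.1", 6333),
    "MongoDB": ("127.0.0.1", 27017),
}


def is_listening(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for name, (host, port) in LOCAL_SERVICES.items():
        state = "up" if is_listening(host, port) else "not reachable"
        print(f"{name} ({host}:{port}): {state}")
```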

You can search your MongoDB collections using your IDE's MongoDB plugin (which you have to install separately). Use the database URI to connect to the MongoDB database hosted within the Docker container: mongodb://llm_engineering:llm_engineering@127.0.0.1:27017
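If a plugin or client asks for the connection details as separate fields, the URI can be split with the standard library. This is only an illustration of how the URI above decomposes; it does not connect to the database.

```python
from urllib.parse import urlsplit

# Connection string exactly as given in the README for the local Docker container.
MONGO_URI = "mongodb://llm_engineering:llm_engineering@127.0.0.1:27017"

parts = urlsplit(MONGO_URI)
connection = {
    "scheme": parts.scheme,
    "username": parts.username,
    "password": parts.password,
    "host": parts.hostname,
    "port": parts.port,
}
print(connection)
```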

Important

Everything related to training or running the LLMs (e.g., training, evaluation, inference) can only be run if you set up AWS SageMaker, as explained in the next section on cloud infrastructure.

Here we will quickly present how to deploy the project to AWS and other serverless services. We won't go into the details (as everything is presented in the book) but only point out the main steps you have to go through.

First, reinstall your Python dependencies with the AWS group:

poetry install --with aws

Note

Chapter 10 provides step-by-step instructions in the section "Implementing the LLM microservice using AWS SageMaker".

By this point, we expect you to have AWS CLI installed and your AWS CLI and project's env vars (within the .env file) properly configured with an AWS admin user.

To ensure best practices, we must create a new AWS user restricted to creating and deleting only resources related to AWS SageMaker. Create it by running:

poetry poe create-sagemaker-role

It will create a sagemaker_user_credentials.json file at the root of your repository with your new AWS_ACCESS_KEY and AWS_SECRET_KEY values. Before switching your .env file to these new credentials, also run the following command to create the execution role, since it must be created using your admin credentials.

To create the IAM execution role used by AWS SageMaker to access other AWS resources on our behalf, run the following:

poetry poe create-sagemaker-execution-role

It will create a sagemaker_execution_role.json file at the root of your repository with your new AWS_ARN_ROLE value. Add it to your .env file.

Once you've updated the AWS_ACCESS_KEY, AWS_SECRET_KEY, and AWS_ARN_ROLE values in your .env file, you can use AWS SageMaker. Note that this step is crucial to complete the AWS setup.
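Updating the three values can be done by hand, but if you want to script it, the following sketch shows one way to replace or append KEY=VALUE lines in the text of a .env file. The helper `upsert_env_vars` is hypothetical and the values are placeholders; copy the real ones from the generated JSON files yourself, since their exact structure is not described here.

```python
def upsert_env_vars(env_text: str, updates: dict) -> str:
    """Return env_text with each KEY=VALUE in updates replaced in place or appended."""
    remaining = dict(updates)
    lines = []
    for line in env_text.splitlines():
        key = line.split("=", 1)[0].strip()
        is_assignment = "=" in line and not line.lstrip().startswith("#")
        if is_assignment and key in remaining:
            lines.append(f"{key}={remaining.pop(key)}")
        else:
            lines.append(line)
    # Keys that were not present yet go at the end.
    lines.extend(f"{key}={value}" for key, value in remaining.items())
    return "\n".join(lines) + "\n"


current = "AWS_REGION=eu-central-1\nAWS_ACCESS_KEY=old_admin_key\n"
print(
    upsert_env_vars(
        current,
        {
            "AWS_ACCESS_KEY": "your_new_access_key",
            "AWS_SECRET_KEY": "your_new_secret_key",
            "AWS_ARN_ROLE": "your_execution_role_arn",
        },
    )
)
```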

We start the training pipeline through ZenML by running the following:

poetry poe run-training-pipeline

This will start the training code using the configs from configs/training.yaml directly in SageMaker. You can visualize the results in Comet ML's dashboard.

We start the evaluation pipeline through ZenML by running the following:

poetry poe run-evaluation-pipeline

This will start the evaluation code using the configs from configs/evaluating.yaml directly in SageMaker. You can visualize the results in *-results datasets saved to your Hugging Face profile.

To create an AWS SageMaker Inference Endpoint, run:

poetry poe deploy-inference-endpoint

To test it out, run:

poetry poe test-sagemaker-endpoint

To delete it, run:

poetry poe delete-inference-endpoint

The ML pipelines, artifacts, and containers are deployed to AWS by leveraging ZenML's deployment features. Thus, you must create an account with ZenML Cloud and follow their guide on deploying a ZenML stack to AWS. Alternatively, Chapter 11, section Deploying the LLM Twin's pipelines to the cloud, provides step-by-step instructions on what you must do.

We leverage Qdrant's and MongoDB's serverless options when deploying the project. Thus, you can either follow Qdrant's and MongoDB's tutorials on how to create a freemium cluster for each or go through Chapter 11, section Deploying the LLM Twin's pipelines to the cloud and follow our step-by-step instructions.

We use GitHub Actions to implement our CI/CD pipelines. To implement your own, you have to fork our repository and set the following env vars as Actions secrets in your forked repository:

- AWS_ACCESS_KEY_ID

- AWS_SECRET_ACCESS_KEY

- AWS_ECR_NAME

- AWS_REGION

Also, we provide instructions on how to set everything up in Chapter 11, section Adding LLMOps to the LLM Twin.

You can visualize the results on Comet ML's and Opik's self-hosted dashboards if you create a Comet account and correctly set the COMET_API_KEY env var. As Opik is powered by Comet, you don't have to set up anything else beyond Comet.

All the ML pipelines will be orchestrated behind the scenes by ZenML. A few exceptions exist when running utility scripts, such as exporting or importing from the data warehouse.

The ZenML pipelines are the entry point for most processes throughout this project. They are under the pipelines/ folder. Thus, when you want to understand or debug a workflow, starting with the ZenML pipeline is the best approach.

To see the pipelines running and their results:

- go to your ZenML dashboard

- go to the Pipelines section

- click on a specific pipeline (e.g., feature_engineering)

- click on a specific run (e.g., feature_engineering_run_2024_06_20_18_40_24)

- click on a specific step or artifact of the DAG to find more details about it
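When sorting or filtering runs outside the dashboard, the run name from the example above can be split into its pipeline name and start time. This sketch assumes run names follow the pattern `<pipeline>_run_<YYYY_MM_DD_HH_MM_SS>`, as the example suggests; runs named differently would raise a `ValueError`.

```python
from datetime import datetime


def parse_run_name(run_name: str):
    """Split '<pipeline>_run_<YYYY_MM_DD_HH_MM_SS>' into pipeline name and start time."""
    pipeline, _, stamp = run_name.rpartition("_run_")
    if not pipeline:
        raise ValueError(f"Unexpected run name: {run_name!r}")
    return pipeline, datetime.strptime(stamp, "%Y_%m_%d_%H_%M_%S")


pipeline, started = parse_run_name("feature_engineering_run_2024_06_20_18_40_24")
print(pipeline, started.isoformat())  # feature_engineering 2024-06-20T18:40:24
```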

Now, let's explore all the pipelines you can run. From data collection to training, we will present them in their natural order to go through the LLM project end-to-end.

Run the data collection ETL:

poetry poe run-digital-data-etl

Warning

You must have Chrome (or another Chromium-based browser) installed on your system for LinkedIn and Medium crawlers to work (which use Selenium under the hood). Based on your Chrome version, the Chromedriver will be automatically installed to enable Selenium support. Another option is to run everything using our Docker image if you don't want to install Chrome. For example, to run all the pipelines combined you can run poetry poe run-docker-end-to-end-data-pipeline. Note that the command can be tweaked to support any other pipeline.

If, for any other reason, you don't have a Chromium-based browser installed and don't want to use Docker, you have two other options to bypass this Selenium issue:

- Comment out all the code related to Selenium, Chrome and all the links that use Selenium to crawl them (e.g., Medium), such as the chromedriver_autoinstaller.install() command from application.crawlers.base and other static calls that check for Chrome drivers and Selenium.

- Install Google Chrome using your CLI in environments such as GitHub Codespaces or other cloud VMs using the same command as in our Docker file.

To add additional links to collect from, go to configs/digital_data_etl_[author_name].yaml and add them to the links field. Also, you can create a completely new file and specify it at run time, like this: python -m llm_engineering.interfaces.orchestrator.run --run-etl --etl-config-filename configs/digital_data_etl_[your_name].yaml
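Before adding links, you may want to know which of them will require Chrome. The sketch below flags links on the two sites that, per the warning above, are crawled with Selenium. Matching by domain name is an assumption made for this example (the project's crawlers may dispatch differently), and the sample links are hypothetical.

```python
from urllib.parse import urlsplit

# Per the README, the LinkedIn and Medium crawlers rely on Selenium (and thus Chrome).
# Matching on these domain names is an assumption made for this sketch.
SELENIUM_DOMAINS = ("linkedin.com", "medium.com")


def needs_selenium(link: str) -> bool:
    """Return True if the link's host is, or is a subdomain of, a Selenium-crawled site."""
    host = (urlsplit(link).hostname or "").lower()
    return any(host == domain or host.endswith("." + domain) for domain in SELENIUM_DOMAINS)


# Hypothetical links, standing in for the links field of a config file.
links = [
    "https://medium.com/@someone/some-article",
    "https://github.com/PacktPublishing/LLM-Engineers-Handbook",
]
for link in links:
    label = "selenium" if needs_selenium(link) else "other"
    print(label, link)
```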

Run the feature engineering pipeline:

poetry poe run-feature-engineering-pipeline

Generate the instruct dataset:

poetry poe run-generate-instruct-datasets-pipeline

Generate the preference dataset:

poetry poe run-generate-preference-datasets-pipeline

Run all of the above compressed into a single pipeline:

poetry poe run-end-to-end-data-pipeline

Export the data from the data warehouse to JSON files:

poetry poe run-export-data-warehouse-to-json

Import data to the data warehouse from JSON files (by default, it imports the data from the data/data_warehouse_raw_data directory):

poetry poe run-import-data-warehouse-from-json

Export ZenML artifacts to JSON:

poetry poe run-export-artifact-to-json-pipeline

This will export the following ZenML artifacts to the output folder as JSON files (it will take their latest version):

- cleaned_documents.json

- instruct_datasets.json

- preference_datasets.json

- raw_documents.json

You can configure what artifacts to export by tweaking the configs/export_artifact_to_json.yaml configuration file.
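After the export finishes, a quick sanity check of the resulting files might look like the sketch below. It assumes the output folder is a directory named `output` relative to where you run it, and it makes no assumption about the internal structure of each file beyond it being valid JSON. The helper name `summarize_exports` is invented for this example.

```python
import json
from pathlib import Path

# File names as listed in the README.
ARTIFACT_FILES = (
    "cleaned_documents.json",
    "instruct_datasets.json",
    "preference_datasets.json",
    "raw_documents.json",
)


def summarize_exports(output_dir: Path) -> dict:
    """Describe each expected export: missing, or its top-level JSON type and size."""
    summary = {}
    for name in ARTIFACT_FILES:
        path = output_dir / name
        if not path.exists():
            summary[name] = "missing"
            continue
        data = json.loads(path.read_text(encoding="utf-8"))
        size = len(data) if isinstance(data, (list, dict)) else 1
        summary[name] = f"{type(data).__name__} with {size} top-level item(s)"
    return summary


if __name__ == "__main__":
    # "output" is an assumed relative path; adjust it to where your exports land.
    for name, description in summarize_exports(Path("output")).items():
        print(f"{name}: {description}")
```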

Run the training pipeline:

poetry poe run-training-pipeline

Run the evaluation pipeline:

poetry poe run-evaluation-pipeline

Warning

For this to work, make sure you properly configured AWS SageMaker as described in Set up cloud infrastructure (for production).

Call the RAG retrieval module with a test query:

poetry poe call-rag-retrieval-module

Start the inference real-time RESTful API:

poetry poe run-inference-ml-service

Call the inference real-time RESTful API with a test query:

poetry poe call-inference-ml-service

Remember that you can monitor the prompt traces on Opik.

Warning

For the inference service to work, you must have the LLM microservice deployed to AWS SageMaker, as explained in the setup cloud infrastructure section.

Check or fix your linting issues:

poetry poe lint-check

poetry poe lint-fix

Check or fix your formatting issues:

poetry poe format-check

poetry poe format-fix

Check the code for leaked credentials:

poetry poe gitleaks-check

Run all the tests using the following command:

poetry poe test

Based on the setup and usage steps described above, assuming the local and cloud infrastructure works and the .env is filled as expected, follow the next steps to run the LLM system end-to-end:

- Collect data: poetry poe run-digital-data-etl

- Compute features: poetry poe run-feature-engineering-pipeline

- Compute instruct dataset: poetry poe run-generate-instruct-datasets-pipeline

- Compute preference alignment dataset: poetry poe run-generate-preference-datasets-pipeline

Important

From now on, for these steps to work, you need to properly set up AWS SageMaker, such as running poetry install --with aws and filling in the AWS-related environment variables and configs.

- SFT fine-tuning Llama 3.1: poetry poe run-training-pipeline

- For DPO, go to configs/training.yaml, change finetuning_type to dpo, and run poetry poe run-training-pipeline again

- Evaluate fine-tuned models: poetry poe run-evaluation-pipeline
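Switching between SFT and DPO is a one-line change in configs/training.yaml. If you want to script it, the sketch below rewrites that value with a regular expression rather than a YAML parser, which keeps it dependency-free and leaves comments untouched. It assumes the key appears as `finetuning_type: <value>` on its own line; the sample text is a hypothetical excerpt, not the real file.

```python
import re


def set_finetuning_type(yaml_text: str, new_type: str) -> str:
    """Replace the value of the first finetuning_type line, keeping its indentation."""
    pattern = re.compile(r"^([ \t]*finetuning_type:[ \t]*)\S+", flags=re.MULTILINE)
    updated, count = pattern.subn(lambda m: m.group(1) + new_type, yaml_text, count=1)
    if count == 0:
        raise ValueError("No finetuning_type key found")
    return updated


# Hypothetical excerpt; the real configs/training.yaml may contain other keys.
sample = "parameters:\n  finetuning_type: sft\n"
print(set_finetuning_type(sample, "dpo"))
```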


- Call only the RAG retrieval module: poetry poe call-rag-retrieval-module

- Deploy the LLM Twin microservice to SageMaker: poetry poe deploy-inference-endpoint

- Test the LLM Twin microservice: poetry poe test-sagemaker-endpoint

- Start end-to-end RAG server: poetry poe run-inference-ml-service

- Test RAG server: poetry poe call-inference-ml-service
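The steps above can also be chained from a script that stops at the first failing command. The following is a minimal sketch covering only the data stage; the `run_tasks` helper and the `dry_runner` stand-in are made up for this example. As written, the main block only prints the commands. Drop the `runner=dry_runner` argument to actually execute them.

```python
import subprocess

# Task names in the order the README lists them for the data stage.
DATA_TASKS = [
    "run-digital-data-etl",
    "run-feature-engineering-pipeline",
    "run-generate-instruct-datasets-pipeline",
    "run-generate-preference-datasets-pipeline",
]


def run_tasks(tasks, runner=subprocess.run) -> list:
    """Run `poetry poe <task>` for each task in order; stop at the first failure."""
    completed = []
    for task in tasks:
        result = runner(["poetry", "poe", task])
        if result.returncode != 0:
            print(f"{task} failed with exit code {result.returncode}; stopping")
            break
        completed.append(task)
    return completed


if __name__ == "__main__":
    # Dry run: a stand-in runner that only prints each command and reports success.
    def dry_runner(command):
        print(" ".join(command))
        return subprocess.CompletedProcess(command, returncode=0)

    run_tasks(DATA_TASKS, runner=dry_runner)
```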

This course is an open-source project released under the MIT license. Thus, as long as you distribute our LICENSE and acknowledge our work, you can safely clone or fork this project and use it as a source of inspiration for whatever you want (e.g., university projects, college degree projects, personal projects, etc.).