Accepted to ICCV 2021!

This repository is built upon DeiT, timm, and mmdetection.

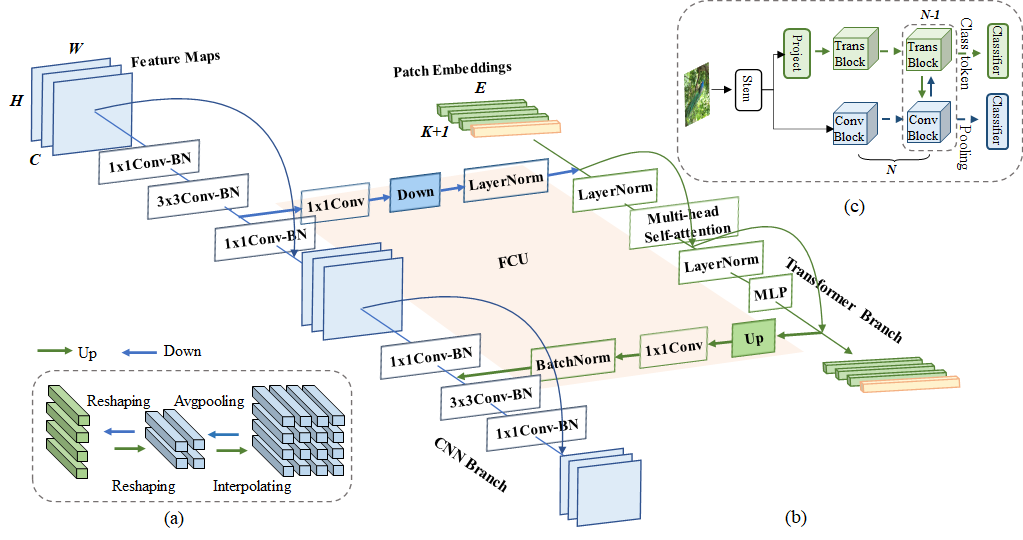

Within Convolutional Neural Networks (CNNs), convolution operations are good at extracting local features but have difficulty capturing global representations. Within visual transformers, the cascaded self-attention modules can capture long-distance feature dependencies but unfortunately deteriorate local feature details. In this paper, we propose a hybrid network structure, termed Conformer, to take advantage of convolutional operations and self-attention mechanisms for enhanced representation learning. Conformer is rooted in the Feature Coupling Unit (FCU), which fuses local features and global representations under different resolutions in an interactive fashion. Conformer adopts a concurrent structure so that local features and global representations are retained to the maximum extent. Experiments show that Conformer, under comparable parameter complexity, outperforms the visual transformer (DeiT-B) by 2.3% on ImageNet. On MSCOCO, it outperforms ResNet-101 by 3.7% and 3.6% mAP for object detection and instance segmentation, respectively, demonstrating its great potential as a general backbone network.

The basic architecture of the Conformer is shown below:

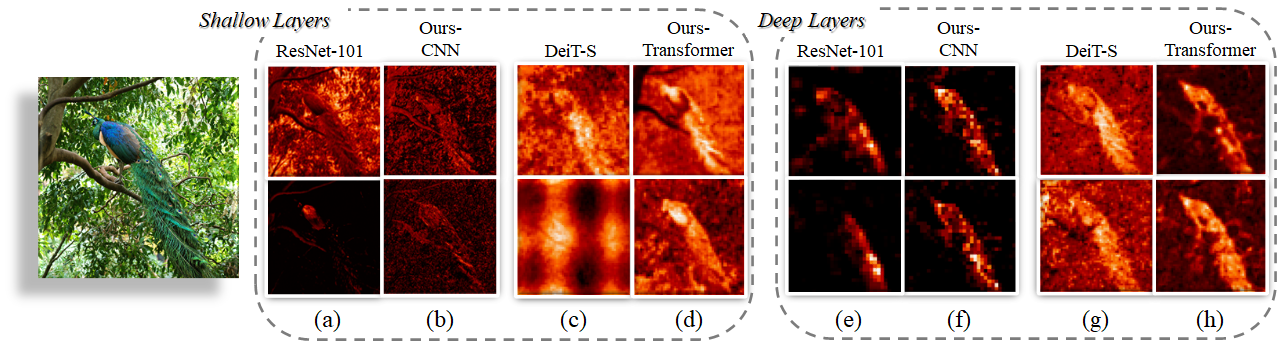

We also compare the feature maps of a CNN (ResNet-101), a Visual Transformer (DeiT-S), and the proposed Conformer below.

The patch embeddings in transformer are reshaped to feature maps for visualization. While CNN activates discriminative local regions (

First, install PyTorch 1.7.0+, torchvision 0.8.1+, and pytorch-image-models (timm) 0.3.2:

    conda install -c pytorch pytorch torchvision
    pip install timm==0.3.2

Download and extract ImageNet train and val images from http://image-net.org/.

The directory structure is the standard layout for torchvision's `datasets.ImageFolder`; the training and validation data are expected to be in the `train/` and `val/` folders, respectively:

    /path/to/imagenet/
      train/
        class1/
          img1.jpeg
        class2/
          img2.jpeg
      val/
        class1/
          img3.jpeg
        class2/
          img4.jpeg
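Before launching a long training run, it can help to confirm that the dataset folder really follows this layout. The snippet below is a minimal sketch using only the Python standard library. The helper names `check_imagefolder_layout` and `make_demo_tree` are hypothetical and not part of this repository, and the check that both splits share the same class folders is an assumption about what a sane ImageNet copy looks like rather than something the training script enforces.

```python
import os
import tempfile


def check_imagefolder_layout(root):
    """Return {split: {class_name: file_count}} for train/ and val/ under root.

    Raises FileNotFoundError if a split folder is missing, and ValueError if
    the two splits do not contain the same class sub-folders.
    """
    summary = {}
    for split in ("train", "val"):
        split_dir = os.path.join(root, split)
        if not os.path.isdir(split_dir):
            raise FileNotFoundError(f"missing split folder: {split_dir}")
        classes = {}
        for name in sorted(os.listdir(split_dir)):
            class_dir = os.path.join(split_dir, name)
            if os.path.isdir(class_dir):
                # Count regular files only; nested folders are ignored here.
                classes[name] = sum(
                    os.path.isfile(os.path.join(class_dir, f))
                    for f in os.listdir(class_dir)
                )
        summary[split] = classes
    if set(summary["train"]) != set(summary["val"]):
        raise ValueError("train/ and val/ contain different class folders")
    return summary


def make_demo_tree(root):
    """Create the tiny example tree from this section, using empty files."""
    files = [
        ("train", "class1", "img1.jpeg"),
        ("train", "class2", "img2.jpeg"),
        ("val", "class1", "img3.jpeg"),
        ("val", "class2", "img4.jpeg"),
    ]
    for split, cls, fname in files:
        os.makedirs(os.path.join(root, split, cls), exist_ok=True)
        open(os.path.join(root, split, cls, fname), "w").close()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as demo_root:
        make_demo_tree(demo_root)
        print(check_imagefolder_layout(demo_root))
```

To check a real dataset, call `check_imagefolder_layout("/path/to/imagenet")` and inspect the per-class counts it returns.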

To train Conformer-S on ImageNet on a single node with 8 GPUs for 300 epochs, run:

    export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7
    OUTPUT='./output/Conformer_small_patch16_batch_1024_lr1e-3_300epochs'

    python -m torch.distributed.launch --master_port 50130 --nproc_per_node=8 --use_env main.py \
        --model Conformer_small_patch16 \
        --data-set IMNET \
        --batch-size 128 \
        --lr 0.001 \
        --num_workers 4 \
        --data-path /data/user/Dataset/ImageNet_ILSVRC2012/ \
        --output_dir ${OUTPUT} \
        --epochs 300
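The output directory name mentions a batch size of 1024 while the command passes `--batch-size 128`. The arithmetic below is a small sketch of how the two numbers relate, assuming (as the directory name suggests) that `--batch-size` is the per-GPU batch size. The function names are hypothetical, the ImageNet-1k training set size of 1,281,167 images is the standard ILSVRC2012 figure, and dropping the incomplete last batch is an assumption about the data loader rather than something stated here.

```python
def effective_batch_size(per_gpu_batch, gpus_per_node, nodes=1):
    """Global batch size when every process handles `per_gpu_batch` images."""
    return per_gpu_batch * gpus_per_node * nodes


def iterations_per_epoch(num_images, global_batch, drop_last=True):
    """Optimizer steps per epoch; drop_last=True discards the partial batch."""
    full, remainder = divmod(num_images, global_batch)
    return full if drop_last or remainder == 0 else full + 1


if __name__ == "__main__":
    global_batch = effective_batch_size(per_gpu_batch=128, gpus_per_node=8)
    steps = iterations_per_epoch(1_281_167, global_batch)
    print(f"global batch size: {global_batch}")
    print(f"iterations per epoch: {steps}")
```

If you train with fewer GPUs, the same arithmetic shows how far the global batch size drifts from 1024, which is useful to know before reusing the learning rate from the command above.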

To test Conformer-S on ImageNet on a single GPU, run:

    CUDA_VISIBLE_DEVICES=0 python main.py --model Conformer_small_patch16 --eval --batch-size 64 \
        --input-size 224 \
        --data-set IMNET \
        --num_workers 4 \
        --data-path /data/user/Dataset/ImageNet_ILSVRC2012/ \
        --epochs 100 \
        --resume ../Conformer_small_patch16.pth

| Model | Parameters | MACs | Top-1 Acc | Link |
|---|---|---|---|---|
| Conformer-Ti | 23.5 M | 5.2 G | 81.3 % | baidu(code: hzhm) google |
| Conformer-S | 37.7 M | 10.6 G | 83.4 % | baidu(code: qvu8) google |
| Conformer-B | 83.3 M | 23.3 G | 84.1 % | baidu(code: b4z9) google |
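For readers less familiar with the metric, Top-1 accuracy is the percentage of validation images whose highest-scoring class equals the ground-truth label. The following is a minimal pure-Python sketch of that definition. The function `top1_accuracy` is a hypothetical helper written for illustration, not the evaluation code used by this repository, and the scores in the usage example are made up.

```python
def top1_accuracy(scores, labels):
    """Percentage of samples whose arg-max score index equals the label.

    scores: list of per-class score lists, one inner list per sample.
    labels: list of integer class indices, one per sample.
    """
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    if not labels:
        raise ValueError("need at least one sample")
    correct = 0
    for row, label in zip(scores, labels):
        # Index of the largest score; ties resolve to the lowest index.
        predicted = max(range(len(row)), key=row.__getitem__)
        correct += int(predicted == label)
    return 100.0 * correct / len(labels)


if __name__ == "__main__":
    # Made-up scores for four samples over two classes.
    demo_scores = [[0.1, 0.9], [0.8, 0.2], [0.3, 0.7], [0.6, 0.4]]
    demo_labels = [1, 0, 0, 0]
    print(f"top-1 accuracy: {top1_accuracy(demo_scores, demo_labels):.1f} %")
```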

    @article{peng2021conformer,
      title={Conformer: Local Features Coupling Global Representations for Visual Recognition},
      author={Zhiliang Peng and Wei Huang and Shanzhi Gu and Lingxi Xie and Yaowei Wang and Jianbin Jiao and Qixiang Ye},
      journal={arXiv preprint arXiv:2105.03889},
      year={2021},
    }