An implementation of the Manhattan LSTM (MaLSTM), a Siamese deep network that predicts the semantic similarity between two sentences.

The implementation is inspired by this paper by Mueller & Thyagarajan and this Medium article by Elior Cohen.

- Original research paper: Siamese Recurrent Architectures for Learning Sentence Similarity

- The dataset can be found in `./dataset` or here: First Quora Dataset Release: Question Pairs

- Pretrained GloVe vectors can be found in `./pretrained_embeddings` or here: GloVe: Global Vectors for Word Representation
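The "Manhattan" in the model name refers to the similarity function used in the paper: the two sentence encodings are compared with `exp(-L1 distance)`, which yields a score in (0, 1]. Below is a minimal pure-Python sketch of that function. The vectors `h_a` and `h_b` are made-up stand-ins for the final hidden states of the two LSTM branches, and the function name is illustrative rather than taken from this repository.

```python
import math

def manhattan_similarity(h_left, h_right):
    """Return exp(-L1 distance) between two equal-length sentence encodings."""
    if len(h_left) != len(h_right):
        raise ValueError("encodings must have the same length")
    l1_distance = sum(abs(a - b) for a, b in zip(h_left, h_right))
    return math.exp(-l1_distance)

# Hypothetical final hidden states of the two LSTM branches (assumed values)
h_a = [0.2, -0.5, 0.1]
h_b = [0.1, -0.4, 0.3]

print(manhattan_similarity(h_a, h_a))  # identical encodings give 1.0
print(manhattan_similarity(h_a, h_b))  # differing encodings give a value below 1
```

Because the output already lies between 0 and 1, it can be compared directly against a binary "duplicate / not duplicate" label.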

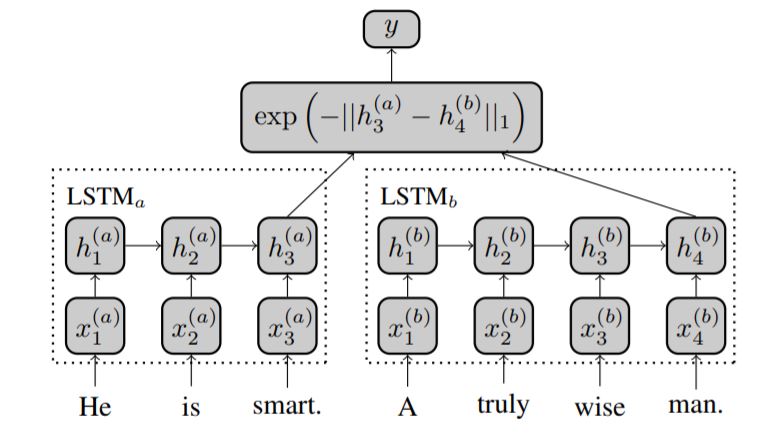

The detailed explanation of the model can be found in the aforementioned paper. The model described in the paper:

After a few changes and some fine-tuning, accuracy improved by about 3% compared to the aforementioned article. The major changes are as follows:

- The data was cleaned using regular expressions, together with the stopword list and a lemmatizer from the NLTK library.

- The embedding matrix was created using pretrained GloVe vectors.

- The Embedding layer was made trainable.

- The Adam optimizer was used with a gradient clipping norm of 1.5.

- Binary crossentropy loss was used.
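The first two changes in the list can be sketched roughly as follows. This is a simplified illustration, not the code in this repository: the stopword set is a tiny hard-coded stand-in for NLTK's list, lemmatization is omitted, and the helper names (`clean_text`, `parse_glove`, `build_embedding_matrix`) and the 3-dimensional vectors are hypothetical. It assumes the GloVe text format of one word per line followed by its space-separated vector components.

```python
import re

# Tiny stand-in stopword set (assumption); the project uses NLTK's list instead
STOPWORDS = {"the", "a", "an", "is", "are", "what", "how", "to", "of", "in"}

def clean_text(text):
    """Lowercase, replace non-alphanumeric characters, and drop stopwords."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return [tok for tok in text.split() if tok not in STOPWORDS]

def parse_glove(lines):
    """Parse GloVe-style lines of the form 'word v1 v2 ... vd'."""
    vectors = {}
    for line in lines:
        parts = line.rstrip().split(" ")
        vectors[parts[0]] = [float(x) for x in parts[1:]]
    return vectors

def build_embedding_matrix(word_index, vectors, dim):
    """Row i holds the vector for the word with index i; row 0 is padding.

    Words without a pretrained vector keep an all-zero row.
    """
    matrix = [[0.0] * dim for _ in range(len(word_index) + 1)]
    for word, idx in word_index.items():
        if word in vectors:
            matrix[idx] = list(vectors[word])
    return matrix

# Made-up example data
questions = ["What is the capital of France?", "How to learn Python?"]
tokens = [clean_text(q) for q in questions]

# Assign word indices starting at 1, reserving 0 for padding
word_index = {}
for sentence in tokens:
    for tok in sentence:
        word_index.setdefault(tok, len(word_index) + 1)

glove_lines = ["capital 0.1 0.2 0.3", "python 0.4 0.5 0.6", "france 0.7 0.8 0.9"]
embedding_matrix = build_embedding_matrix(word_index, parse_glove(glove_lines), dim=3)
print(tokens)
print(embedding_matrix)
```

In Keras, a matrix like this would typically initialize an `Embedding` layer with `trainable=True`, so the pretrained vectors serve as a starting point and are then updated during training.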

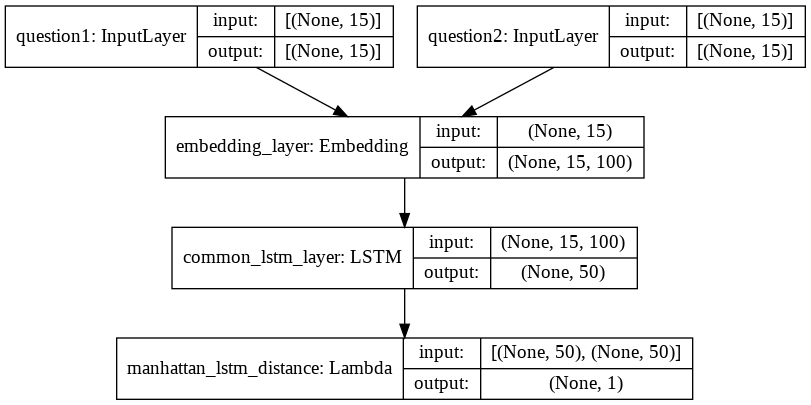

Visualization of model returned by ./src/create_model.py: