U-GAT-IT: Unsupervised Generative Attentional Networks with Adaptive Layer-Instance Normalization for Image-to-Image Translation

The results in the paper were produced with the TensorFlow code.

U-GAT-IT: Unsupervised Generative Attentional Networks with Adaptive Layer-Instance Normalization for Image-to-Image Translation

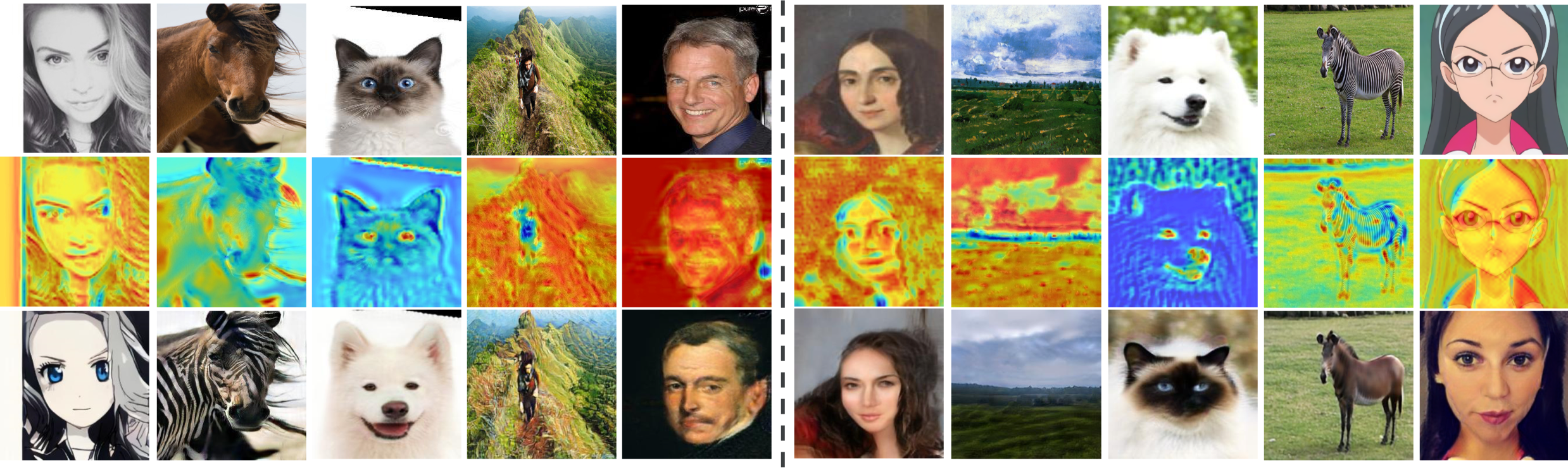

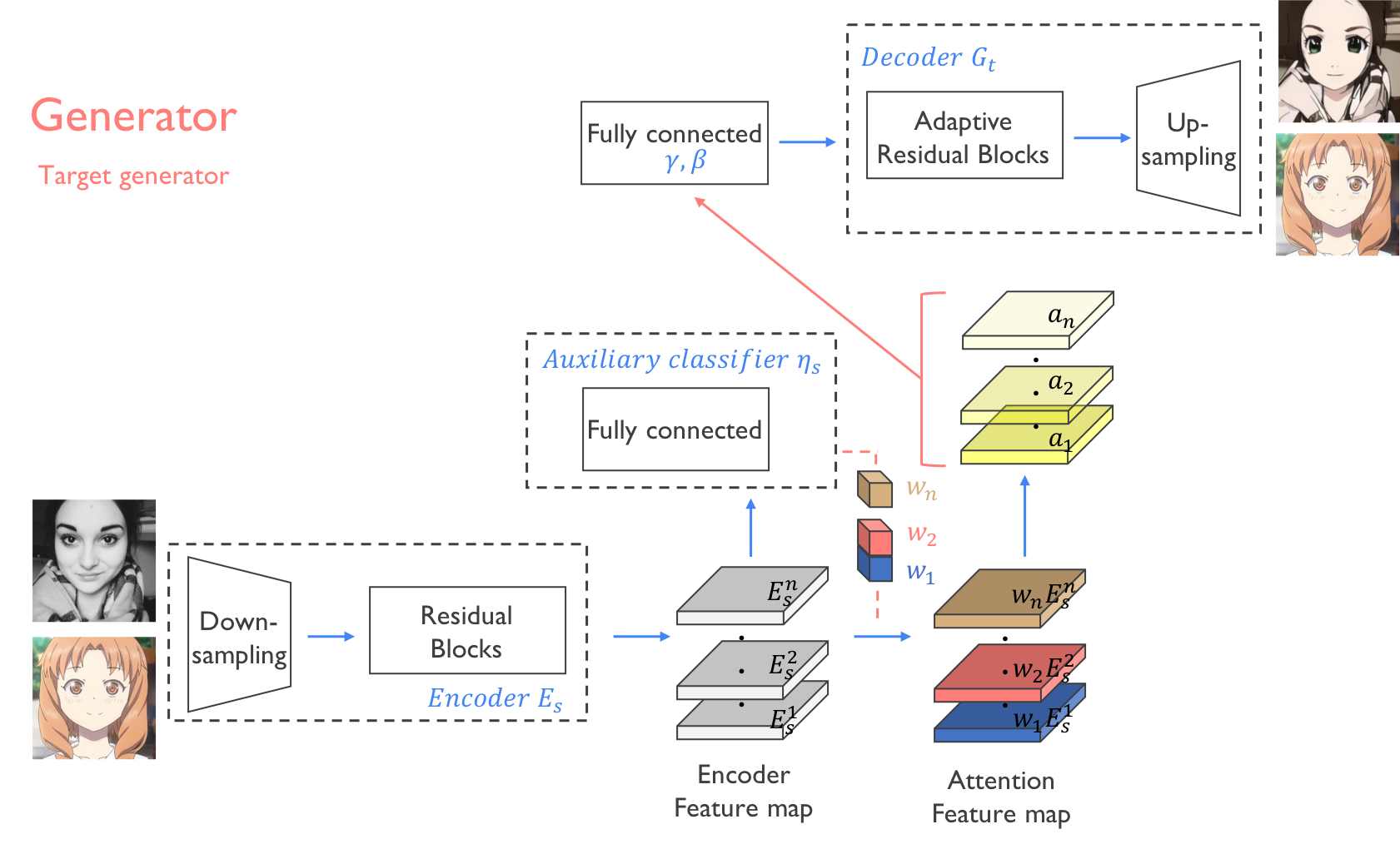

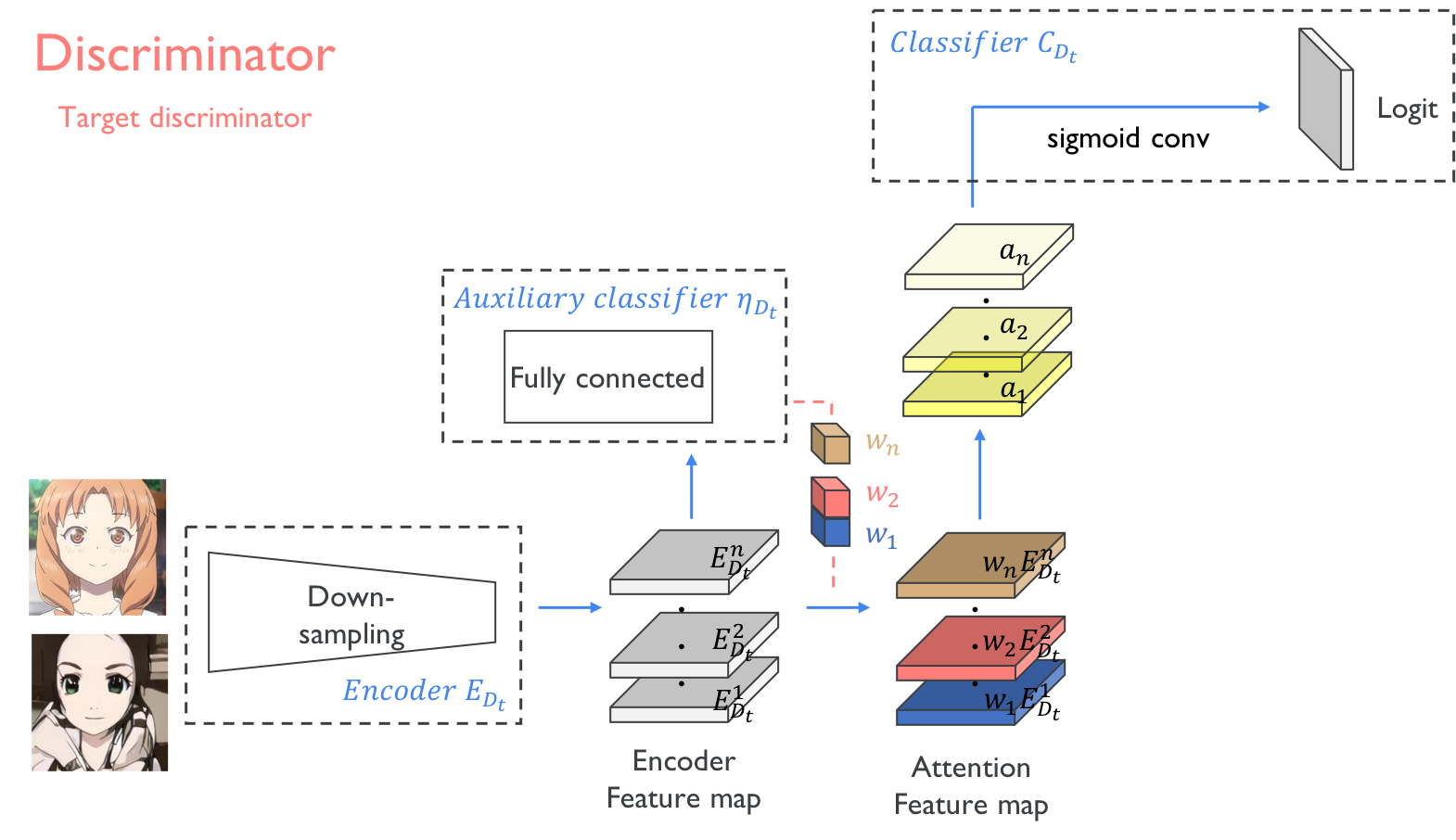

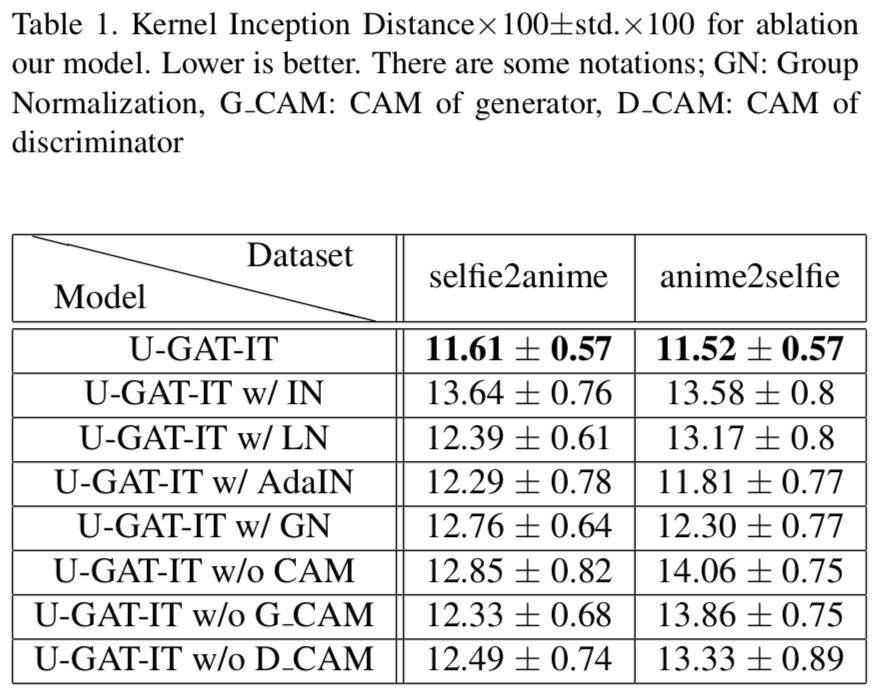

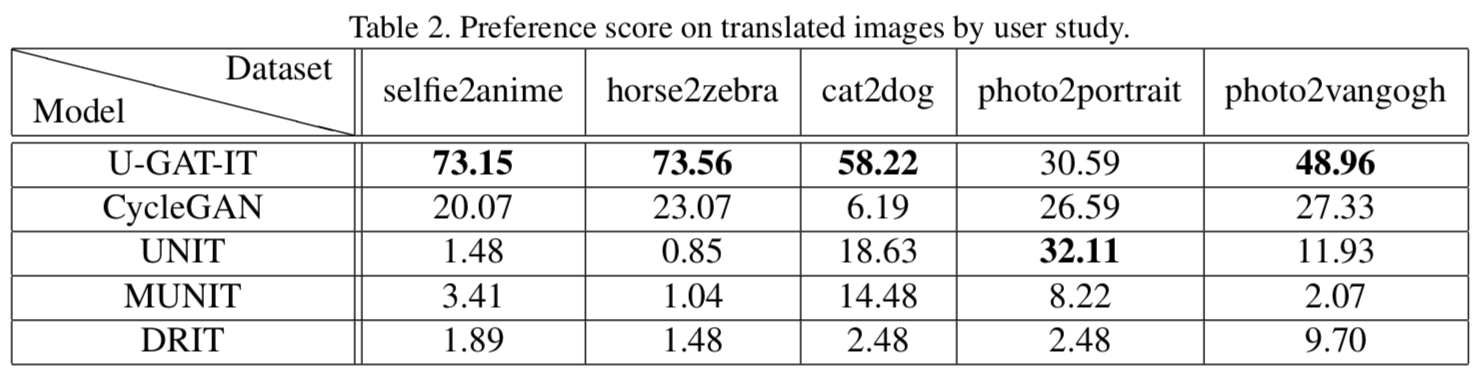

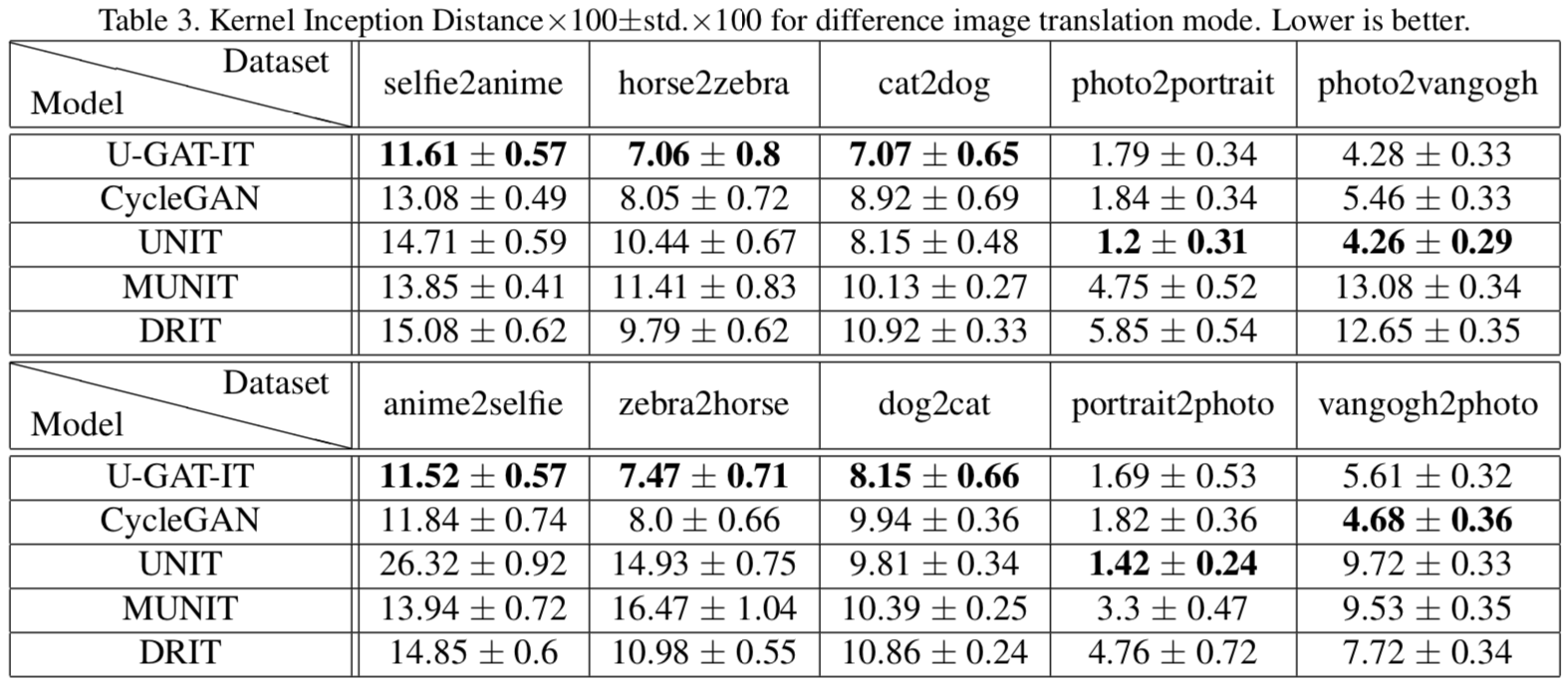

Junho Kim (NCSOFT), Minjae Kim (NCSOFT), Hyeonwoo Kang (NCSOFT), Kwanghee Lee (Boeing Korea)

Abstract: We propose a novel method for unsupervised image-to-image translation, which incorporates a new attention module and a new learnable normalization function in an end-to-end manner. The attention module guides our model to focus on more important regions distinguishing between source and target domains based on the attention map obtained by the auxiliary classifier. Unlike previous attention-based methods which cannot handle the geometric changes between domains, our model can translate both images requiring holistic changes and images requiring large shape changes. Moreover, our new AdaLIN (Adaptive Layer-Instance Normalization) function helps our attention-guided model to flexibly control the amount of change in shape and texture by learned parameters depending on datasets. Experimental results show the superiority of the proposed method compared to the existing state-of-the-art models with a fixed network architecture and hyper-parameters.
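The abstract describes AdaLIN as a learned blend of instance and layer normalization. A minimal sketch of that idea for a single sample, in plain Python, might look like the following. The function name `adalin`, the flat-list feature layout, the use of population variance, and the clipping of `rho` to [0, 1] are assumptions made for illustration. In the paper, `gamma` and `beta` are produced by the network rather than passed in by hand, so treat this as a reading aid and not as the repository's implementation.

```python
from statistics import fmean, pvariance


def adalin(features, gamma, beta, rho, eps=1e-5):
    """Blend instance-norm and layer-norm outputs for one sample.

    features: list of C channels, each a flat list of H*W activations.
    gamma, beta, rho: per-channel lists of length C (hypothetical inputs).
    """
    # Layer statistics: one mean/variance over all channels and positions.
    flat = [v for channel in features for v in channel]
    ln_mean, ln_var = fmean(flat), pvariance(flat)

    out = []
    for c, channel in enumerate(features):
        # Instance statistics: mean/variance of this channel only.
        in_mean, in_var = fmean(channel), pvariance(channel)
        # Keep the mixing weight inside [0, 1] (assumed clipping).
        r = min(max(rho[c], 0.0), 1.0)
        row = []
        for v in channel:
            a_in = (v - in_mean) / (in_var + eps) ** 0.5
            a_ln = (v - ln_mean) / (ln_var + eps) ** 0.5
            # rho -> 1 leans on instance norm, rho -> 0 on layer norm.
            row.append(gamma[c] * (r * a_in + (1.0 - r) * a_ln) + beta[c])
        out.append(row)
    return out
```

With `rho` near 1 each channel is normalized on its own statistics, and with `rho` near 0 all channels share one set of statistics, which is the knob the abstract refers to when it mentions controlling the amount of change in shape and texture.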

    dataset
    └── YOUR_DATASET_NAME
        ├── trainA
        │   ├── xxx.jpg (name, format doesn't matter)
        │   ├── yyy.png
        │   └── ...
        ├── trainB
        │   ├── zzz.jpg
        │   ├── www.png
        │   └── ...
        ├── testA
        │   ├── aaa.jpg
        │   ├── bbb.png
        │   └── ...
        └── testB
            ├── ccc.jpg
            ├── ddd.png
            └── ...

> python main.py --dataset selfie2anime

- If GPU memory is not sufficient, set `--light` to True.
  - The light model may not perform as well.
  - The paper version uses `--light` set to False.

> python main.py --dataset selfie2anime --phase test

If you find this code useful for your research, please cite our paper:

    @misc{kim2019ugatit,
        title={U-GAT-IT: Unsupervised Generative Attentional Networks with Adaptive Layer-Instance Normalization for Image-to-Image Translation},
        author={Junho Kim and Minjae Kim and Hyeonwoo Kang and Kwanghee Lee},
        year={2019},
        eprint={1907.10830},
        archivePrefix={arXiv},
        primaryClass={cs.CV}
    }

Junho Kim, Minjae Kim, Hyeonwoo Kang, Kwanghee Lee