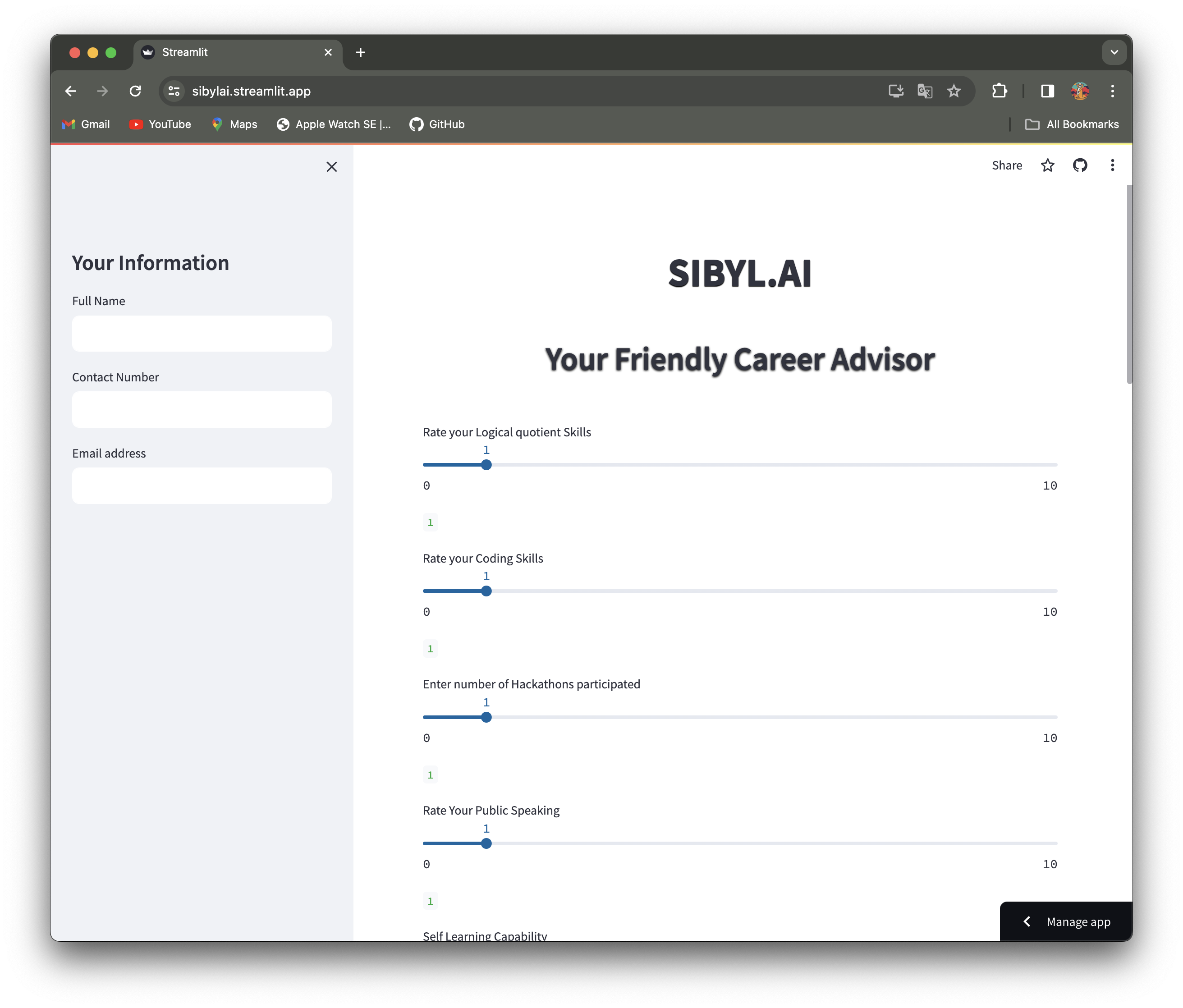

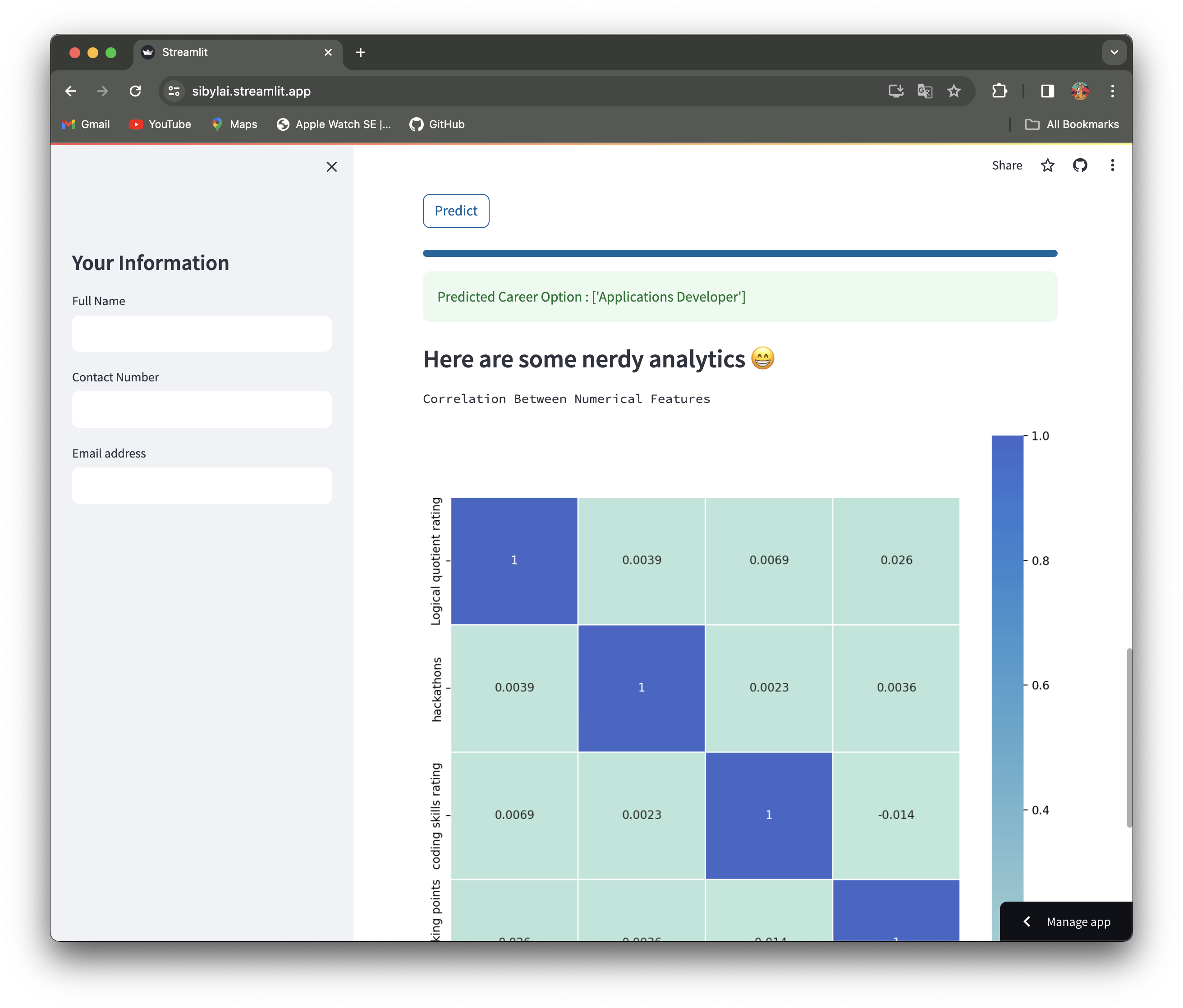

SIBYL is a machine learning ensemble model that predicts employee job roles to support career-path decisions. It was developed as part of Dell's Hack2Hire initiative and is deployed with Streamlit as an interactive web app.

    Employee-Career-Path-Navigator/
    │
    ├── pythonFunctions    # Model training, prediction, and GUI
    ├── data/              # Dataset used for training and testing
    ├── .streamlit/        # Streamlit app deployment file
    ├── requirements.txt   # Dependencies for the project
    └── README.md

To deploy SIBYL on Streamlit:

- Clone the repository: `git clone https://github.com/Ritabrata04/Employee-Career-Path-Navigator.git`
- Install dependencies: `pip install -r requirements.txt`
- Run the Streamlit app: `streamlit run app.py`

Meet the brilliant minds behind SIBYL:

[From Right to Left] Ritabrata, Yashika, Rishi, Ankika, Divyanshu

    import pandas as pd
    import numpy as np
    import matplotlib.pyplot as plt
    import seaborn as sns
    from sklearn.model_selection import train_test_split
    from sklearn.metrics import confusion_matrix, accuracy_score
    from sklearn import svm
    from sklearn.tree import DecisionTreeClassifier
    from sklearn.ensemble import RandomForestClassifier
    from xgboost import XGBClassifier

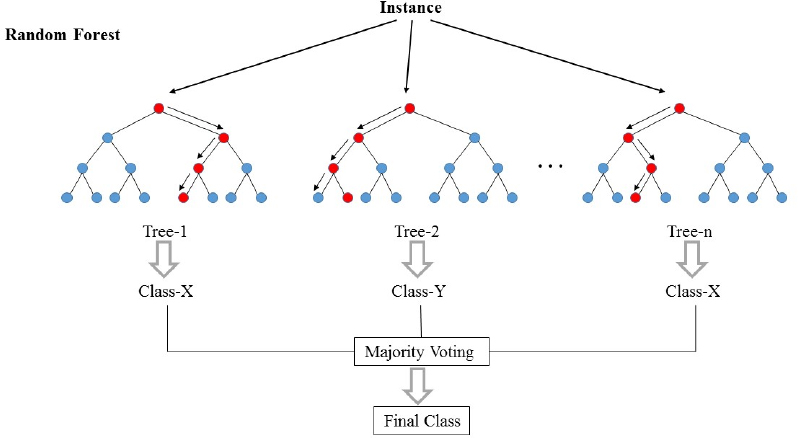

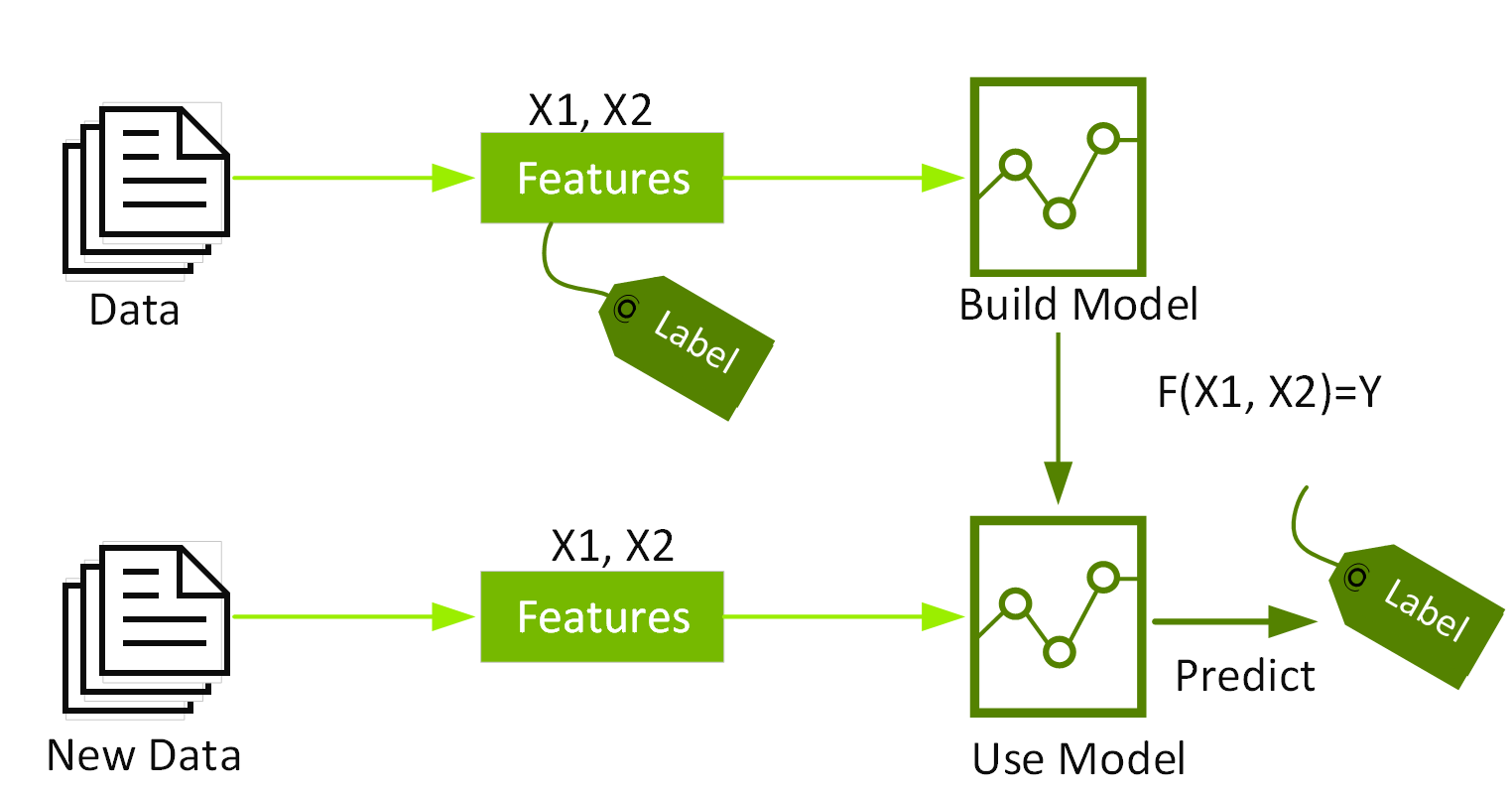

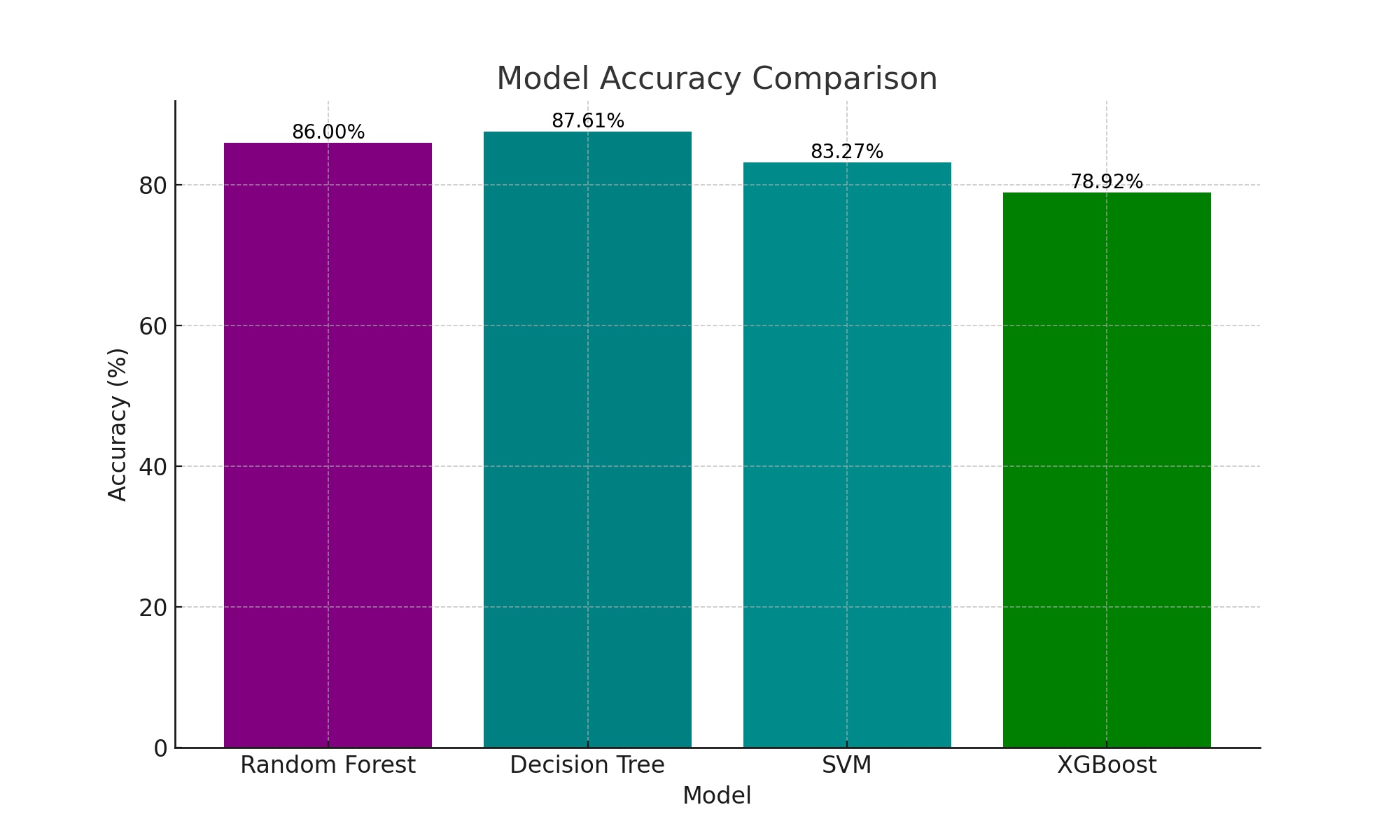

RF: Random Forest is an ensemble method that combines many decision trees to improve prediction accuracy and control overfitting. It was chosen because it handles large, high-dimensional datasets well. In our code, it makes job role prediction more reliable by aggregating the outputs of multiple trees.
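As a rough illustration of the Random Forest idea above, here is a minimal sketch using scikit-learn. The data is synthetic (`make_classification` stands in for the project's dataset), and the four classes, feature count, and hyperparameters are assumptions chosen for the example, not the values used in SIBYL.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the job-role data (hypothetical: 4 roles, 20 features).
X, y = make_classification(
    n_samples=600, n_features=20, n_informative=8,
    n_classes=4, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# 100 trees, each fit on a bootstrap sample; their outputs are aggregated.
rf = RandomForestClassifier(n_estimators=100, random_state=0)
rf.fit(X_train, y_train)

rf_predictions = rf.predict(X_test)
rf_accuracy = accuracy_score(y_test, rf_predictions)
print(f"trees: {len(rf.estimators_)}, test accuracy: {rf_accuracy:.3f}")
```

Fixing `random_state` keeps the run reproducible; on real data the number of trees would normally be tuned.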

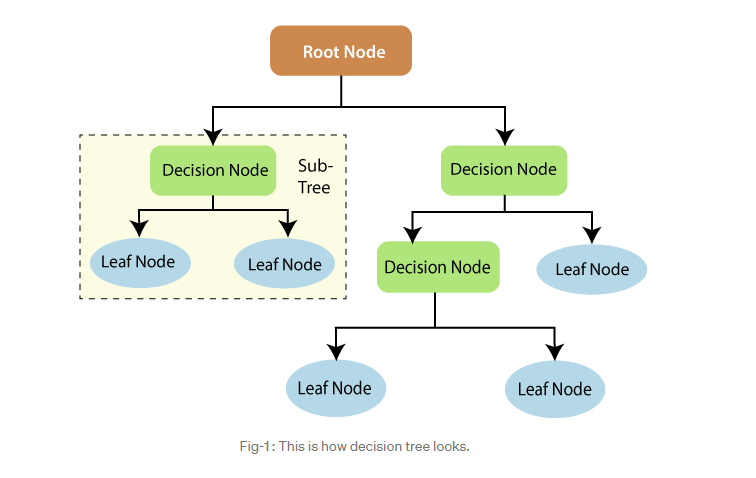

DT: A Decision Tree builds a tree-like model of decisions from the features of the dataset. It was chosen for classification tasks because it is simple and interpretable. In our use case, it categorizes job roles from the input features, making a decision at each node of the tree.
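The interpretability mentioned above can be seen by printing the learned rules. This is a small sketch on synthetic data; the feature names (`feature_0`, ...) and the depth limit are placeholders for illustration, not the project's actual columns or settings.

```python
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier, export_text

# Synthetic stand-in data (hypothetical: 3 roles, 5 features).
X, y = make_classification(
    n_samples=300, n_features=5, n_informative=3, n_redundant=0,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]

# A shallow tree keeps the printed rules short enough to read.
dt = DecisionTreeClassifier(max_depth=3, random_state=0)
dt.fit(X, y)

# Each line of the output is one node: a threshold test or a leaf's class.
rules = export_text(dt, feature_names=feature_names)
print(rules)
```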

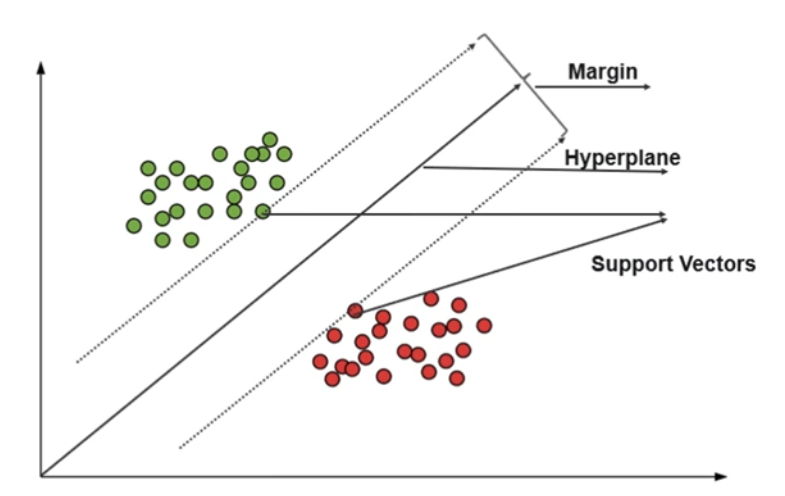

SVM: A Support Vector Machine is a classifier that finds a hyperplane in an N-dimensional space (where N is the number of features) that separates the data points by class. It is known for its versatility and its effectiveness in high-dimensional spaces. Here, it separates job roles along boundaries in the feature space.
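To make the hyperplane concrete, the sketch below fits a linear SVM on a synthetic two-class problem and recomputes the decision score by hand as `w · x + b`. The two-class setup and linear kernel are simplifications assumed for the example; scikit-learn's `SVC` handles more than two classes by training one-vs-one classifiers internally, and the kernel used in SIBYL is not stated here.

```python
import numpy as np
from sklearn import svm
from sklearn.datasets import make_classification

# Synthetic two-class data with N = 6 features (hypothetical stand-in).
X, y = make_classification(
    n_samples=200, n_features=6, n_informative=4, n_redundant=0,
    n_classes=2, random_state=0,
)

clf = svm.SVC(kernel="linear", C=1.0)
clf.fit(X, y)

# For a linear kernel the hyperplane is w . x + b = 0, with one weight per feature.
w = clf.coef_[0]
b = clf.intercept_[0]

# The sign of the score tells which side of the hyperplane a point falls on.
scores = X @ w + b
print("weights per feature:", w.shape[0])
print("matches decision_function:", np.allclose(scores, clf.decision_function(X)))
```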

XGB: XGBoost stands for eXtreme Gradient Boosting, an efficient and scalable implementation of the gradient boosting framework. It improves performance by adding trees sequentially, each one correcting errors made by the previous trees. It was chosen for its speed and strong performance on structured data.
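The "sequentially correcting errors" idea can be sketched without XGBoost itself. The example below is a simplified gradient boosting loop with squared-error loss on a toy regression problem, built from scikit-learn regression trees. It is an illustration of the principle only, not XGBoost's implementation and not the project's classifier; the data, learning rate, and number of rounds are assumptions.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Toy regression data: a noisy sine curve (hypothetical, for illustration only).
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(300, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.1, size=300)

learning_rate = 0.3
prediction = np.full_like(y, y.mean())  # start from a constant model
mse_history = [np.mean((y - prediction) ** 2)]

for _ in range(20):
    residuals = y - prediction  # errors left by the trees so far
    tree = DecisionTreeRegressor(max_depth=2, random_state=0)
    tree.fit(X, residuals)      # the next tree learns to predict those errors
    prediction += learning_rate * tree.predict(X)
    mse_history.append(np.mean((y - prediction) ** 2))

print(f"training MSE: {mse_history[0]:.4f} -> {mse_history[-1]:.4f}")
```

Each round shrinks the training error a little; in practice `XGBClassifier` wraps this kind of loop behind the usual `fit` and `predict` calls.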
