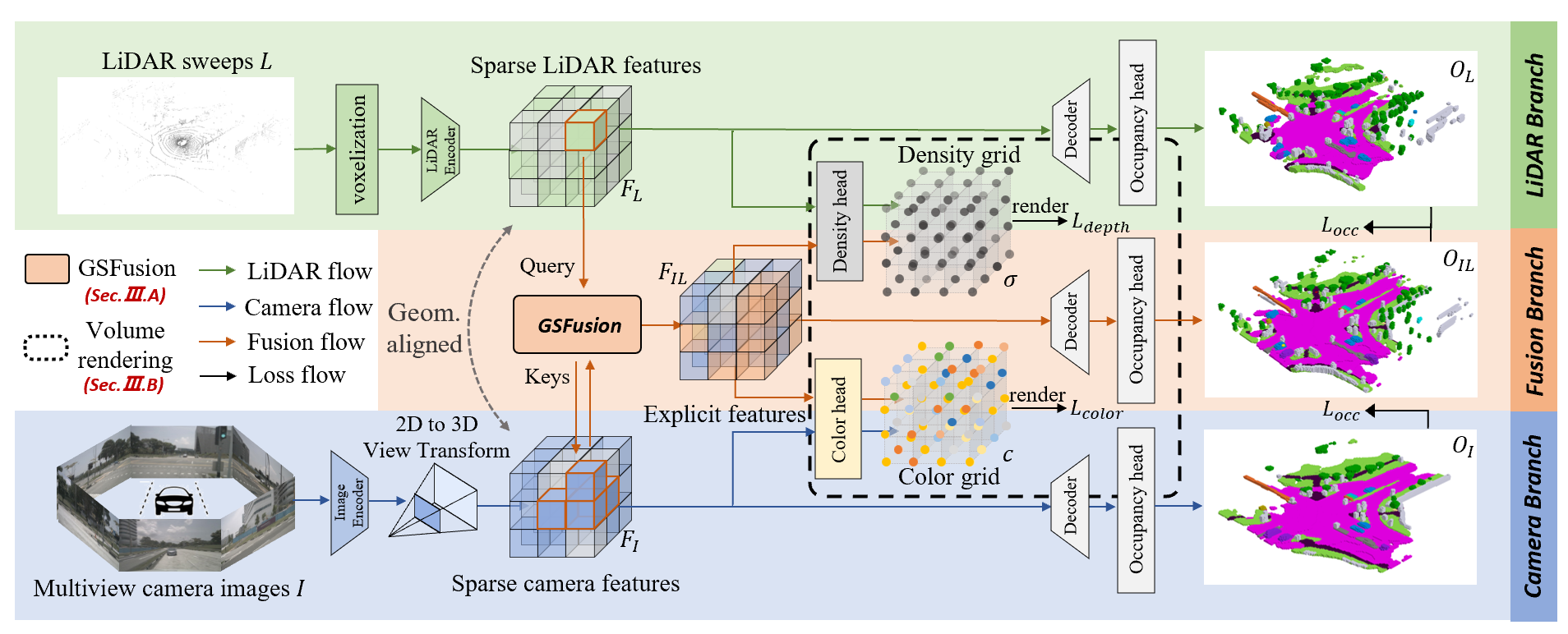

Co-Occ: Coupling Explicit Feature Fusion with Volume Rendering Regularization for Multi-Modal 3D Semantic Occupancy Prediction

Project Page | Paper | arXiv

3D semantic occupancy prediction is a pivotal task in autonomous driving. Recent approaches have made great advances in 3D semantic occupancy prediction from a single modality. However, multi-modal approaches struggle with modality heterogeneity, modality misalignment, and insufficient modality interaction when fusing data from different modalities, which may result in the loss of important geometric and semantic information. This letter presents a novel multi-modal (LiDAR-camera) 3D semantic occupancy prediction framework, dubbed Co-Occ, which couples explicit LiDAR-camera feature fusion with implicit volume rendering regularization. The key insight is that volume rendering in the feature space can bridge the gap between 3D LiDAR sweeps and 2D images while serving as a physical regularization that enhances the fused LiDAR-camera volumetric representation. Specifically, we first propose a Geometric- and Semantic-aware Fusion (GSFusion) module that explicitly enhances LiDAR features by incorporating neighboring camera features found through a K-nearest neighbors (KNN) search. Then, we employ volume rendering to project the fused features back onto the image planes and reconstruct color and depth maps. These maps are supervised by the input camera images and by depth estimates derived from LiDAR, respectively. Extensive experiments on the popular nuScenes and SemanticKITTI benchmarks verify the effectiveness of Co-Occ for 3D semantic occupancy prediction.
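The two ideas in the abstract, KNN-based feature fusion and volume rendering of color and depth, can be illustrated with a minimal pure-Python sketch. This is not the code in this repository: the function names `knn_fuse` and `render_ray`, the brute-force search, the additive fusion rule, and the uniform step size are all assumptions chosen for brevity, and the rendering follows the standard alpha-compositing formulation rather than any detail confirmed by the paper.

```python
import math


def knn_fuse(lidar_pts, lidar_feats, cam_pts, cam_feats, k=2):
    """Hypothetical fusion: add the mean feature of the k nearest
    camera points to each LiDAR feature (the additive rule is an assumption)."""
    fused = []
    for point, feat in zip(lidar_pts, lidar_feats):
        # Brute-force KNN by Euclidean distance; fine for a toy example.
        order = sorted(range(len(cam_pts)),
                       key=lambda j: math.dist(point, cam_pts[j]))
        nearest = order[:k]
        mean = [sum(cam_feats[j][d] for j in nearest) / len(nearest)
                for d in range(len(feat))]
        fused.append([a + b for a, b in zip(feat, mean)])
    return fused


def render_ray(sigmas, colors, depths, delta):
    """Standard alpha compositing along one ray with uniform step `delta`.

    sigmas: density at each sample; colors: per-sample value vectors;
    depths: distance of each sample from the camera.
    Returns (rendered color, rendered depth, per-sample weights).
    """
    transmittance = 1.0  # fraction of light that reaches the current sample
    weights = []
    for sigma in sigmas:
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this sample
        weights.append(transmittance * alpha)
        transmittance *= 1.0 - alpha
    channels = len(colors[0])
    color = [sum(w * c[d] for w, c in zip(weights, colors))
             for d in range(channels)]
    depth = sum(w * t for w, t in zip(weights, depths))
    return color, depth, weights


if __name__ == "__main__":
    fused = knn_fuse([(0, 0, 0)], [[1.0, 1.0]],
                     [(0, 0, 1), (0, 0, 2), (5, 5, 5)],
                     [[2.0, 0.0], [4.0, 0.0], [100.0, 100.0]], k=2)
    print(fused)
    color, depth, _ = render_ray([0.0, 1e3, 5.0],
                                 [[1, 0, 0], [0, 1, 0], [0, 0, 1]],
                                 [1.0, 2.0, 3.0], delta=1.0)
    print(color, depth)
```

In a training loop, the rendered color and depth would be compared against the camera image and the LiDAR-derived depth; the loss functions used for that comparison are not specified here.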

- [2024/05/21] Code released.

[1] Check installation for installation instructions. Our code is mainly based on mmdetection3d.

[2] Check data_preparation for preparing the nuScenes dataset.

[3] Check train_and_eval for training and evaluation.

[4] Check predict_and_visualize for prediction and visualization.

We provide weights pretrained on the nuScenes dataset with occupancy labels (resolution 200x200x16, voxel size 0.5 m) here; they were reproduced with the released codebase.

| Modality | Backbone | Model Weights |
|---|---|---|
| Camera-only | ResNet101 | nusc_cam_r101_896x1600.pth |
| LiDAR-only | - | nusc_lidar.pth |
| LiDAR-camera | ResNet101 | nusc_multi_r101_896x1600.pth |
| LiDAR-camera | ResNet50 | nusc_multi_r50_256x704.pth |
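For orientation, the 200x200x16 grid with 0.5 m voxels mentioned above covers 100 m x 100 m x 8 m. The sketch below works through that arithmetic and maps a point to a voxel index. The helper names and the grid origin of (-50, -50, -5) m are assumptions for illustration; check the dataset configuration for the actual point-cloud range.

```python
import math

GRID_SHAPE = (200, 200, 16)   # voxels along x, y, z (from the text above)
VOXEL_SIZE = 0.5              # metres per voxel (from the text above)
ORIGIN = (-50.0, -50.0, -5.0)  # ASSUMED lower corner of the grid, in metres


def grid_extent(shape, voxel_size):
    """Physical size of the grid along each axis, in metres."""
    return tuple(n * voxel_size for n in shape)


def voxel_index(point, origin=ORIGIN, voxel_size=VOXEL_SIZE, shape=GRID_SHAPE):
    """Map a 3D point to its voxel index, or None if it lies outside the grid."""
    idx = tuple(math.floor((p - o) / voxel_size) for p, o in zip(point, origin))
    inside = all(0 <= i < n for i, n in zip(idx, shape))
    return idx if inside else None


if __name__ == "__main__":
    print(grid_extent(GRID_SHAPE, VOXEL_SIZE))  # (100.0, 100.0, 8.0)
    print(voxel_index((0.0, 0.0, 0.0)))         # (100, 100, 10) under the assumed origin
    print(voxel_index((60.0, 0.0, 0.0)))        # None: outside the assumed range
```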

For the nuScenes-OpenOccupancy benchmark, we provide the pretrained weight (password: cooc) with this config file.

This project builds on the following open-source projects: OccFormer, SurroundOcc, OpenOccupancy, MonoScene, BEVDet, BEVFormer. We thank the authors for their excellent work.

If you find this project helpful, please consider giving this repo a star or citing the following paper:

@article{pan2024co,
  title={Co-Occ: Coupling Explicit Feature Fusion With Volume Rendering Regularization for Multi-Modal 3D Semantic Occupancy Prediction},
  author={Pan, Jingyi and Wang, Zipeng and Wang, Lin},
  journal={IEEE Robotics and Automation Letters},
  year={2024},
  publisher={IEEE}
}