Just a little fun I had during the summer implementing fast style transfer in TensorFlow. When I have the time, I'll look into implementing n-style transfer and universal style transfer.

Our implementation is based on Logan Engstrom's implementation, with the following modifications:

- Transpose convolutions were replaced with nearest-neighbor upsampling, based on Odena et al.'s Deconvolution and Checkerboard Artifacts.

- Zero-padding was replaced with reflection padding in convolutions, based on Dumoulin et al.'s A Learned Representation For Artistic Style.

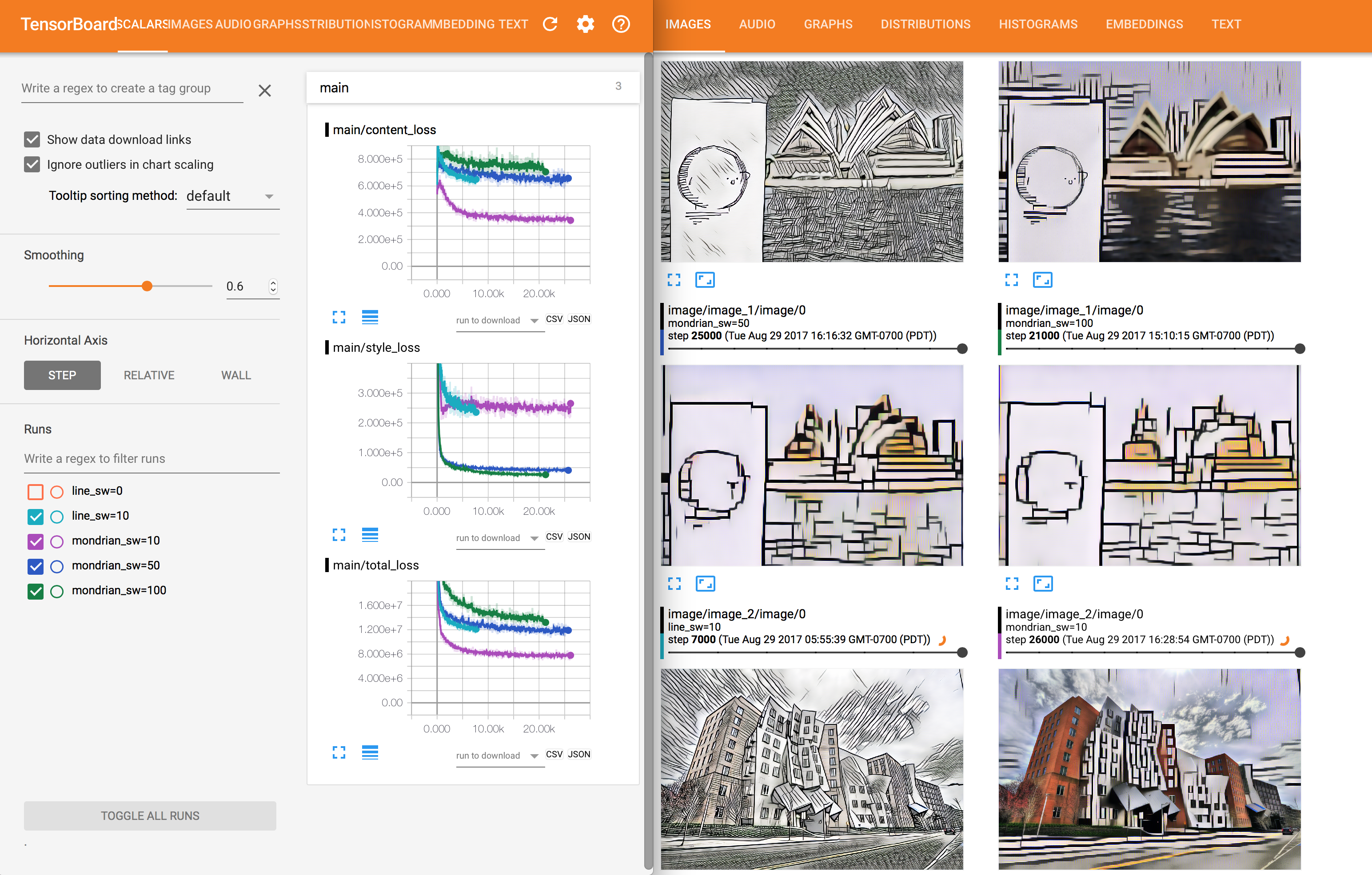

- Added TensorBoard visualizations.
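The two architectural changes above can be illustrated with a small NumPy sketch. This is not the repository's TensorFlow code: it upsamples by repeating pixels, reflection-pads, and then applies an ordinary convolution, so the output has twice the input resolution without using a transpose convolution. In TensorFlow 1.x the corresponding building blocks would be ops such as `tf.image.resize_nearest_neighbor` and `tf.pad(..., mode="REFLECT")`; the function names, the 3x3 kernel and the upsampling factor of 2 below are illustrative assumptions.

```python
import numpy as np

def upsample_nearest(x, factor=2):
    """Nearest-neighbor upsampling of an (H, W, C) array by repeating each pixel."""
    return x.repeat(factor, axis=0).repeat(factor, axis=1)

def reflect_pad(x, pad):
    """Reflection padding on the two spatial axes of an (H, W, C) array."""
    return np.pad(x, ((pad, pad), (pad, pad), (0, 0)), mode="reflect")

def conv2d_valid(x, kernel):
    """Naive 'valid' 2-D cross-correlation, as deep-learning libraries compute it.

    x: (H, W, C_in), kernel: (k, k, C_in, C_out) -> (H - k + 1, W - k + 1, C_out)
    """
    k = kernel.shape[0]
    h_out = x.shape[0] - k + 1
    w_out = x.shape[1] - k + 1
    out = np.zeros((h_out, w_out, kernel.shape[3]), dtype=x.dtype)
    for i in range(h_out):
        for j in range(w_out):
            patch = x[i:i + k, j:j + k, :]  # (k, k, C_in) window
            out[i, j] = np.tensordot(patch, kernel, axes=([0, 1, 2], [0, 1, 2]))
    return out

def resize_conv(x, kernel, factor=2):
    """Upsample, reflect-pad, then convolve: a stand-in for a strided transpose conv."""
    k = kernel.shape[0]
    return conv2d_valid(reflect_pad(upsample_nearest(x, factor), k // 2), kernel)

# Usage: a 4x4 RGB input becomes an 8x8 map with 8 channels.
x = np.random.rand(4, 4, 3).astype(np.float32)
kernel = np.random.rand(3, 3, 3, 8).astype(np.float32)
print(resize_conv(x, kernel).shape)  # (8, 8, 8)
```

Because every output pixel is produced by the same convolution over an evenly upsampled grid, there is no uneven kernel overlap of the kind that causes checkerboard patterns, and reflection padding avoids the dark borders that zero-padding can introduce.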

You'll need:

- python==2.7
- ffmpeg==3.2.2

You'll also need the following Python libraries:

- numpy==1.13.0
- scipy==0.18.1
- tensorflow==1.1.0
- tensorbayes==0.2.0

Get training data

Get data and VGG weights for training:

    sh get_data.sh

Train model

Example of training with target style data/styles/hatch.jpg:

    python main.py train \
        --content-weight 15 \
        --style-weight 100 \
        --target-style data/styles/hatch.jpg \
        --validation-dir data/validation
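The `--content-weight` and `--style-weight` flags presumably scale the two terms of the perceptual training loss. Below is a minimal NumPy sketch, assuming the usual formulation from Johnson et al. that Engstrom's implementation builds on: a content loss on feature maps and a style loss on Gram matrices. The feature maps are placeholder arrays rather than real VGG activations, and the normalization constants and function names are assumptions that may differ from what main.py does.

```python
import numpy as np

def gram_matrix(features):
    """Gram matrix of an (H, W, C) feature map, normalized by its number of elements."""
    h, w, c = features.shape
    flat = features.reshape(h * w, c)  # one row per spatial position
    return flat.T.dot(flat) / float(h * w * c)

def content_loss(gen_feats, content_feats):
    """Mean squared difference between generated-image and content-image features."""
    return np.mean((gen_feats - content_feats) ** 2)

def style_loss(gen_layers, style_layers):
    """Sum over layers of the squared distance between Gram matrices."""
    return sum(np.sum((gram_matrix(g) - gram_matrix(s)) ** 2)
               for g, s in zip(gen_layers, style_layers))

def total_loss(gen_content, content, gen_layers, style_layers,
               content_weight=15.0, style_weight=100.0):
    """Weighted sum; the defaults mirror the example training command."""
    return (content_weight * content_loss(gen_content, content)
            + style_weight * style_loss(gen_layers, style_layers))

# Usage with random stand-ins for feature maps.
rng = np.random.RandomState(0)
feats = [rng.rand(8, 8, 16) for _ in range(3)]
print(total_loss(feats[0], feats[1], [feats[0]], [feats[2]]))
```

Raising the style weight relative to the content weight pushes the network toward matching the style's texture statistics at the expense of preserving the content image's structure.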

Models are automatically saved to checkpoints/MODEL_NAME, where MODEL_NAME is STYLE_sw=STYLE_WEIGHT (e.g. checkpoints/hatch_sw=100). A validation directory is required to monitor the visual performance of the fast-style-transfer network. The default arguments work fairly well, so apart from the validation directory, the only thing you really need to provide is the target style.
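The naming convention above can be expressed as a small helper. This is a hypothetical sketch, not code from main.py: it assumes STYLE is the style image's file name without its extension and that the style weight is formatted as given (an integer 100 gives `sw=100`, whereas a float 100.0 would give `sw=100.0`).

```python
import os

def model_name(target_style, style_weight):
    """Build a STYLE_sw=STYLE_WEIGHT name from a style image path (hypothetical helper)."""
    style = os.path.splitext(os.path.basename(target_style))[0]
    return "{}_sw={}".format(style, style_weight)

def checkpoint_dir(target_style, style_weight, root="checkpoints"):
    """Directory where a model trained with these settings would be saved."""
    return os.path.join(root, model_name(target_style, style_weight))

print(checkpoint_dir("data/styles/hatch.jpg", 100))  # checkpoints/hatch_sw=100 (POSIX paths)
```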

Test model

Once trained, the model can be tested on, for example, the images in the data/validation directory:

    python main.py test \
        --model-name MODEL_NAME \
        --ckpt CKPT \
        --test-dir data/validation

If --ckpt is not provided, the latest checkpoint from checkpoints/MODEL_NAME is loaded.

Or if you have a specific jpg/png/mp4 file:

    python main.py test \
        --model-name MODEL_NAME \
        --ckpt CKPT \
        --test-file path/to/test/file
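The test-mode behavior described above (falling back to the latest checkpoint when `--ckpt` is omitted, and accepting jpg/png/mp4 inputs) can be sketched without TensorFlow. TensorFlow 1.x itself offers `tf.train.latest_checkpoint(checkpoint_dir)`, which reads the `checkpoint` state file, and the repository may well rely on it. The hypothetical `resolve_checkpoint` below instead scans for `<prefix>-<step>.index` files, the naming `tf.train.Saver` uses for V2 checkpoints saved with a global step; the prefix, the directory layout and both function names are assumptions. How main.py actually processes mp4 files is not shown here, although ffmpeg being a dependency suggests it is used for video.

```python
import os
import re

IMAGE_EXTS = (".jpg", ".png")
VIDEO_EXTS = (".mp4",)

def resolve_checkpoint(model_name, ckpt=None, root="checkpoints"):
    """Return ckpt if given, else the newest '<prefix>-<step>' checkpoint in root/model_name."""
    if ckpt is not None:
        return ckpt
    model_dir = os.path.join(root, model_name)
    best_step, best_prefix = -1, None
    for fname in os.listdir(model_dir):
        # V2 checkpoints saved with a global step have an index file '<prefix>-<step>.index'.
        match = re.match(r"^(.*-(\d+))\.index$", fname)
        if match and int(match.group(2)) > best_step:
            best_step = int(match.group(2))
            best_prefix = os.path.join(model_dir, match.group(1))
    if best_prefix is None:
        raise IOError("no checkpoints found in " + model_dir)
    return best_prefix

def input_kind(path):
    """Classify a --test-file path as 'image' or 'video' from its extension."""
    ext = os.path.splitext(path)[1].lower()
    if ext in IMAGE_EXTS:
        return "image"
    if ext in VIDEO_EXTS:
        return "video"
    raise ValueError("unsupported file type: " + path)
```

Comparing the numeric step rather than sorting file names as strings matters here: as strings, `model-300` would sort after `model-2000`.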

Here is style transfer applied to Linkin Park's Numb :D

During training, a TensorBoard summary is saved in log, where you can follow how the model is doing.