$ mkdir -p .github/workflows. This allows you to create a directory hierarchy with a single command.$ mkdir -p path/to/directory. -p stands for 'parent'.

- Generate new migrations file:

$ migrate create -ext sql -dir db/migration/ -seq add_users

-

run sqlc init to generate

sqlc.yamlfile -

TechSchool Example implementations:

https://github.com/techschool/simplebank/blob/master/db/query/transfer.sql

https://github.com/techschool/simplebank/blob/master/db/query/entry.sql

https://github.com/techschool/simplebank/blob/master/db/query/account.sql

-lib/pq is required for postgres unit testing process

Offset value is the number of rows skipped. It is the starting point for the cursor and SQL output

-

Locking Transactions:

# SELECT * FROM accounts WHERE id = 1 FOR UPDATE; -

After running this query the terminal will lock and wait for another SQL command on another terminal to run an update query e.g. (

# UPDATE accounts SET balance = 500 WHERE id = 1;). After the update query has finished running, the# COMMITcommand must run to unblock the initial terminal.

SELECT ... FOR UPDATE is used in PostgreSQL to lock rows returned by a SELECT query, allowing you to perform operations on those rows within a transaction while preventing other transactions from modifying or acquiring locks on the same rows until your transaction completes. There are several scenarios where you might need to use SELECT ... FOR UPDATE:

-

Implementing Transaction Isolation: When multiple transactions need to access and modify the same rows concurrently, it's essential to ensure transaction isolation to maintain data consistency and integrity. By using

SELECT ... FOR UPDATE, you can lock the selected rows, ensuring that other transactions cannot modify them until your transaction completes. -

Preventing Race Conditions: In scenarios where multiple transactions might attempt to modify the same data simultaneously, using

SELECT ... FOR UPDATEhelps prevent race conditions. By acquiring locks on the rows you intend to modify, you ensure that your transaction has exclusive access to those rows until it completes, avoiding conflicts with other transactions. -

Implementing Pessimistic Locking: Pessimistic locking is a concurrency control technique where locks are acquired on resources (rows, tables, etc.) before performing operations on them.

SELECT ... FOR UPDATEis a way to implement pessimistic locking in PostgreSQL by explicitly locking rows for update within a transaction, ensuring that other transactions cannot modify them concurrently. -

Implementing Business Logic: In some cases, your application's business logic might require exclusive access to certain rows during a transaction. For example, if you're processing orders and need to ensure that no other transactions modify the same order concurrently, you can use

SELECT ... FOR UPDATEto lock the order rows while processing them. -

Avoiding Concurrent Modifications: When you need to perform multiple operations on related rows within a transaction and want to ensure that those operations are atomic and consistent,

SELECT ... FOR UPDATEcan help avoid concurrent modifications by other transactions.

Overall, SELECT ... FOR UPDATE is a useful feature in PostgreSQL for managing concurrency and ensuring data consistency within transactions, especially in scenarios where multiple transactions might access or modify the same data concurrently.

- How

FOR NO KEY UPDATE;can prevent deadlock in postgres

Using FOR NO KEY UPDATE in PostgreSQL can prevent deadlocks by instructing the database not to acquire row-level locks when executing a SELECT ... FOR UPDATE query. Deadlocks often occur when multiple transactions are trying to acquire locks on rows in different orders, resulting in a circular dependency that neither transaction can break. By avoiding row-level locks with FOR NO KEY UPDATE, you reduce the likelihood of deadlocks because transactions won't be competing for the same locks.

Here's how it works:

-

Locking Mechanisms: In PostgreSQL, when you execute a

SELECT ... FOR UPDATEstatement, it locks the selected rows until the end of the transaction. This prevents other transactions from modifying or locking those rows until the first transaction releases its lock. -

Deadlocks: Deadlocks occur when two transactions each hold locks that the other transaction needs to proceed. For example, Transaction A holds a lock on Row 1 and is trying to acquire a lock on Row 2, while Transaction B holds a lock on Row 2 and is trying to acquire a lock on Row 1. This creates a circular dependency, and neither transaction can proceed, resulting in a deadlock.

-

Preventing Deadlocks with

FOR NO KEY UPDATE: By usingFOR NO KEY UPDATEin yourSELECTstatement, you tell PostgreSQL not to acquire row-level locks. This means that other transactions can still acquire locks on the same rows concurrently without waiting for the first transaction to release its locks. As a result, the likelihood of deadlocks decreases because transactions are less likely to be competing for the same locks. -

Trade-offs: While using

FOR NO KEY UPDATEcan help prevent deadlocks, it also means that you lose the ability to enforce exclusive access to the selected rows. If maintaining exclusive access is crucial for your application, you might need to find alternative strategies for deadlock prevention, such as careful transaction ordering or reducing the scope of transactions.

In summary, FOR NO KEY UPDATE can be a useful tool for preventing deadlocks in PostgreSQL by reducing the likelihood of lock contention between transactions. However, it's essential to understand the trade-offs and implications for your specific application requirements.

- Example Query

-- All checked filters --

SELECT * FROM users WHERE ("full_name" = 'Ryan Finlayson') ORDER BY "full_name" DESC LIMIT 300 OFFSET 0;

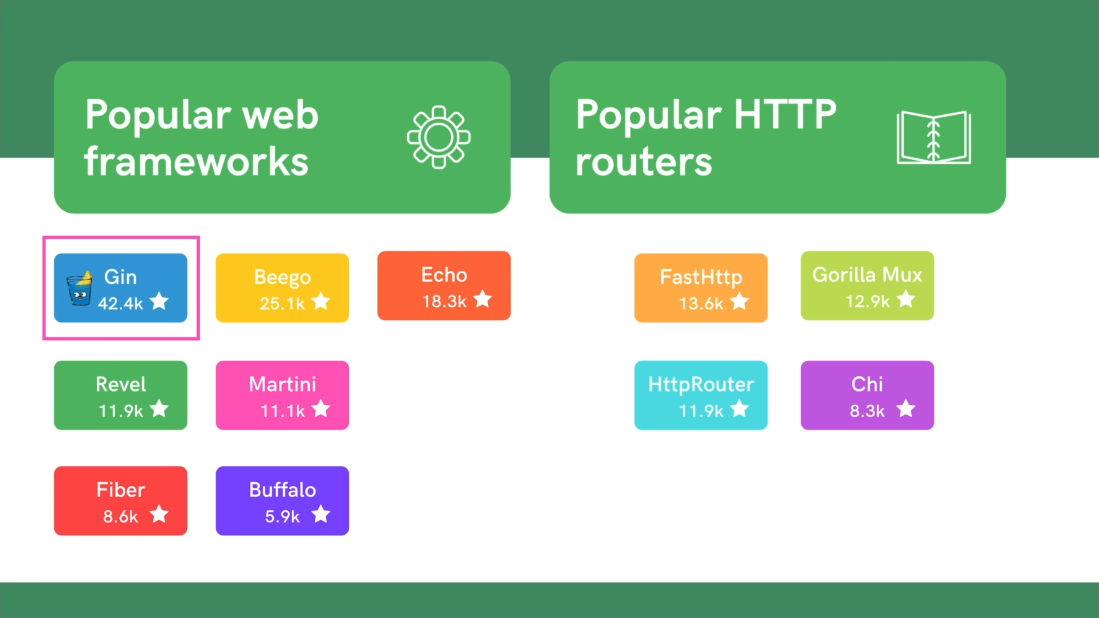

- Go Gin: The most popular Go Web Framework is Gin

-

Access Tokens: Access tokens are used to authenticate API endpoint routes and are provided once the user successfully logs into their account with a valid username and password. These access tokens are stateless in nature, and therefore they are not stored by the database. They should not be used for long sessions due to their stateless design. There is no way to revoke them if they get leaked. Recommended lifetime: 10-15 mins.

-

Refresh Tokens: Refresh tokens maintain a stateful session on the server.Client can use a refresh token with a long valid duration to request a new access token when the access token expires. The refresh token can be as simple as a random string or we can use PASETO. The refresh token is stored in a sessions table in the database. With an additional boolean field is_blocked to block the refresh token if it gets compromised or leaked. With the ability to revoke this refresh token its life time can be much longer than its access token counterpart. Recommended lifetime: 1-7 days.

Pre-requisite:

-

Have golang migrate installed ($ brew install golang-migrate)

-

Have sqlc installed ($ brew install sqlc)

-

Have mockgen installed ($ go install github.com/golang/mock/mockgen@v1.6.0)

- Add export PATH=$PATH:$(go env GOPATH)/bin and export PATH=$PATH:$(go env GOBIN) to ~/.zshrc

-- Begin --

- Generate an up and a down migration file for the new table

- $ migrate create -ext sql -dir db/migration -seq add_sessions

- Add SQL code to create a new table to the newly created up migration file (000003_add_sessions.up.sql)

CREATE TABLE "sessions" (

"id" uuid PRIMARY KEY,

"username" varchar NOT NULL,

"refresh_token" varchar NOT NULL,

"user_agent" varchar NOT NULL,

"client_ip" varchar UNIQUE NOT NULL,

"is_blocked" boolean NOT NULL DEFAULT false,

"expires_at" timestamptz NOT NULL,

"created_at" timestamptz NOT NULL DEFAULT (now())

);

ALTER TABLE "sessions" ADD FOREIGN KEY ("username") REFERENCES "users" ("username");

[db/migration/000003_add_sessions.up.sql]

- Add code for the migration down file

DROP TABLE IF EXISTS "sessions";

[db/migration/000003_add_sessions.down.sql]

-

Execute the migration up file

-

$ migrate -path db/migration/ -database "postgresql://root:root@localhost:5432/simple_bank?sslmode=disable" -verbose up

- Create a sql script file that sqlc will use to generate the CRUD operations that you need.

-

Here is an example for Inserting a new session (CREATE) and for Getting session information by its id (READ)

-

Ensure that the comments are named according to what you wish the name of the functions to be called

-- name: CreateSession :one

INSERT INTO sessions (

id,

username,

refresh_token,

user_agent,

client_ip,

is_blocked,

expires_at

) VALUES (

$1, $2, $3, $4, $5, $6, $7

) RETURNING *;

-- name: GetSession :one

SELECT * FROM sessions

WHERE id = $1 LIMIT 1;

[db/query/sessions.sql]

-

Execute the sql script file so that sqlc will generate the golang code for these two methods

-

$ sqlc generate

-

The db/sqlc/session.sql.go file will now have the go code required for the CRUD operations that you prototyped in the db/query/sessions.sql file.

-

Update mock interfaces if need be:

- $ mockgen -package mockdb -destination db/mock/store.go RyanFin/GoSimpleBank/db/sqlc Store

Generate sql schema from db.dbml file

Install dbml2gen: $ npm install -g @dbml/cli

Get the doc/db.dbml file from dbdiagram.io

Run: $ dbml2sql --postgres -o doc/schema.sql doc/db.dbml

Install VS Code extension: vscode-dbml

Protobuf, which is short for “Protocol Buffers,” is an efficient, language-agnostic data serialization mechanism.

-

Install Go plugins:

Install the protocol compiler plugins for Go using the following commands:

$ go install google.golang.org/protobuf/cmd/protoc-gen-go@v1.28

$ go install google.golang.org/grpc/cmd/protoc-gen-go-grpc@v1.2

Install VS Code extension: vscode-proto3

-

Ensure service_simple_bank.proto functions can access other proto files:

- Backend Masterclass Episode 42. Timestamp - 8:19

- Go to VSCode settings and search for

vscode-proto3 configurationin the settings search bar -> open in settings.json. This will open up / create a new .vscode/settings.json file - Go to Extensions and search for

vscode-proto3and click it then scroll down to this piece of code for settings.json:

{ "protoc": { "path": "/path/to/protoc", "compile_on_save": false, "options": [ "--proto_path=protos/v3", "--proto_path=protos/v2", "--proto_path=${workspaceRoot}/proto", "--proto_path=${env.GOPATH}/src", "--java_out=gen/java" ] }

}

Modify it to this in settings.json:

"protoc": {

"options": [

"--proto_path=proto",

]

}

```

WHEN USING PROTOBUF ENSURE THAT THE PACKAGE NAME IS IDENTICAL IN EVERY FILE!

After generating the gRPC code, make sure that you run $ go mod tidy will get rid of the red lines with service_simple_bank_grpc.pb.go

EVANS gRPC tool

- install:

- $ brew tap ktr0731/evans

- $ brew install evans

Connect to the running gRPC server with this command:

- $ evans --host localhost --port 9090 -r repl

Running > show service in the Evans shell initially failed to display my RPCs 'CreateUser' and 'LoginUser' in the shell window. The solution to this issue was to run $ show package to display the packages that have been loaded by Evans, you should see pb which contains the RPCs. You then set the pb package by running $ package pb. This selection fixes the issue, and you can now run $ show service to display all of my RPCs.

You then need to set the SimpleBank service with $ service SimpleBank.

TLDR: $ package pb -> $ service SimpleBank

- gRPC Gateway setup

- Necessary GoogleAPIs files

- annotations.proto

- field_behavior.proto

- http.proto

- httpbody.proto

I had an issue loading the session data after attempting to login, which would result in a login failed attempt. The solution to this is to refresh all the table data by running

make migrateupandmake migratedown. This resolved the issue.