Author: Mitch Fairweather

Research Paper that was followed: https://arxiv.org/pdf/1812.07606.pdf

import os, math
from os import makedirs
from os.path import expanduser, exists, join
import argparse
import warnings
from queue import Queue
from threading import Thread

import numpy as np
from numpy import random
import pandas as pd
import matplotlib.pyplot as plt
import matplotlib.image as mpimg
import cv2
import PIL
from PIL import Image
from tqdm import tqdm

import sklearn
from sklearn import metrics
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split

import keras
from keras import optimizers
from keras.applications import inception_v3
from keras.applications.inception_v3 import InceptionV3
from keras.applications.vgg16 import VGG16, preprocess_input
from keras.callbacks import ModelCheckpoint, EarlyStopping
from keras.layers import Input, Dense, Conv2D, MaxPool2D, Flatten, GlobalAveragePooling2D
from keras.metrics import categorical_crossentropy
from keras.models import Model, Sequential
from keras.optimizers import Adam
from keras.preprocessing import image
from keras.preprocessing.image import ImageDataGenerator, img_to_array, load_img

warnings.filterwarnings('ignore')

The data I use for this analysis comes from the Microsoft Malware Classification Challenge hosted on Kaggle in 2015. The competition data can be found here: https://www.kaggle.com/c/malware-classification/data

Unzipping the data leaves you with about 500 GB of byte and assembly files. I only use the byte files, of which there are about 10,000. The first 116 bytes of a single byte file, before any transformation, are shown below.

file = open(r"C:\Users\fairwemr\ISA 480\FinalProject\01azqd4InC7m9JpocGv5.bytes", "rb")

byte = file.read(116)

print(byte)

file.close()

b'00401000 E8 0B 00 00 00 E9 16 00 00 00 90 90 90 90 90 90\r\n00401010 B9 25 2B 56 00 FF 25 80 23 41 00 90 90 90 90 90\r\n'
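The printed sample shows that a .bytes file is a text hex dump: each line starts with an address, followed by two-character hex values. Reading the file in binary mode therefore yields the character codes of the dump text itself. As a hedged aside, the sketch below parses one such line into the byte values it describes. `parse_hex_line` is a hypothetical helper that is not part of the original script, and skipping tokens such as `??` is an assumption about how unknown bytes might be marked.

```python
def parse_hex_line(line):
    """Return the byte values encoded on one line of a hex dump.

    Assumes the first token is an address and the rest are two-digit hex
    values; tokens that are not valid hex (for example '??') are skipped.
    """
    values = []
    for token in line.split()[1:]:  # drop the leading address
        try:
            values.append(int(token, 16))
        except ValueError:
            continue  # assumption: non-hex placeholders carry no byte value
    return values


sample = "00401000 E8 0B 00 00 00 E9 16 00 00 00 90 90 90 90 90 90"
print(parse_hex_line(sample)[:6])
# [232, 11, 0, 0, 0, 233]
```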

I found this GitHub script, https://github.com/ncarkaci/binary-to-image/blob/master/binary2image.py, for converting binary files to images. I used it as a starting point and tweaked it slightly for my needs.

The function below reads a data file one byte at a time, converts each byte to its integer value (0 to 255) with ord(), and appends it to the "binary_values" list.

def getBinaryData(filename):
    binary_values = []
    with open(filename, 'rb') as fileobject:
        # read the file one byte at a time
        data = fileobject.read(1)  # read the first byte
        while data != b'':  # an empty bytes object signals the end of the file
            binary_values.append(ord(data))  # integer value (0-255) of the byte
            data = fileobject.read(1)  # read the next byte
    return binary_values  # list of integer byte values

The function below sets up the data for conversion to an image. From the length of the data, it calculates the width and height of the image to create. I used the same specifications found in the original paper.
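As a compact restatement of the sizing rule, the sketch below maps a data length to a width and height using a threshold table. The names `width_for` and `image_shape` are hypothetical, and the sketch only mirrors the case where no width is passed in; the thresholds are the ones used in the function that follows.

```python
# (upper bound in KB, width) pairs; lengths below 10 KB get width 32
WIDTH_TABLE = [(30, 64), (60, 128), (100, 256), (200, 384), (500, 512), (1000, 768)]

def width_for(size):
    """Pick an image width from the data length."""
    if size < 10 * 1024:
        return 32
    for upper_kb, width in WIDTH_TABLE:
        if size <= upper_kb * 1024:
            return width
    return 1024

def image_shape(size):
    """Return (width, height), with one extra row to hold any remainder."""
    width = width_for(size)
    return (width, size // width + 1)

print(image_shape(20000))
# (64, 313)
```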

def get_size(data_length, width=None):
    if width is None:  # the caller did not pass in a hardcoded width value
        size = data_length
        # pick the width from the data length (10240 = 10 * 1024)
        if (size < 10240):
            width = 32
        elif (10240 <= size <= 10240 * 3):
            width = 64
        elif (10240 * 3 <= size <= 10240 * 6):
            width = 128
        elif (10240 * 6 <= size <= 10240 * 10):
            width = 256
        elif (10240 * 10 <= size <= 10240 * 20):
            width = 384
        elif (10240 * 20 <= size <= 10240 * 50):
            width = 512
        elif (10240 * 50 <= size <= 10240 * 100):
            width = 768
        else:
            width = 1024
        height = int(size / width) + 1
    else:
        # note: a passed-in width is not used as given; the image is made roughly square
        width = int(math.sqrt(data_length)) + 1
        height = width
    return (width, height)

The function below saves the converted image to a .png file.

def save_file(filename, data, size, image_type):
    try:
        image = Image.new(image_type, size)
        image.putdata(data)
        # set up the output filename: <input dir>/<image_type>/<name>.png
        dirname = os.path.dirname(filename)
        name, _ = os.path.splitext(filename)
        name = os.path.basename(name)
        imagename = dirname + os.sep + image_type + os.sep + name + '.png'
        os.makedirs(os.path.dirname(imagename), exist_ok=True)
        image.save(imagename)
        print('The file', imagename, 'saved.')
    except Exception as err:
        print(err)

The function below converts a binary file into a greyscale image. I have commented out the save_file call, as I only use the RGB .png files for training and testing.

def createGreyScaleImage(filename, width=None):
    greyscale_data = getBinaryData(filename)
    size = get_size(len(greyscale_data), width)
    #save_file(filename, greyscale_data, size, 'L')

The function below is the important one: it groups the byte values into 24-bit pixels (8 bits each for red, green, and blue) and saves the result as an RGB .png file.
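To illustrate the grouping step on a small input, here is a minimal sketch that slices a list of byte values into (R, G, B) triples. `to_rgb_triples` is a hypothetical name. Unlike a loop that stops as soon as `index + 3` reaches the list length, it keeps the final complete triple and drops only leftover values that do not fill a pixel.

```python
def to_rgb_triples(values):
    """Group a flat list of byte values into (R, G, B) tuples."""
    usable = len(values) - len(values) % 3  # ignore a trailing partial pixel
    return [tuple(values[i:i + 3]) for i in range(0, usable, 3)]

print(to_rgb_triples([77, 90, 144, 0, 3, 0, 255]))
# [(77, 90, 144), (0, 3, 0)]
```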

def createRGBImage(filename, width=None):
    """
    Create RGB image from 24 bit binary data 8bit Red, 8 bit Green, 8bit Blue
    :param filename: path of the binary file to convert
    """
    index = 0
    rgb_data = []
    # Read binary file
    binary_data = getBinaryData(filename)
    # Create R,G,B pixels from consecutive groups of three byte values
    # (the strict < skips the final triple when the length is an exact multiple of 3)
    while (index + 3) < len(binary_data):
        R = binary_data[index]
        G = binary_data[index+1]
        B = binary_data[index+2]
        index += 3
        rgb_data.append((R, G, B))
    size = get_size(len(rgb_data), width)
    save_file(filename, rgb_data, size, 'RGB')

The function below is the wrapper I wrote for the functions above. It reads the binary data, finds the image size based on the file size, and then converts the file to an RGB image, which is saved.

def main(filename):
    Binary_Values = getBinaryData(filename)
    size = get_size(data_length = len(Binary_Values))
    rgbImage = createRGBImage(filename)
    return Binary_Values

With 10,868 binary files, I iterated over every file in the specified directory and called the main function on each one. The end result is a folder called RGB in the trainBytes directory holding 10,868 .png files. I made the block below a markdown cell to prevent it from running again, as the initial pass took nearly 24 hours.
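Part of that runtime plausibly comes from reading each file one byte at a time, and from `main` reading every file twice (once directly and once inside `createRGBImage`). As a sketch of a cheaper alternative: iterating over a `bytes` object in Python 3 already yields integers, so the whole file can be read in one call. `read_byte_values` is a hypothetical name, and the speedup on this data set is not measured here.

```python
import os
import tempfile

def read_byte_values(filename):
    """Return the file's bytes as a list of integers (0-255)."""
    with open(filename, 'rb') as fileobject:
        return list(fileobject.read())  # iterating over bytes yields ints

# small self-check on a temporary file
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b'00401000 E8\r\n')
    path = tmp.name
values = read_byte_values(path)
os.remove(path)
print(values[:4])
# [48, 48, 52, 48]
```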

directory = r"C:\Users\mitch\ISA480FinalProject\trainBytes"
for filename in os.listdir(directory):
    main(filename = directory + os.sep + filename)

Below are two examples of what the RGB images created from the binary code look like. To the naked eye, there is not much variation and there are no obviously interesting regions.

img=mpimg.imread(r'C:\Users\fairwemr\ISA 480\FinalProject\RGB\0A32eTdBKayjCWhZqDOQ.png')
imgplot = plt.imshow(img)
plt.show()

img=mpimg.imread(r'C:\Users\fairwemr\ISA 480\FinalProject\RGB\8Z5zugCOx3cTB6ikpbSq.png')
imgplot = plt.imshow(img)
plt.show()

The Kaggle website also provides a csv file that holds each file's ID along with its malware class, given as an integer from 1 through 9.

train_malware = pd.read_csv(r"C:\Users\fairwemr\ISA 480\FinalProject\trainLabels.csv")

train_malware

| | Id | Class |
|---|---|---|
| 0 | 01kcPWA9K2BOxQeS5Rju | 1 |
| 1 | 04EjIdbPV5e1XroFOpiN | 1 |
| 2 | 05EeG39MTRrI6VY21DPd | 1 |
| 3 | 05rJTUWYAKNegBk2wE8X | 1 |
| 4 | 0AnoOZDNbPXIr2MRBSCJ | 1 |
| ... | ... | ... |
| 10863 | KFrZ0Lop1WDGwUtkusCi | 9 |
| 10864 | kg24YRJTB8DNdKMXpwOH | 9 |
| 10865 | kG29BLiFYPgWtpb350sO | 9 |
| 10866 | kGITL4OJxYMWEQ1bKBiP | 9 |
| 10867 | KGorN9J6XAC4bOEkmyup | 9 |

10868 rows × 2 columns

Below, I match the IDs from Kaggle's label file with the PNG file names. The output is the original label table with a third column that holds the image path for each observation.

train_folder = r"C:\Users\fairwemr\ISA 480\FinalProject\RGB"

train_malware['image_path'] = train_malware.apply( lambda x: (train_folder + os.sep + x["Id"] + ".png"), axis=1)

pd.options.display.max_colwidth = 100

train_malware

| | Id | Class | image_path |
|---|---|---|---|
| 0 | 01kcPWA9K2BOxQeS5Rju | 1 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\01kcPWA9K2BOxQeS5Rju.png |
| 1 | 04EjIdbPV5e1XroFOpiN | 1 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\04EjIdbPV5e1XroFOpiN.png |
| 2 | 05EeG39MTRrI6VY21DPd | 1 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\05EeG39MTRrI6VY21DPd.png |
| 3 | 05rJTUWYAKNegBk2wE8X | 1 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\05rJTUWYAKNegBk2wE8X.png |
| 4 | 0AnoOZDNbPXIr2MRBSCJ | 1 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\0AnoOZDNbPXIr2MRBSCJ.png |
| ... | ... | ... | ... |
| 10863 | KFrZ0Lop1WDGwUtkusCi | 9 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\KFrZ0Lop1WDGwUtkusCi.png |
| 10864 | kg24YRJTB8DNdKMXpwOH | 9 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\kg24YRJTB8DNdKMXpwOH.png |
| 10865 | kG29BLiFYPgWtpb350sO | 9 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\kG29BLiFYPgWtpb350sO.png |
| 10866 | kGITL4OJxYMWEQ1bKBiP | 9 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\kGITL4OJxYMWEQ1bKBiP.png |
| 10867 | KGorN9J6XAC4bOEkmyup | 9 | C:\Users\fairwemr\ISA 480\FinalProject\RGB\KGorN9J6XAC4bOEkmyup.png |

10868 rows × 3 columns
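Since the image paths are built from the Id column by string concatenation, a quick check that every path points at a real file can catch missing or misnamed images before training. A minimal sketch, where `missing_paths` is a hypothetical helper that is not part of the original notebook:

```python
import os

def missing_paths(paths):
    """Return the paths that do not exist on disk."""
    return [p for p in paths if not os.path.exists(p)]

# With the table above, this could be called as:
# missing = missing_paths(train_malware['image_path'])
# print(len(missing), 'images are missing')
```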

Getting the data ready to feed into the model.

target_labels = train_malware['Class']

train_data = np.array([img_to_array(load_img(img, target_size=(299, 299))) for img in train_malware[r'image_path'].values.tolist()]).astype('float32')

x_train, x_validation, y_train, y_validation = train_test_split(train_data, target_labels, test_size=0.2, stratify=np.array(target_labels), random_state=100)

In the original paper, the final model was based on the Inception V1 model, which used images resized to 224x224. However, from what I can find, Inception V1 is not among the pre-trained models that ship with Keras, so I used Inception V3 as my starting point. The target image size for this model is 299x299.

print ('x_train shape = ', x_train.shape)
print ('x_validation shape = ', x_validation.shape)

x_train shape = (8694, 299, 299, 3)
x_validation shape = (2174, 299, 299, 3)
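The shapes above also give a rough idea of the memory needed to hold the images as float32 arrays. A back-of-the-envelope calculation, assuming 4 bytes per value and ignoring any temporary copies made by `np.array` and `train_test_split`:

```python
BYTES_PER_FLOAT32 = 4

def array_bytes(n_images, height=299, width=299, channels=3):
    """Size in bytes of a float32 image array of the given shape."""
    return n_images * height * width * channels * BYTES_PER_FLOAT32

total = array_bytes(8694 + 2174)  # train + validation images
print(total, 'bytes, about', round(total / 1e9, 2), 'GB')
# 11659320816 bytes, about 11.66 GB
```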

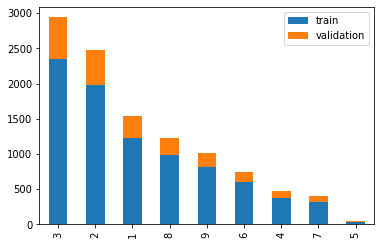

# Calculate the value counts for train and validation data and plot to show the partitioning of the data

data = y_train.value_counts().sort_index().to_frame() # this creates the data frame with train numbers

data.columns = ['train']

data['validation'] = y_validation.value_counts().sort_index().to_frame() # add the validation numbers

new_plot = data[['train','validation']].sort_values(['train']+['validation'], ascending=False) # sort the data

new_plot.plot(kind='bar', stacked=True)

plt.show()

y_train = pd.get_dummies(y_train.reset_index(drop=True))
y_validation = pd.get_dummies(y_validation.reset_index(drop=True))

Many image classification pipelines augment the training images with resizing, zooming, rotation, and similar transformations so that the neural network picks up the underlying pixel patterns more robustly. However, because the original paper made no mention of such techniques, I was unsure whether to follow that path. I also reasoned that because these images are generated from binary code, rather than photographed by people from different angles, those manipulations are less applicable to classifying RGB images of malware than to other image classification tasks.

# Create train generator (no augmentation; it only batches and shuffles the data).
train_datagen = ImageDataGenerator()
train_generator = train_datagen.flow(x_train, y_train, shuffle=True, batch_size=100, seed=10)

# Create validation generator
val_datagen = ImageDataGenerator()
val_generator = val_datagen.flow(x_validation, y_validation, shuffle=True, batch_size=10, seed=10)

In the paper, the authors claim that their technique can be applied to a variety of pre-trained neural networks. Their "final" model, however, was based on the pre-trained Inception V1. I could not find that model among those available in Keras, while Inception V3 is, so Inception V3 is the first model I tried to retrain.
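One detail that may be worth checking when reusing ImageNet weights is input scaling. In Keras, `inception_v3.preprocess_input` rescales pixel values from [0, 255] to [-1, 1], whereas the generators above pass the raw values through. The NumPy sketch below reproduces that scaling for illustration only. `scale_like_inception` is a hypothetical name, and whether the scaling helps on these malware images is not something this write-up tested.

```python
import numpy as np

def scale_like_inception(x):
    """Map pixel values from [0, 255] to [-1, 1]."""
    return np.asarray(x, dtype='float32') / 127.5 - 1.0

pixels = np.array([0.0, 127.5, 255.0])
print(scale_like_inception(pixels))
```

A function of this kind could be supplied through the `preprocessing_function` argument of `ImageDataGenerator`, which applies it to each image before batching.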

# Get the InceptionV3 model
# include_top=False lets me add my own pooling and output layers
base_inception_model = InceptionV3(weights = 'imagenet', include_top = False, input_shape=(299, 299, 3))

From my limited but growing knowledge, global average pooling layers help prevent overfitting. Nearly every resource I found on retraining Inception models adds this layer, and it aligned with my goals, so I did as well.
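To make the effect of the pooling layer concrete: global average pooling replaces each feature map with its mean, so a batch of shape (batch, height, width, channels) becomes (batch, channels). The NumPy sketch below imitates this. The 8x8x2048 shape is what InceptionV3 without its top is expected to output for 299x299 inputs; it is stated here as an assumption based on the standard architecture.

```python
import numpy as np

def global_average_pool(features):
    """Average over the spatial axes of a (batch, H, W, C) array."""
    return features.mean(axis=(1, 2))

features = np.random.rand(2, 8, 8, 2048).astype('float32')
pooled = global_average_pool(features)
print(features.shape, '->', pooled.shape)
# (2, 8, 8, 2048) -> (2, 2048)
```

The layer has no weights of its own, so it adds no trainable parameters, which is one reason it is often described as less prone to overfitting than flattening followed by a large dense layer.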

# Add a global spatial average pooling layer.
x = base_inception_model.output
x = GlobalAveragePooling2D()(x)

Many of the resources I found add a dense layer with far more nodes than the 512 I ended up with, some upwards of 5000. I tried this at first, but retraining took far too long and the results were abysmal on both the training and testing data. After a lot of research, I found many people recommending a value between 400 and 800 to improve performance on both partitions and speed up training.
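The cost of a wider dense layer can be estimated from its parameter count: a dense layer with n inputs and m units has n*m weights plus m biases. The sketch below compares a 512-unit and a 5000-unit head, assuming the pooled InceptionV3 feature vector has 2048 values (an assumption based on the standard architecture) and 9 output classes. The helper names are hypothetical.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_units + n_units

def head_params(hidden_units, n_features=2048, n_classes=9):
    """Parameters in a dense hidden layer followed by a softmax output layer."""
    return dense_params(n_features, hidden_units) + dense_params(hidden_units, n_classes)

print(head_params(512))   # 1053705
print(head_params(5000))  # 10290009
```

Under these assumptions, the 5000-unit head has roughly ten times as many trainable parameters as the 512-unit one.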

# Add a fully-connected layer and a softmax output layer with 9 outputs, one for each class of malware
x = Dense(512, activation='relu')(x)
predictions = Dense(9, activation='softmax')(x)

# The model we will train
inceptionV3Model = Model(inputs = base_inception_model.input, outputs = predictions)

The original paper was not very clear about which layers of the network were trained and which kept their pre-trained weights. The authors mention that they froze everything but the last few layers, and explicitly say they trained the final pooling layer. Because the final pooling layer is one that I added myself, I froze all of the base model layers and left only the layers I added trainable: the pooling layer, the dense layer, and the output layer. A global average pooling layer has no weights of its own, so in practice only the two dense layers are updated.

# first: train only the top layers i.e. freeze all convolutional InceptionV3 layers
for layer in base_inception_model.layers:
    layer.trainable = False

print(inceptionV3Model.summary())

Model: "model_1"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) (None, 299, 299, 3) 0

__________________________________________________________________________________________________

conv2d_1 (Conv2D) (None, 149, 149, 32) 864 input_1[0][0]

__________________________________________________________________________________________________

batch_normalization_1 (BatchNor (None, 149, 149, 32) 96 conv2d_1[0][0]

__________________________________________________________________________________________________

activation_1 (Activation) (None, 149, 149, 32) 0 batch_normalization_1[0][0]

__________________________________________________________________________________________________

conv2d_2 (Conv2D) (None, 147, 147, 32) 9216 activation_1[0][0]

__________________________________________________________________________________________________

batch_normalization_2 (BatchNor (None, 147, 147, 32) 96 conv2d_2[0][0]

__________________________________________________________________________________________________

activation_2 (Activation) (None, 147, 147, 32) 0 batch_normalization_2[0][0]

__________________________________________________________________________________________________

conv2d_3 (Conv2D) (None, 147, 147, 64) 18432 activation_2[0][0]

__________________________________________________________________________________________________

batch_normalization_3 (BatchNor (None, 147, 147, 64) 192 conv2d_3[0][0]

__________________________________________________________________________________________________

activation_3 (Activation) (None, 147, 147, 64) 0 batch_normalization_3[0][0]

__________________________________________________________________________________________________

max_pooling2d_1 (MaxPooling2D) (None, 73, 73, 64) 0 activation_3[0][0]

__________________________________________________________________________________________________

conv2d_4 (Conv2D) (None, 73, 73, 80) 5120 max_pooling2d_1[0][0]

__________________________________________________________________________________________________

batch_normalization_4 (BatchNor (None, 73, 73, 80) 240 conv2d_4[0][0]

__________________________________________________________________________________________________

activation_4 (Activation) (None, 73, 73, 80) 0 batch_normalization_4[0][0]

__________________________________________________________________________________________________

conv2d_5 (Conv2D) (None, 71, 71, 192) 138240 activation_4[0][0]

__________________________________________________________________________________________________

batch_normalization_5 (BatchNor (None, 71, 71, 192) 576 conv2d_5[0][0]

__________________________________________________________________________________________________

activation_5 (Activation) (None, 71, 71, 192) 0 batch_normalization_5[0][0]

__________________________________________________________________________________________________

max_pooling2d_2 (MaxPooling2D) (None, 35, 35, 192) 0 activation_5[0][0]

__________________________________________________________________________________________________

conv2d_9 (Conv2D) (None, 35, 35, 64) 12288 max_pooling2d_2[0][0]

__________________________________________________________________________________________________

batch_normalization_9 (BatchNor (None, 35, 35, 64) 192 conv2d_9[0][0]

__________________________________________________________________________________________________

activation_9 (Activation) (None, 35, 35, 64) 0 batch_normalization_9[0][0]

__________________________________________________________________________________________________

conv2d_7 (Conv2D) (None, 35, 35, 48) 9216 max_pooling2d_2[0][0]

__________________________________________________________________________________________________

conv2d_10 (Conv2D) (None, 35, 35, 96) 55296 activation_9[0][0]

__________________________________________________________________________________________________

batch_normalization_7 (BatchNor (None, 35, 35, 48) 144 conv2d_7[0][0]

__________________________________________________________________________________________________

batch_normalization_10 (BatchNo (None, 35, 35, 96) 288 conv2d_10[0][0]

__________________________________________________________________________________________________

activation_7 (Activation) (None, 35, 35, 48) 0 batch_normalization_7[0][0]

__________________________________________________________________________________________________

activation_10 (Activation) (None, 35, 35, 96) 0 batch_normalization_10[0][0]

__________________________________________________________________________________________________

average_pooling2d_1 (AveragePoo (None, 35, 35, 192) 0 max_pooling2d_2[0][0]

__________________________________________________________________________________________________

conv2d_6 (Conv2D) (None, 35, 35, 64) 12288 max_pooling2d_2[0][0]

__________________________________________________________________________________________________

conv2d_8 (Conv2D) (None, 35, 35, 64) 76800 activation_7[0][0]

__________________________________________________________________________________________________

conv2d_11 (Conv2D) (None, 35, 35, 96) 82944 activation_10[0][0]

__________________________________________________________________________________________________

conv2d_12 (Conv2D) (None, 35, 35, 32) 6144 average_pooling2d_1[0][0]

__________________________________________________________________________________________________

batch_normalization_6 (BatchNor (None, 35, 35, 64) 192 conv2d_6[0][0]

__________________________________________________________________________________________________

batch_normalization_8 (BatchNor (None, 35, 35, 64) 192 conv2d_8[0][0]

__________________________________________________________________________________________________

batch_normalization_11 (BatchNo (None, 35, 35, 96) 288 conv2d_11[0][0]

__________________________________________________________________________________________________

batch_normalization_12 (BatchNo (None, 35, 35, 32) 96 conv2d_12[0][0]

__________________________________________________________________________________________________

activation_6 (Activation) (None, 35, 35, 64) 0 batch_normalization_6[0][0]

__________________________________________________________________________________________________

activation_8 (Activation) (None, 35, 35, 64) 0 batch_normalization_8[0][0]

__________________________________________________________________________________________________

activation_11 (Activation) (None, 35, 35, 96) 0 batch_normalization_11[0][0]

__________________________________________________________________________________________________

activation_12 (Activation) (None, 35, 35, 32) 0 batch_normalization_12[0][0]

__________________________________________________________________________________________________

mixed0 (Concatenate) (None, 35, 35, 256) 0 activation_6[0][0]

activation_8[0][0]

activation_11[0][0]

activation_12[0][0]

__________________________________________________________________________________________________

conv2d_16 (Conv2D) (None, 35, 35, 64) 16384 mixed0[0][0]

__________________________________________________________________________________________________

batch_normalization_16 (BatchNo (None, 35, 35, 64) 192 conv2d_16[0][0]

__________________________________________________________________________________________________

activation_16 (Activation) (None, 35, 35, 64) 0 batch_normalization_16[0][0]

__________________________________________________________________________________________________

conv2d_14 (Conv2D) (None, 35, 35, 48) 12288 mixed0[0][0]

__________________________________________________________________________________________________

conv2d_17 (Conv2D) (None, 35, 35, 96) 55296 activation_16[0][0]

__________________________________________________________________________________________________

batch_normalization_14 (BatchNo (None, 35, 35, 48) 144 conv2d_14[0][0]

__________________________________________________________________________________________________

batch_normalization_17 (BatchNo (None, 35, 35, 96) 288 conv2d_17[0][0]

__________________________________________________________________________________________________

activation_14 (Activation) (None, 35, 35, 48) 0 batch_normalization_14[0][0]

__________________________________________________________________________________________________

activation_17 (Activation) (None, 35, 35, 96) 0 batch_normalization_17[0][0]

__________________________________________________________________________________________________

average_pooling2d_2 (AveragePoo (None, 35, 35, 256) 0 mixed0[0][0]

__________________________________________________________________________________________________

conv2d_13 (Conv2D) (None, 35, 35, 64) 16384 mixed0[0][0]

__________________________________________________________________________________________________

conv2d_15 (Conv2D) (None, 35, 35, 64) 76800 activation_14[0][0]

__________________________________________________________________________________________________

conv2d_18 (Conv2D) (None, 35, 35, 96) 82944 activation_17[0][0]

__________________________________________________________________________________________________

conv2d_19 (Conv2D) (None, 35, 35, 64) 16384 average_pooling2d_2[0][0]

__________________________________________________________________________________________________

batch_normalization_13 (BatchNo (None, 35, 35, 64) 192 conv2d_13[0][0]

__________________________________________________________________________________________________

batch_normalization_15 (BatchNo (None, 35, 35, 64) 192 conv2d_15[0][0]

__________________________________________________________________________________________________

batch_normalization_18 (BatchNo (None, 35, 35, 96) 288 conv2d_18[0][0]

__________________________________________________________________________________________________

batch_normalization_19 (BatchNo (None, 35, 35, 64) 192 conv2d_19[0][0]

__________________________________________________________________________________________________

activation_13 (Activation) (None, 35, 35, 64) 0 batch_normalization_13[0][0]

__________________________________________________________________________________________________

activation_15 (Activation) (None, 35, 35, 64) 0 batch_normalization_15[0][0]

__________________________________________________________________________________________________

activation_18 (Activation) (None, 35, 35, 96) 0 batch_normalization_18[0][0]

__________________________________________________________________________________________________

activation_19 (Activation) (None, 35, 35, 64) 0 batch_normalization_19[0][0]

__________________________________________________________________________________________________

mixed1 (Concatenate) (None, 35, 35, 288) 0 activation_13[0][0]

activation_15[0][0]

activation_18[0][0]

activation_19[0][0]

__________________________________________________________________________________________________

conv2d_23 (Conv2D) (None, 35, 35, 64) 18432 mixed1[0][0]

__________________________________________________________________________________________________

batch_normalization_23 (BatchNo (None, 35, 35, 64) 192 conv2d_23[0][0]

__________________________________________________________________________________________________

activation_23 (Activation) (None, 35, 35, 64) 0 batch_normalization_23[0][0]

__________________________________________________________________________________________________

conv2d_21 (Conv2D) (None, 35, 35, 48) 13824 mixed1[0][0]

__________________________________________________________________________________________________

conv2d_24 (Conv2D) (None, 35, 35, 96) 55296 activation_23[0][0]

__________________________________________________________________________________________________

batch_normalization_21 (BatchNo (None, 35, 35, 48) 144 conv2d_21[0][0]

__________________________________________________________________________________________________

batch_normalization_24 (BatchNo (None, 35, 35, 96) 288 conv2d_24[0][0]

__________________________________________________________________________________________________

activation_21 (Activation) (None, 35, 35, 48) 0 batch_normalization_21[0][0]

__________________________________________________________________________________________________

activation_24 (Activation) (None, 35, 35, 96) 0 batch_normalization_24[0][0]

__________________________________________________________________________________________________

average_pooling2d_3 (AveragePoo (None, 35, 35, 288) 0 mixed1[0][0]

__________________________________________________________________________________________________

conv2d_20 (Conv2D) (None, 35, 35, 64) 18432 mixed1[0][0]

__________________________________________________________________________________________________

conv2d_22 (Conv2D) (None, 35, 35, 64) 76800 activation_21[0][0]

__________________________________________________________________________________________________

conv2d_25 (Conv2D) (None, 35, 35, 96) 82944 activation_24[0][0]

__________________________________________________________________________________________________

conv2d_26 (Conv2D) (None, 35, 35, 64) 18432 average_pooling2d_3[0][0]

__________________________________________________________________________________________________

batch_normalization_20 (BatchNo (None, 35, 35, 64) 192 conv2d_20[0][0]

__________________________________________________________________________________________________

batch_normalization_22 (BatchNo (None, 35, 35, 64) 192 conv2d_22[0][0]

__________________________________________________________________________________________________

batch_normalization_25 (BatchNo (None, 35, 35, 96) 288 conv2d_25[0][0]

__________________________________________________________________________________________________

batch_normalization_26 (BatchNo (None, 35, 35, 64) 192 conv2d_26[0][0]

__________________________________________________________________________________________________

activation_20 (Activation) (None, 35, 35, 64) 0 batch_normalization_20[0][0]

__________________________________________________________________________________________________

activation_22 (Activation) (None, 35, 35, 64) 0 batch_normalization_22[0][0]

__________________________________________________________________________________________________

activation_25 (Activation) (None, 35, 35, 96) 0 batch_normalization_25[0][0]

__________________________________________________________________________________________________

activation_26 (Activation) (None, 35, 35, 64) 0 batch_normalization_26[0][0]

__________________________________________________________________________________________________

mixed2 (Concatenate) (None, 35, 35, 288) 0 activation_20[0][0]

activation_22[0][0]

activation_25[0][0]

activation_26[0][0]

__________________________________________________________________________________________________

conv2d_28 (Conv2D) (None, 35, 35, 64) 18432 mixed2[0][0]

__________________________________________________________________________________________________

batch_normalization_28 (BatchNo (None, 35, 35, 64) 192 conv2d_28[0][0]

__________________________________________________________________________________________________

activation_28 (Activation) (None, 35, 35, 64) 0 batch_normalization_28[0][0]

__________________________________________________________________________________________________

conv2d_29 (Conv2D) (None, 35, 35, 96) 55296 activation_28[0][0]

__________________________________________________________________________________________________

batch_normalization_29 (BatchNo (None, 35, 35, 96) 288 conv2d_29[0][0]

__________________________________________________________________________________________________

activation_29 (Activation) (None, 35, 35, 96) 0 batch_normalization_29[0][0]

__________________________________________________________________________________________________

conv2d_27 (Conv2D) (None, 17, 17, 384) 995328 mixed2[0][0]

__________________________________________________________________________________________________

conv2d_30 (Conv2D) (None, 17, 17, 96) 82944 activation_29[0][0]

__________________________________________________________________________________________________

batch_normalization_27 (BatchNo (None, 17, 17, 384) 1152 conv2d_27[0][0]

__________________________________________________________________________________________________

batch_normalization_30 (BatchNo (None, 17, 17, 96) 288 conv2d_30[0][0]

__________________________________________________________________________________________________

activation_27 (Activation) (None, 17, 17, 384) 0 batch_normalization_27[0][0]

__________________________________________________________________________________________________

activation_30 (Activation) (None, 17, 17, 96) 0 batch_normalization_30[0][0]

__________________________________________________________________________________________________

max_pooling2d_3 (MaxPooling2D) (None, 17, 17, 288) 0 mixed2[0][0]

__________________________________________________________________________________________________

mixed3 (Concatenate) (None, 17, 17, 768) 0 activation_27[0][0]

activation_30[0][0]

max_pooling2d_3[0][0]

__________________________________________________________________________________________________

conv2d_35 (Conv2D) (None, 17, 17, 128) 98304 mixed3[0][0]

__________________________________________________________________________________________________

batch_normalization_35 (BatchNo (None, 17, 17, 128) 384 conv2d_35[0][0]

__________________________________________________________________________________________________

activation_35 (Activation) (None, 17, 17, 128) 0 batch_normalization_35[0][0]

__________________________________________________________________________________________________

conv2d_36 (Conv2D) (None, 17, 17, 128) 114688 activation_35[0][0]

__________________________________________________________________________________________________

batch_normalization_36 (BatchNo (None, 17, 17, 128) 384 conv2d_36[0][0]

__________________________________________________________________________________________________

activation_36 (Activation) (None, 17, 17, 128) 0 batch_normalization_36[0][0]

__________________________________________________________________________________________________

conv2d_32 (Conv2D) (None, 17, 17, 128) 98304 mixed3[0][0]

__________________________________________________________________________________________________

conv2d_37 (Conv2D) (None, 17, 17, 128) 114688 activation_36[0][0]

__________________________________________________________________________________________________

batch_normalization_32 (BatchNo (None, 17, 17, 128) 384 conv2d_32[0][0]

__________________________________________________________________________________________________

batch_normalization_37 (BatchNo (None, 17, 17, 128) 384 conv2d_37[0][0]

__________________________________________________________________________________________________

activation_32 (Activation) (None, 17, 17, 128) 0 batch_normalization_32[0][0]

__________________________________________________________________________________________________

activation_37 (Activation) (None, 17, 17, 128) 0 batch_normalization_37[0][0]

__________________________________________________________________________________________________

conv2d_33 (Conv2D) (None, 17, 17, 128) 114688 activation_32[0][0]

__________________________________________________________________________________________________

conv2d_38 (Conv2D) (None, 17, 17, 128) 114688 activation_37[0][0]

__________________________________________________________________________________________________

batch_normalization_33 (BatchNo (None, 17, 17, 128) 384 conv2d_33[0][0]

__________________________________________________________________________________________________

batch_normalization_38 (BatchNo (None, 17, 17, 128) 384 conv2d_38[0][0]

__________________________________________________________________________________________________

activation_33 (Activation) (None, 17, 17, 128) 0 batch_normalization_33[0][0]

__________________________________________________________________________________________________

activation_38 (Activation) (None, 17, 17, 128) 0 batch_normalization_38[0][0]

__________________________________________________________________________________________________

average_pooling2d_4 (AveragePoo (None, 17, 17, 768) 0 mixed3[0][0]

__________________________________________________________________________________________________

conv2d_31 (Conv2D) (None, 17, 17, 192) 147456 mixed3[0][0]

__________________________________________________________________________________________________

conv2d_34 (Conv2D) (None, 17, 17, 192) 172032 activation_33[0][0]

__________________________________________________________________________________________________

conv2d_39 (Conv2D) (None, 17, 17, 192) 172032 activation_38[0][0]

__________________________________________________________________________________________________

conv2d_40 (Conv2D) (None, 17, 17, 192) 147456 average_pooling2d_4[0][0]

__________________________________________________________________________________________________

batch_normalization_31 (BatchNo (None, 17, 17, 192) 576 conv2d_31[0][0]

__________________________________________________________________________________________________

batch_normalization_34 (BatchNo (None, 17, 17, 192) 576 conv2d_34[0][0]

__________________________________________________________________________________________________

batch_normalization_39 (BatchNo (None, 17, 17, 192) 576 conv2d_39[0][0]

__________________________________________________________________________________________________

batch_normalization_40 (BatchNo (None, 17, 17, 192) 576 conv2d_40[0][0]

__________________________________________________________________________________________________

activation_31 (Activation) (None, 17, 17, 192) 0 batch_normalization_31[0][0]

__________________________________________________________________________________________________

activation_34 (Activation) (None, 17, 17, 192) 0 batch_normalization_34[0][0]

__________________________________________________________________________________________________

activation_39 (Activation) (None, 17, 17, 192) 0 batch_normalization_39[0][0]

__________________________________________________________________________________________________

activation_40 (Activation) (None, 17, 17, 192) 0 batch_normalization_40[0][0]

__________________________________________________________________________________________________

mixed4 (Concatenate) (None, 17, 17, 768) 0 activation_31[0][0], activation_34[0][0], activation_39[0][0], activation_40[0][0]

__________________________________________________________________________________________________

conv2d_45 (Conv2D) (None, 17, 17, 160) 122880 mixed4[0][0]

__________________________________________________________________________________________________

batch_normalization_45 (BatchNo (None, 17, 17, 160) 480 conv2d_45[0][0]

__________________________________________________________________________________________________

activation_45 (Activation) (None, 17, 17, 160) 0 batch_normalization_45[0][0]

__________________________________________________________________________________________________

conv2d_46 (Conv2D) (None, 17, 17, 160) 179200 activation_45[0][0]

__________________________________________________________________________________________________

batch_normalization_46 (BatchNo (None, 17, 17, 160) 480 conv2d_46[0][0]

__________________________________________________________________________________________________

activation_46 (Activation) (None, 17, 17, 160) 0 batch_normalization_46[0][0]

__________________________________________________________________________________________________

conv2d_42 (Conv2D) (None, 17, 17, 160) 122880 mixed4[0][0]

__________________________________________________________________________________________________

conv2d_47 (Conv2D) (None, 17, 17, 160) 179200 activation_46[0][0]

__________________________________________________________________________________________________

batch_normalization_42 (BatchNo (None, 17, 17, 160) 480 conv2d_42[0][0]

__________________________________________________________________________________________________

batch_normalization_47 (BatchNo (None, 17, 17, 160) 480 conv2d_47[0][0]

__________________________________________________________________________________________________

activation_42 (Activation) (None, 17, 17, 160) 0 batch_normalization_42[0][0]

__________________________________________________________________________________________________

activation_47 (Activation) (None, 17, 17, 160) 0 batch_normalization_47[0][0]

__________________________________________________________________________________________________

conv2d_43 (Conv2D) (None, 17, 17, 160) 179200 activation_42[0][0]

__________________________________________________________________________________________________

conv2d_48 (Conv2D) (None, 17, 17, 160) 179200 activation_47[0][0]

__________________________________________________________________________________________________

batch_normalization_43 (BatchNo (None, 17, 17, 160) 480 conv2d_43[0][0]

__________________________________________________________________________________________________

batch_normalization_48 (BatchNo (None, 17, 17, 160) 480 conv2d_48[0][0]

__________________________________________________________________________________________________

activation_43 (Activation) (None, 17, 17, 160) 0 batch_normalization_43[0][0]

__________________________________________________________________________________________________

activation_48 (Activation) (None, 17, 17, 160) 0 batch_normalization_48[0][0]

__________________________________________________________________________________________________

average_pooling2d_5 (AveragePoo (None, 17, 17, 768) 0 mixed4[0][0]

__________________________________________________________________________________________________

conv2d_41 (Conv2D) (None, 17, 17, 192) 147456 mixed4[0][0]

__________________________________________________________________________________________________

conv2d_44 (Conv2D) (None, 17, 17, 192) 215040 activation_43[0][0]

__________________________________________________________________________________________________

conv2d_49 (Conv2D) (None, 17, 17, 192) 215040 activation_48[0][0]

__________________________________________________________________________________________________

conv2d_50 (Conv2D) (None, 17, 17, 192) 147456 average_pooling2d_5[0][0]

__________________________________________________________________________________________________

batch_normalization_41 (BatchNo (None, 17, 17, 192) 576 conv2d_41[0][0]

__________________________________________________________________________________________________

batch_normalization_44 (BatchNo (None, 17, 17, 192) 576 conv2d_44[0][0]

__________________________________________________________________________________________________

batch_normalization_49 (BatchNo (None, 17, 17, 192) 576 conv2d_49[0][0]

__________________________________________________________________________________________________

batch_normalization_50 (BatchNo (None, 17, 17, 192) 576 conv2d_50[0][0]

__________________________________________________________________________________________________

activation_41 (Activation) (None, 17, 17, 192) 0 batch_normalization_41[0][0]

__________________________________________________________________________________________________

activation_44 (Activation) (None, 17, 17, 192) 0 batch_normalization_44[0][0]

__________________________________________________________________________________________________

activation_49 (Activation) (None, 17, 17, 192) 0 batch_normalization_49[0][0]

__________________________________________________________________________________________________

activation_50 (Activation) (None, 17, 17, 192) 0 batch_normalization_50[0][0]

__________________________________________________________________________________________________

mixed5 (Concatenate) (None, 17, 17, 768) 0 activation_41[0][0], activation_44[0][0], activation_49[0][0], activation_50[0][0]

__________________________________________________________________________________________________

conv2d_55 (Conv2D) (None, 17, 17, 160) 122880 mixed5[0][0]

__________________________________________________________________________________________________

batch_normalization_55 (BatchNo (None, 17, 17, 160) 480 conv2d_55[0][0]

__________________________________________________________________________________________________

activation_55 (Activation) (None, 17, 17, 160) 0 batch_normalization_55[0][0]

__________________________________________________________________________________________________

conv2d_56 (Conv2D) (None, 17, 17, 160) 179200 activation_55[0][0]

__________________________________________________________________________________________________

batch_normalization_56 (BatchNo (None, 17, 17, 160) 480 conv2d_56[0][0]

__________________________________________________________________________________________________

activation_56 (Activation) (None, 17, 17, 160) 0 batch_normalization_56[0][0]

__________________________________________________________________________________________________

conv2d_52 (Conv2D) (None, 17, 17, 160) 122880 mixed5[0][0]

__________________________________________________________________________________________________

conv2d_57 (Conv2D) (None, 17, 17, 160) 179200 activation_56[0][0]

__________________________________________________________________________________________________

batch_normalization_52 (BatchNo (None, 17, 17, 160) 480 conv2d_52[0][0]

__________________________________________________________________________________________________

batch_normalization_57 (BatchNo (None, 17, 17, 160) 480 conv2d_57[0][0]

__________________________________________________________________________________________________

activation_52 (Activation) (None, 17, 17, 160) 0 batch_normalization_52[0][0]

__________________________________________________________________________________________________

activation_57 (Activation) (None, 17, 17, 160) 0 batch_normalization_57[0][0]

__________________________________________________________________________________________________

conv2d_53 (Conv2D) (None, 17, 17, 160) 179200 activation_52[0][0]

__________________________________________________________________________________________________

conv2d_58 (Conv2D) (None, 17, 17, 160) 179200 activation_57[0][0]

__________________________________________________________________________________________________

batch_normalization_53 (BatchNo (None, 17, 17, 160) 480 conv2d_53[0][0]

__________________________________________________________________________________________________

batch_normalization_58 (BatchNo (None, 17, 17, 160) 480 conv2d_58[0][0]

__________________________________________________________________________________________________

activation_53 (Activation) (None, 17, 17, 160) 0 batch_normalization_53[0][0]

__________________________________________________________________________________________________

activation_58 (Activation) (None, 17, 17, 160) 0 batch_normalization_58[0][0]

__________________________________________________________________________________________________

average_pooling2d_6 (AveragePoo (None, 17, 17, 768) 0 mixed5[0][0]

__________________________________________________________________________________________________

conv2d_51 (Conv2D) (None, 17, 17, 192) 147456 mixed5[0][0]

__________________________________________________________________________________________________

conv2d_54 (Conv2D) (None, 17, 17, 192) 215040 activation_53[0][0]

__________________________________________________________________________________________________

conv2d_59 (Conv2D) (None, 17, 17, 192) 215040 activation_58[0][0]

__________________________________________________________________________________________________

conv2d_60 (Conv2D) (None, 17, 17, 192) 147456 average_pooling2d_6[0][0]

__________________________________________________________________________________________________

batch_normalization_51 (BatchNo (None, 17, 17, 192) 576 conv2d_51[0][0]

__________________________________________________________________________________________________

batch_normalization_54 (BatchNo (None, 17, 17, 192) 576 conv2d_54[0][0]

__________________________________________________________________________________________________

batch_normalization_59 (BatchNo (None, 17, 17, 192) 576 conv2d_59[0][0]

__________________________________________________________________________________________________

batch_normalization_60 (BatchNo (None, 17, 17, 192) 576 conv2d_60[0][0]

__________________________________________________________________________________________________

activation_51 (Activation) (None, 17, 17, 192) 0 batch_normalization_51[0][0]

__________________________________________________________________________________________________

activation_54 (Activation) (None, 17, 17, 192) 0 batch_normalization_54[0][0]

__________________________________________________________________________________________________

activation_59 (Activation) (None, 17, 17, 192) 0 batch_normalization_59[0][0]

__________________________________________________________________________________________________

activation_60 (Activation) (None, 17, 17, 192) 0 batch_normalization_60[0][0]

__________________________________________________________________________________________________

mixed6 (Concatenate) (None, 17, 17, 768) 0 activation_51[0][0], activation_54[0][0], activation_59[0][0], activation_60[0][0]

__________________________________________________________________________________________________

conv2d_65 (Conv2D) (None, 17, 17, 192) 147456 mixed6[0][0]

__________________________________________________________________________________________________

batch_normalization_65 (BatchNo (None, 17, 17, 192) 576 conv2d_65[0][0]

__________________________________________________________________________________________________

activation_65 (Activation) (None, 17, 17, 192) 0 batch_normalization_65[0][0]

__________________________________________________________________________________________________

conv2d_66 (Conv2D) (None, 17, 17, 192) 258048 activation_65[0][0]

__________________________________________________________________________________________________

batch_normalization_66 (BatchNo (None, 17, 17, 192) 576 conv2d_66[0][0]

__________________________________________________________________________________________________

activation_66 (Activation) (None, 17, 17, 192) 0 batch_normalization_66[0][0]

__________________________________________________________________________________________________

conv2d_62 (Conv2D) (None, 17, 17, 192) 147456 mixed6[0][0]

__________________________________________________________________________________________________

conv2d_67 (Conv2D) (None, 17, 17, 192) 258048 activation_66[0][0]

__________________________________________________________________________________________________

batch_normalization_62 (BatchNo (None, 17, 17, 192) 576 conv2d_62[0][0]

__________________________________________________________________________________________________

batch_normalization_67 (BatchNo (None, 17, 17, 192) 576 conv2d_67[0][0]

__________________________________________________________________________________________________

activation_62 (Activation) (None, 17, 17, 192) 0 batch_normalization_62[0][0]

__________________________________________________________________________________________________

activation_67 (Activation) (None, 17, 17, 192) 0 batch_normalization_67[0][0]

__________________________________________________________________________________________________

conv2d_63 (Conv2D) (None, 17, 17, 192) 258048 activation_62[0][0]

__________________________________________________________________________________________________

conv2d_68 (Conv2D) (None, 17, 17, 192) 258048 activation_67[0][0]

__________________________________________________________________________________________________

batch_normalization_63 (BatchNo (None, 17, 17, 192) 576 conv2d_63[0][0]

__________________________________________________________________________________________________

batch_normalization_68 (BatchNo (None, 17, 17, 192) 576 conv2d_68[0][0]

__________________________________________________________________________________________________

activation_63 (Activation) (None, 17, 17, 192) 0 batch_normalization_63[0][0]

__________________________________________________________________________________________________

activation_68 (Activation) (None, 17, 17, 192) 0 batch_normalization_68[0][0]

__________________________________________________________________________________________________

average_pooling2d_7 (AveragePoo (None, 17, 17, 768) 0 mixed6[0][0]

__________________________________________________________________________________________________

conv2d_61 (Conv2D) (None, 17, 17, 192) 147456 mixed6[0][0]

__________________________________________________________________________________________________

conv2d_64 (Conv2D) (None, 17, 17, 192) 258048 activation_63[0][0]

__________________________________________________________________________________________________

conv2d_69 (Conv2D) (None, 17, 17, 192) 258048 activation_68[0][0]

__________________________________________________________________________________________________

conv2d_70 (Conv2D) (None, 17, 17, 192) 147456 average_pooling2d_7[0][0]

__________________________________________________________________________________________________

batch_normalization_61 (BatchNo (None, 17, 17, 192) 576 conv2d_61[0][0]

__________________________________________________________________________________________________

batch_normalization_64 (BatchNo (None, 17, 17, 192) 576 conv2d_64[0][0]

__________________________________________________________________________________________________

batch_normalization_69 (BatchNo (None, 17, 17, 192) 576 conv2d_69[0][0]

__________________________________________________________________________________________________

batch_normalization_70 (BatchNo (None, 17, 17, 192) 576 conv2d_70[0][0]

__________________________________________________________________________________________________

activation_61 (Activation) (None, 17, 17, 192) 0 batch_normalization_61[0][0]

__________________________________________________________________________________________________

activation_64 (Activation) (None, 17, 17, 192) 0 batch_normalization_64[0][0]

__________________________________________________________________________________________________

activation_69 (Activation) (None, 17, 17, 192) 0 batch_normalization_69[0][0]

__________________________________________________________________________________________________

activation_70 (Activation) (None, 17, 17, 192) 0 batch_normalization_70[0][0]

__________________________________________________________________________________________________

mixed7 (Concatenate) (None, 17, 17, 768) 0 activation_61[0][0], activation_64[0][0], activation_69[0][0], activation_70[0][0]

__________________________________________________________________________________________________

conv2d_73 (Conv2D) (None, 17, 17, 192) 147456 mixed7[0][0]

__________________________________________________________________________________________________

batch_normalization_73 (BatchNo (None, 17, 17, 192) 576 conv2d_73[0][0]

__________________________________________________________________________________________________

activation_73 (Activation) (None, 17, 17, 192) 0 batch_normalization_73[0][0]

__________________________________________________________________________________________________

conv2d_74 (Conv2D) (None, 17, 17, 192) 258048 activation_73[0][0]

__________________________________________________________________________________________________

batch_normalization_74 (BatchNo (None, 17, 17, 192) 576 conv2d_74[0][0]

__________________________________________________________________________________________________

activation_74 (Activation) (None, 17, 17, 192) 0 batch_normalization_74[0][0]

__________________________________________________________________________________________________

conv2d_71 (Conv2D) (None, 17, 17, 192) 147456 mixed7[0][0]

__________________________________________________________________________________________________

conv2d_75 (Conv2D) (None, 17, 17, 192) 258048 activation_74[0][0]

__________________________________________________________________________________________________

batch_normalization_71 (BatchNo (None, 17, 17, 192) 576 conv2d_71[0][0]

__________________________________________________________________________________________________

batch_normalization_75 (BatchNo (None, 17, 17, 192) 576 conv2d_75[0][0]

__________________________________________________________________________________________________

activation_71 (Activation) (None, 17, 17, 192) 0 batch_normalization_71[0][0]

__________________________________________________________________________________________________

activation_75 (Activation) (None, 17, 17, 192) 0 batch_normalization_75[0][0]

__________________________________________________________________________________________________

conv2d_72 (Conv2D) (None, 8, 8, 320) 552960 activation_71[0][0]

__________________________________________________________________________________________________

conv2d_76 (Conv2D) (None, 8, 8, 192) 331776 activation_75[0][0]

__________________________________________________________________________________________________

batch_normalization_72 (BatchNo (None, 8, 8, 320) 960 conv2d_72[0][0]

__________________________________________________________________________________________________

batch_normalization_76 (BatchNo (None, 8, 8, 192) 576 conv2d_76[0][0]

__________________________________________________________________________________________________

activation_72 (Activation) (None, 8, 8, 320) 0 batch_normalization_72[0][0]

__________________________________________________________________________________________________

activation_76 (Activation) (None, 8, 8, 192) 0 batch_normalization_76[0][0]

__________________________________________________________________________________________________

max_pooling2d_4 (MaxPooling2D) (None, 8, 8, 768) 0 mixed7[0][0]

__________________________________________________________________________________________________

mixed8 (Concatenate) (None, 8, 8, 1280) 0 activation_72[0][0], activation_76[0][0], max_pooling2d_4[0][0]

__________________________________________________________________________________________________

conv2d_81 (Conv2D) (None, 8, 8, 448) 573440 mixed8[0][0]

__________________________________________________________________________________________________

batch_normalization_81 (BatchNo (None, 8, 8, 448) 1344 conv2d_81[0][0]

__________________________________________________________________________________________________

activation_81 (Activation) (None, 8, 8, 448) 0 batch_normalization_81[0][0]

__________________________________________________________________________________________________

conv2d_78 (Conv2D) (None, 8, 8, 384) 491520 mixed8[0][0]

__________________________________________________________________________________________________

conv2d_82 (Conv2D) (None, 8, 8, 384) 1548288 activation_81[0][0]

__________________________________________________________________________________________________

batch_normalization_78 (BatchNo (None, 8, 8, 384) 1152 conv2d_78[0][0]

__________________________________________________________________________________________________

batch_normalization_82 (BatchNo (None, 8, 8, 384) 1152 conv2d_82[0][0]

__________________________________________________________________________________________________

activation_78 (Activation) (None, 8, 8, 384) 0 batch_normalization_78[0][0]

__________________________________________________________________________________________________

activation_82 (Activation) (None, 8, 8, 384) 0 batch_normalization_82[0][0]

__________________________________________________________________________________________________

conv2d_79 (Conv2D) (None, 8, 8, 384) 442368 activation_78[0][0]

__________________________________________________________________________________________________

conv2d_80 (Conv2D) (None, 8, 8, 384) 442368 activation_78[0][0]

__________________________________________________________________________________________________

conv2d_83 (Conv2D) (None, 8, 8, 384) 442368 activation_82[0][0]

__________________________________________________________________________________________________

conv2d_84 (Conv2D) (None, 8, 8, 384) 442368 activation_82[0][0]

__________________________________________________________________________________________________

average_pooling2d_8 (AveragePoo (None, 8, 8, 1280) 0 mixed8[0][0]

__________________________________________________________________________________________________

conv2d_77 (Conv2D) (None, 8, 8, 320) 409600 mixed8[0][0]

__________________________________________________________________________________________________

batch_normalization_79 (BatchNo (None, 8, 8, 384) 1152 conv2d_79[0][0]

__________________________________________________________________________________________________

batch_normalization_80 (BatchNo (None, 8, 8, 384) 1152 conv2d_80[0][0]

__________________________________________________________________________________________________

batch_normalization_83 (BatchNo (None, 8, 8, 384) 1152 conv2d_83[0][0]

__________________________________________________________________________________________________

batch_normalization_84 (BatchNo (None, 8, 8, 384) 1152 conv2d_84[0][0]

__________________________________________________________________________________________________

conv2d_85 (Conv2D) (None, 8, 8, 192) 245760 average_pooling2d_8[0][0]

__________________________________________________________________________________________________

batch_normalization_77 (BatchNo (None, 8, 8, 320) 960 conv2d_77[0][0]

__________________________________________________________________________________________________

activation_79 (Activation) (None, 8, 8, 384) 0 batch_normalization_79[0][0]

__________________________________________________________________________________________________

activation_80 (Activation) (None, 8, 8, 384) 0 batch_normalization_80[0][0]

__________________________________________________________________________________________________

activation_83 (Activation) (None, 8, 8, 384) 0 batch_normalization_83[0][0]

__________________________________________________________________________________________________

activation_84 (Activation) (None, 8, 8, 384) 0 batch_normalization_84[0][0]

__________________________________________________________________________________________________

batch_normalization_85 (BatchNo (None, 8, 8, 192) 576 conv2d_85[0][0]

__________________________________________________________________________________________________

activation_77 (Activation) (None, 8, 8, 320) 0 batch_normalization_77[0][0]

__________________________________________________________________________________________________

mixed9_0 (Concatenate) (None, 8, 8, 768) 0 activation_79[0][0], activation_80[0][0]

__________________________________________________________________________________________________

concatenate_1 (Concatenate) (None, 8, 8, 768) 0 activation_83[0][0], activation_84[0][0]

__________________________________________________________________________________________________

activation_85 (Activation) (None, 8, 8, 192) 0 batch_normalization_85[0][0]

__________________________________________________________________________________________________

mixed9 (Concatenate) (None, 8, 8, 2048) 0 activation_77[0][0], mixed9_0[0][0], concatenate_1[0][0], activation_85[0][0]

__________________________________________________________________________________________________

conv2d_90 (Conv2D) (None, 8, 8, 448) 917504 mixed9[0][0]

__________________________________________________________________________________________________

batch_normalization_90 (BatchNo (None, 8, 8, 448) 1344 conv2d_90[0][0]

__________________________________________________________________________________________________

activation_90 (Activation) (None, 8, 8, 448) 0 batch_normalization_90[0][0]

__________________________________________________________________________________________________

conv2d_87 (Conv2D) (None, 8, 8, 384) 786432 mixed9[0][0]

__________________________________________________________________________________________________

conv2d_91 (Conv2D) (None, 8, 8, 384) 1548288 activation_90[0][0]

__________________________________________________________________________________________________

batch_normalization_87 (BatchNo (None, 8, 8, 384) 1152 conv2d_87[0][0]

__________________________________________________________________________________________________

batch_normalization_91 (BatchNo (None, 8, 8, 384) 1152 conv2d_91[0][0]

__________________________________________________________________________________________________

activation_87 (Activation) (None, 8, 8, 384) 0 batch_normalization_87[0][0]

__________________________________________________________________________________________________

activation_91 (Activation) (None, 8, 8, 384) 0 batch_normalization_91[0][0]

__________________________________________________________________________________________________

conv2d_88 (Conv2D) (None, 8, 8, 384) 442368 activation_87[0][0]

__________________________________________________________________________________________________

conv2d_89 (Conv2D) (None, 8, 8, 384) 442368 activation_87[0][0]

__________________________________________________________________________________________________

conv2d_92 (Conv2D) (None, 8, 8, 384) 442368 activation_91[0][0]

__________________________________________________________________________________________________

conv2d_93 (Conv2D) (None, 8, 8, 384) 442368 activation_91[0][0]

__________________________________________________________________________________________________

average_pooling2d_9 (AveragePoo (None, 8, 8, 2048) 0 mixed9[0][0]

__________________________________________________________________________________________________

conv2d_86 (Conv2D) (None, 8, 8, 320) 655360 mixed9[0][0]

__________________________________________________________________________________________________

batch_normalization_88 (BatchNo (None, 8, 8, 384) 1152 conv2d_88[0][0]

__________________________________________________________________________________________________

batch_normalization_89 (BatchNo (None, 8, 8, 384) 1152 conv2d_89[0][0]

__________________________________________________________________________________________________

batch_normalization_92 (BatchNo (None, 8, 8, 384) 1152 conv2d_92[0][0]

__________________________________________________________________________________________________

batch_normalization_93 (BatchNo (None, 8, 8, 384) 1152 conv2d_93[0][0]

__________________________________________________________________________________________________

conv2d_94 (Conv2D) (None, 8, 8, 192) 393216 average_pooling2d_9[0][0]

__________________________________________________________________________________________________

batch_normalization_86 (BatchNo (None, 8, 8, 320) 960 conv2d_86[0][0]

__________________________________________________________________________________________________

activation_88 (Activation) (None, 8, 8, 384) 0 batch_normalization_88[0][0]

__________________________________________________________________________________________________

activation_89 (Activation) (None, 8, 8, 384) 0 batch_normalization_89[0][0]

__________________________________________________________________________________________________

activation_92 (Activation) (None, 8, 8, 384) 0 batch_normalization_92[0][0]

__________________________________________________________________________________________________

activation_93 (Activation) (None, 8, 8, 384) 0 batch_normalization_93[0][0]

__________________________________________________________________________________________________

batch_normalization_94 (BatchNo (None, 8, 8, 192) 576 conv2d_94[0][0]

__________________________________________________________________________________________________

activation_86 (Activation) (None, 8, 8, 320) 0 batch_normalization_86[0][0]

__________________________________________________________________________________________________

mixed9_1 (Concatenate) (None, 8, 8, 768) 0 activation_88[0][0]

activation_89[0][0]

__________________________________________________________________________________________________

concatenate_2 (Concatenate) (None, 8, 8, 768) 0 activation_92[0][0]

activation_93[0][0]

__________________________________________________________________________________________________

activation_94 (Activation) (None, 8, 8, 192) 0 batch_normalization_94[0][0]

__________________________________________________________________________________________________

mixed10 (Concatenate) (None, 8, 8, 2048) 0 activation_86[0][0]

mixed9_1[0][0]

concatenate_2[0][0]

activation_94[0][0]

__________________________________________________________________________________________________

global_average_pooling2d_1 (Glo (None, 2048) 0 mixed10[0][0]

__________________________________________________________________________________________________

dense_1 (Dense) (None, 512) 1049088 global_average_pooling2d_1[0][0]

__________________________________________________________________________________________________

dense_2 (Dense) (None, 9) 4617 dense_1[0][0]

==================================================================================================

Total params: 22,856,489

Trainable params: 1,053,705

Non-trainable params: 21,802,784

__________________________________________________________________________________________________

None
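The trainable parameter count reported above can be checked by hand, since the only trainable layers are the two dense layers added on top of the frozen Inception V3 base. The following is a minimal sketch, assuming each Dense layer carries a bias vector (the Keras default); `dense_params` is a hypothetical helper written for this check, not a Keras function.

```python
def dense_params(n_inputs, n_units, use_bias=True):
    """Number of weights (plus optional biases) in a fully connected layer."""
    return n_inputs * n_units + (n_units if use_bias else 0)

# Shapes taken from the summary: pooled features (2048) -> dense_1 (512) -> dense_2 (9)
dense_1 = dense_params(2048, 512)
dense_2 = dense_params(512, 9)
trainable = dense_1 + dense_2

# Total reported by the summary; the remainder belongs to the frozen base
total = 22_856_489
non_trainable = total - trainable

print(dense_1, dense_2, trainable, non_trainable)
```

The values agree with the `Param #` column for `dense_1` and `dense_2` and with the trainable and non-trainable totals printed in the summary.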

# Compile with Adam

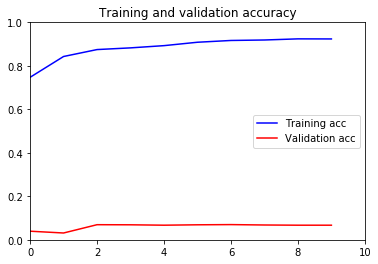

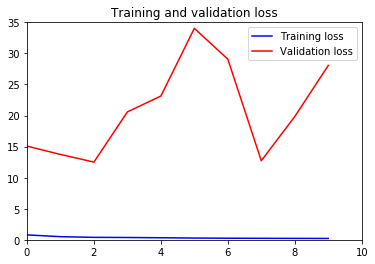

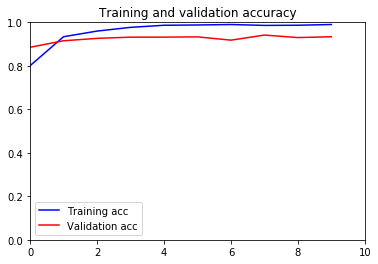

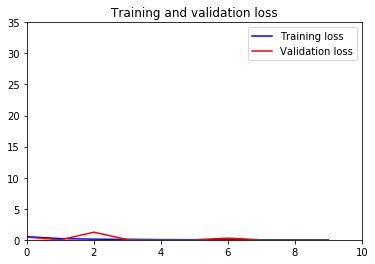

inceptionV3Model.compile(Adam(lr=.001), loss='categorical_crossentropy', metrics=['accuracy'])

After seeing how terrible the performance was, I tried increasing the learning rate, but saw little impact. I started at .0001 and increased it to the current .001. Performance was slightly better, but still far from satisfactory.

# Monitor 'val_accuracy', the name under which the validation metric appears in the training log
checkpoint = ModelCheckpoint("inception_v3_1.h5", monitor='val_accuracy', verbose=2, save_best_only=True, save_weights_only=False, mode='auto', period=1)

early = EarlyStopping(monitor='val_accuracy', min_delta=0, patience=3, verbose=2, mode='auto')

inceptionV3Hist = inceptionV3Model.fit_generator(generator=train_generator,
                                                 validation_data=val_generator,
                                                 epochs=10,
                                                 callbacks=[checkpoint, early])

Epoch 1/10

87/87 [==============================] - 1201s 14s/step - loss: 0.7889 - accuracy: 0.7473 - val_loss: 15.0749 - val_accuracy: 0.0396

Epoch 2/10

87/87 [==============================] - 1198s 14s/step - loss: 0.5040 - accuracy: 0.8430 - val_loss: 13.7417 - val_accuracy: 0.0313

Epoch 3/10

87/87 [==============================] - 1197s 14s/step - loss: 0.3945 - accuracy: 0.8749 - val_loss: 12.5104 - val_accuracy: 0.0695

Epoch 4/10

87/87 [==============================] - 1198s 14s/step - loss: 0.3710 - accuracy: 0.8827 - val_loss: 20.5829 - val_accuracy: 0.0690

Epoch 5/10

87/87 [==============================] - 1197s 14s/step - loss: 0.3303 - accuracy: 0.8929 - val_loss: 23.1406 - val_accuracy: 0.0672

Epoch 6/10

87/87 [==============================] - 1212s 14s/step - loss: 0.2823 - accuracy: 0.9086 - val_loss: 34.0384 - val_accuracy: 0.0690

Epoch 7/10

87/87 [==============================] - 1200s 14s/step - loss: 0.2553 - accuracy: 0.9167 - val_loss: 29.0741 - val_accuracy: 0.0699

Epoch 8/10

87/87 [==============================] - 1206s 14s/step - loss: 0.2426 - accuracy: 0.9189 - val_loss: 12.7246 - val_accuracy: 0.0681

Epoch 9/10

87/87 [==============================] - 1220s 14s/step - loss: 0.2283 - accuracy: 0.9241 - val_loss: 19.8445 - val_accuracy: 0.0672

Epoch 10/10

87/87 [==============================] - 1201s 14s/step - loss: 0.2182 - accuracy: 0.9237 - val_loss: 28.0628 - val_accuracy: 0.0672
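As a sanity check on the log above, the logged `val_accuracy` values can be replayed through a patience rule with `patience=3` and `min_delta=0`, the settings passed to `EarlyStopping`. This is a minimal sketch of the patience logic only, not Keras's implementation; `early_stop_epoch` is a hypothetical helper, and it assumes that higher values count as improvement.

```python
def early_stop_epoch(values, patience=3, min_delta=0.0):
    """Return the 1-indexed epoch at which a patience rule would stop, or None."""
    best = float("-inf")
    wait = 0
    for epoch, value in enumerate(values, start=1):
        if value - min_delta > best:
            # Improvement: remember it and reset the counter
            best = value
            wait = 0
        else:
            # No improvement: stop once the counter reaches the patience limit
            wait += 1
            if wait >= patience:
                return epoch
    return None

# val_accuracy per epoch, copied from the training log
val_accuracy = [0.0396, 0.0313, 0.0695, 0.0690, 0.0672,
                0.0690, 0.0699, 0.0681, 0.0672, 0.0672]

stop_epoch = early_stop_epoch(val_accuracy, patience=3)
print(stop_epoch)
```

Under these assumptions the rule would halt after epoch 6, whereas the logged run continued through epoch 10. That suggests the callback was not actually tracking this metric during that run. One possible explanation, which is an assumption rather than something the log confirms, is a mismatch between the monitored name and the name under which the metric was logged.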

inceptionV3Model.save(r"C:\Users\fairwemr\ISA 480\FinalProject\inceptionV3Model.h5")

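# Note: History.model is the trained model itself, so this writes a second copy of the model, not the training history
inceptionV3Hist.model.save(r"C:\Users\fairwemr\ISA 480\FinalProject\inceptionV3Hist.h5")

inceptionV3Hist_acc = inceptionV3Hist.history['accuracy']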

inceptionV3Hist_val_acc = inceptionV3Hist.history['val_accuracy']

inceptionV3Hist_loss = inceptionV3Hist.history['loss']

inceptionV3Hist_val_loss = inceptionV3Hist.history['val_loss']

inceptionV3Hist_epochs = range(len(inceptionV3Hist_acc))

plt.axis([0, 10, 0, 1])

plt.plot(inceptionV3Hist_epochs, inceptionV3Hist_acc, 'b', label='Training acc')

plt.plot(inceptionV3Hist_epochs, inceptionV3Hist_val_acc, 'r', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(inceptionV3Hist_epochs, inceptionV3Hist_loss, 'b', label='Training loss')

plt.plot(inceptionV3Hist_epochs, inceptionV3Hist_val_loss, 'r', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.axis([0, 10, 0, 35])

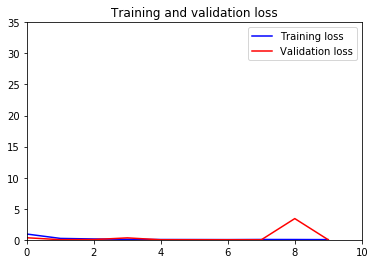

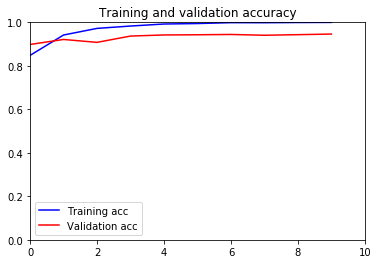

plt.show()

As you can see, the performance of the Inception V3 model is absolutely terrible. Training accuracy climbs above 92%, yet validation accuracy never rises above 7%. I was very perplexed as to how the model could overfit the training data to the point where accuracy on the validation data was basically zero. The two data sets shouldn't be that different; even with an overfit model, I would expect halfway decent performance on the held-out data. That is obviously not the case.
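To put the validation numbers in context, they can be compared against the accuracy of guessing uniformly at random over the nine output classes. This is a rough sketch using the values from the training log; the uniform-guess baseline is an assumption, and a different baseline (for example, always predicting the most common class) would give a different reference point.

```python
# Number of output classes, taken from the final Dense layer: (None, 9)
n_classes = 9
chance = 1 / n_classes

# Per-epoch accuracies copied from the training log
train_acc = [0.7473, 0.8430, 0.8749, 0.8827, 0.8929,
             0.9086, 0.9167, 0.9189, 0.9241, 0.9237]
val_acc = [0.0396, 0.0313, 0.0695, 0.0690, 0.0672,
           0.0690, 0.0699, 0.0681, 0.0672, 0.0672]

best_val = max(val_acc)
# Gap between training and validation accuracy at each epoch
gap = [t - v for t, v in zip(train_acc, val_acc)]

print(f"chance accuracy:          {chance:.4f}")
print(f"best validation accuracy: {best_val:.4f}")
print(f"below chance:             {best_val < chance}")
print(f"final train/val gap:      {gap[-1]:.4f}")
```

A validation accuracy that stays below the uniform-guess level while training accuracy keeps rising looks different from ordinary overfitting, so it may be worth checking that the training and validation inputs go through the same preprocessing before concluding that the architecture is at fault.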

After hours of searching and rerunning the model with different image augmentations, learning rates, and even different architectures and layers, this was the BEST performance I achieved. Many of the preliminary models had training accuracies of only about 20%. The only explanation I could find from other people with similar issues was that the model itself is just not well suited to what I need. Many encouraged trying the VGG16 model, which is also mentioned in the original paper, because its performance was much better even without fine-tuning parameters.

#Get the convolutional part of a VGG network trained on ImageNet

model_vgg16_conv = VGG16(weights='imagenet', include_top=False)

model_vgg16_conv.summary()

Model: "vgg16"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

input_2 (InputLayer) (None, None, None, 3) 0

_________________________________________________________________

block1_conv1 (Conv2D) (None, None, None, 64) 1792

_________________________________________________________________

block1_conv2 (Conv2D) (None, None, None, 64) 36928

_________________________________________________________________

block1_pool (MaxPooling2D) (None, None, None, 64) 0

_________________________________________________________________

block2_conv1 (Conv2D) (None, None, None, 128) 73856

_________________________________________________________________

block2_conv2 (Conv2D) (None, None, None, 128) 147584

_________________________________________________________________

block2_pool (MaxPooling2D) (None, None, None, 128) 0

_________________________________________________________________

block3_conv1 (Conv2D) (None, None, None, 256) 295168

_________________________________________________________________

block3_conv2 (Conv2D) (None, None, None, 256) 590080

_________________________________________________________________

block3_conv3 (Conv2D) (None, None, None, 256) 590080

_________________________________________________________________

block3_pool (MaxPooling2D) (None, None, None, 256) 0

_________________________________________________________________

block4_conv1 (Conv2D) (None, None, None, 512) 1180160

_________________________________________________________________

block4_conv2 (Conv2D) (None, None, None, 512) 2359808

_________________________________________________________________

block4_conv3 (Conv2D) (None, None, None, 512) 2359808

_________________________________________________________________

block4_pool (MaxPooling2D) (None, None, None, 512) 0

_________________________________________________________________

block5_conv1 (Conv2D) (None, None, None, 512) 2359808

_________________________________________________________________

block5_conv2 (Conv2D) (None, None, None, 512) 2359808