Jiachen Li, Xinyao Wang, Sijie Zhu, Chia-wen Kuo, Lu Xu, Fan Chen, Jitesh Jain, Humphrey Shi, Longyin Wen

- [06/07] We released CuMo checkpoints from the pre-training and pre-finetuning stages at CuMo-misc.

- [05/10] Check out the demo built on a Gradio ZeroGPU Space.

- [05/09] Check out the arXiv version of the paper!

- [05/08] We released CuMo: Scaling Multimodal LLM with Co-Upcycled Mixture-of-Experts, along with the project page and code.

- Release

- Contents

- Overview

- Installation

- Model Zoo

- Demo setup

- Getting Started

- Citation

- Acknowledgement

- License

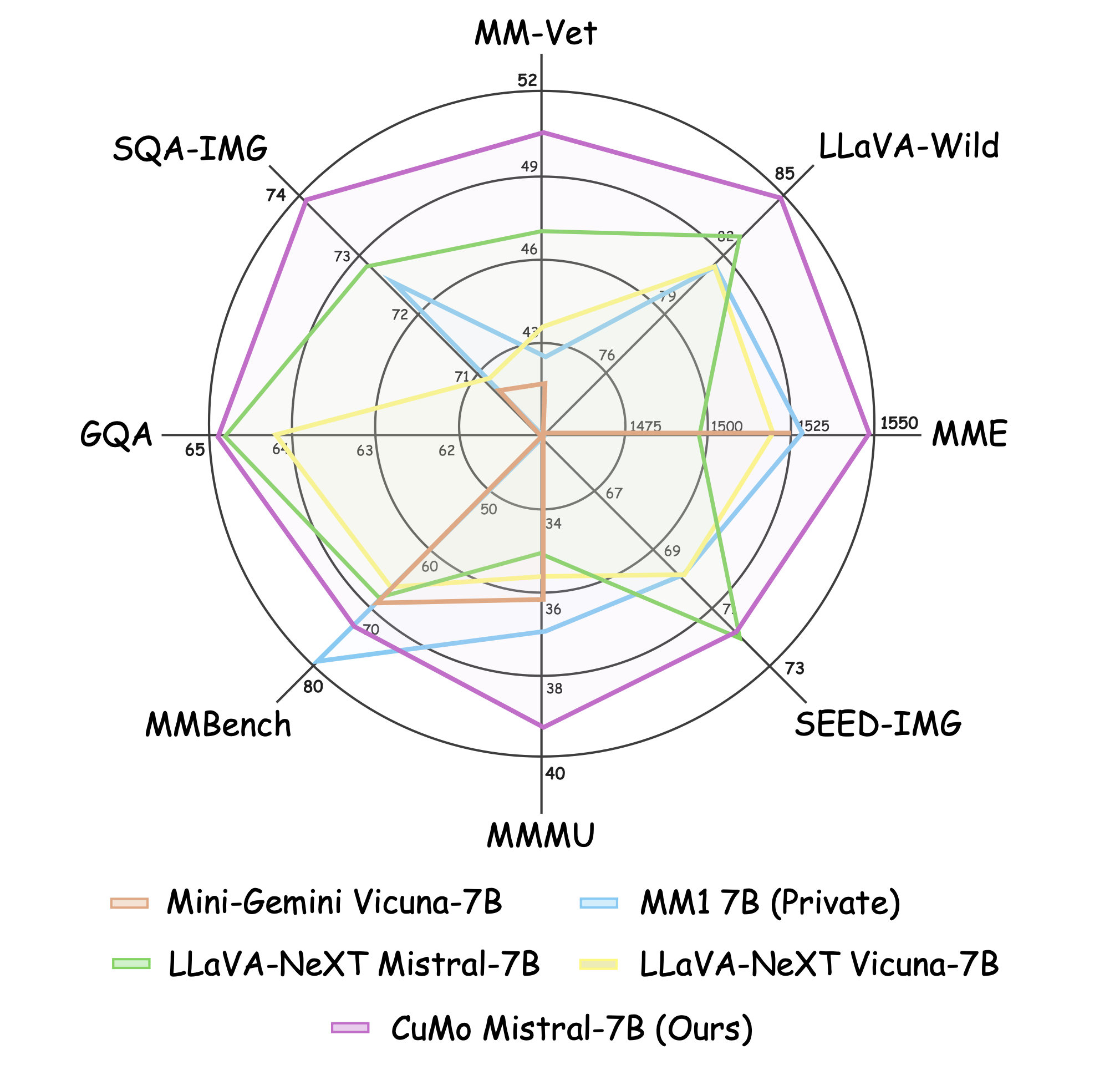

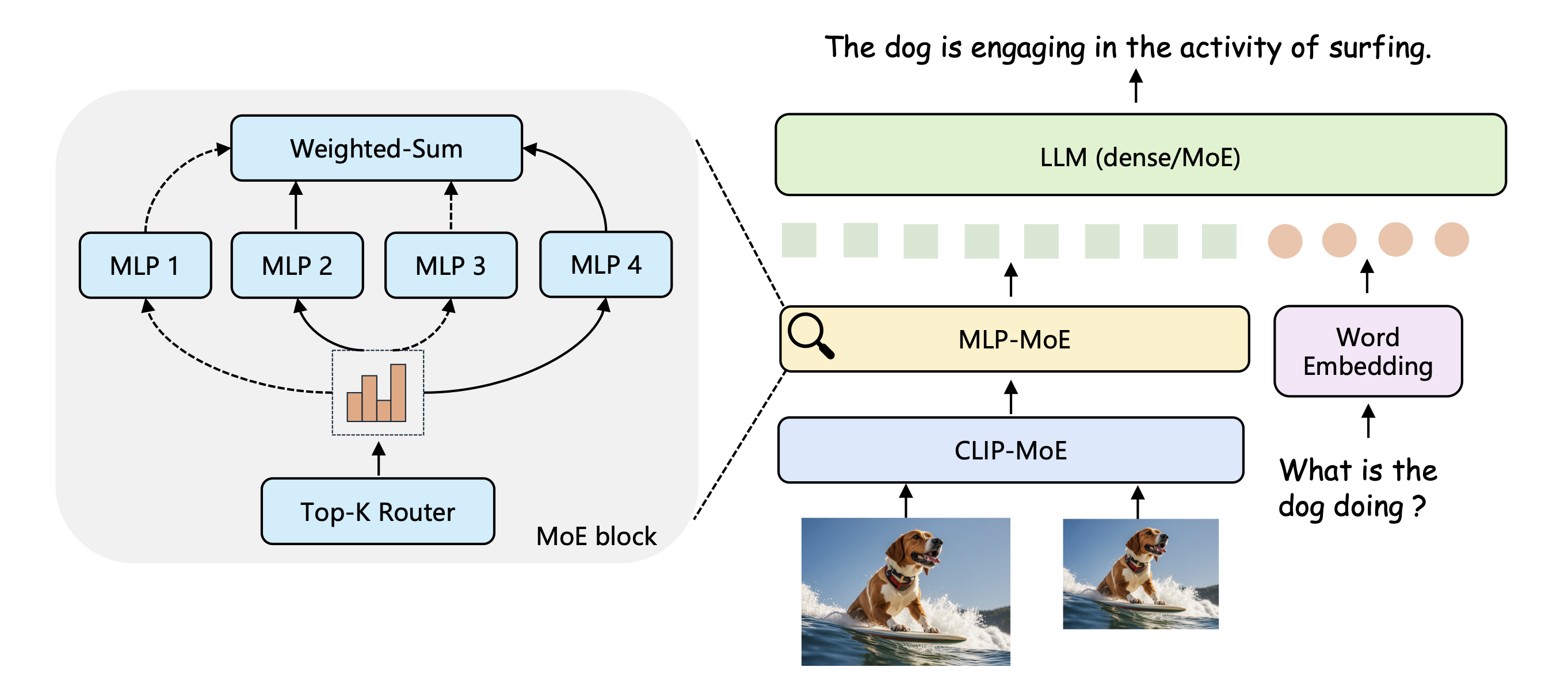

In this project, we study how to use and train Mixture-of-Experts (MoE) in multimodal LLMs. We propose CuMo, which incorporates co-upcycled Top-K sparsely-gated Mixture-of-Experts blocks into both the vision encoder and the MLP connector, thereby enhancing the capabilities of multimodal LLMs. We further adopt a three-stage training approach with auxiliary losses that stabilize training and keep the load balanced across experts. CuMo is trained exclusively on open-source datasets and achieves performance comparable to other state-of-the-art multimodal LLMs on multiple VQA and visual-instruction-following benchmarks.
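The upcycling, Top-K sparse gating, and load-balancing ideas above can be illustrated with a small, dependency-free Python sketch. It is not CuMo's implementation: `dense_mlp`, `co_upcycle`, `top_k_moe`, and `load_balancing_loss` are hypothetical helpers, and the auxiliary loss shown is the Switch Transformer-style load-balancing loss, which may differ from the exact auxiliary losses CuMo uses.

```python
import copy
import math
import random


def matvec(w, x):
    """Multiply matrix w (a list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def dense_mlp(params, x):
    """Two-layer ReLU MLP standing in for a pretrained dense MLP block."""
    hidden = [max(0.0, h) for h in matvec(params["w1"], x)]
    return matvec(params["w2"], hidden)


def co_upcycle(dense_params, num_experts):
    """Upcycling: every expert starts as an exact copy of the pretrained dense MLP."""
    return [copy.deepcopy(dense_params) for _ in range(num_experts)]


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_moe(experts, router_w, x, k=2):
    """Sparse Top-K gating: only the k highest-scoring experts process token x."""
    probs = softmax(matvec(router_w, x))
    top = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize gates over the selected experts
    out = [0.0] * len(experts[0]["w2"])
    for i in top:
        y = dense_mlp(experts[i], x)
        out = [o + (probs[i] / norm) * yi for o, yi in zip(out, y)]
    return out, probs, top


def load_balancing_loss(all_probs, all_top, num_experts):
    """Assumed Switch-style auxiliary loss: E * sum_e f_e * P_e, where
    f_e = fraction of routed slots sent to expert e and
    P_e = mean router probability assigned to expert e."""
    num_tokens = len(all_probs)
    slots = sum(len(t) for t in all_top)
    f = [sum(t.count(e) for t in all_top) / slots for e in range(num_experts)]
    p = [sum(pr[e] for pr in all_probs) / num_tokens for e in range(num_experts)]
    return num_experts * sum(fe * pe for fe, pe in zip(f, p))


# Usage with toy sizes (all values are random, for illustration only).
random.seed(0)
d, hidden, num_experts = 4, 8, 4
dense = {
    "w1": [[random.gauss(0, 0.5) for _ in range(d)] for _ in range(hidden)],
    "w2": [[random.gauss(0, 0.5) for _ in range(hidden)] for _ in range(d)],
}
experts = co_upcycle(dense, num_experts)
router_w = [[random.gauss(0, 0.1) for _ in range(d)] for _ in range(num_experts)]
tokens = [[random.gauss(0, 1) for _ in range(d)] for _ in range(6)]

results = [top_k_moe(experts, router_w, t, k=2) for t in tokens]
aux = load_balancing_loss([r[1] for r in results], [r[2] for r in results], num_experts)
print("aux loss:", round(aux, 4))
```

Because every expert starts as a copy of the same dense MLP and the selected gate weights are renormalized to sum to 1, the MoE block initially reproduces the dense block's output. The experts only diverge once training updates them separately, which is why upcycling acts as a warm start rather than a random initialization.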

- Clone this repo.

```bash
git clone https://github.com/SHI-Labs/CuMo.git
cd CuMo
```

- Install dependencies.

We used a Python 3.9 venv for all experiments; the code should also work with Python 3.9 or 3.10 under Anaconda if you prefer it.

venv:

```bash
python -m venv /path/to/new/virtual/cumo
source /path/to/new/virtual/cumo/bin/activate
```

Anaconda:

```bash
conda create -n cumo python=3.9 -y
conda activate cumo
```

```bash
pip install --upgrade pip
pip install -e .
```

- Install additional packages for training CuMo.

```bash
pip install -e ".[train]"
pip install flash-attn --no-build-isolation
```

The CuMo model weights are open-sourced on Hugging Face:

| Model | Base LLM | Vision Encoder | MLP Connector | Download |
|---|---|---|---|---|
| CuMo-7B | Mistral-7B-Instruct-v0.2 | CLIP-MoE | MLP-MoE | 🤗 HF ckpt |
| CuMo-8x7B | Mixtral-8x7B-Instruct-v0.1 | CLIP-MoE | MLP-MoE | 🤗 HF ckpt |

The intermediate checkpoints from the pre-training and pre-finetuning stages are also released on Hugging Face:

| Model | Base LLM | Stage | Download |
|---|---|---|---|
| CuMo-7B | Mistral-7B-Instruct-v0.2 | Pre-Training | 🤗 HF ckpt |
| CuMo-8x7B | Mixtral-8x7B-Instruct-v0.1 | Pre-Finetuning | 🤗 HF ckpt |

We provide a demo based on a Gradio web UI. You can also set up the demo locally with:

```bash
CUDA_VISIBLE_DEVICES=0 python -m cumo.serve.app \
    --model-path checkpoints/CuMo-mistral-7b
```

You can add `--bits 8` or `--bits 4` to reduce GPU memory usage.

If you prefer to start a demo without a web UI, you can use the following command to run a demo with CuMo-Mistral-7b in your terminal:

```bash
CUDA_VISIBLE_DEVICES=0 python -m cumo.serve.cli \
    --model-path checkpoints/CuMo-mistral-7b \
    --image-file cumo/serve/examples/waterview.jpg
```

You can add `--load-4bit` or `--load-8bit` to reduce GPU memory usage.
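As a rough guide to why the 8-bit and 4-bit options help, the memory needed for the weights alone scales linearly with the number of bits stored per parameter. The sketch below assumes a nominal 7 billion parameters (CuMo-7B's exact count, including its MoE vision encoder and connector, is not given here) and assumes the flags store weights at roughly 8 or 4 bits each; it ignores activations, the KV cache, and quantization overhead such as scale factors. `weight_memory_gib` is a hypothetical helper.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Approximate memory for model weights alone, in GiB (2**30 bytes)."""
    return num_params * bits_per_param / 8 / 2**30


# Assumption: nominal "7B" parameter count, not CuMo-7B's exact size.
NOMINAL_PARAMS = 7e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gib(NOMINAL_PARAMS, bits):.1f} GiB")
```

Under these assumptions, 16-bit weights take roughly 13.0 GiB, 8-bit roughly 6.5 GiB, and 4-bit roughly 3.3 GiB; actual usage during inference will be higher.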

Please refer to Getting Started for dataset preparation, training, and inference details of CuMo.

```bibtex
@article{li2024cumo,
  title={CuMo: Scaling Multimodal LLM with Co-Upcycled Mixture-of-Experts},
  author={Li, Jiachen and Wang, Xinyao and Zhu, Sijie and Kuo, Chia-wen and Xu, Lu and Chen, Fan and Jain, Jitesh and Shi, Humphrey and Wen, Longyin},
  journal={arXiv:},
  year={2024}
}
```

We thank the authors of LLaVA, MoE-LLaVA, S^2, st-moe-pytorch, and mistral-src for releasing their source code.

The checkpoint weights are licensed under CC BY-NC 4.0 for non-commercial use, and the codebase is licensed under Apache 2.0. This project uses certain datasets and checkpoints that are subject to their respective original licenses; users must comply with all terms and conditions of those licenses. The content produced by any version of CuMo is influenced by uncontrollable factors such as randomness, so this project cannot guarantee the accuracy of its output. This project accepts no legal liability for the content of the model output and assumes no responsibility for any losses incurred through the use of associated resources or output results.