This is the official implementation of the paper TS-CAM: Token Semantic Coupled Attention Map for Weakly Supervised Object Localization, which was accepted as an ICCV 2021 poster.

This repository contains PyTorch training code, evaluation code, pretrained models, and Jupyter notebooks for further visualization.

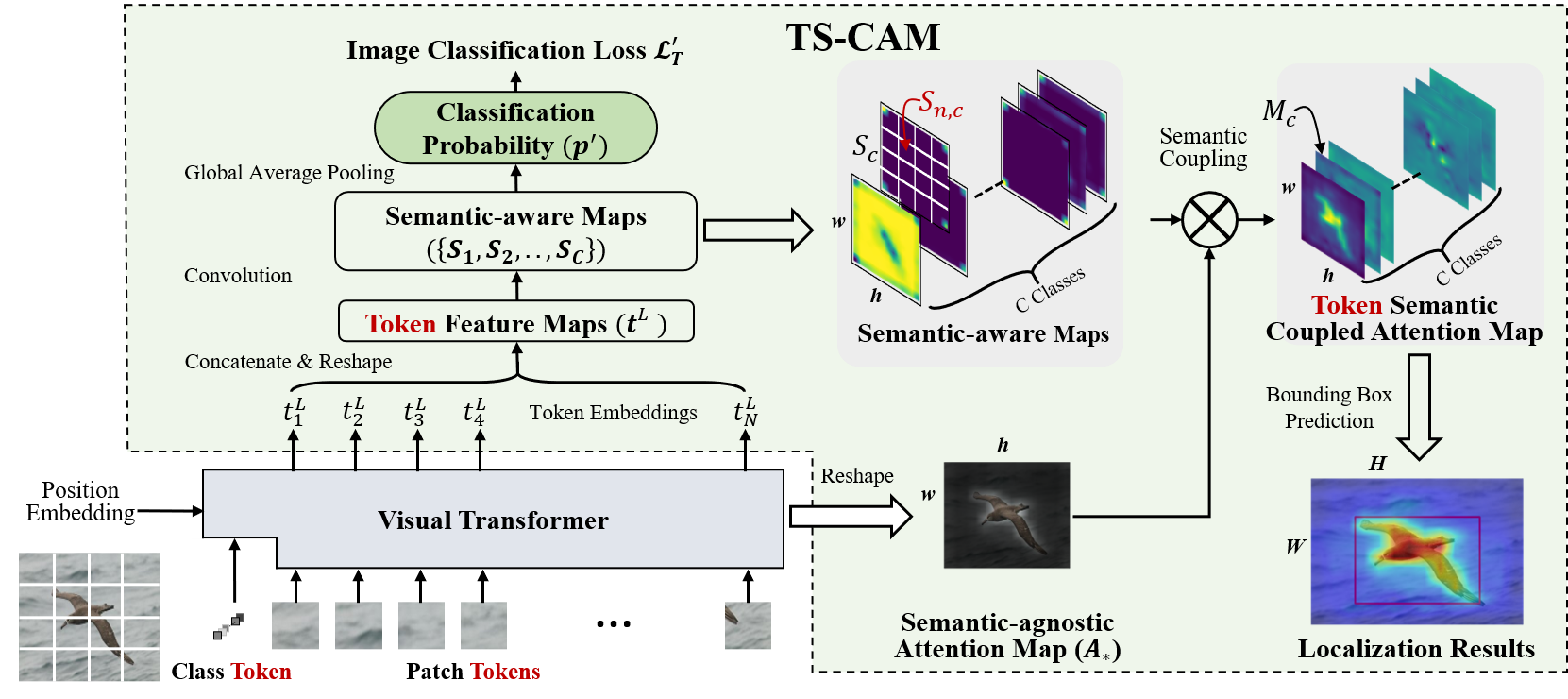

Based on Deit, TS-CAM couples the attention maps of a vision transformer with semantic-aware maps to obtain accurate localization maps (Token Semantic Coupled Attention Maps, TS-CAMs).
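
The coupling described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the repository's implementation: the array names (`cls_attn`, `semantic_maps`), their layouts, the plain averaging over layers and heads, and the min-max normalization are all assumptions made for this example.

```python
import numpy as np

def couple_attention_with_semantics(cls_attn, semantic_maps, class_idx):
    """Combine class-token attention with one class-specific semantic map.

    cls_attn:      (layers, heads, h, w) attention from the class token
                   to every patch (assumed layout).
    semantic_maps: (num_classes, h, w) per-class semantic maps (assumed layout).
    """
    # Semantic-agnostic attention: average over layers and heads
    # (an assumption of this sketch).
    attention = cls_attn.mean(axis=(0, 1))
    # Element-wise coupling with the semantic map of the chosen class.
    coupled = attention * semantic_maps[class_idx]
    # Min-max normalize to [0, 1] so the map can be thresholded or displayed.
    coupled = coupled - coupled.min()
    peak = coupled.max()
    return coupled / peak if peak > 0 else coupled

# Random stand-ins for real model outputs (illustrative sizes only).
rng = np.random.default_rng(0)
cls_attn = rng.random((12, 6, 14, 14))     # 12 layers, 6 heads, 14x14 patches
semantic_maps = rng.random((200, 14, 14))  # 200 classes, as in CUB-200-2011
loc_map = couple_attention_with_semantics(cls_attn, semantic_maps, class_idx=3)
print(loc_map.shape)
```

In practice the coupled map would be upsampled to the image size and thresholded to produce a bounding box; those steps are omitted here.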

- (06/07/2021) Higher performance is reported when using the stronger vision transformer Conformer.

We provide TS-CAM models pretrained on the CUB-200-2011 and ImageNet_ILSVRC2012 datasets.

Models trained on CUB-200-2011:

| Backbone | Loc.Acc@1 | Loc.Acc@5 | Loc.Gt-Known | Cls.Acc@1 | Cls.Acc@5 | Baidu Drive | Google Drive |
|---|---|---|---|---|---|---|---|
| Deit-T | 64.5 | 80.9 | 86.4 | 72.9 | 91.9 | model | model |
| Deit-S | 71.3 | 83.8 | 87.7 | 80.3 | 94.8 | model | model |
| Deit-B-384 | 75.8 | 84.1 | 86.6 | 86.8 | 96.7 | model | model |
| Conformer-S | 77.2 | 90.9 | 94.1 | 81.0 | 95.8 | model | model |

Models trained on ILSVRC2012:

| Backbone | Loc.Acc@1 | Loc.Acc@5 | Loc.Gt-Known | Cls.Acc@1 | Cls.Acc@5 | Baidu Drive | Google Drive |
|---|---|---|---|---|---|---|---|
| Deit-S | 53.4 | 64.3 | 67.6 | 74.3 | 92.1 | model | model |

Note: the extraction code for Baidu Drive is gwg7.
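
For reading the metric columns in the tables: this README does not define them, so the sketch below assumes the usual weakly supervised localization convention, in which Loc.Gt-Known counts a prediction as correct when the predicted box overlaps the ground-truth box with an IoU of at least 0.5, and Loc.Acc@1 additionally requires the top-1 predicted class to be correct. The function names are hypothetical and are not taken from the evaluation code.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def localization_hits(pred_box, gt_box, pred_class, gt_class, iou_threshold=0.5):
    """Per-image correctness under the assumed Gt-Known and top-1 criteria."""
    box_ok = box_iou(pred_box, gt_box) >= iou_threshold
    return {"gt_known": box_ok, "loc_top1": box_ok and pred_class == gt_class}

# The box overlaps well, but the predicted class is wrong.
hits = localization_hits((10, 10, 110, 110), (20, 20, 120, 120),
                         pred_class=5, gt_class=7)
print(hits)
```

Averaging these per-image flags over the test set gives the corresponding percentages.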

- On the CUB-200-2011 dataset, we train TS-CAM on one Titan RTX 2080Ti GPU with a batch size of 128 and a learning rate of 5e-5.

- On the ILSVRC2012 dataset, we train TS-CAM on four Titan RTX 2080Ti GPUs with a batch size of 256 and a learning rate of 5e-4.

First clone the repository locally:

    git clone https://github.com/vasgaowei/TS-CAM.git

Then install PyTorch 1.7.0+, torchvision 0.8.0+, and pytorch-image-models (timm) 0.3.2:

    conda create -n pytorch1.7 python=3.6
    conda activate pytorch1.7
    conda install anaconda
    conda install pytorch==1.7.0 torchvision==0.8.0 torchaudio==0.7.0 cudatoolkit=10.2 -c pytorch
    pip install timm==0.3.2

Please download and extract the CUB-200-2011 dataset.

The directory structure is as follows:

    TS-CAM/
      data/
        CUB-200-2011/
          attributes/
          images/
          parts/
          bounding_boxes.txt
          classes.txt
          image_class_labels.txt
          images.txt
          image_sizes.txt
          README
          train_test_split.txt
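
Before training, it can help to confirm that the extracted dataset matches the layout above. The following is a small, hypothetical helper (not part of this repository) that simply checks for every entry listed in the tree; which of these files the training code actually reads is not verified here.

```python
from pathlib import Path

# Entries copied from the directory listing above.
EXPECTED_CUB_ENTRIES = [
    "attributes",
    "images",
    "parts",
    "bounding_boxes.txt",
    "classes.txt",
    "image_class_labels.txt",
    "images.txt",
    "image_sizes.txt",
    "README",
    "train_test_split.txt",
]

def missing_cub_entries(root):
    """Return the expected entries that are absent under `root`."""
    root = Path(root)
    return [name for name in EXPECTED_CUB_ENTRIES if not (root / name).exists()]

# Path relative to the TS-CAM/ checkout, as in the listing above.
missing = missing_cub_entries("data/CUB-200-2011")
if missing:
    print("Missing entries:", ", ".join(missing))
else:
    print("CUB-200-2011 layout looks complete.")
```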

Download the ILSVRC2012 dataset and extract the train and val images.

The directory structure is organized as follows:

    TS-CAM/
      data/
        ImageNet_ILSVRC2012/
          train/
            n01440764/
              n01440764_18.JPEG
              ...
            n01514859/
              n01514859_1.JPEG
              ...
          val/
            n01440764/
              ILSVRC2012_val_00000293.JPEG
              ...
            n01531178/
              ILSVRC2012_val_00000570.JPEG
              ...
          ILSVRC2012_list/
            train.txt
            val_folder.txt
            val_folder_new.txt

The training and validation images are expected to be in the train/ and val/ folders, respectively.
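
As a quick sanity check of the ImageNet layout, the sketch below counts the class folders in each split and reports any missing list files. It is a hypothetical helper, not part of this repository, and it assumes the folder and file names shown in the listing above.

```python
from pathlib import Path

# List files named in the directory listing above.
LIST_FILES = ["train.txt", "val_folder.txt", "val_folder_new.txt"]

def summarize_ilsvrc(root):
    """Count class folders per split and collect missing list files."""
    root = Path(root)
    summary = {}
    for split in ("train", "val"):
        split_dir = root / split
        # Each class is a sub-folder named by its WordNet ID, e.g. n01440764.
        if split_dir.is_dir():
            summary[split] = sum(1 for p in split_dir.iterdir() if p.is_dir())
        else:
            summary[split] = 0
    list_dir = root / "ILSVRC2012_list"
    summary["missing_lists"] = [f for f in LIST_FILES if not (list_dir / f).is_file()]
    return summary

# ILSVRC2012 has 1000 classes, so a complete copy should report
# 1000 folders for both splits and no missing list files.
print(summarize_ilsvrc("data/ImageNet_ILSVRC2012"))
```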

To train on the CUB-200-2011 dataset:

    bash train_val_cub.sh ${GPU_ID} ${NET} ${NET_SCALE} ${SIZE}

To train on the ImageNet-1k (ILSVRC2012) dataset:

    bash train_val_ilsvrc.sh ${GPU_ID} ${NET} ${NET_SCALE} ${SIZE}

Please note that the ImageNet-1k pretrained weights of Deit-tiny, Deit-small, and Deit-base are downloaded the first time you train a model, so an Internet connection is required.

To evaluate on the CUB-200-2011 dataset:

    bash val_cub.sh ${GPU_ID} ${NET} ${NET_SCALE} ${SIZE} ${MODEL_PATH}

To evaluate on the ImageNet-1k (ILSVRC2012) dataset:

    bash val_ilsvrc.sh ${GPU_ID} ${NET} ${NET_SCALE} ${SIZE} ${MODEL_PATH}

- GPU_ID must be specified; multiple GPUs can be used to accelerate training and evaluation.

- NET should be either deit or conformer.

- NET_SCALE should be one of tiny, small, or base.

- SIZE should be either 224 or 384.

- MODEL_PATH is the path to the pretrained model.
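
To avoid launching a run with a mistyped argument, the allowed values above can be checked before the script is called. The wrapper below is a hypothetical convenience, not part of this repository: it only validates against the values listed here and assembles the command string. It does not know which combinations of NET, NET_SCALE, and SIZE the scripts actually support, and it passes GPU_ID through unchanged because the format for multiple GPUs is not specified in this section.

```python
import shlex

VALID_NETS = {"deit", "conformer"}
VALID_SCALES = {"tiny", "small", "base"}
VALID_SIZES = {224, 384}

def build_command(script, gpu_id, net, net_scale, size, model_path=None):
    """Validate the arguments and return the shell command as a string."""
    if net not in VALID_NETS:
        raise ValueError(f"NET must be one of {sorted(VALID_NETS)}, got {net!r}")
    if net_scale not in VALID_SCALES:
        raise ValueError(f"NET_SCALE must be one of {sorted(VALID_SCALES)}, got {net_scale!r}")
    if size not in VALID_SIZES:
        raise ValueError(f"SIZE must be one of {sorted(VALID_SIZES)}, got {size!r}")
    args = ["bash", script, str(gpu_id), net, net_scale, str(size)]
    # MODEL_PATH is only used by the evaluation scripts.
    if model_path is not None:
        args.append(str(model_path))
    # Quote each argument so paths with spaces survive the shell.
    return " ".join(shlex.quote(arg) for arg in args)

command = build_command("train_val_cub.sh", "0", "deit", "small", 224)
print(command)
```

The resulting string can be pasted into a terminal or passed to `subprocess.run(command, shell=True)` from the repository root.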

We provide Jupyter notebooks in the tools_cam folder.

    TS-CAM/
      tools_cam/
        visualization_attention_map_cub.ipynb
        visualization_attention_map_imaget.ipynb

Please download the pretrained TS-CAM model weights and try more visualization results (attention maps using our method and the Attention Rollout method). You can also try other interesting images of your choice to show their localization maps (TS-CAMs).

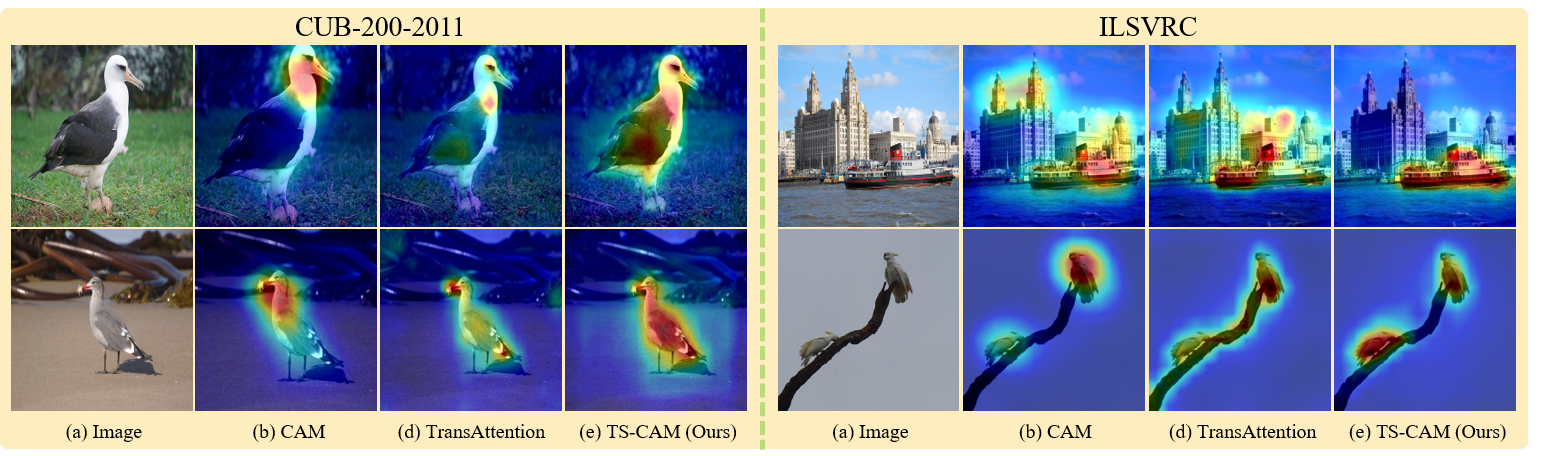

We provide some visualization results as follows.

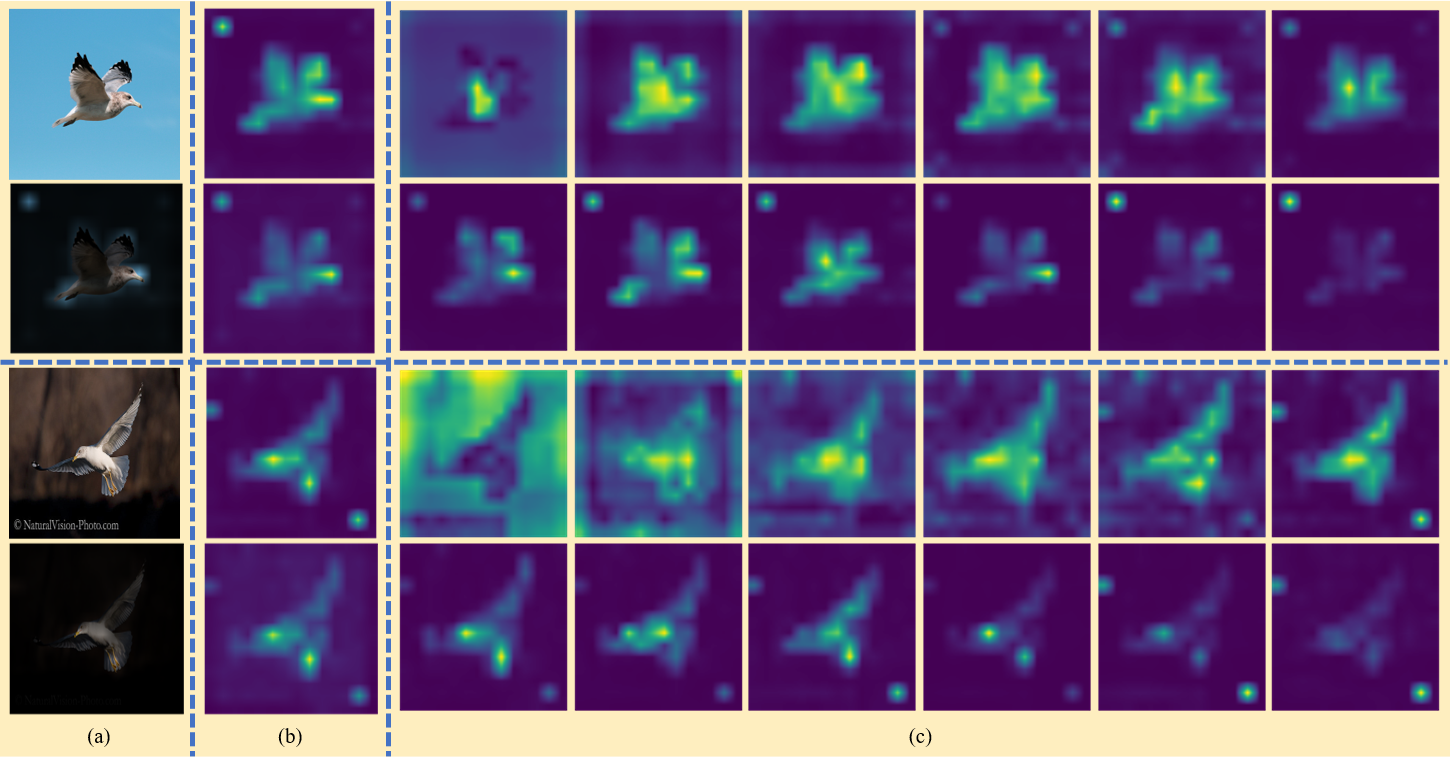

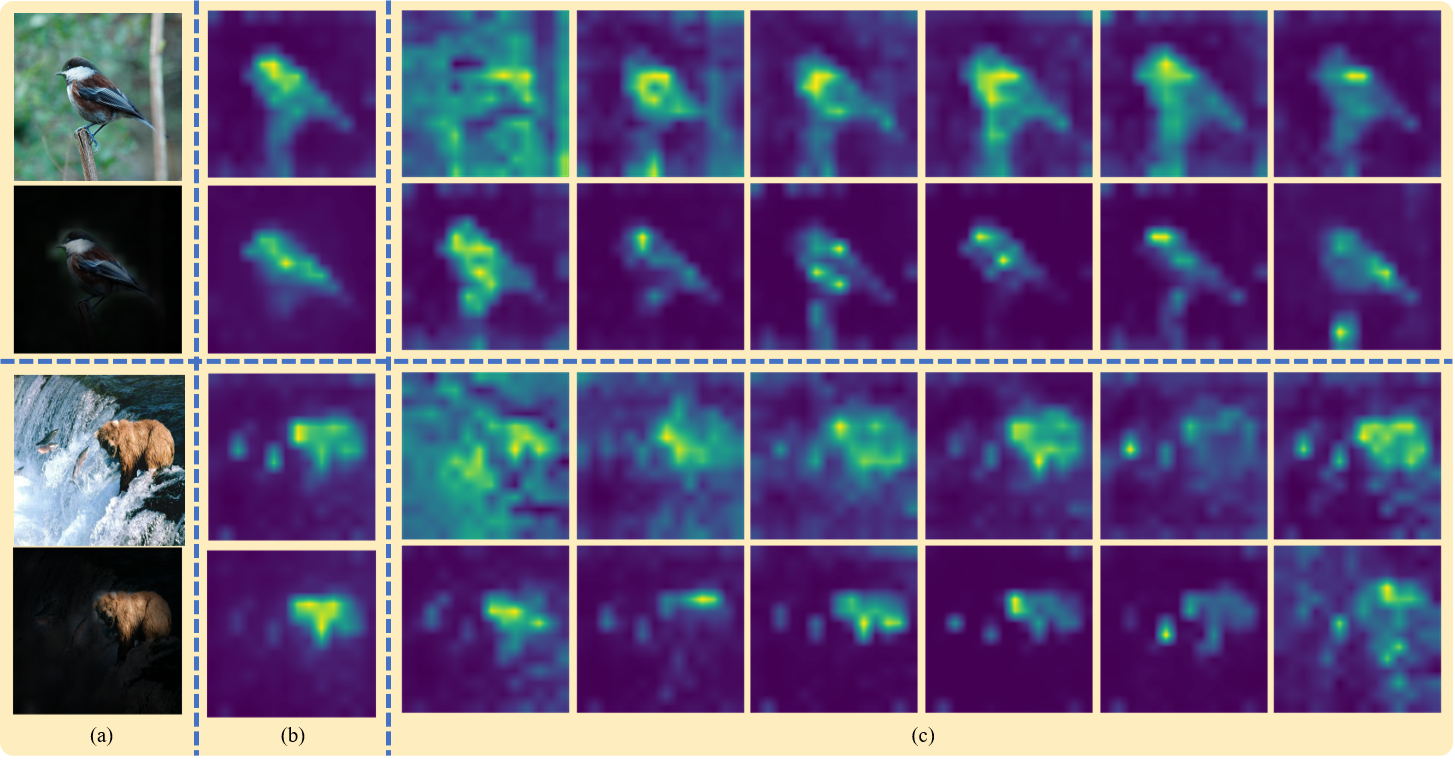

We can also visualize attention maps from different transformer layers.
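
For readers who want to see what per-layer maps and the Attention Rollout baseline involve, here is a minimal NumPy sketch. It is not the notebook code: the array layout, the class token sitting at index 0, and the averaging over heads are assumptions, and random row-normalized matrices stand in for real attention weights.

```python
import numpy as np

def cls_maps_per_layer(attn, grid):
    """Class-token attention to the patch tokens, one map per layer.

    attn: (layers, heads, tokens, tokens), with token 0 as the class
          token (assumed layout).
    """
    cls_to_patches = attn.mean(axis=1)[:, 0, 1:]  # average heads, drop class token
    return cls_to_patches.reshape(-1, grid, grid)

def attention_rollout(attn):
    """Attention Rollout: multiply residual-adjusted attention across layers."""
    num_tokens = attn.shape[-1]
    identity = np.eye(num_tokens)
    rollout = identity
    for layer_attn in attn:
        fused = layer_attn.mean(axis=0)        # average over heads
        fused = 0.5 * fused + 0.5 * identity   # account for the residual connection
        fused = fused / fused.sum(axis=-1, keepdims=True)
        rollout = fused @ rollout              # accumulate from the first layer upward
    return rollout

# Random stand-in: 12 layers, 6 heads, 1 class token + 14x14 patches.
rng = np.random.default_rng(0)
raw = rng.random((12, 6, 197, 197))
attn = raw / raw.sum(axis=-1, keepdims=True)   # make each row sum to 1

per_layer = cls_maps_per_layer(attn, grid=14)
rollout_map = attention_rollout(attn)[0, 1:].reshape(14, 14)
print(per_layer.shape, rollout_map.shape)
```

Each slice of `per_layer` can be upsampled and overlaid on the input image to compare layers, and `rollout_map` can be displayed the same way.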

If you have any questions about our work or this repository, please don't hesitate to contact us by email.

You can also open an issue under this project.

If you use this code for a paper, please cite:

    @article{Gao2021TSCAMTS,
      title={TS-CAM: Token Semantic Coupled Attention Map for Weakly Supervised Object Localization},
      author={Wei Gao and Fang Wan and Xingjia Pan and Zhiliang Peng and Qi Tian and Zhenjun Han and Bolei Zhou and Qixiang Ye},
      journal={ArXiv},
      year={2021},
      volume={abs/2103.14862}
    }