This dataset consists of 4 parts, totaling ~425,000 curated high quality 1024x1024 synthetic face images.

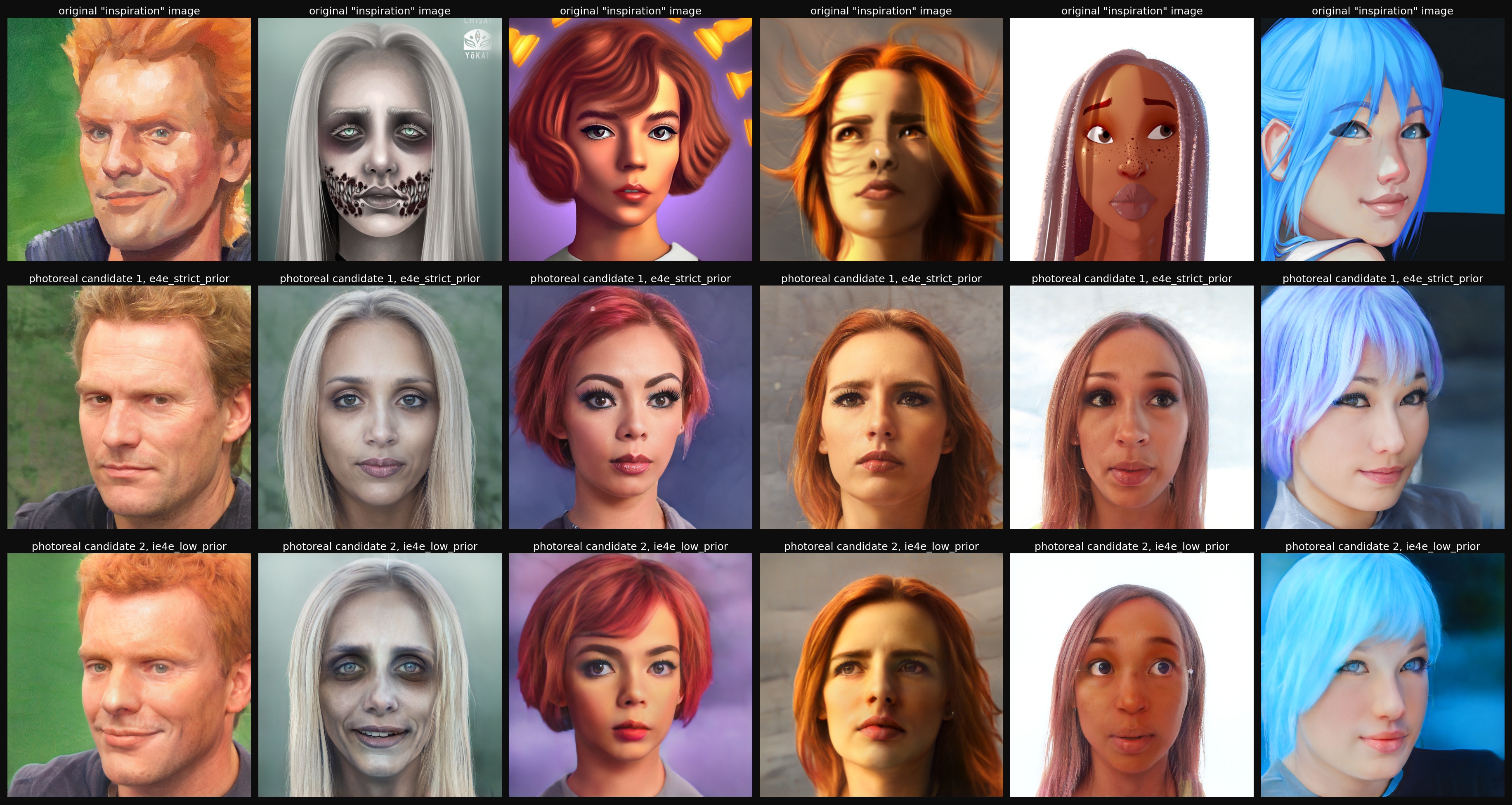

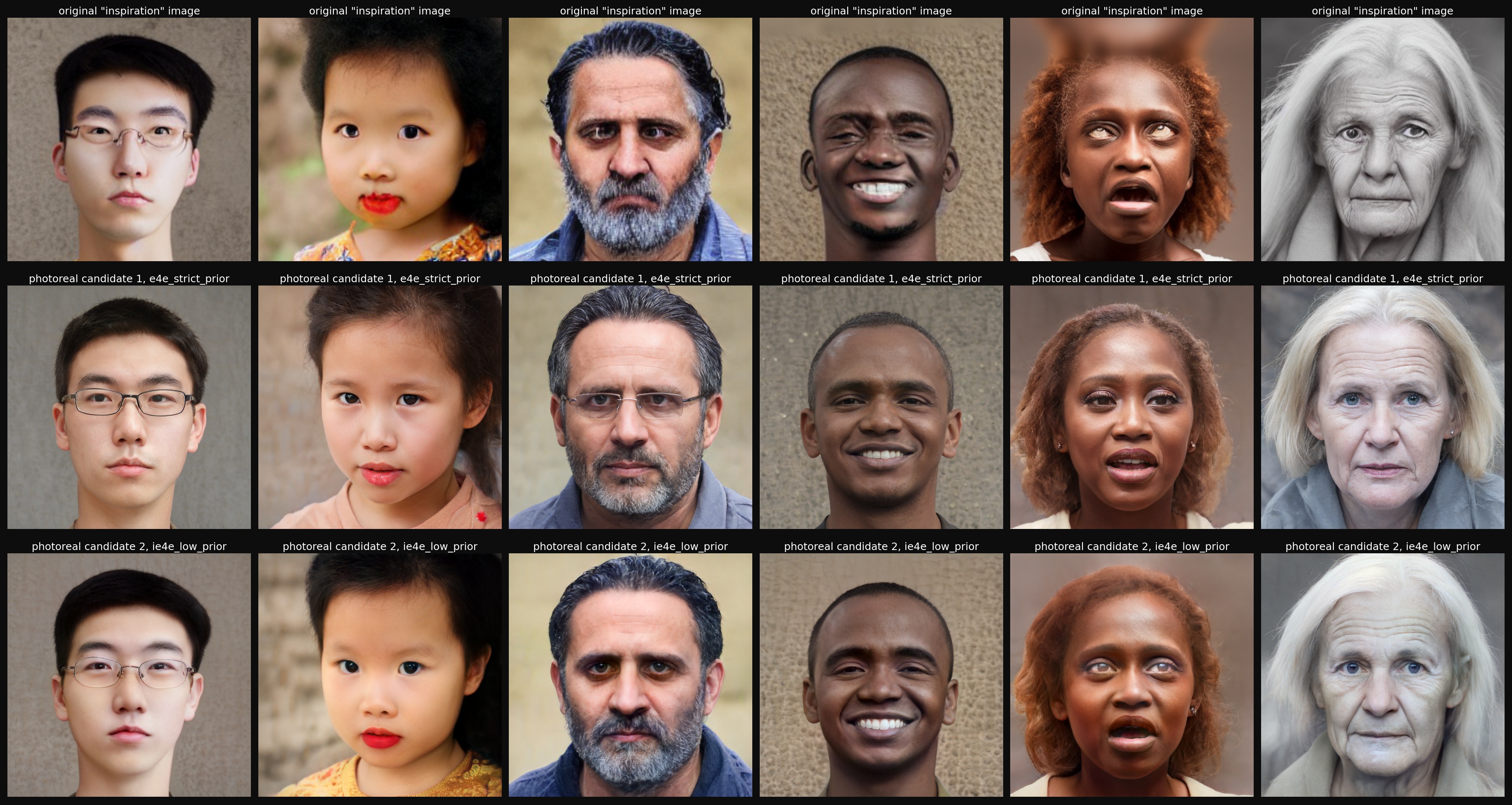

It was created by "bringing to life" and turning to photorealistic face images from multiple "inspiration" sources (paintings, drawings, 3D models, text to image generators, etc) using a process similar to what is described in this short twitter thread. The process involves encoding the images into StyleGAN2 latent space and performing a small manipulation that turns each image into a photo-realistic image. These resulting candidate images are then further curated using a semi-manual semi-automatic process with the help of the lightweight visual taste aprroximator tool

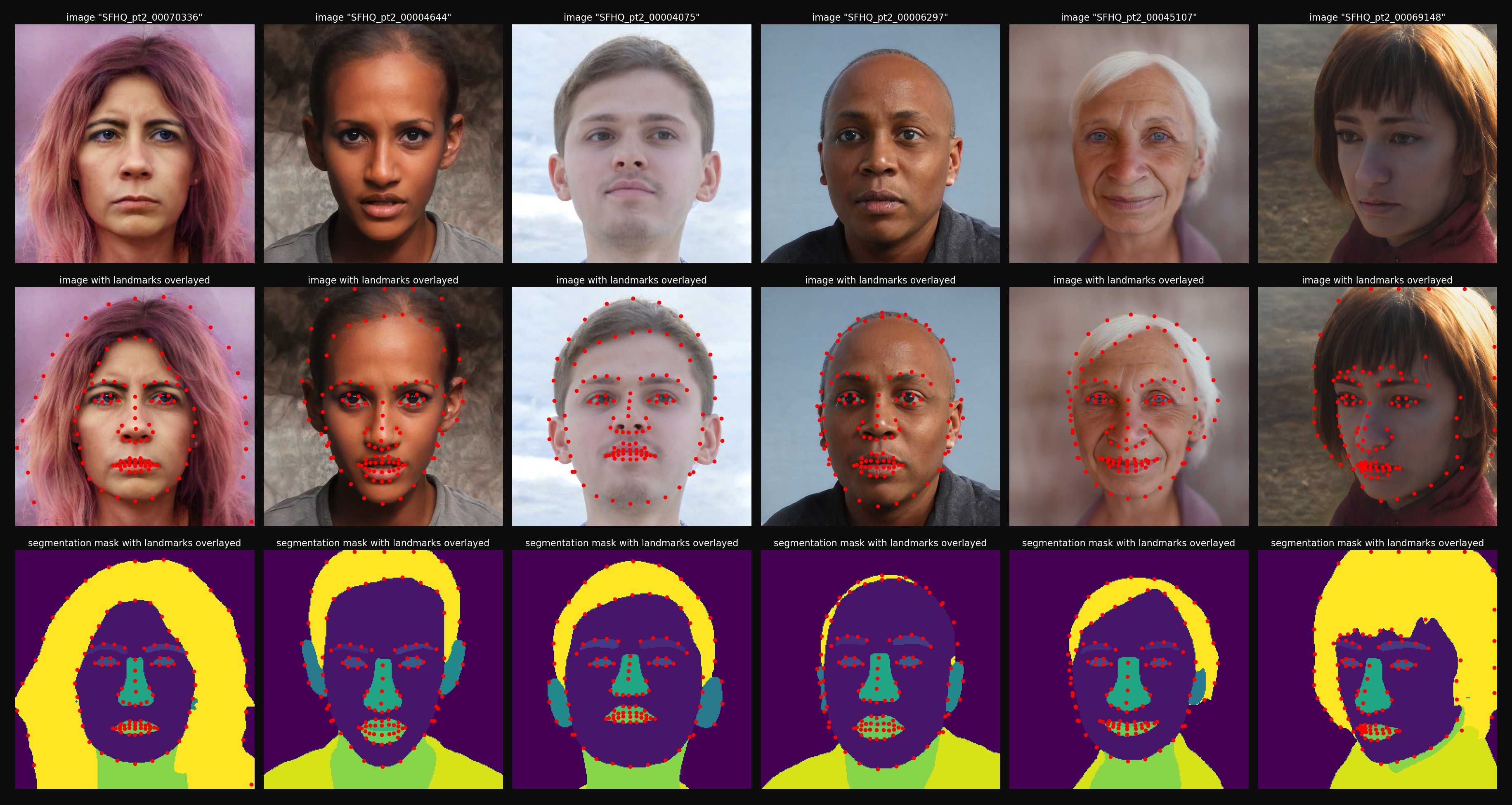

The dataset also contains facial landmarks (an extended set of 110 landmark points) and face parsing semantic segmentation maps. An example script (explore_dataset.py) is provided (live kaggle notebook here) and demonstrates how to access landmarks, segmentation maps, and textually search withing the dataset (with CLIP image/text feature vectors), and also performs some exploratory analysis of the dataset.

Example illustation of landmarks and segmentation maps below:

The dataset can be downloaded via kaggle:

- Part 1 consists of 89,785 HQ 1024x1024 curated face images. It uses "inspiration" images from Artstation-Artistic-face-HQ dataset (AAHQ), Close-Up Humans dataset and UIBVFED dataset.

- Part 2 consists of 91,361 HQ 1024x1024 curated face images. It uses "inspiration" images from Face Synthetics dataset and by sampling from the Stable Diffusion v1.4 text to image generator using varied face portrait prompts.

- Part 3 consists of 118,358 HQ 1024x1024 curated face images. It uses "inspiration" images by sampling from StyleGAN2 mapping network with very high truncation psi coefficients to increase diversity of the generation. Here, the e4e encoder is basically used a new kind of truncation trick.

- Part 4 consists of 125,754 HQ 1024x1024 curated face images. It uses "inspiration" images by sampling from the Stable Diffusion v2.1 text to image generator using varied face portrait prompts.

- The original inspiration images are taken from:

- Artstation-Artistic-face-HQ Dataset (AAHQ) which contains mainly painting, drawing and 3D models of faces (part 1)

- Close-Up Humans Dataset that contains 3D models of faces (part 1)

- UIBVFED Dataset that also contain 3D models of faces (part 1)

- Face Synthetics Dataset which contains 3D models of faces (part 2)

- generated images using stable diffusion v1.4 model using various face portrait prompts that span a wide range of ethnicities, ages, expressions, hairstyles, etc. (part 2)

- StyleGAN2 mapping network sampled with larger that 1 truncation psi values, and then using a new truncation trick in which instead of moving towards the average w_avg vector, we move towards the encoded w_e4e vector to correct the example. illustation is provided in the section below (part 3)

- generated images using stable diffusion v2.1 model using various face portrait prompts that span a wide range of ethnicities, ages, expressions, hairstyles, etc. (part 4)

- Each inspiration image was encoded by encoder4editing (e4e) into StyleGAN2 latent space (StyleGAN2 is a generative face model tained on FFHQ dataset) and multiple candidate images were generated from each inspiration image

- These candidate images were then further curated and verified as being photo-realistic and high quality by a single human (me) and a machine learning assistant model that was trained to approximate my own human judgments and helped me scale myself to asses the quality of all images in the dataset. The code for the tool used for this purpuse can be found here

- Near duplicates and images that were too similar were removed using CLIP features (no two images in the dataset have CLIP similarity score of greater than ~0.92)

- From each image various pre-trained features were extracted and provided here for convenience, in particular CLIP features for fast textual query of the dataset, the feaures are under

pretrained_features/folder - From each image, semantic segmentation maps were extracted using Face Parsing BiSeNet and are provided in the dataset under under

segmentations/folder - From each image, an extended landmark set was extracted that also contain inner and outer hairlines (these are unique landmarks that are usually not extracted by other algorithms). These landmarks were extracted using Dlib, Face Alignment and some post processing of Face Parsing BiSeNet and are provided in the dataset under

landmarks/folder - NOTE: semantic segmentation and landmarks were first calculated on scaled down version of 256x256 images, and then upscaled to 1024x1024

Example of dataset generation process on artistic illustations and paintings taken from AAHQ (part 1):

Example of dataset generation process on 3D models taken from Face Synthetics, Close-Up Humans, and UIBVFED (parts 1 & 2):

Example of dataset generation process of correcting faults in face images generated by Stable Diffusion (parts 2 & 4):

Example of dataset generation process of using the StyleGAN2 mapping network samples with high truncation psi and correcting with e4e encoder (part 3):

we deomonstate the variability of the images in the dataset by textual query of the dataset with CLIP ViT-L/14@336 model embeddings (NOTE: these demonstation images are only of parts 1&2, the dataset with parts 3&4 is much more varied, please try out for yourself or check the script on kaggle):

Additional variability demonstations can be found under images/

Since all images in this dataset are synthetically generated there are no privacy issues or license issues surrounding these images.

Overall the 4 parts of this dataset contain ~425,000 high quality and curated synthetic face images that have no privacy issues or license issues surrounding them.

This dataset contains a high degree of variability on the axes of identity, ethnicity, age, pose, expression, lighting conditions, hair-style, hair-color, facial hair. It lacks variability in accessories axes such as hats or earphones as well as various jewelry. It also doesn't contain any occlusions except the self-occlusion of hair occluding the forehead, the ears and rarely the eyes. This dataset naturally inherits all the biases of it's original datasets (FFHQ, AAHQ, Close-Up Humans, Face Synthetics, LAION-5B) and the StyleGAN2 and Stable Diffusion models.

The purpose of this dataset is to be of sufficiently high quality that new machine learning models can be trained using this data alone or provide meaningful augmentation to other data sources, including the training of generative face models such as StyleGAN. The dataset may be extended from time to time with additional supervision labels (e.g. text descriptions), but no promises.

Hope this is helpful to some of you, feel free to use as you see fit...

@misc{david_beniaguev_2022_SFHQ,

title={Synthetic Faces High Quality (SFHQ) dataset},

url={https://github.com/SelfishGene/SFHQ-dataset},

DOI={10.34740/kaggle/dsv/4737549},

publisher={GitHub},

author={David Beniaguev},

year={2022}

}