Sharath Girish, Kamal Gupta, Abhinav Shrivastava

Official implementation of the paper "EAGLES: Efficient Accelerated 3D Gaussians with Lightweight EncodingS"

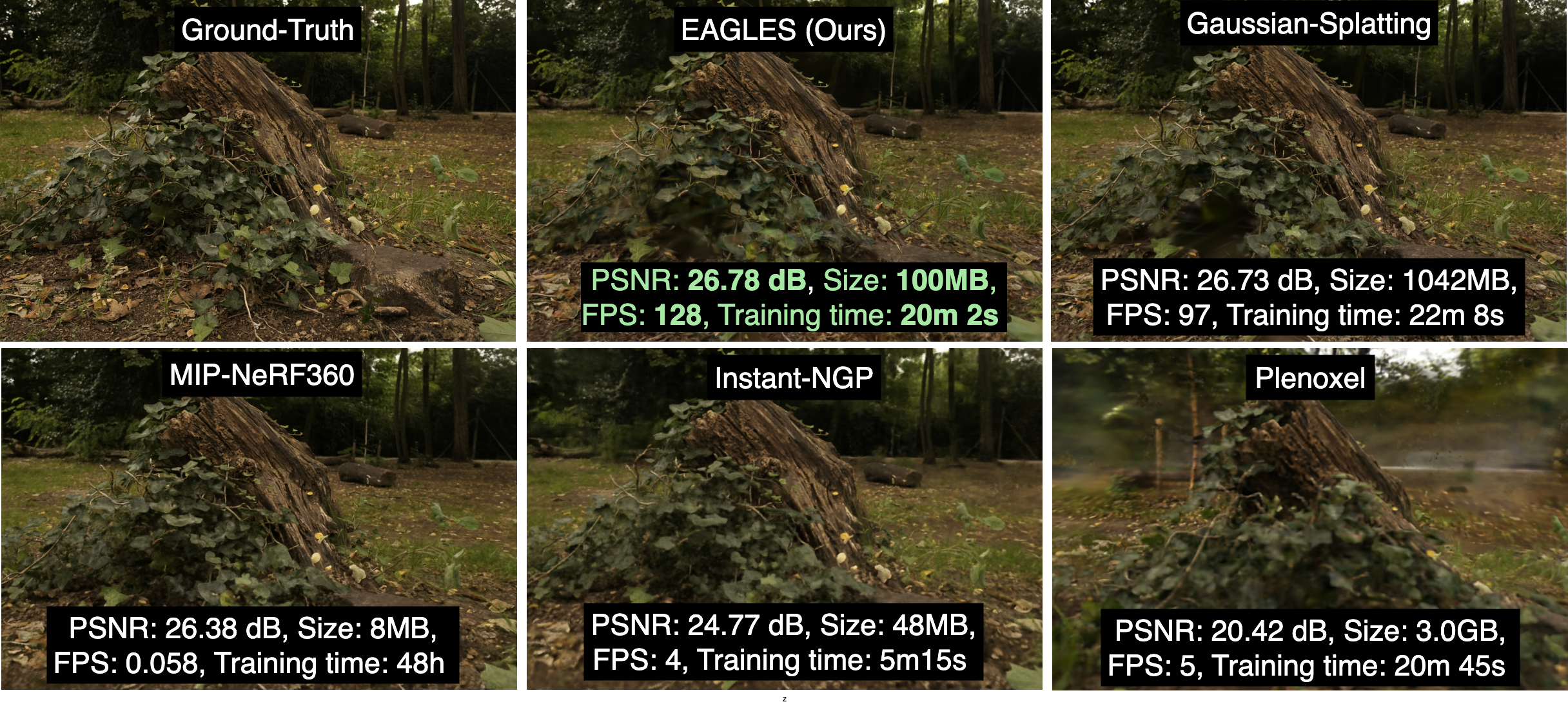

Abstract: Recently, 3D Gaussian splatting (3D-GS) has gained popularity in novel-view scene synthesis. It addresses the challenges of lengthy training times and slow rendering speeds associated with Neural Radiance Fields (NeRFs). Through rapid, differentiable rasterization of 3D Gaussians, 3D-GS achieves real-time rendering and accelerated training. They, however, demand substantial memory resources for both training and storage, as they require millions of Gaussians in their point cloud representation for each scene. We present a technique utilizing quantized embeddings to significantly reduce per-point memory storage requirements and a coarse-to-fine training strategy for a faster and more stable optimization of the Gaussian point clouds. Our approach develops a pruning stage which results in scene representations with fewer Gaussians, leading to faster training times and rendering speeds for real-time rendering of high resolution scenes. We reduce storage memory by more than an order of magnitude all while preserving the reconstruction quality. We validate the effectiveness of our approach on a variety of datasets and scenes preserving the visual quality while consuming 10-20x less memory and faster training/inference speed.

The codebase is built on the 3D Gaussian Splatting (3D-GS) codebase by Kerbl et al. The setup instructions are similar to theirs, with small changes to the libraries. The repository contains submodules, so clone it recursively:

# SSH

git clone git@github.com:Sharath-girish/efficientgaussian.git --recursive

or

# HTTPS

git clone https://github.com/Sharath-girish/efficientgaussian.git --recursive

On Linux, the environment can be set up as

conda env create --file environment.yml

conda activate gaussian_splatting

The components of the codebase have hardware and software requirements similar to those of 3D-GS, but with the updated environment.yml provided here. The config files for the different experiments are provided in the configs folder as YAML files. These files override the default arguments in arguments/__init__.py and can themselves be overridden with command-line arguments. The model with compressible Gaussians is provided at scene/gaussians_sq.py, with the latent decoders at compress/decoders.py.
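The override order described above (built-in defaults, then the YAML config, then command-line arguments) can be illustrated with a minimal sketch. This is not the repository's actual loader: the function name `resolve_arguments` and the dictionary keys are hypothetical, and the config is shown as a plain dict rather than a parsed YAML file.

```python
def resolve_arguments(defaults, config_overrides, cli_overrides):
    """Merge three layers of settings; later layers take precedence."""
    resolved = dict(defaults)
    # Values from the experiment config replace the built-in defaults.
    resolved.update(config_overrides)
    # Only CLI values that were actually supplied (not None) override the config.
    resolved.update({k: v for k, v in cli_overrides.items() if v is not None})
    return resolved


# Illustrative values only; the key names are assumptions.
defaults = {"iterations": 30000, "sh_degree": 3, "white_background": False}
config_overrides = {"iterations": 20000}
cli_overrides = {"iterations": None, "white_background": True}

resolved = resolve_arguments(defaults, config_overrides, cli_overrides)
print(resolved)
```

Here the config changes `iterations`, the command line changes `white_background`, and `sh_degree` keeps its default because neither layer touches it.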

The main training and evaluation script is train_eval.py. The training and testing phases can be skipped with the CLI arguments --skip_train and --skip_test, respectively.

The final compressed object is stored as a pickle file at point_cloud_best/point_cloud_compressed.pkl within the experiment log directory.
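A minimal sketch of how the compressed object could be located and loaded from an experiment log directory is given below. The helper names are hypothetical, and the structure of the unpickled object is not documented here, so the sketch only loads it and reports the file size. Unpickling may require running from the repository root so that any classes referenced by the pickle can be imported, and pickle files should only be loaded from trusted sources.

```python
import pickle
from pathlib import Path


def compressed_point_cloud_path(log_dir):
    """Return the expected location of the compressed object in a log directory."""
    return Path(log_dir) / "point_cloud_best" / "point_cloud_compressed.pkl"


def load_compressed_point_cloud(log_dir):
    """Unpickle the compressed object; its internal layout is not assumed here."""
    with compressed_point_cloud_path(log_dir).open("rb") as f:
        return pickle.load(f)


def compressed_size_mb(log_dir):
    """Size of the compressed file on disk, in megabytes."""
    return compressed_point_cloud_path(log_dir).stat().st_size / 1e6
```

For example, `compressed_size_mb("<path to log directory>")` gives a quick check of the storage footprint after training.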

We use PyTorch 1.12.1 with CUDA 11.3, as we find this configuration works for our experiments. Earlier or later versions might work but are not tested. Training and evaluation require a CUDA-compatible GPU with Compute Capability 7.0+ and will likely consume less than 12 GB RAM for scenes in the MiP-NeRF360 dataset, though usage can increase when also evaluating or if more Gaussians are created based on the densification interval.

To run the training optimizer, simply use

python train_eval.py --config configs/efficient-3dgs.yaml -s <path to COLMAP or NeRF Synthetic dataset> -m <path to log directory>

Standard Command Line Arguments for train_eval.py

Path to the config file which loads the default arguments of the experiment setup

Path to the source directory containing a COLMAP or Synthetic NeRF data set.

Path where the trained model should be stored (output/<random> by default).

Alternative subdirectory for COLMAP images (images by default).

Specifies resolution of the loaded images before training. If provided 1, 2, 4 or 8, uses original, 1/2, 1/4 or 1/8 resolution, respectively. For all other values, rescales the width to the given number while maintaining image aspect. If not set and input image width exceeds 1.6K pixels, inputs are automatically rescaled to this target.

Specifies where to put the source image data, cuda by default, recommended to use cpu if training on large/high-resolution dataset, will reduce VRAM consumption, but slightly slow down training. Thanks to HrsPythonix.

Add this flag to use white background instead of black (default), e.g., for evaluation of NeRF Synthetic dataset.

Order of spherical harmonics to be used (no larger than 3). 3 by default.

Flag to make pipeline compute forward and backward of SHs with PyTorch instead of ours.

Flag to make pipeline compute forward and backward of the 3D covariance with PyTorch instead of ours.

Rerun training even if the completed checkpoint object is present in the experiment logs

Rerun testing even if previously evaluated

Skip training phase

Skip testing phase

Save images during evaluation stage of the train or test camera set

Do not store the trained point cloud at the end (if only obtaining metrics for the run)

Number of total iterations to train for, 30_000 by default.

Flag to omit any text written to standard out pipe.

Spherical harmonics features learning rate, 0.0025 by default.

Opacity learning rate, 0.05 by default.

Scaling learning rate, 0.005 by default.

Rotation learning rate, 0.001 by default.

Number of steps (from 0) where position learning rate goes from initial to final. 30_000 by default.

Initial 3D position learning rate, 0.00016 by default.

Final 3D position learning rate, 0.0000016 by default.

Position learning rate multiplier (cf. Plenoxels), 0.01 by default.

Iteration where densification starts, 500 by default.

Iteration where densification stops, 15_000 by default.

Limit that decides if points should be densified based on 2D position gradient, 0.0002 by default.

How frequently to densify, 100 (every 100 iterations) by default.

How frequently to reset opacity, 3_000 by default.

Influence of SSIM on total loss from 0 to 1, 0.2 by default.

Percentage of scene extent (0--1) a point must exceed to be forcibly densified, 0.01 by default.
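The position learning-rate arguments listed above (initial rate, final rate and number of steps) can be illustrated with a short sketch. It assumes the schedule interpolates log-linearly between the two rates, as in the original 3D-GS code; the function name `position_lr` is hypothetical, and the delay multiplier is omitted for simplicity.

```python
import math


def position_lr(step, lr_init=0.00016, lr_final=0.0000016, max_steps=30_000):
    """Log-linear interpolation from lr_init (step 0) to lr_final (max_steps)."""
    # Clamp progress to [0, 1] so steps beyond max_steps stay at lr_final.
    t = min(max(step / max_steps, 0.0), 1.0)
    return math.exp((1.0 - t) * math.log(lr_init) + t * math.log(lr_final))


for step in (0, 15_000, 30_000):
    print(step, position_lr(step))
```

Under this assumption the rate halfway through training is the geometric mean of the initial and final rates, so most of the decay in absolute terms happens early.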

The train_eval.py script also accepts additional arguments for the hyperparameters of the latent quantization.

Each hyperparameter is a list of 6 attributes: position (xyz), rotation (rotation), scaling (scaling), opacity (opacity), base SH component for color (features_dc), and remaining SH components (features_rest).

As explained in the paper, we compress rotation, opacity and features_rest, as they consume the bulk of the memory and are easily compressible. The hyperparameter for each attribute can then be changed with --{attribute_name}_{hyperparameter_name}. The default hyperparameters are provided in the config file configs/efficient-3dgs.yaml. The hyperparameter arguments available for any given attribute_name are listed below.

Latent Quantization Command Line Arguments for train_eval.py

Quantization type for the attribute. Set to none to disable or sq to quantize

Dimension of the latents

Standard deviation for initialization of latent decoder parameters

LR scaling coefficient of the latents compared to the default uncompressed attribute learning rate.

LR of the latent decoder parameters

Scaling the learning rate of the latents based on the decoder norm. Set to none for no scaling and div to divide learning rate by the decoder norm (typically faster, stable training)

Type of decode matrix. Set to learnable for fully learning decoder matrix parameters and dft to use DFT basis with learnable scaling coefficients.
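To make the role of the latents and the decoder concrete, the following is a rough sketch of the general idea: per-point latents are rounded to integers (scalar quantization) and mapped to attribute values by a linear decoder matrix. It is written in plain Python for readability and is not the code in compress/decoders.py; the function names and example numbers are hypothetical, and the gradient handling needed during training is not shown.

```python
def quantize(latents):
    """Scalar quantization: round every latent value to the nearest integer."""
    return [[round(v) for v in row] for row in latents]


def decode(quantized, decoder):
    """Multiply each quantized latent vector by a (latent_dim x attr_dim) matrix."""
    return [
        [sum(q * w for q, w in zip(row, column)) for column in zip(*decoder)]
        for row in quantized
    ]


# Two points with 2-dimensional latents, decoded to 3-dimensional attributes.
latents = [[0.9, -2.2], [3.4, 0.1]]
decoder = [[0.5, 0.0, 1.0],
           [0.0, 0.25, -1.0]]

quantized = quantize(latents)
attributes = decode(quantized, decoder)
print(quantized)
print(attributes)
```

Only the integer latents and the small decoder need to be stored, which is where the memory saving comes from when the latent dimension is smaller than the attribute dimension.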

Images with width greater than 1600 pixels are automatically resized to 1600 as in 3D-GS. This can be avoided by explicitly specifying resolution -r 1.
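The resizing rule can be summarized in a small sketch. It follows the behaviour described for the resolution argument (factors 1, 2, 4 or 8, an explicit target width otherwise, and an automatic cap at 1600 pixels when nothing is set). The function name `target_size` is hypothetical, and the exact rounding used by the loader may differ.

```python
def target_size(width, height, resolution=None, max_width=1600):
    """Return the (width, height) an image would be loaded at."""
    if resolution in (1, 2, 4, 8):
        # Downscale by a fixed factor; 1 keeps the original size.
        return round(width / resolution), round(height / resolution)
    if resolution is None:
        if width <= max_width:
            return width, height
        # No resolution given and the image is wide: cap the width.
        scale = width / max_width
    else:
        # Any other value is interpreted as the target width in pixels.
        scale = width / resolution
    return round(width / scale), round(height / scale)


print(target_size(4000, 3000))        # automatic cap
print(target_size(4000, 3000, 1))     # -r 1 keeps the original size
print(target_size(4000, 3000, 800))   # explicit target width
```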

The datasets can be obtained from the links provided in the 3D-GS repository: MipNeRF360, Tanks&Temples and Deep Blending

Evaluation executes the same script train_eval.py with the --skip_train option to skip retraining and jump to evaluation.

python train_eval.py --config configs/efficient-3dgs.yaml -s <path to COLMAP or NeRF Synthetic dataset> -m <path to log directory of saved model> --skip_train

The --save_images option uses the render_sets function to render images in the train set (unless --skip_train is specified) and the test set and saves them to disk. The rendering FPS is calculated as well.

Metrics are then evaluated using the evaluate function.
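When running many scenes, the training and evaluation commands can be assembled programmatically. The sketch below only uses flags mentioned in this README (--config, -s, -m, --skip_train, --skip_test, --save_images); the helper name `build_command` and the example paths are hypothetical.

```python
import shlex


def build_command(source_path, log_dir, config="configs/efficient-3dgs.yaml",
                  skip_train=False, skip_test=False, save_images=False):
    """Build the argument list for a train_eval.py run."""
    cmd = ["python", "train_eval.py", "--config", config,
           "-s", source_path, "-m", log_dir]
    if skip_train:
        cmd.append("--skip_train")
    if skip_test:
        cmd.append("--skip_test")
    if save_images:
        cmd.append("--save_images")
    return cmd


# Evaluation-only run for a hypothetical scene and log directory.
eval_cmd = build_command("data/scene", "logs/scene", skip_train=True)
print(shlex.join(eval_cmd))
```

The returned list can be passed directly to `subprocess.run` from the repository root.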

A 360-degree video of the scene can be created by running the render_360.py script with the dataset flag -s and the model directory flag -m.