See it in action

Screencast.from.01-31-2023.02.27.05.PM.webm

Features

- ChatGPT Mode (just say activate chat mode)

- GUI Mode (using Flutter)

- Master Control Mode (yeah, it's your Jarvis Now)

- Voice Feedback

- Desktop Notifications

- Simplest Command Execution Logic (use your own words and map them to a command in lvc-commands.json)

- Accurate Master Voice Matching using speechbrain

- Automated Setup!

- Customization

- Change Your Control System Name

- Voice Feedback Speech Control

- Voice Feedback Speed Control

- Total Execution Control through configuration

- Live Mode (under development; until then, the system listens every x seconds, where x is the record-duration property in lvc-config.json)

- Dynamic Mode

Pro-Tip: Say 'See you later' to turn it off.

Pro-Tip: Say 'Activate master control mode' to turn on master control mode without manual config.

Pro-Tip: Say 'Deactivate master control mode' to turn off master control mode without manual config.

Yes, these are the built-in actions!

Ok! ;)

Let's just see how to set up master control mode:

After installing, run the master_control_mode_setup.py script

python3 master_control_mode_setup.py

You will be asked to speak three times, with only 3 seconds each time. Speak as much as you can, but in your normal tone! After saving the training data, the program exits without actually enabling master control mode.

To enable master control mode, set this property in lvc-config.json to true, or just say 'activate master control mode' at runtime to enable it dynamically for that session only 😉!

{

"master-mode": true

}
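If you'd rather flip that flag from a script than edit the file by hand, here is a minimal sketch. The helper name set_config_value is hypothetical (it is not part of linux-voice-control), and it simply assumes the config is a plain JSON object like the one shown in this README.

```python
import json
from pathlib import Path


def set_config_value(config_path, key, value):
    """Load a JSON config file, set one key, and write the file back.

    Hypothetical helper for illustration; not shipped with the project.
    """
    path = Path(config_path)
    config = json.loads(path.read_text(encoding="utf-8"))
    config[key] = value
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config


# Example call, assuming the installer wrote the config to ~/lvc-config.json:
# set_config_value(Path.home() / "lvc-config.json", "master-mode", True)
```

Setting the same key back to false turns master control mode off again for future sessions.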

Usage / Install

Let's quickly finish the setup

git clone https://github.com/omegaui/linux-voice-control

cd linux-voice-control

./install.sh

The install.sh script will set up your installation of linux-voice-control by installing some dependencies with pip.

The above process finishes by writing the lvc-config.json and lvc-commands.json files to your home directory (~) and the sources to ~/lvc-bin.

This is how your lvc-config.json looks initially ...

{

"name": "alex",

"greeting": "Greetings!",

"record-duration": 3,

"channels": 2,

"rate": 44100,

"chunk-size": 1024,

"notifications-enabled": false,

"show-commands-on-startup": true,

"logs": true,

"speech-threshold": 2500,

"live-mode": false,

"use-hot-word-in-basic-mode": false,

"hot-words": [

"hey alex",

"here alex",

"listen alex"

],

"master-mode": false,

"master-mode-barrier-speech-enabled": true,

"master-mode-barrier-speech": "Unauthorized",

"voice-pitch": 1.0,

"voice-feedback-enabled": true,

"voice-transcription-feedback-enabled": false,

"voice-feedback-speed": 1.35,

"voice-cache-enabled": true,

"voice-feedback-default-speeches": [],

"voice-feedback-transcription-capable-speeches": [

"transcribing...",

"getting it..."

],

"voice-feedback-turning-off": "Turning off linux voice control!"

}

Let's take a look at the game controller ... lvc-commands.json

Here, the blocking property controls whether the voice feedback plays simultaneously with command execution (if set to false) or whether the voice feedback must finish first before the command is executed (if set to true).

{

"open firefox": {

"exec": "firefox",

"feedback": "starting firefox",

"blocking": true

},

"open editor": {

"exec": "gedit",

"feedback": "launching editor",

"blocking": true

},

"lock the screen": {

"exec": "xdg-screensaver lock",

"feedback": "locked",

"blocking": false

},

"open whatsapp": {

"exec": "firefox https://web.whatsapp.com",

"feedback": "opening whatsapp",

"blocking": true

},

"open instagram": {

"exec": "firefox https://instagram.com",

"feedback": "opening instagram",

"blocking": true

},

"write an email": {

"exec": "firefox https://mail.google.com/mail/u/0/#inbox",

"feedback": "opening G Mail",

"blocking": true

}

}

As you can probably infer by now, the keys are the phrases you speak, and their values describe the commands that are executed each time you say those phrases.
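To make the blocking flag concrete, here is a rough sketch of how one entry could be dispatched. This is not the project's actual code: run_command, speak and launch are hypothetical names, and treating a missing blocking key as false is an assumption made for this example.

```python
import shlex
import subprocess
import threading


def run_command(entry, speak, launch=None):
    """Run one lvc-commands.json style entry, honouring its "blocking" flag.

    speak:  callable that plays the feedback text (hypothetical TTS hook).
    launch: callable that starts the program; defaults to subprocess.Popen.
    """
    launch = launch or subprocess.Popen
    feedback = entry.get("feedback")
    worker = None
    if feedback:
        if entry.get("blocking", False):
            # blocking: finish the voice feedback before running the command
            speak(feedback)
        else:
            # non-blocking: speak in the background while the command starts
            worker = threading.Thread(target=speak, args=(feedback,))
            worker.start()
    launch(shlex.split(entry["exec"]))
    if worker is not None:
        worker.join()
```

For a quick dry run without launching anything, pass print for both hooks: run_command({"exec": "gedit", "feedback": "launching editor", "blocking": True}, speak=print, launch=print).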

But wait ... let's understand how it actually works in the background.

Let's take an example of how it accurately matches your actual speech to your commands.

First, if you're really happy to know about this project, then you must thank OpenAI's Whisper; it is the root and the stem of this project becoming a reality. Want to know why? Just click on the link above.

After getting the audio transcription, a fuzzy match is applied against the keys in the lvc-commands.json.

It's just like searching for something on Google and getting the correct results even after misspelling your query.
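The idea can be sketched with Python's standard difflib module. This is only an illustration: the project may well use a different fuzzy-matching library and scoring, and match_command and the 0.6 cutoff are assumptions chosen for this example.

```python
import difflib


def match_command(transcription, commands, cutoff=0.6):
    """Return the command key closest to the transcription, or None.

    Uses difflib as a stand-in for whatever fuzzy matcher the project uses.
    """
    # Normalise case and drop the punctuation a transcriber tends to append.
    text = transcription.lower().strip(" .,!?")
    matches = difflib.get_close_matches(text, list(commands), n=1, cutoff=cutoff)
    return matches[0] if matches else None


commands = {
    "open firefox": {"exec": "firefox"},
    "open editor": {"exec": "gedit"},
    "lock the screen": {"exec": "xdg-screensaver lock"},
}

# A slightly wrong transcription still lands on the intended key.
print(match_command("Open fire fox.", commands))
```

Raising the cutoff makes matching stricter (fewer accidental triggers), while lowering it makes the system more forgiving of transcription errors.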

Your installation is all set for usage (for forking too 😉).

Just run linux-voice-control in the terminal.

Life Saver Tip: Add linux-voice-control-gui to your startup scripts

Future Ideas: Adding Dynamic Voice Control like hey alex ... call Spider-Man (you already know things like that) and most important things discussions

Build from source

Super simple things you know ...

git clone https://github.com/omegaui/linux-voice-control

cd linux-voice-control

pip install -r requirements.txt

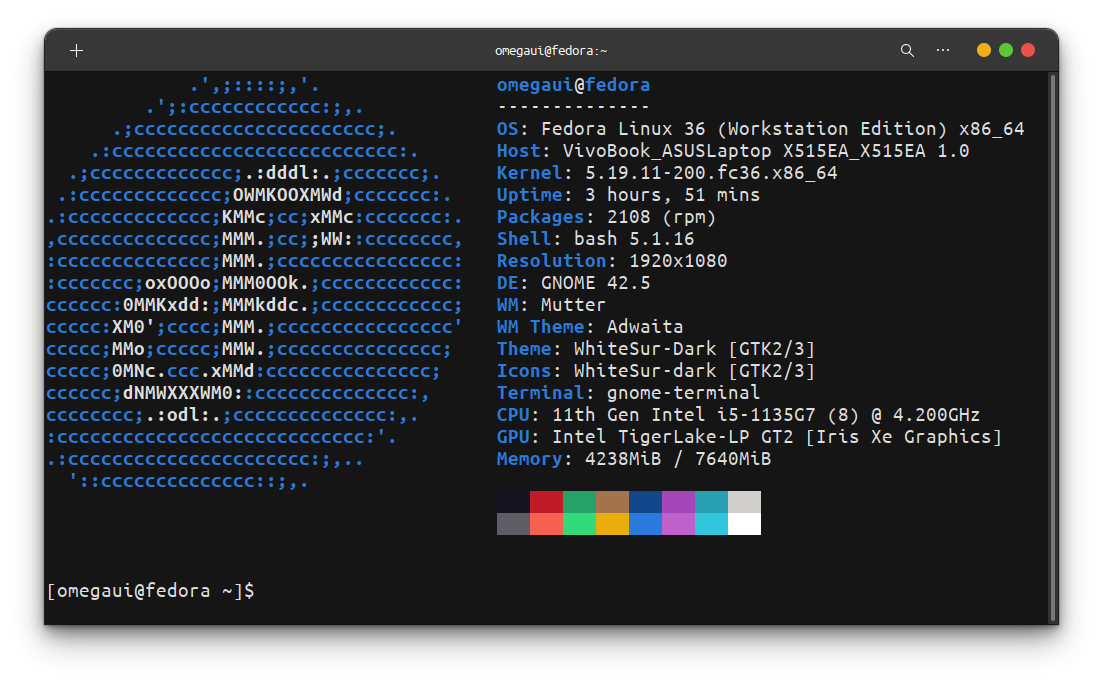

python3 main.py

Ok Tested!

Extending Usage

The values in lvc-commands.json may look like single commands that run when the matching key is triggered.

But wait ... it doesn't end here.

You can do even more with this: you can write a shell script or any other program that performs a more complex task.

Example

{

"its time to rock": {

"exec": "/usr/bin/setup-spotify-chill-mode"

}

}

#!/bin/bash

echo "Listen I don't know actually how to do this ... but I think you got what I mean ..."

# some code here that launches spotify and starts playing that playlist
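Once you start pointing entries at your own scripts, it is easy to mistype a path or forget to make a script executable. Here is a small optional sketch that checks this up front. find_missing_executables is a hypothetical helper, not part of linux-voice-control, and it only looks at the first word of each exec value.

```python
import shlex
import shutil


def find_missing_executables(commands):
    """Return {phrase: program} for entries whose program cannot be found.

    A program counts as found if shutil.which resolves it, i.e. it is on
    PATH or is an existing executable file given by path.
    """
    missing = {}
    for phrase, entry in commands.items():
        argv = shlex.split(entry.get("exec", ""))
        program = argv[0] if argv else ""
        if not program or shutil.which(program) is None:
            missing[phrase] = program
    return missing
```

You could load ~/lvc-commands.json with json.load and pass the resulting dict to this function; an empty result means every entry points at something runnable.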