This repository implements custom controllers for watching Profile resources from Kubeflow.

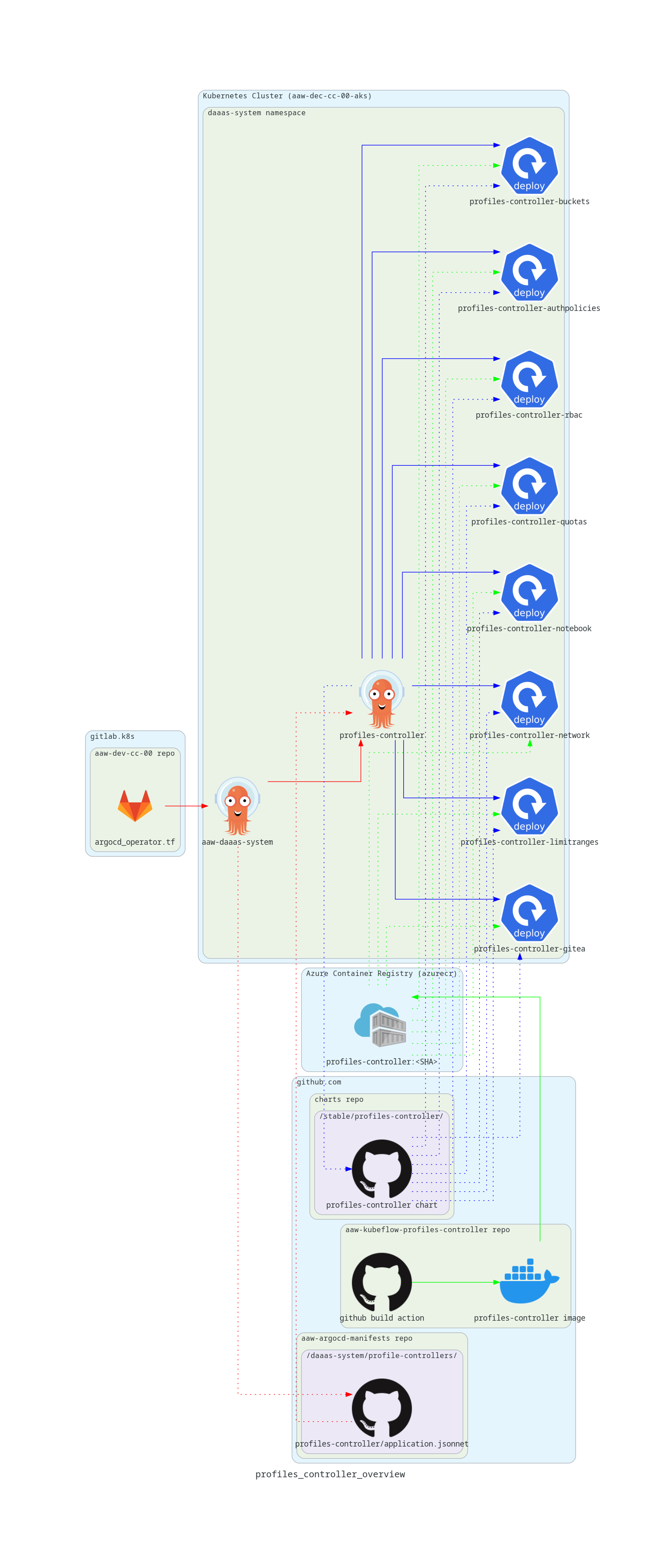

The diagram below illustrates how profiles controller components are rolled out on the cluster. [1][2][3][4]

Note: Modifying a controller is a subset of these steps; if you are only updating an existing controller, ignore the steps that don't apply.

1. Create a `.go` file in the `cmd` folder of this repository with the name of your controller. Your controller must create a `cobra.Command` and register it with the `rootCmd` of the application. Your controller will be invoked with the entrypoint `./profiles-controller <your controller name>`.
2. Modify the profiles-controller helm chart in the StatCan charts repo. Add the deployment containing your new controller and update the `values.yaml` file accordingly with any new parameters specific to your container. Don't forget to increment the helm chart version if you made changes to the helm chart.
3. Once your new controller is ready, merge your branch to the `main` branch of this repository. A GitHub Action in this repository runs a build job (see `.github/workflows/build.yml`) that builds the profiles-controller container image and pushes it to Azure container registry. The pushed image is tagged with the commit SHA of the git commit that triggered the GitHub Action.
4. Go to the build job in the GitHub Action and copy the commit SHA that the image is tagged with to your clipboard. This can be found at the top of the step called Run docker build in the build job.
5. Go to the aaw-argocd-manifests repository and open the `application.jsonnet` file that deploys the `profiles-controller` ArgoCD application in the `daaas-system` namespace. In this file, paste the commit SHA from the previous step into the `image.tag` field. This updates the image tag for the base image used by the `profiles-controller` deployment. Note that you can make this edit directly through GitHub's editing features. This edit should be merged into either the `aaw-dev-cc-00` branch or the `aaw-prod-cc-00` branch depending on whether the update applies to the dev or prod environment. The `profiles-controller` ArgoCD application watches for changes to the `application.jsonnet` file on these branches.

Some controllers deploy per-namespace applications for each user (e.g. Gitea, S3Proxy). In this case, a few additional steps may be required.

- The `profiles-argocd-system` folder of the aaw-argocd-manifests repository contains manifests that get deployed by per-namespace ArgoCD applications. The intended pattern is that Kubernetes manifests are applied directly with Kustomize patches as required.
- Depending on what a particular per-namespace application looks like, there may be a manual step required where a developer must build the manifests by, for example, templating a helm chart and saving the output in a `manifest.yaml` file. In this case, a PR must be made to either the `aaw-dev-cc-00` or the `aaw-prod-cc-00` branch of the aaw-argocd-manifests repo with the newly built `manifest.yaml` file.

1. Start by opening the appropriate ArgoCD instance. Here we will check the following:
   - Take note of the current SHA associated with the profiles-controller Argo App.
   - Disable auto-sync on both the App of apps `Argocd-Manifests:aaw-das-system` and `profiles-controller`. This will avoid any unintended reconcile.
2. Take note of the SHA associated with the image you've built. Make sure that this image was pushed to the appropriate ACR instance.
3. In ArgoCD, replace the SHA for the profiles-controller (or appropriate sub-controller) with the value in step 2. This should cause a new ReplicaSet to spawn.
4. Test your changes.
5. Once complete, enable auto-sync on the App of apps `Argocd-Manifests:aaw-das-system` and `profiles-controller`. This should align the cluster state with the repo state. Follow the steps under How to Create a Controller to deploy your changes formally.

Helpful links to k8s resources, technologies and other terminologies related to this project are provided below.

- Profile

- Istio AuthorizationPolicy

- LimitRange

- ResourceQuotas

- Kubeflow Notebook

- Roles and RoleBinding (RBAC)

- Labels

- Blob CSI Driver

- Open Policy Agent (OPA)

The profiles controller uses the client-go library extensively. The details of the interaction points of the profiles controller with various mechanisms from this library are explained here.

The `cmd` package contains source files for a variety of profile controllers for Kubeflow.

For more information about profile controllers, see the documentation for the client-go library, which contains a variety of mechanisms for use when developing custom profile controllers. The mechanisms are defined in the `tools/cache` folder of the library.

Responsible for creating, removing and updating Istio Authorization Policies using the Istio client for a given Profile. Currently, the only AuthorizationPolicy blocks uploads to and downloads from protected-b Notebooks.

Creates an Azure Blob Storage container for a user in a few storage accounts (e.g. standard, protected-b, etc.) and binds a PersistentVolume to the container using the blob-csi driver. This relies on secrets stored in a system namespace; these secrets are not accessible by users. The controller also creates a PVC in the user's namespace bound to this specific PV.

- Supports read-write and read-only PersistentVolumes using mountOptions.

- PVCs are ReadWriteMany or ReadOnlyMany, respectively

- Supports both protected-b and unclassified mounts.

In addition, the controller is responsible for managing links from PersistentVolumes to any buckets from the Fair Data Infrastructure (FDI) Section of DAaas. This is accomplished by querying the unclassified and protected-b OPA gateways, which return JSON responses containing buckets along with permissions. The controller parses the returned JSON and creates a PersistentVolume and PersistentVolumeClaim for each bucket in accordance with the permission constraints.
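The parsing step described above can be sketched as follows. This is only an illustration: the gateway's response schema is not documented in this README, so the `bucketEntry` shape (`bucket`, `readers`, `writers`), the `planVolumes` helper and the sample bucket names are all assumptions. The only detail taken from the controller description is that read-write access maps to `ReadWriteMany` and read-only access maps to `ReadOnlyMany`.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// bucketEntry is a HYPOTHETICAL shape for one bucket in a gateway response.
// The real response schema is not documented here.
type bucketEntry struct {
	Bucket  string   `json:"bucket"`
	Readers []string `json:"readers"` // namespaces with read-only access (assumed field)
	Writers []string `json:"writers"` // namespaces with read-write access (assumed field)
}

// volumePlan names the access mode to use for the PV and PVC of one bucket.
type volumePlan struct {
	Bucket     string
	AccessMode string // "ReadWriteMany" or "ReadOnlyMany"
}

// contains reports whether list includes s.
func contains(list []string, s string) bool {
	for _, item := range list {
		if item == s {
			return true
		}
	}
	return false
}

// planVolumes decodes a gateway response and returns one plan per bucket
// that the given namespace may access. Write access takes precedence.
func planVolumes(payload []byte, namespace string) ([]volumePlan, error) {
	var entries []bucketEntry
	if err := json.Unmarshal(payload, &entries); err != nil {
		return nil, fmt.Errorf("decoding gateway response: %w", err)
	}
	var plans []volumePlan
	for _, e := range entries {
		switch {
		case contains(e.Writers, namespace):
			plans = append(plans, volumePlan{Bucket: e.Bucket, AccessMode: "ReadWriteMany"})
		case contains(e.Readers, namespace):
			plans = append(plans, volumePlan{Bucket: e.Bucket, AccessMode: "ReadOnlyMany"})
		}
	}
	return plans, nil
}

func main() {
	// Sample payload with made-up bucket and namespace names.
	payload := []byte(`[
		{"bucket": "survey-raw", "readers": ["alice"], "writers": []},
		{"bucket": "survey-out", "readers": [], "writers": ["alice"]},
		{"bucket": "other-team", "readers": ["bob"], "writers": []}
	]`)
	plans, err := planVolumes(payload, "alice")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, p := range plans {
		fmt.Printf("%s -> %s\n", p.Bucket, p.AccessMode)
	}
}
```

Buckets that the namespace cannot access produce no plan, so no PersistentVolume or PersistentVolumeClaim would be created for them in this sketch.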

- `BLOB_CSI_FDI_OPA_DAEMON_TICKER_MILLIS`: the time interval at which the OPA gateways are queried, in milliseconds.
- `BLOB_CSI_FDI_UNCLASS_OPA_ENDPOINT`: the HTTP address pointing to the unclassified OPA gateway.
- `BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAME`: the name of a secret containing data `azurestoragespnclientsecret: value`, where value is a secret registered under the unclassified FDI service principal.
- `BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAMESPACE`: the namespace the above secret is contained in.
- `BLOB_CSI_FDI_UNCLASS_PV_STORAGE_CAP`: the storage capacity for FDI PVs, in terabytes.
- `BLOB_CSI_FDI_UNCLASS_AZURE_STORAGE_AUTH_TYPE`: for the current implementation, only the `spn` auth type is supported.
- `BLOB_CSI_FDI_UNCLASS_AZURE_STORAGE_AAD_ENDPOINT`: the Azure Active Directory endpoint.
- `BLOB_CSI_FDI_PROTECTED_B_OPA_ENDPOINT`: the HTTP address pointing to the protected-b OPA gateway.
- `BLOB_CSI_FDI_PROTECTED_B_SPN_SECRET_NAME`: the name of a secret containing data `azurestoragespnclientsecret: value`, where value is a secret registered under the protected-b FDI service principal.
- `BLOB_CSI_FDI_PROTECTED_B_SPN_SECRET_NAMESPACE`: the namespace the above secret is contained in.
- `BLOB_CSI_FDI_PROTECTED_B_PV_STORAGE_CAP`: the storage capacity for FDI PVs, in terabytes.
- `BLOB_CSI_FDI_PROTECTED_B_AZURE_STORAGE_AUTH_TYPE`: for the current implementation, only the `spn` auth type is supported.
- `BLOB_CSI_FDI_PROTECTED_B_AZURE_STORAGE_AAD_ENDPOINT`: the Azure Active Directory endpoint.
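As a rough illustration of how a few of these settings could be read and validated at startup, consider the sketch below. The `fdiConfig` struct and `loadFDIConfig` function are hypothetical names introduced for this example, not taken from the controller source, and the values in `main` are placeholders. The lookup function is injected so the example runs without touching the real environment; in a real process it would be `os.Getenv`.

```go
package main

import (
	"fmt"
	"strconv"
	"time"
)

// fdiConfig holds a subset of the unclassified FDI settings (hypothetical type).
type fdiConfig struct {
	TickInterval    time.Duration
	OPAEndpoint     string
	SecretName      string
	SecretNamespace string
}

// loadFDIConfig reads the settings through getenv, which would be os.Getenv
// in a real process. Injecting it keeps the function easy to exercise.
func loadFDIConfig(getenv func(string) string) (fdiConfig, error) {
	raw := getenv("BLOB_CSI_FDI_OPA_DAEMON_TICKER_MILLIS")
	millis, err := strconv.Atoi(raw)
	if err != nil || millis <= 0 {
		return fdiConfig{}, fmt.Errorf("BLOB_CSI_FDI_OPA_DAEMON_TICKER_MILLIS must be a positive integer, got %q", raw)
	}
	// The variable is expressed in milliseconds, so scale accordingly.
	cfg := fdiConfig{TickInterval: time.Duration(millis) * time.Millisecond}

	// Required string settings, checked in a fixed order.
	required := []struct {
		name string
		dst  *string
	}{
		{"BLOB_CSI_FDI_UNCLASS_OPA_ENDPOINT", &cfg.OPAEndpoint},
		{"BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAME", &cfg.SecretName},
		{"BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAMESPACE", &cfg.SecretNamespace},
	}
	for _, r := range required {
		value := getenv(r.name)
		if value == "" {
			return fdiConfig{}, fmt.Errorf("%s is not set", r.name)
		}
		*r.dst = value
	}
	return cfg, nil
}

func main() {
	// Placeholder values for illustration only.
	env := map[string]string{
		"BLOB_CSI_FDI_OPA_DAEMON_TICKER_MILLIS":     "30000",
		"BLOB_CSI_FDI_UNCLASS_OPA_ENDPOINT":         "http://opa.example.internal",
		"BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAME":      "example-secret",
		"BLOB_CSI_FDI_UNCLASS_SPN_SECRET_NAMESPACE": "example-system",
	}
	cfg, err := loadFDIConfig(func(key string) string { return env[key] })
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("polling", cfg.OPAEndpoint, "every", cfg.TickInterval)
}
```

Failing fast on a missing or malformed value is a design choice of this sketch; the controller itself may handle defaults differently.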

For more context on the blob-csi system as a whole, see here

The Taskfile includes the task `blobcsi:dev` for preparing your local environment for development and testing. Because the controller depends on the Azure blob-csi driver, a cluster with the blob-csi driver installed is required. As such, it is recommended to debug and test against the development k8s context for AAW. With the local environment configured, the VS Code debugger can be used through Run and Debug: blobcsi Controller in the debug pane.

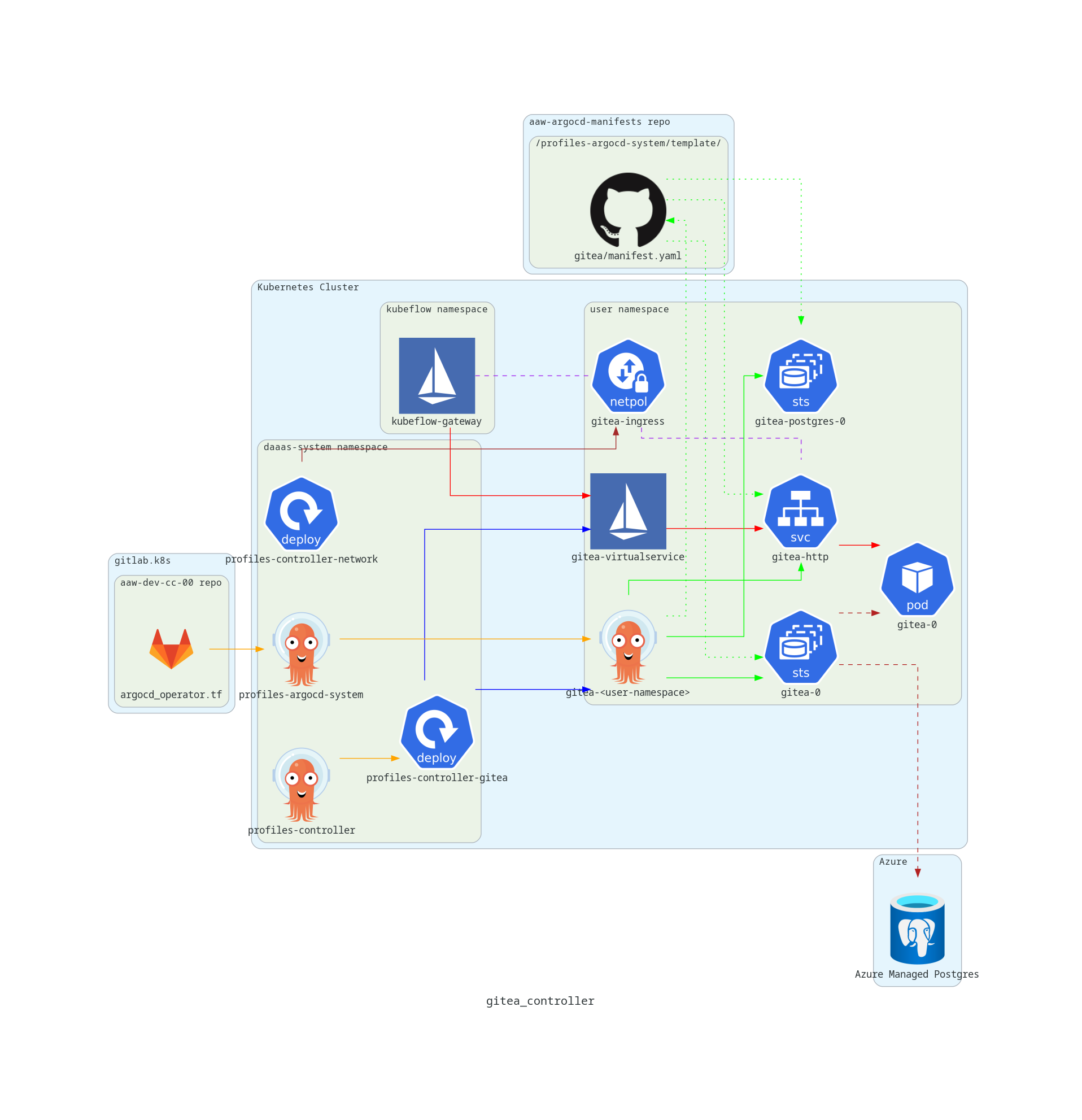

Responsible for deploying gitea as argocd applications per Profile. Currently, argocd applications are deployed by the gitea controller based on the customized gitea manifest found here.

The diagram below highlights the key components involved with the Gitea controller

The unclassified Gitea user interface is embedded within the Kubeflow dashboard's user interface and rendered in an iframe. Protected-b Gitea is set up to be accessible only through a protected-b node, so the user is expected to access their protected-b Gitea instance from within an AAW Ubuntu VM, or through the CLI in a protected-b notebook.

Requests to a namespace's Gitea server are made to the Kubeflow base URL with the suffix `/gitea-unclassified/?ns=<user-namespace>`, where the user's namespace is passed as an HTTP parameter. An Istio Virtual Service created by the `gitea.go` controller contains an HTTP route that redirects traffic from `/gitea-unclassified/?ns=<user-namespace>` to `/gitea-unclassified/<user-namespace>/`. A second HTTP route routes traffic from `/gitea-unclassified/<user-namespace>/` to the Gitea instance in the user's namespace.
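To make the first route concrete, the sketch below mirrors the same path rewrite as a plain Go function. It is not how the controller implements the redirect (that is done declaratively by the Istio Virtual Service); `redirectTarget` is a hypothetical helper that only shows the mapping from the query-parameter form to the path form.

```go
package main

import (
	"fmt"
	"net/url"
)

const giteaPrefix = "/gitea-unclassified/"

// redirectTarget returns the path-based location for a request that carries
// the namespace in the "ns" query parameter. The boolean is false when the
// request is not in the query-parameter form and needs no redirect.
func redirectTarget(requestURI string) (string, bool) {
	u, err := url.ParseRequestURI(requestURI)
	if err != nil || u.Path != giteaPrefix {
		return "", false
	}
	ns := u.Query().Get("ns")
	if ns == "" {
		return "", false
	}
	// Namespaces are DNS labels, so escaping is normally a no-op; it is kept
	// here so unexpected input cannot inject extra path segments.
	return giteaPrefix + url.PathEscape(ns) + "/", true
}

func main() {
	for _, uri := range []string{
		"/gitea-unclassified/?ns=alice", // query-parameter form, redirected
		"/gitea-unclassified/alice/",    // already path-based, handled by the second route
	} {
		target, ok := redirectTarget(uri)
		fmt.Printf("%-32s redirect=%v target=%q\n", uri, ok, target)
	}
}
```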

The Gitea application server sets the `ROOT_URL` environment variable to the Kubeflow base URL followed by the `/gitea-unclassified/<user-namespace>/` suffix. This is required because it is the application's responsibility to establish the URLs that the browser uses to retrieve static assets and send requests to application endpoints.

The Gitea application `values.yaml` file makes use of pod fields as environment variables and dependent environment variables to create a `ROOT_URL` that depends on the user's namespace. Additionally, an upstream gitea issue indicates that it is possible to set multiple domains in the `ROOT_URL` environment variable by using the syntax `ROOT_URL=https://(domain1,domain2)/gitea/`, which is how the dev/prod domains are set without needing to check the deployment environment.

To allow requests to reach the user's Gitea instance, a Network Policy is set in the user's namespace that allows ingress traffic from the kubeflow-gateway to be sent to any pods in the user's namespace that match the `app: gitea && app.kubernetes.io/instance: gitea-unclassified` label selector.
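The `&&` in the selector means a pod must carry both labels to receive traffic. A minimal sketch of that equality-based matching is shown below; `matches` is a hypothetical helper written for illustration, and the extra pod labels in `main` are made up.

```go
package main

import "fmt"

// giteaSelector lists the two labels named above for the unclassified instance.
var giteaSelector = map[string]string{
	"app":                        "gitea",
	"app.kubernetes.io/instance": "gitea-unclassified",
}

// matches reports whether podLabels carries every key/value pair in selector.
// Extra labels on the pod are ignored.
func matches(selector, podLabels map[string]string) bool {
	for key, want := range selector {
		if got, ok := podLabels[key]; !ok || got != want {
			return false
		}
	}
	return true
}

func main() {
	giteaPod := map[string]string{
		"app":                        "gitea",
		"app.kubernetes.io/instance": "gitea-unclassified",
		"example.com/extra":          "ignored", // made-up label
	}
	notebookPod := map[string]string{"app": "notebook"} // made-up label value

	fmt.Println("gitea pod selected:", matches(giteaSelector, giteaPod))
	fmt.Println("notebook pod selected:", matches(giteaSelector, notebookPod))
}
```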

The Gitea controller is configurable to run in unclassified or protected-b mode. The following parameters must be set to run the controller:

- `GITEA_CLASSIFICATION`: the classification the controller should be running against. Currently, `unclassified` and `protected-b` are supported as values.
- `GITEA_PSQL_PORT`: port of the postgres instance the gitea application will connect to
- `GITEA_PSQL_ADMIN_UNAME`: username of a privileged user within the postgres instance
- `GITEA_PSQL_ADMIN_PASSWD`: password for the above user
- `GITEA_PSQL_MAINTENANCE_DB`: maintenance DB name in the postgres instance
- `GITEA_SERVICE_URL`: the internal Gitea URL, as specified here
- `GITEA_URL_PREFIX`: URL prefix for redirecting gitea
- `GITEA_SERVICE_PORT`: the port exposed by the gitea service, as specified here
- `GITEA_BANNER_CONFIGMAP_NAME`: gitea banner configmap name (the configmap which corresponds to the banner at the top of the gitea UI)
- `GITEA_ARGOCD_NAMESPACE`: namespace of the argocd instance that the controller will install applications into
- `GITEA_ARGOCD_SOURCE_REPO_URL`: repository URL containing the gitea deployment manifest
- `GITEA_ARGOCD_SOURCE_TARGET_REVISION`: git branch to deploy from
- `GITEA_ARGOCD_SOURCE_PATH`: path to the manifest from the root of the git source repo
- `GITEA_ARGOCD_PROJECT`: the argocd instance's project to deploy applications within
- `GITEA_SOURCE_CONTROL_ENABLED_LABEL`: this label will be searched for within profiles to indicate if a user has opted in.
- `GITEA_KUBEFLOW_ROOT_URL`: the URL for kubeflow's central dashboard (for dev: https://kubeflow.aaw-dev.cloud.statcan.ca, for production: https://kubeflow.aaw.cloud.statcan.ca)

Within AAW, these variables are defined in the statcan charts repo, and they are configured within the dev argocd-manifests-repo or prod argocd-manifests-repo.

Responsible for creating, removing and updating LimitRange resources for a given profile. LimitRange resources are generated to limit the cpu and memory resources for the kubeflow profile's default container. LimitRange resources require the implementation of a controller managing ResourceQuotas, which is provided in this package (see `quotas.go`). Implementing LimitRange resources allows any Pod associated with the Profile to run while restricted by a ResourceQuota.

Responsible for the following networking policies:

- Ingress from the ingress gateway

- Ingress from knative-serving

- Egress to the cluster local gateway

- Egress from unclassified workloads

- Egress from unclassified workloads to the ingress gateway

- Egress to port 443 from protected-b workloads

- Egress to vault

- Egress to pipelines

- Egress to Elasticsearch

- Egress to Artifactory

Responsible for the configuration of Notebook resources within Kubeflow. This controller adds a default option for running a protected-b Notebook.

Responsible for the generation of ResourceQuotas for a given profile. Management of ResourceQuotas is essential, as it constrains the total amount of compute resources that can be consumed within the Profile. Since the ResourceQuotas definition included in `quotas.go` provides constraints for cpu and memory, the limits for the values must be defined. These limits are defined as LimitRange resources and are managed by `limitrange.go`.

In order for ArgoCD to sync Profile resources, the `/metadata/labels/` field needed to be ignored. However, this field is required in ResourceQuota resource generation. A Label is provided for each type of quota below, which allows ResourceQuotas to be overridden by the controller for each resource type:

- quotas.statcan.gc.ca/requests.cpu

- quotas.statcan.gc.ca/limits.cpu

- quotas.statcan.gc.ca/requests.memory

- quotas.statcan.gc.ca/limits.memory

- quotas.statcan.gc.ca/requests.storage

- quotas.statcan.gc.ca/pods

- quotas.statcan.gc.ca/services.nodeports

- quotas.statcan.gc.ca/services.loadbalancers

A special case is considered for overriding gpu resources. Although the label `quotas.statcan.gc.ca/gpu` is set on the given Profile, it is `requests.nvidia.com/gpu` that is overridden.
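One way to picture the label-to-quota mapping, including the gpu special case, is the sketch below. It reflects one reading of the text above and is not the code in `quotas.go`: `quotaOverrides` and `quotaResources` are hypothetical names, label values are kept as raw strings, and treating unknown `quotas.statcan.gc.ca/` suffixes as ignored is an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

const quotaLabelPrefix = "quotas.statcan.gc.ca/"

// quotaResources maps a label suffix to the ResourceQuota resource it overrides.
// Every suffix maps to itself except gpu, which targets requests.nvidia.com/gpu.
var quotaResources = map[string]string{
	"requests.cpu":           "requests.cpu",
	"limits.cpu":             "limits.cpu",
	"requests.memory":        "requests.memory",
	"limits.memory":          "limits.memory",
	"requests.storage":       "requests.storage",
	"pods":                   "pods",
	"services.nodeports":     "services.nodeports",
	"services.loadbalancers": "services.loadbalancers",
	"gpu":                    "requests.nvidia.com/gpu",
}

// quotaOverrides returns resource name -> raw label value for every
// recognized quota label found on a Profile.
func quotaOverrides(labels map[string]string) map[string]string {
	overrides := make(map[string]string)
	for key, value := range labels {
		if !strings.HasPrefix(key, quotaLabelPrefix) {
			continue
		}
		suffix := strings.TrimPrefix(key, quotaLabelPrefix)
		if resource, ok := quotaResources[suffix]; ok {
			overrides[resource] = value
		}
	}
	return overrides
}

func main() {
	// Example Profile labels with made-up values.
	labels := map[string]string{
		"quotas.statcan.gc.ca/requests.cpu": "8",
		"quotas.statcan.gc.ca/gpu":          "1",
		"unrelated-label":                   "x",
	}
	overrides := quotaOverrides(labels)
	fmt.Println("requests.cpu:", overrides["requests.cpu"])
	fmt.Println("requests.nvidia.com/gpu:", overrides["requests.nvidia.com/gpu"])
	fmt.Println("override count:", len(overrides))
}
```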

Responsible for the generation of Roles and RoleBinding resources for a given profile.

A Role is created for each Profile, and the following RoleBindings are created:

- `ml-pipeline` role binding for the `Profile`.
- `DAaas-AAW-Support` is granted a `profile-support` cluster role in the namespace for support purposes.

The root interface for the profile controllers.

A helm chart for deploying the profile controllers can be found here. Each controller has a corresponding k8s manifest.

Footnotes

1. Gitlab icons provided by Gitlab Press Kit.
2. Github icons provided by Github logos.
3. Argo icon provided by CNCF branding.