Authors' official PyTorch implementation of HyperReenact: One-Shot Reenactment via Jointly Learning to Refine and Retarget Faces (ICCV 2023). If you use this code for your research, please cite our paper.

HyperReenact: One-Shot Reenactment via Jointly Learning to Refine and Retarget Faces

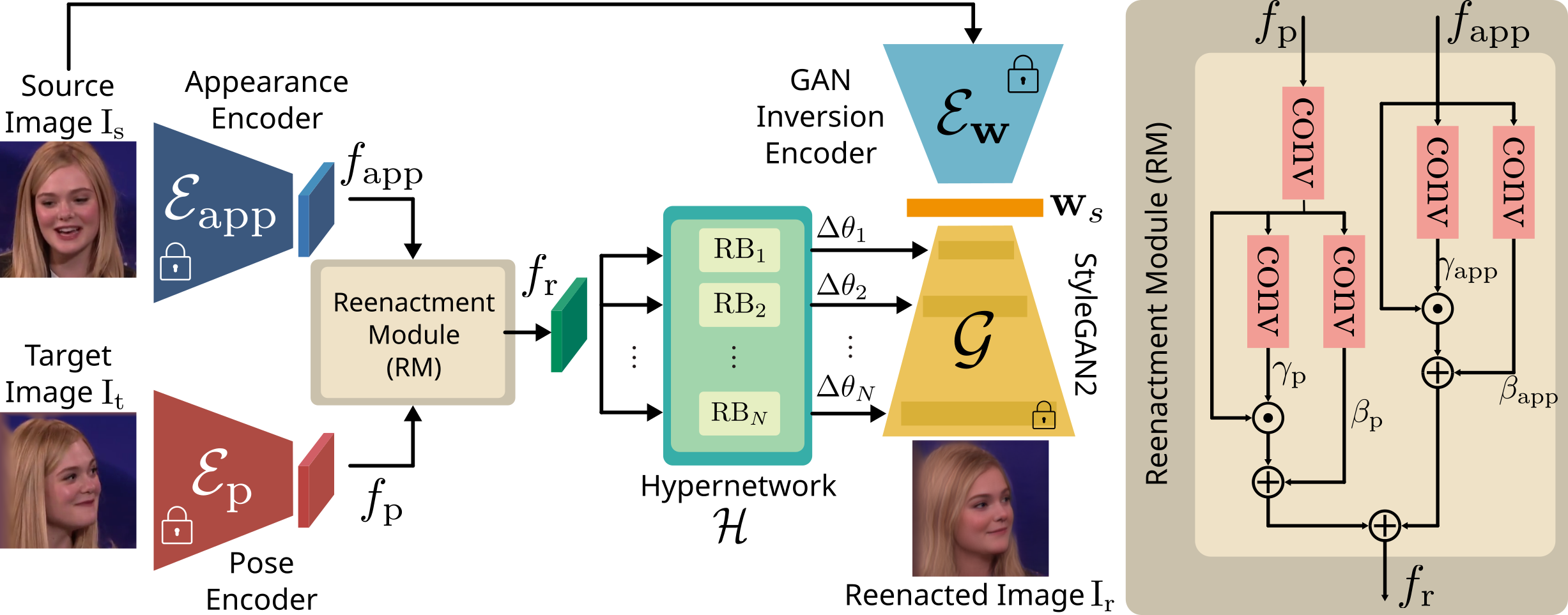

Stella Bounareli, Christos Tzelepis, Vasileios Argyriou, Ioannis Patras, Georgios Tzimiropoulos

Abstract: In this paper, we present our method for neural face reenactment, called HyperReenact, which aims to generate realistic talking head images of a source identity, driven by a target facial pose. Existing state-of-the-art face reenactment methods train controllable generative models that learn to synthesize realistic facial images, yet they either produce reenacted faces that are prone to significant visual artifacts, especially under the challenging condition of extreme head pose changes, or require expensive few-shot fine-tuning to better preserve the source identity characteristics. We propose to address these limitations by leveraging the photorealistic generation ability and the disentangled properties of a pretrained StyleGAN2 generator: we first invert the real images into its latent space and then use a hypernetwork to perform (i) refinement of the source identity characteristics and (ii) facial pose re-targeting, thus eliminating the dependence on external editing methods that typically produce artifacts. Our method operates under the one-shot setting (i.e., using a single source frame) and allows for cross-subject reenactment, without requiring any subject-specific fine-tuning. We compare our method both quantitatively and qualitatively against several state-of-the-art techniques on the standard benchmarks of VoxCeleb1 and VoxCeleb2, demonstrating the superiority of our approach in producing artifact-free images and its remarkable robustness even under extreme head pose changes.

- Python 3.5+
- Linux
- NVIDIA GPU + CUDA cuDNN
- PyTorch (>=1.5)

We recommend running this repository using Anaconda.

```bash
conda create -n hyperreenact_env python=3.8
conda activate hyperreenact_env
conda install pytorch==1.7.0 torchvision==0.8.0 cudatoolkit=11.0 -c pytorch
pip install -r requirements.txt
```
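Before downloading the models, it can help to confirm that the environment meets the requirements listed above. The script below is a minimal sketch and is not part of the repository; it checks only the stated minimums (Python 3.5+, PyTorch >= 1.5) and whether PyTorch can see a CUDA device. Versions are compared as integer tuples so that, for example, 1.10 is correctly treated as newer than 1.5.

```python
import importlib
import sys


def parse_version(ver: str) -> tuple:
    """Turn a version string such as '1.7.0+cu110' into (1, 7, 0)."""
    parts = []
    # Drop a local build tag like '+cu110', then keep the leading digits of each field.
    for piece in ver.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def version_at_least(ver: str, minimum: tuple) -> bool:
    """Compare numerically, so '1.10.0' >= (1, 5) holds while a string compare would fail."""
    return parse_version(ver) >= minimum


def check_environment() -> list:
    """Return a list of human-readable problems; an empty list means the checks passed."""
    problems = []
    if sys.version_info < (3, 5):
        problems.append("Python 3.5+ is required")
    try:
        torch = importlib.import_module("torch")
    except ImportError:
        problems.append("PyTorch is not installed")
        return problems
    if not version_at_least(str(torch.__version__), (1, 5)):
        problems.append("PyTorch >= 1.5 is required, found " + str(torch.__version__))
    if not torch.cuda.is_available():
        problems.append("PyTorch cannot see a CUDA device")
    return problems


if __name__ == "__main__":
    issues = check_environment()
    print("\n".join(issues) if issues else "Environment looks OK")
```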

We provide a StyleGAN2 model trained using StyleGAN2-ada-pytorch and an e4e inversion model trained on the VoxCeleb1 dataset. We also provide our HyperNetwork trained on the VoxCeleb dataset.

| Path | Description |
|---|---|
| StyleGAN2-VoxCeleb1 | StyleGAN2 trained on the VoxCeleb1 dataset. |
| e4e-VoxCeleb1 | e4e trained on the VoxCeleb1 dataset. |
| HyperReenact-net | Hypernetwork trained on the VoxCeleb1 dataset. |

We provide additional auxiliary models needed during training/inference.

| Path | Description |
|---|---|
| face-detector | Pretrained face detector taken from face-alignment. |
| ArcFace Model | Pretrained ArcFace model taken from InsightFace_Pytorch, used as our Appearance encoder. |
| DECA model | Pretrained model taken from DECA, used as our Pose encoder. Extract data.tar.gz under ./pretrained_models. |

Please download all models and save them under the ./pretrained_models directory.
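The DECA entry requires data.tar.gz to be extracted under ./pretrained_models. A plain `tar -xzf` works; the sketch below does the same with Python's standard `tarfile` module and rejects archive members whose paths would land outside the destination directory. It assumes the archive was saved as ./pretrained_models/data.tar.gz, which the instructions above do not state explicitly. On Python versions that support extraction filters, it also applies the built-in `"data"` filter.

```python
import os
import tarfile


def safe_extract(archive_path: str, dest_dir: str) -> list:
    """Extract a .tar.gz into dest_dir, refusing members that would escape it."""
    dest_root = os.path.realpath(dest_dir)
    os.makedirs(dest_root, exist_ok=True)
    with tarfile.open(archive_path, "r:gz") as tar:
        members = tar.getmembers()
        # Path-traversal guard: every member must resolve inside dest_root.
        for member in members:
            target = os.path.realpath(os.path.join(dest_root, member.name))
            if os.path.commonpath([dest_root, target]) != dest_root:
                raise ValueError("unsafe path in archive: " + member.name)
        if hasattr(tarfile, "data_filter"):
            # Newer Pythons: the 'data' filter also blocks unsafe links and special files.
            tar.extractall(dest_root, members=members, filter="data")
        else:
            tar.extractall(dest_root, members=members)
    return [m.name for m in members]


if __name__ == "__main__":
    # Assumed location of the downloaded DECA archive (not specified in the README).
    archive = "./pretrained_models/data.tar.gz"
    if os.path.isfile(archive):
        names = safe_extract(archive, "./pretrained_models")
        print("extracted", len(names), "entries")
    else:
        print("archive not found:", archive)
```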

Given a source frame (.png or .jpg) and a target (a single .png or .jpg frame, an .mp4 video, or a directory of images), the following command reenacts the source face:

```bash
python run_inference.py --source_path ./inference_examples/source.png \
    --target_path ./inference_examples/target_video_1.mp4 \
    --output_path ./results --save_video
```
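To reenact the same source face with several targets, the documented command can be wrapped in a small driver. The sketch below is hypothetical and not part of the repository: it uses only the four flags shown above, classifies each target by the input types listed above (.png/.jpg image, .mp4 video, or a directory of frames), and, as its own assumption, writes each target's results to a separate subdirectory of the output root. With `dry_run=True` it only prints the commands.

```python
import os
import shlex
import subprocess
import sys

IMAGE_EXTS = {".png", ".jpg"}
VIDEO_EXTS = {".mp4"}


def target_kind(path: str) -> str:
    """Classify a target as 'directory', 'image' or 'video'; raise on anything else."""
    if os.path.isdir(path):
        return "directory"
    ext = os.path.splitext(path)[1].lower()
    if ext in IMAGE_EXTS:
        return "image"
    if ext in VIDEO_EXTS:
        return "video"
    raise ValueError("unsupported target: " + path)


def build_command(source: str, target: str, output_dir: str, save_video: bool = True) -> list:
    """Build the argv for run_inference.py using only the flags from the example command."""
    target_kind(target)  # fail early on unsupported inputs
    cmd = [sys.executable, "run_inference.py",
           "--source_path", source,
           "--target_path", target,
           "--output_path", output_dir]
    if save_video:
        cmd.append("--save_video")
    return cmd


def run_batch(source: str, targets: list, output_root: str, dry_run: bool = True) -> list:
    """Print (dry run) or execute one inference command per target."""
    commands = []
    for target in targets:
        # Hypothetical layout: <output_root>/<target name without extension>/
        name = os.path.splitext(os.path.basename(os.path.normpath(target)))[0]
        cmd = build_command(source, target, os.path.join(output_root, name))
        commands.append(cmd)
        if dry_run:
            print(" ".join(shlex.quote(part) for part in cmd))
        else:
            subprocess.run(cmd, check=True)
    return commands
```

For example, `run_batch("./inference_examples/source.png", ["./inference_examples/target_video_1.mp4"], "./results")` prints the command for the example above; passing `dry_run=False` runs it, which must be done from the repository root so that run_inference.py is found.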

[1] Stella Bounareli, Christos Tzelepis, Vasileios Argyriou, Ioannis Patras, and Georgios Tzimiropoulos. HyperReenact: One-Shot Reenactment via Jointly Learning to Refine and Retarget Faces. IEEE International Conference on Computer Vision (ICCV), 2023.

BibTeX entry:

```bibtex
@InProceedings{bounareli2023hyperreenact,
    author    = {Bounareli, Stella and Tzelepis, Christos and Argyriou, Vasileios and Patras, Ioannis and Tzimiropoulos, Georgios},
    title     = {HyperReenact: One-Shot Reenactment via Jointly Learning to Refine and Retarget Faces},
    booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},
    year      = {2023},
}
```

This research was supported by the EU's Horizon 2020 programme H2020-951911 AI4Media project.