by Xu Ma*, Yuqian Zhou*, Huan Wang, Can Qin, Bin Sun, Chang Liu, Yun Fu.

torch>=1.7.0; torchvision>=0.8.0; pyyaml; timm; apex-amp (if you want to use fp16);

Data preparation: organize ImageNet with the following folder structure. You can extract ImageNet with this script.

│imagenet/

├──train/

│ ├── n01440764

│ │ ├── n01440764_10026.JPEG

│ │ ├── n01440764_10027.JPEG

│ │ ├── ......

│ ├── ......

├──val/

│ ├── n01440764

│ │ ├── ILSVRC2012_val_00000293.JPEG

│ │ ├── ILSVRC2012_val_00002138.JPEG

│ │ ├── ......

│ ├── ......
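As a quick sanity check of the layout above, the following is a minimal sketch that counts class folders and `.JPEG` files under `train/` and `val/`. The helper name `summarize_imagenet` is hypothetical and not part of this repository; it only assumes the folder structure shown above.

```python
from pathlib import Path


def summarize_imagenet(root):
    """Return {split: (num_class_folders, num_jpeg_files)} for train/ and val/.

    Hypothetical helper for illustration; it is not part of this repository.
    """
    root = Path(root)
    summary = {}
    for split in ("train", "val"):
        split_dir = root / split
        if not split_dir.is_dir():
            raise FileNotFoundError(f"missing split folder: {split_dir}")
        # Each class is a sub-folder such as n01440764.
        class_dirs = sorted(d for d in split_dir.iterdir() if d.is_dir())
        # Count image files with a .JPEG extension (case-insensitive).
        num_images = sum(
            1
            for d in class_dirs
            for f in d.iterdir()
            if f.is_file() and f.suffix.upper() == ".JPEG"
        )
        summary[split] = (len(class_dirs), num_images)
    return summary
```

Calling `summarize_imagenet("/path/to/imagenet")` returns the per-split counts, which can be compared against the class and image counts you expect for your copy of the dataset.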

We have uploaded the checkpoints and logs to an anonymous Google Drive. Feel free to download them.

| Model | #params | Image resolution | Top1 Acc | Throughput | Download |
|---|---|---|---|---|---|
| ContextCluster-tiny | 5.3M | 224 | 71.8 | 518.4 | [checkpoint & logs] |
| ContextCluster-tiny* | 5.3M | 224 | 71.7 | 510.8 | [checkpoint & logs] |
| ContextCluster-small | 14.0M | 224 | 77.5 | 513.0 | [checkpoint & logs] |
| ContextCluster-medium | 27.9M | 224 | 81.0 | 325.2 | [checkpoint & logs] |

To evaluate our Context Cluster models, run:

MODEL=coc_tiny #{tiny, tiny2, small, medium}

python3 validate.py /path/to/imagenet --model $MODEL -b 128 --checkpoint {/path/to/checkpoint}

We show how to train Context Cluster on 8 GPUs. The learning rate scales with the batch size as lr = bs/1024 × 1e-3, so a batch size of 1024 gives a learning rate of 1e-3 (for a batch size of 1024, a learning rate of 2e-3 sometimes performs better).
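The scaling rule can be sketched in a few lines of Python, which may help when training on a different number of GPUs. The function name `scaled_lr` is hypothetical, and treating `-b` as a per-GPU batch size is an assumption (it is consistent with 8 GPUs × 128 = 1024 in the training command).

```python
def scaled_lr(num_gpus, batch_per_gpu, base_lr=1e-3, base_batch=1024):
    """Apply lr = bs/1024 * 1e-3, where bs is the total batch size.

    Assumption: bs = num_gpus * batch_per_gpu, i.e. -b is a per-GPU value.
    """
    total_batch = num_gpus * batch_per_gpu
    return total_batch / base_batch * base_lr


# 8 GPUs with -b 128 give a total batch size of 1024.
print(scaled_lr(num_gpus=8, batch_per_gpu=128))  # 0.001
```

Under the same assumption, halving the number of GPUs while keeping `-b 128` would halve the learning rate passed to `--lr`.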

MODEL=coc_tiny # coc variants

DROP_PATH=0.1 # drop path rates

python3 -m torch.distributed.launch --nproc_per_node=8 train.py --data_dir /dev/shm/imagenet --model $MODEL -b 128 --lr 1e-3 --drop-path $DROP_PATH --amp

See folder pointcloud for the point cloud classification task on ScanObjectNN.

See folder detection for detection and instance segmentation tasks on COCO.

See folder segmentation for the semantic segmentation task on ADE20K.

@inproceedings{ma2023image,

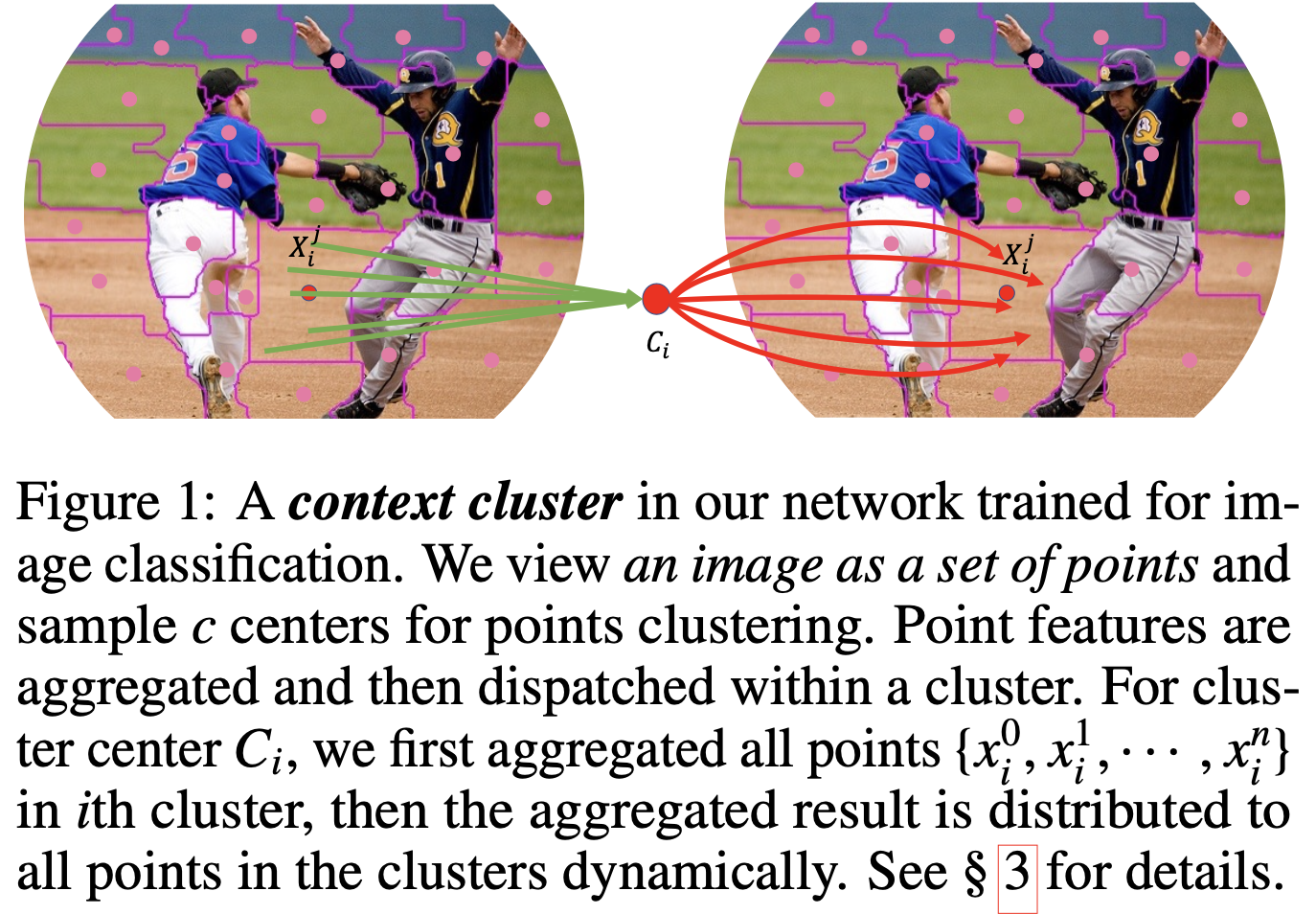

title={Image as Set of Points},

author={Xu Ma and Yuqian Zhou and Huan Wang and Can Qin and Bin Sun and Chang Liu and Yun Fu},

booktitle={The Eleventh International Conference on Learning Representations},

year={2023},

url={https://openreview.net/forum?id=awnvqZja69}

}

Our implementation is mainly based on the following codebases. We sincerely thank the authors for their wonderful work.

pointMLP, poolformer, pytorch-image-models, mmdetection, mmsegmentation.

The majority of Context Cluster is licensed under the Apache License 2.0.