This is a code implementation, based on MindSpore 2.2 and PyTorch 1.7.1, of the CVPR 2024 paper AMU-Tuning: Effective Logit Bias for CLIP-based Few-shot Learning.

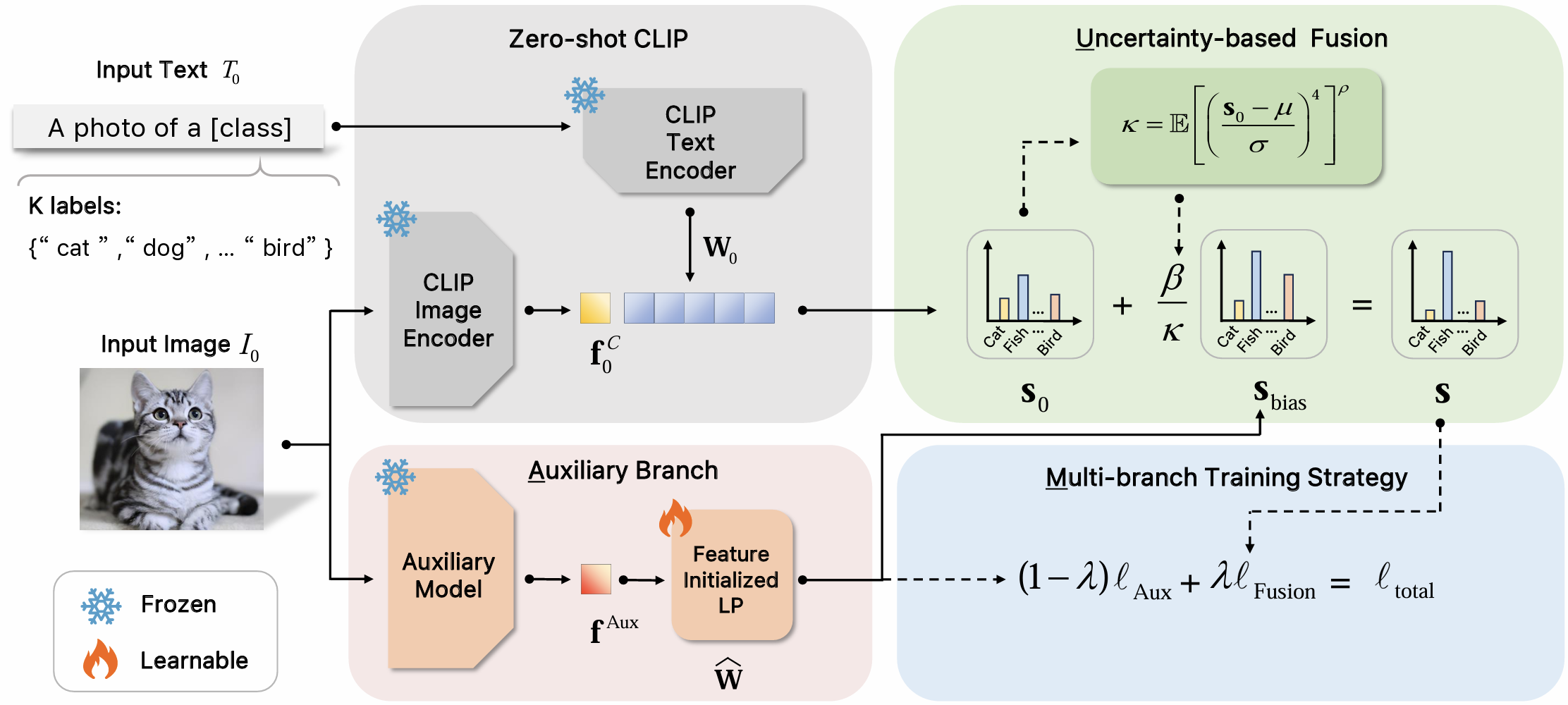

This paper proposes a novel AMU-Tuning method to learn an effective logit bias for CLIP-based few-shot classification. Specifically, AMU-Tuning predicts the logit bias by exploiting appropriate Auxiliary features, which are fed into an efficient feature-initialized linear classifier with Multi-branch training. Finally, an Uncertainty-based fusion is developed to incorporate the logit bias into CLIP for few-shot classification. Experiments are conducted on several widely used benchmarks, and the results show that AMU-Tuning clearly outperforms its counterparts while achieving state-of-the-art performance for CLIP-based few-shot learning without bells and whistles.
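The fusion step described above can be illustrated with a small sketch. It is not the paper's exact formulation: the uncertainty score used here (one minus the gap between the two largest CLIP softmax probabilities, raised to a power `rho`) and the names `uncertainty`, `fuse_logits`, and `rho` are assumptions made for illustration only. The idea shown is that the logit bias contributes more when CLIP's own prediction is uncertain.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def uncertainty(clip_logits, rho=1.0):
    """Assumed uncertainty score in [0, 1] (not the paper's formula):
    near 1 when the top-2 CLIP probabilities are tied, near 0 when CLIP is confident."""
    top1, top2 = sorted(softmax(clip_logits), reverse=True)[:2]
    return (1.0 - (top1 - top2)) ** rho


def fuse_logits(clip_logits, bias_logits, alpha=0.5, rho=1.0):
    """Add the logit bias to the CLIP logits, scaled by alpha and the uncertainty."""
    kappa = uncertainty(clip_logits, rho)
    return [c + alpha * kappa * b for c, b in zip(clip_logits, bias_logits)]


def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=values.__getitem__)


# CLIP is confident about class 0: the bias barely changes the logits.
confident = fuse_logits([10.0, 0.0, 0.0], [0.0, 2.0, 0.0])
# CLIP is unsure between classes 0 and 1: the bias favouring class 1 decides.
ambiguous = fuse_logits([1.0, 0.9, 0.0], [0.0, 2.0, 0.0])
print(argmax(confident), argmax(ambiguous))
```

In the actual code the bias logits come from the auxiliary-feature classifier and the weighting is controlled by the `alpha` argument; refer to the repository source for the real computation.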

- OS: Ubuntu 16.04
- CUDA: 11.6
- Toolkit: MindSpore 2.2 & PyTorch 1.7.1
- GPU: RTX 3090

Create a virtual environment and install dependencies:

git clone https://github.com/TJU-sjyj/MindSpore-AMU

conda create -n AMU python=3.7

conda activate AMU

Install the matching versions of torch and torchvision:

conda install pytorch torchvision cudatoolkit

For CUDA 10.1:

conda install mindspore-gpu cudatoolkit=10.1 -c mindspore -c conda-forge

For CUDA 11.1:

conda install mindspore-gpu cudatoolkit=11.1 -c mindspore -c conda-forge

Validation:

python -c "import mindspore;mindspore.run_check()"

Our dataset setup is primarily based on Tip-Adapter. Please follow DATASET.md to download the official ImageNet and the other 10 datasets.

- The pre-trained weights of CLIP will be downloaded automatically when the code is run.
- The pre-trained weights of MoCo-v3 can be downloaded at MoCo v3.

We provide run.sh, with which you can complete the pre-training + fine-tuning experiment cycle in a single command.

- `clip_backbone` is the name of the backbone network of the CLIP visual encoder that will be used (e.g. RN50, RN101, ViT-B/16).
- `lr` is the learning rate for adapter training.
- `shots` is the number of samples per class used for training.
- `alpha` controls the effect of the logit bias.
- `lambda_merge` is a hyper-parameter in Multi-branch Training.

More arguments are listed in parse_args.py.
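As a rough illustration of how such arguments might be declared, here is a minimal `argparse` sketch. It is not a copy of parse_args.py: the flag names are taken from the example training command in this README, while the defaults, types, and help strings are assumptions.

```python
import argparse


def build_parser():
    """Hypothetical parser; the real definitions live in parse_args.py."""
    parser = argparse.ArgumentParser(description="AMU-Tuning training (illustrative sketch)")
    parser.add_argument("--clip_backbone", type=str, default="ViT-B-16",
                        help="backbone of the CLIP visual encoder")
    parser.add_argument("--lr", type=float, default=1e-3,
                        help="learning rate for adapter training")
    parser.add_argument("--shots", type=int, default=16,
                        help="number of training samples per class")
    parser.add_argument("--init_alpha", type=float, default=0.5,
                        help="controls the effect of the logit bias")
    parser.add_argument("--lambda_merge", type=float, default=0.35,
                        help="hyper-parameter in Multi-branch Training")
    return parser


# Parse an explicit argument list instead of sys.argv so the sketch runs anywhere.
args = build_parser().parse_args(["--shots", "4", "--lr", "5e-4"])
print(args.clip_backbone, args.shots, args.lr, args.init_alpha, args.lambda_merge)
```

Any flag not given on the command line falls back to its default, so only the values that differ from the defaults need to be passed.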

You can use this command to train an AMU adapter with ViT-B-16 as CLIP's image encoder in the 16-shot setting for 50 epochs.

CUDA_VISIBLE_DEVICES=0 python train.py \
    --rand_seed 2 \
    --torch_rand_seed 1 \
    --exp_name test_16_shot \
    --clip_backbone "ViT-B-16" \
    --augment_epoch 1 \
    --init_alpha 0.5 \
    --lambda_merge 0.35 \
    --train_epoch 50 \
    --lr 1e-3 \
    --batch_size 8 \
    --shots 16 \
    --root_path 'your root path'

You can use the test script test.sh to test the pretrained model. More arguments are listed in parse_args.py.

| Method | Acc-MindSpore (%) | Acc-PyTorch (%) | Checkpoint (PyTorch) | Checkpoint (MindSpore) |
|---|---|---|---|---|
| MoCov3-ResNet50-16shot-ImageNet1k | 69.98 | 70.02 | Download | Download |
| MoCov3-ResNet50-8shot-ImageNet1k | 68.21 | 68.25 | Download | Download |
| MoCov3-ResNet50-4shot-ImageNet1k | 65.79 | 65.92 | Download | Download |
| MoCov3-ResNet50-2shot-ImageNet1k | 64.19 | 64.25 | Download | Download |
| MoCov3-ResNet50-1shot-ImageNet1k | 62.57 | 62.60 | Download | Download |
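To run all five shot settings listed in the table, the training command can be generated in a loop. The sketch below only builds and prints the command strings. It reuses the flags and values from the example training command in this README, which are not necessarily the settings behind the reported numbers; the helper `build_train_command` and the `test_{shots}_shot` naming pattern are assumptions.

```python
import shlex

# Shot settings that appear in the results table.
SHOTS = [1, 2, 4, 8, 16]


def build_train_command(shots, root_path, gpu=0):
    """Hypothetical helper: returns a shell command string for one shot setting."""
    argv = [
        "python", "train.py",
        "--rand_seed", "2",
        "--torch_rand_seed", "1",
        "--exp_name", f"test_{shots}_shot",
        "--clip_backbone", "ViT-B-16",
        "--augment_epoch", "1",
        "--init_alpha", "0.5",
        "--lambda_merge", "0.35",
        "--train_epoch", "50",
        "--lr", "1e-3",
        "--batch_size", "8",
        "--shots", str(shots),
        "--root_path", root_path,
    ]
    # Quote each part so paths containing spaces survive the shell.
    quoted = " ".join(shlex.quote(part) for part in argv)
    return f"CUDA_VISIBLE_DEVICES={gpu} {quoted}"


commands = [build_train_command(s, "your root path") for s in SHOTS]
for command in commands:
    print(command)
```

The printed lines can be pasted into a shell or written to a script; replace `your root path` with the dataset root before running them.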

This repo benefits from Tip and CaFo. Thanks to the authors for their work.

If you have any questions or suggestions, please feel free to contact us: tangyuwei@tju.edu.cn and linzhenyi@tju.edu.cn.