An open-source framework to evaluate, test and monitor ML models in production.

Docs | Discord Community | Newsletter | Blog | Twitter

Evidently helps analyze and track data and ML model quality throughout the model lifecycle. You can think of it as an evaluation layer that fits into the existing ML stack.

Evidently has a modular approach with 3 interfaces on top of the shared analyzer functionality.

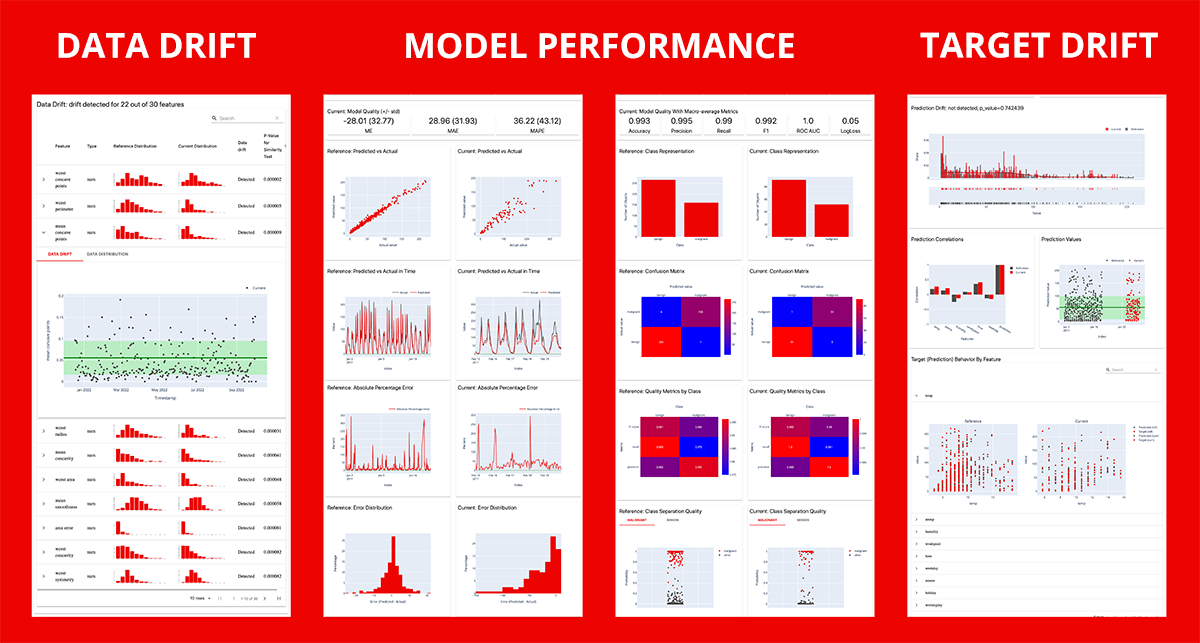

Evidently generates interactive dashboards from pandas DataFrames or CSV files. You can use them for model evaluation, debugging and documentation.

Each report covers a particular aspect of the model performance. You can display reports in a Jupyter notebook or Colab, or export them as HTML files. Currently, 7 pre-built reports are available:

- Data Drift. Detects changes in the input feature distribution.

- Data Quality. Provides the detailed feature stats and behavior overview.

- Target Drift: Numerical, Categorical. Detects changes in the model output.

- Model Performance: Classification, Probabilistic Classification, Regression. Evaluates the quality of the model and model errors.
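To make "changes in the input feature distribution" more concrete, here is a minimal sketch of one common way to compare two samples of a numerical feature: the two-sample Kolmogorov-Smirnov statistic, which is the largest gap between the two empirical CDFs. It is an illustration only and does not claim to be the test Evidently runs; the function name `ks_statistic` is hypothetical.

```python
from bisect import bisect_right


def ks_statistic(reference, current):
    """Largest absolute gap between the empirical CDFs of two samples."""
    ref_sorted = sorted(reference)
    cur_sorted = sorted(current)
    if not ref_sorted or not cur_sorted:
        raise ValueError("both samples must be non-empty")

    largest_gap = 0.0
    # The gap can only change at observed values, so checking those is enough.
    for value in set(ref_sorted) | set(cur_sorted):
        ref_cdf = bisect_right(ref_sorted, value) / len(ref_sorted)
        cur_cdf = bisect_right(cur_sorted, value) / len(cur_sorted)
        largest_gap = max(largest_gap, abs(ref_cdf - cur_cdf))
    return largest_gap


same = ks_statistic([1, 2, 3, 4], [1, 2, 3, 4])         # identical samples
overlap = ks_statistic([1, 2, 3, 4], [3, 4, 5, 6])      # partially shifted sample
shifted = ks_statistic([1, 2, 3, 4], [11, 12, 13, 14])  # fully shifted sample
```

A value near 0 means the two samples look alike, and a value near 1 means they barely overlap. A real drift check would also turn the statistic into a p-value or compare it with a threshold.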

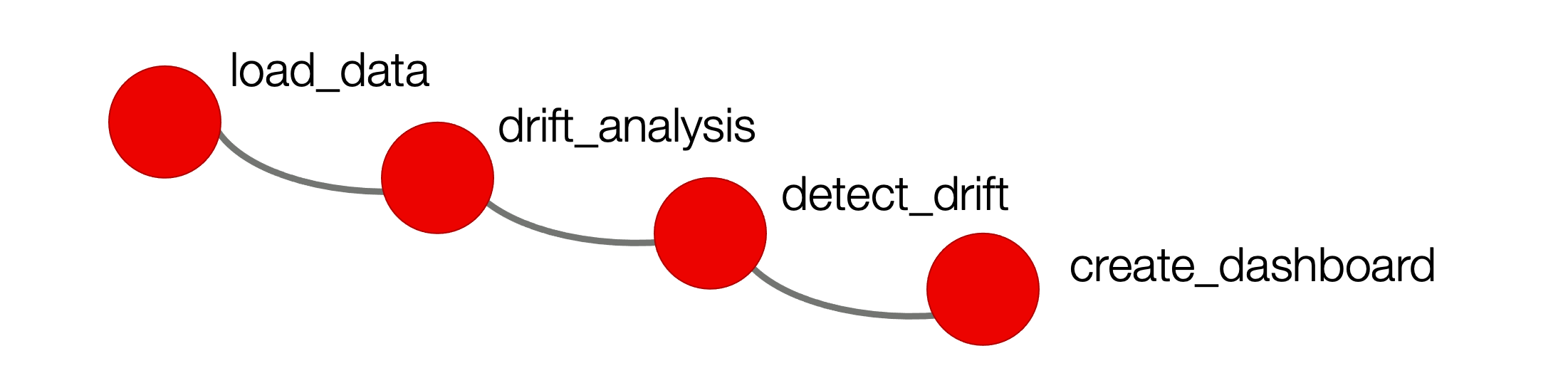

Evidently also generates JSON profiles. You can use them to integrate the data or model evaluation step into the ML pipeline.

You can log and store JSON profiles for further analysis, or build a conditional workflow based on the result of the check (e.g. to trigger an alert or retraining, or to generate a visual report). The profiles calculate the same metrics and statistical tests as visual reports.
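A conditional workflow of this kind could look roughly like the sketch below. The key layout of the JSON payload (`data_drift` → `data` → `metrics` → `dataset_drift`) and the action names are assumptions made for illustration; inspect the profile your Evidently version produces before relying on any key.

```python
import json

# Hypothetical profile content; the key layout is an assumption for illustration.
profile_json = json.dumps(
    {"data_drift": {"data": {"metrics": {"dataset_drift": True, "n_drifted_features": 3}}}}
)


def next_step(profile_text):
    """Pick a pipeline action from the drift flag stored in a JSON profile."""
    metrics = json.loads(profile_text)["data_drift"]["data"]["metrics"]
    return "retrain" if metrics["dataset_drift"] else "continue"


action = next_step(profile_json)
```

In a scheduler such as Airflow, the returned value could select which downstream task runs next.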

You can explore example integrations with tools like Airflow and MLflow.

Note: this functionality is in active development and subject to API change.

Evidently has monitors that collect the data and model metrics from a deployed ML service. You can use them to build live monitoring dashboards. Evidently configures the monitoring on top of the streaming data and emits the metrics.

There is a lightweight integration with Prometheus and Grafana that comes with pre-built dashboards.
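For readers new to Prometheus, the sketch below shows what "emitting metrics" means at the wire level: each metric becomes one line in the Prometheus text exposition format. This is not how Evidently's integration is implemented; the metric name, the label, and the helper `to_prometheus_lines` are hypothetical, and label values are assumed to contain no quotes or backslashes.

```python
def to_prometheus_lines(metrics, labels):
    """Render a dict of numeric metrics in the Prometheus text exposition format."""
    label_text = ",".join(f'{key}="{value}"' for key, value in sorted(labels.items()))
    # Doubled braces produce the literal { and } that wrap the label set.
    return [f"{name}{{{label_text}}} {value}" for name, value in sorted(metrics.items())]


lines = to_prometheus_lines(
    {"evidently_share_drifted_features": 0.25},  # hypothetical metric name
    {"dataset": "current"},  # hypothetical label
)
```

In practice a Prometheus client library would handle this formatting and the HTTP endpoint that Prometheus scrapes.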

Evidently is available as a PyPI package. To install it using pip package manager, run:

    $ pip install evidently

If you want to generate reports as HTML files or export as JSON profiles, the installation is now complete.

If you want to display the dashboards directly in a Jupyter notebook, you should install jupyter nbextension. After installing evidently, run the two following commands in the terminal from the evidently directory.

To install jupyter nbextension, run:

    $ jupyter nbextension install --sys-prefix --symlink --overwrite --py evidently

To enable it, run:

    $ jupyter nbextension enable evidently --py --sys-prefix

That's it! A single run after the installation is enough.

Note: if you use Jupyter Lab, the dashboard might not display in the notebook. However, the report generation in a separate HTML file will work correctly.

On Windows, Evidently is installed the same way, as a PyPI package. To install it using pip package manager, run:

    $ pip install evidently

The tool allows building interactive reports both inside a Jupyter notebook and as a separate HTML file. Unfortunately, building reports inside a Jupyter notebook is not yet possible on Windows, because Windows requires administrator privileges to create symlinks. We plan to address this issue in later versions.

To start, prepare your data as two pandas DataFrames. The first should include your reference data, and the second your current production data. The structure of both datasets should be identical.

- For the Data Drift report, include the input features only.

- For Target Drift reports, include the column with Target and/or Prediction.

- For Model Performance reports, include the columns with Target and Prediction.
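Since both datasets must have an identical structure, it can help to compare their column names before running a report. The helper below is a minimal sketch with a hypothetical name (`structure_mismatch`); it works on plain lists of column names so that it does not depend on any particular library.

```python
def structure_mismatch(reference_columns, current_columns):
    """Return columns missing from either dataset; two empty lists mean they match."""
    reference_set, current_set = set(reference_columns), set(current_columns)
    return {
        "missing_in_current": sorted(reference_set - current_set),
        "missing_in_reference": sorted(current_set - reference_set),
    }


report = structure_mismatch(
    ["sepal length", "sepal width", "target"],
    ["sepal length", "sepal width"],
)
```

With pandas DataFrames, you would pass `list(reference.columns)` and `list(current.columns)`.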

Calculation results are available in one of two formats:

- Option 1: an interactive Dashboard displayed inside the Jupyter notebook or exportable as an HTML report.

- Option 2: a JSON Profile that includes the values of metrics and the results of statistical tests.
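Because a JSON Profile is plain text, it can be written to disk and read back later for further analysis. The sketch below shows one way to do that with the standard library only; the helper `store_profile`, the file naming, and the example payload are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


def store_profile(profile_text, directory, name):
    """Validate a JSON profile string and write it, pretty-printed, to <directory>/<name>.json."""
    parsed = json.loads(profile_text)  # raises ValueError if the text is not valid JSON
    target = Path(directory) / f"{name}.json"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(json.dumps(parsed, indent=2), encoding="utf-8")
    return target


# Hypothetical payload standing in for the string a profile would produce.
example_text = '{"data_drift": {"data": {"metrics": {"n_features": 4}}}}'
with tempfile.TemporaryDirectory() as tmp_dir:
    saved = store_profile(example_text, tmp_dir, "iris_drift")
    restored = json.loads(saved.read_text(encoding="utf-8"))
```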

After installing the tool, import Evidently dashboard and required tabs:

    from sklearn import datasets

    from evidently.dashboard import Dashboard
    from evidently.dashboard.tabs import (
        DataDriftTab,
        CatTargetDriftTab,
    )

    # Load the iris dataset as a DataFrame and add the target column to it
    iris = datasets.load_iris(as_frame=True)
    iris_frame, iris_frame["target"] = iris.data, iris.target

To generate the Data Drift report, run:

    iris_data_drift_report = Dashboard(tabs=[DataDriftTab()])
    # The first 100 rows serve as the reference data, the remaining rows as the current data
    iris_data_drift_report.calculate(iris_frame[:100], iris_frame[100:], column_mapping=None)
    iris_data_drift_report.save("reports/my_report.html")

To generate the Data Drift and the Categorical Target Drift reports, run:

    iris_data_and_target_drift_report = Dashboard(tabs=[DataDriftTab(), CatTargetDriftTab()])
    iris_data_and_target_drift_report.calculate(iris_frame[:100], iris_frame[100:], column_mapping=None)
    iris_data_and_target_drift_report.save("reports/my_report_with_2_tabs.html")

If you get a security alert, press "trust html". The HTML report does not open automatically. To explore it, open it from the destination folder.
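The examples above save into a `reports/` folder. Assuming `save()` does not create missing folders (this is an assumption, not something confirmed here), a small helper can prepare the destination first and produce a `file://` address for opening the report afterwards. The names `prepare_report_path`, `report_path`, and `uri` are hypothetical, and a temporary directory is used so the sketch leaves nothing behind.

```python
import tempfile
from pathlib import Path


def prepare_report_path(path_text):
    """Create the parent folder if needed and return the absolute report path."""
    report_path = Path(path_text).resolve()
    report_path.parent.mkdir(parents=True, exist_ok=True)
    return report_path


with tempfile.TemporaryDirectory() as tmp_dir:
    report_path = prepare_report_path(Path(tmp_dir) / "reports" / "my_report.html")
    folder_exists = report_path.parent.is_dir()
    uri = report_path.as_uri()  # address a browser can open once the file exists
```

You could then pass `str(report_path)` to `save()` and open the result with `webbrowser.open(uri)` from the Python standard library.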

After installing the tool, import Evidently profile and required sections:

    from sklearn import datasets

    from evidently.model_profile import Profile
    from evidently.model_profile.sections import (
        DataDriftProfileSection,
        CatTargetDriftProfileSection,
    )

    # The target column is included because the Categorical Target Drift section needs it
    iris = datasets.load_iris(as_frame=True)
    iris_frame, iris_frame["target"] = iris.data, iris.target

To generate the Data Drift profile, run:

    iris_data_drift_profile = Profile(sections=[DataDriftProfileSection()])
    # Here the same frame is passed as both the reference and the current data
    iris_data_drift_profile.calculate(iris_frame, iris_frame, column_mapping=None)
    iris_data_drift_profile.json()

To generate the Data Drift and the Categorical Target Drift profile, run:

    iris_target_and_data_drift_profile = Profile(sections=[DataDriftProfileSection(), CatTargetDriftProfileSection()])
    iris_target_and_data_drift_profile.calculate(iris_frame[:75], iris_frame[75:], column_mapping=None)
    iris_target_and_data_drift_profile.json()

Read instructions on how to run Evidently in other notebook environments.

You can run evidently in Google Colab, Kaggle Notebook and Deepnote.

First, install evidently. Run the following command in the notebook cell:

    !pip install evidently

There is no need to enable nbextension for this case, because evidently uses an alternative way to display visuals in the hosted notebooks.

To build a Dashboard or a Profile simply repeat the steps described in the previous paragraph. For example, to build the Data Drift dashboard, run:

    from sklearn import datasets

    from evidently.dashboard import Dashboard
    from evidently.dashboard.tabs import DataDriftTab

    iris = datasets.load_iris(as_frame=True)
    iris_frame = iris.data

    iris_data_drift_report = Dashboard(tabs=[DataDriftTab()])
    iris_data_drift_report.calculate(iris_frame[:100], iris_frame[100:], column_mapping=None)

To display the dashboard in Google Colab, a Kaggle Kernel, or Deepnote, run:

    iris_data_drift_report.show()

The show() method has the argument mode, which can take the following options:

- auto - the default option. Ideally, you will not need to specify the value for mode and can use the default. But if it does not work (in case we failed to determine the environment automatically), consider setting the correct value explicitly.

- nbextension - to show the UI using nbextension. Use this option to display dashboards in Jupyter notebooks (it should work automatically).

- inline - to insert the UI directly into the cell. Use this option for PyLab, Google Colab, Kaggle Kernels and Deepnote. For Google Colab, this should work automatically; for PyLab, Kaggle Kernels and Deepnote, the option should be specified explicitly.
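The options above can be summed up as a small lookup. The helper below is a sketch with a hypothetical name (`choose_show_mode`) and hypothetical environment labels; it only encodes the guidance from the list and does not detect the environment for you.

```python
# Environments for which the list above recommends the inline mode.
INLINE_ENVIRONMENTS = {"pylab", "colab", "kaggle", "deepnote"}


def choose_show_mode(environment):
    """Map an environment label to a value for the mode argument of show()."""
    name = environment.strip().lower()
    if name == "jupyter":
        return "nbextension"
    if name in INLINE_ENVIRONMENTS:
        return "inline"
    return "auto"  # fall back to automatic detection


mode = choose_show_mode("Kaggle")
```

The result would then be passed along as `iris_data_drift_report.show(mode=mode)`.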

We welcome contributions! Read the Guide to learn more.

You can also contribute custom reports with a combination of own metrics and widgets. We'll be glad to showcase some of them!

- A simple dashboard which contains two custom widgets with target distribution information: link to repository

For more information, refer to the complete Documentation. You can start with this Tutorial for a quick introduction.

Here you can find simple examples on toy datasets to quickly explore what Evidently can do right out of the box.

| Report | Jupyter notebook | Colab notebook | Data source |
|---|---|---|---|
| Data Drift + Categorical Target Drift (Multiclass) | link | link | Iris plants sklearn.datasets |
| Data Drift + Categorical Target Drift (Binary) | link | link | Breast cancer sklearn.datasets |
| Data Drift + Numerical Target Drift | link | link | California housing sklearn.datasets |
| Regression Performance | link | link | Bike sharing UCI: link |
| Classification Performance (Multiclass) | link | link | Iris plants sklearn.datasets |
| Probabilistic Classification Performance (Multiclass) | link | link | Iris plants sklearn.datasets |
| Classification Performance (Binary) | link | link | Breast cancer sklearn.datasets |
| Probabilistic Classification Performance (Binary) | link | link | Breast cancer sklearn.datasets |
| Data Quality | link | link | Bike sharing UCI: link |

See how to integrate Evidently in your prediction pipelines and use it with other tools.

| Title | Link to tutorial |
|---|---|
| Real-time ML monitoring with Grafana | Evidently + Grafana |
| Batch ML monitoring with Airflow | Evidently + Airflow |
| Log Evidently metrics in MLflow UI | Evidently + MLflow |

We host a monthly community call for users and contributors. Sign up to join the next one.

- If you want to receive updates, follow us on Twitter, or sign up for our newsletter.

- You can also find more tutorials and explanations in our Blog.

- If you want to chat and connect, join our Discord community!