Puncc (Predictive uncertainty calibration and conformalization) is an open-source Python library that integrates a collection of state-of-the-art conformal prediction algorithms and related techniques for regression and classification problems. It can be used with any predictive model to provide rigorous uncertainty estimations.

Under data exchangeability (or the i.i.d. assumption), the generated prediction sets are guaranteed to cover the true outputs with probability at least 1 − α, where α is a user-defined error rate.

Documentation is available online.

puncc requires a Python version higher than 3.8 and several libraries, including scikit-learn and NumPy. It is recommended to install puncc in a virtual environment to avoid interfering with your system's dependencies.

You can directly install the library using pip:

    pip install git+https://github.com/deel-ai/puncc

You can alternatively clone the repo and use the makefile to automatically create a virtual environment and install the requirements:

- For users: `make install-user`

- For developers: `make prepare-dev`

We highly recommend following the introduction tutorials to get familiar with the library and its API:

You can also familiarize yourself with the architecture of puncc to build your own conformal prediction methods more efficiently:

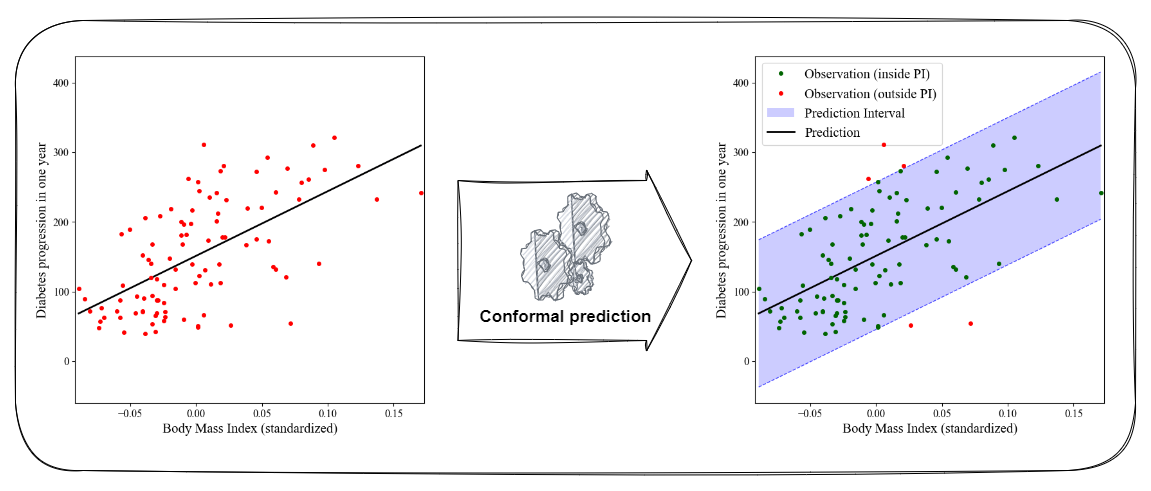

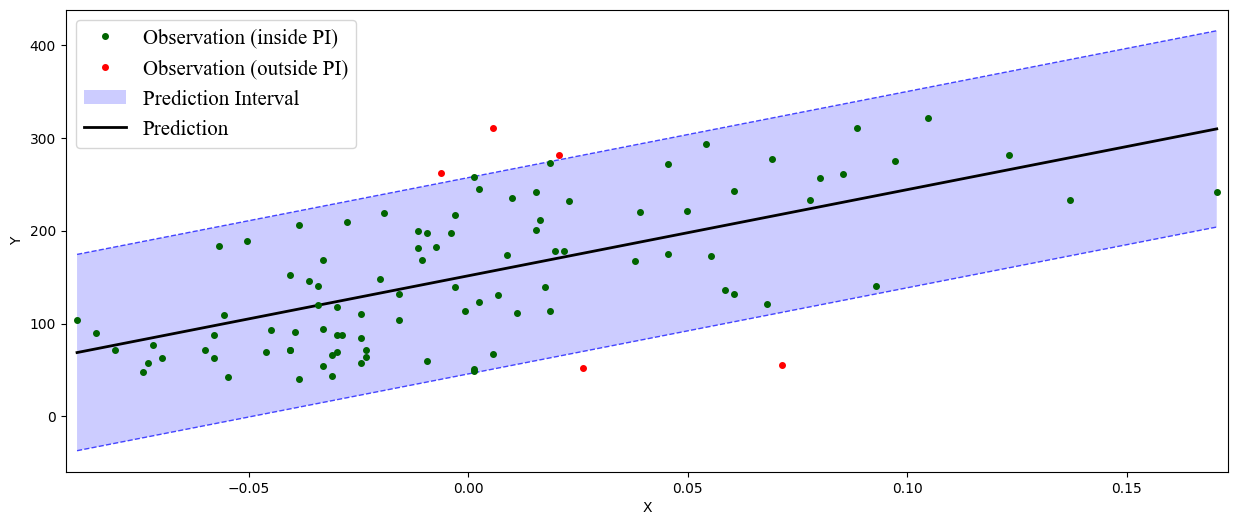

Conformal prediction transforms point predictions into prediction intervals with a high probability of coverage. The figure below shows the result of applying the split conformal algorithm on a linear regressor.
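To make the mechanics concrete, here is a minimal from-scratch sketch of split conformal prediction around a least-squares line, written with NumPy only. It does not use puncc: the function name `split_conformal_intervals`, the synthetic data and the default 80/20 split are illustrative assumptions, and absolute residuals are used as the nonconformity score.

```python
import numpy as np


def split_conformal_intervals(X_train, y_train, X_test, alpha=0.1, fit_ratio=0.8, seed=0):
    """Split conformal prediction around an ordinary least-squares fit."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X_train))
    n_fit = int(fit_ratio * len(X_train))
    fit_idx, calib_idx = idx[:n_fit], idx[n_fit:]

    def design(X):
        # Prepend a column of ones so the model has an intercept.
        return np.column_stack([np.ones(len(X)), X])

    # 1. Fit the point predictor on the fit subset only.
    coef, *_ = np.linalg.lstsq(design(X_train[fit_idx]), y_train[fit_idx], rcond=None)

    # 2. Nonconformity scores: absolute residuals on the calibration subset.
    scores = np.sort(np.abs(y_train[calib_idx] - design(X_train[calib_idx]) @ coef))

    # 3. Conformal quantile: the ceil((n + 1)(1 - alpha))-th smallest score.
    n_calib = len(scores)
    k = int(np.ceil((n_calib + 1) * (1 - alpha)))
    q = scores[k - 1] if k <= n_calib else np.inf

    # 4. Widen every test prediction by the same calibrated margin.
    y_pred = design(X_test) @ coef
    return y_pred, y_pred - q, y_pred + q


# Synthetic data: a noisy line (illustrative only).
rng = np.random.default_rng(42)
X = rng.uniform(0, 10, size=(3000, 1))
y = 2.0 * X[:, 0] + 1.0 + rng.normal(0, 1.0, size=3000)
X_train, y_train, X_test, y_test = X[:2000], y[:2000], X[2000:], y[2000:]

y_pred, y_lower, y_upper = split_conformal_intervals(X_train, y_train, X_test, alpha=0.1)
print(f"margin added on each side: {(y_upper[0] - y_lower[0]) / 2:.3f}")
```

The margin is the same for every test point because the absolute-residual score does not depend on the input; other scores yield input-dependent widths.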

Many conformal prediction algorithms can easily be applied using puncc. The code snippet below shows split conformal prediction wrapping a linear model in a few lines of code:

    from sklearn import linear_model
    from deel.puncc.api.prediction import BasePredictor
    from deel.puncc.regression import SplitCP

    # Load training data and test data
    # ...

    # Instantiate a linear regression model
    # linear_model = ...

    # Create a predictor to wrap the linear regression model defined earlier.
    # This enables interoperability with different ML libraries.
    # The argument `is_trained` is set to False to tell that the linear model
    # needs to be trained before the calibration.
    lin_reg_predictor = BasePredictor(linear_model, is_trained=False)

    # Instantiate the split CP wrapper around the linear predictor.
    split_cp = SplitCP(lin_reg_predictor)

    # Fit the model (as `is_trained` is False) on the fit dataset and
    # compute the residuals on the calibration dataset.
    # The fit (resp. calibration) subset is randomly sampled from the training
    # data and constitutes 80% (resp. 20%) of it (fit_ratio = 80%).
    split_cp.fit(X_train, y_train, fit_ratio=.8)

    # `predict` returns the output of the linear model y_pred and
    # the calibrated interval [y_pred_lower, y_pred_upper].
    # `alpha` is the target error rate chosen by the user.
    y_pred, y_pred_lower, y_pred_upper = split_cp.predict(X_test, alpha=alpha)

The library provides several metrics (`deel.puncc.metrics`) and plotting capabilities (`deel.puncc.plotting`) to evaluate and visualize the results of a conformal procedure. For a target error rate of
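As a rough illustration of what evaluating a conformal procedure involves, the sketch below computes two common quantities by hand with NumPy: the fraction of true values that fall inside their interval, and the average interval width. The function names `empirical_coverage` and `mean_interval_width` and the toy arrays are hypothetical; they are not the functions exposed by `deel.puncc.metrics`.

```python
import numpy as np


def empirical_coverage(y_true, y_lower, y_upper):
    """Fraction of targets lying inside [y_lower, y_upper]."""
    y_true, y_lower, y_upper = map(np.asarray, (y_true, y_lower, y_upper))
    return float(np.mean((y_true >= y_lower) & (y_true <= y_upper)))


def mean_interval_width(y_lower, y_upper):
    """Average width of the intervals (smaller means sharper)."""
    return float(np.mean(np.asarray(y_upper) - np.asarray(y_lower)))


# Toy values, chosen only to exercise the two functions.
y_true = [1.0, 2.0, 3.0, 4.0]
y_lower = [0.5, 2.5, 2.0, 3.0]
y_upper = [1.5, 3.5, 3.5, 5.0]

coverage = empirical_coverage(y_true, y_lower, y_upper)
width = mean_interval_width(y_lower, y_upper)
print(coverage, width)
```

Coverage and width pull in opposite directions: wider intervals cover more often, so the two are usually reported together.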

Puncc provides two ways of defining and using conformal prediction wrappers:

- A direct approach to run state-of-the-art conformal prediction procedures. This is what we used in the previous conformal regression example.

- Low-level API: a more flexible approach based on full customization of the prediction model, the choice of nonconformity scores and the split between fit and calibration datasets.

A quick comparison of both approaches is provided in the API tutorial for a regression problem.
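The three ingredients named for the low-level approach (prediction model, nonconformity score, data split) can be seen as interchangeable parts. The sketch below shows that idea in plain NumPy, assuming a hypothetical `conformal_predict` helper and hypothetical component names; it is not puncc's API, only an illustration of how swapping the score changes the intervals while the rest of the procedure stays the same.

```python
import numpy as np


def random_split(n, fit_ratio=0.8, seed=0):
    """Splitter: shuffle indices, then cut into fit and calibration parts."""
    idx = np.random.default_rng(seed).permutation(n)
    n_fit = int(fit_ratio * n)
    return idx[:n_fit], idx[n_fit:]


def abs_score(y_pred, y_true):
    """Nonconformity score: absolute residual."""
    return np.abs(y_true - y_pred)


def abs_interval(y_pred, q):
    """Prediction set matching abs_score: constant half-width q."""
    return y_pred - q, y_pred + q


def scaled_score(y_pred, y_true):
    """Alternative score: residual relative to the prediction's magnitude."""
    return np.abs(y_true - y_pred) / (np.abs(y_pred) + 1e-8)


def scaled_interval(y_pred, q):
    """Prediction set matching scaled_score: half-width grows with |y_pred|."""
    half_width = q * (np.abs(y_pred) + 1e-8)
    return y_pred - half_width, y_pred + half_width


class LeastSquares:
    """Tiny stand-in for any model exposing fit/predict."""

    def fit(self, X, y):
        A = np.column_stack([np.ones(len(X)), X])
        self.coef_, *_ = np.linalg.lstsq(A, y, rcond=None)
        return self

    def predict(self, X):
        return np.column_stack([np.ones(len(X)), X]) @ self.coef_


def conformal_predict(model, score_fn, interval_fn, split_fn, X, y, X_new, alpha):
    """Generic split conformal procedure assembled from pluggable parts."""
    fit_idx, calib_idx = split_fn(len(X))
    model.fit(X[fit_idx], y[fit_idx])
    scores = np.sort(score_fn(model.predict(X[calib_idx]), y[calib_idx]))
    k = int(np.ceil((len(scores) + 1) * (1 - alpha)))
    q = scores[k - 1] if k <= len(scores) else np.inf
    y_pred = model.predict(X_new)
    return (y_pred, *interval_fn(y_pred, q))


# Synthetic data whose noise grows with the input (illustrative only).
rng = np.random.default_rng(1)
X = rng.uniform(1, 10, size=(2000, 1))
y = 3.0 * X[:, 0] + rng.normal(0, 0.1 * X[:, 0])
X_new = rng.uniform(1, 10, size=(1000, 1))
y_new = 3.0 * X_new[:, 0] + rng.normal(0, 0.1 * X_new[:, 0])

results = {}
for name, score_fn, interval_fn in [
    ("absolute", abs_score, abs_interval),
    ("scaled", scaled_score, scaled_interval),
]:
    _, lo, hi = conformal_predict(
        LeastSquares(), score_fn, interval_fn, random_split, X, y, X_new, alpha=0.1
    )
    results[name] = (np.mean((y_new >= lo) & (y_new <= hi)), np.mean(hi - lo))
    print(name, results[name])
```

Each score must be paired with the interval construction that inverts it; mixing `scaled_score` with `abs_interval` would not give a valid prediction set.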

This library was initially built to support the work presented in our COPA 2022 paper on conformal prediction for time series. If you use our library for your work, please cite our paper:

    @inproceedings{mendil2022robust,
      title={Robust Gas Demand Forecasting With Conformal Prediction},
      author={Mendil, Mouhcine and Mossina, Luca and Nabhan, Marc and Pasini, Kevin},
      booktitle={Conformal and Probabilistic Prediction with Applications},
      pages={169--187},
      year={2022},
      organization={PMLR}
    }

Contributions are welcome! Feel free to report an issue or open a pull request. Take a look at our guidelines here.

This project received funding from the French “Investing for the Future – PIA3” program within the Artificial and Natural Intelligence Toulouse Institute (ANITI). The authors gratefully acknowledge the support of the DEEL project.

The package is released under the MIT license.