This repo hosts the source code for our paper Grounded Video Description. It supports the ActivityNet-Entities dataset. Code for the Flickr30k-Entities dataset is hosted on the flickr_branch branch.

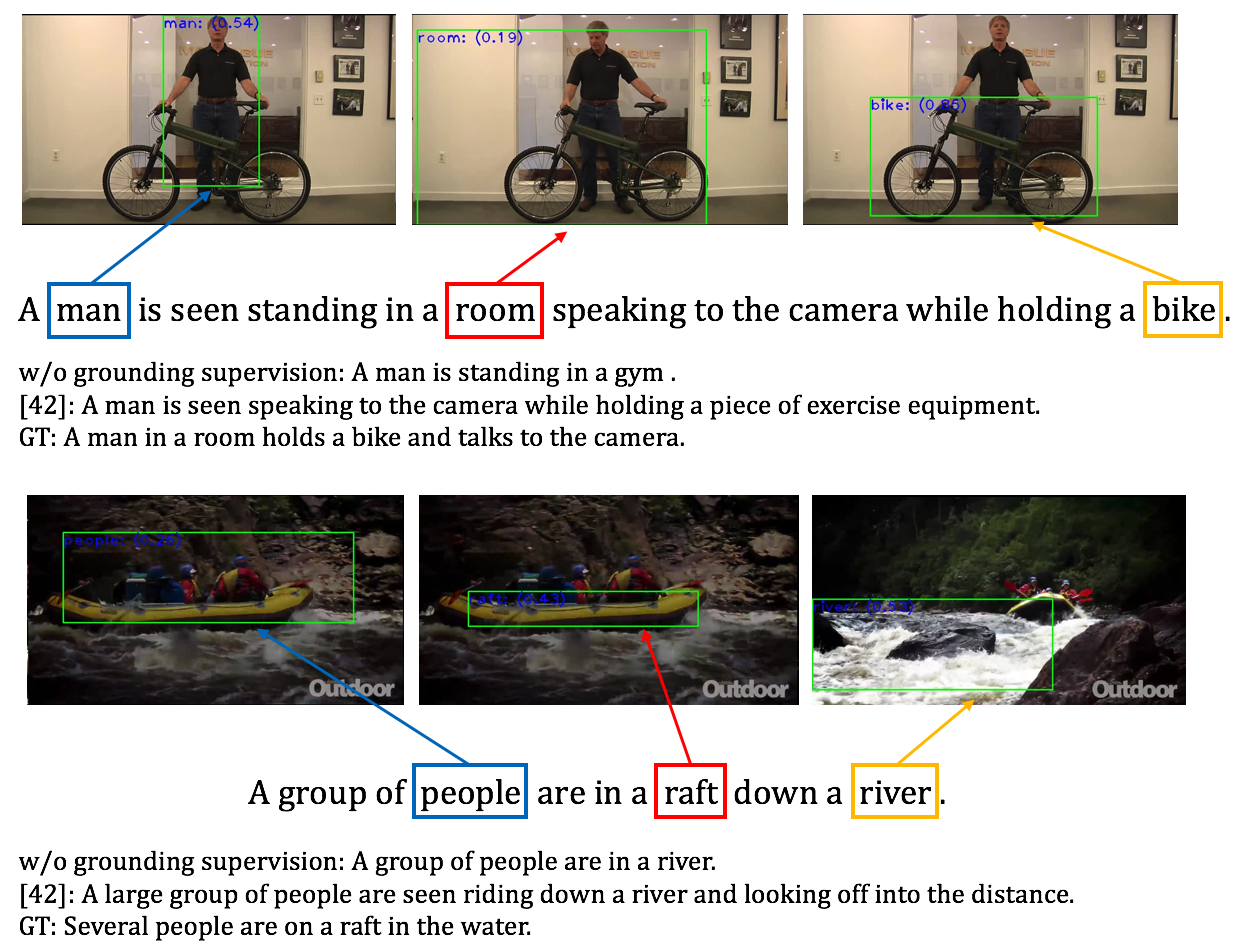

Note: [42] refers to the Masked Transformer.

Follow steps 1 to 3 in the Requirements section to install the required packages.

Simply run the following command to download all the data and pre-trained models (total 216GB):

bash tools/download_all.sh
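Since the download is large, it may be worth checking free disk space first. The following is a minimal sketch, assuming a Python 3 interpreter and that "216GB" means 216 × 10^9 bytes; the helper name `has_enough_space` is hypothetical and not part of this repo.

```python
import shutil

REQUIRED_GB = 216  # total download size quoted above


def has_enough_space(path=".", required_gb=REQUIRED_GB):
    """Return True if the filesystem holding `path` has at least `required_gb` free."""
    free_bytes = shutil.disk_usage(path).free
    # Assumption: "GB" is interpreted as 10**9 bytes.
    return free_bytes >= required_gb * 10**9


if __name__ == "__main__":
    print("enough space for the download:", has_enough_space("."))
```

Pass the directory where the data will actually land if it differs from the current one.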

Run the following evaluation command to test whether your environment is set up correctly:

python main.py --batch_size 100 --cuda --num_workers 6 --max_epoch 50 --inference_only \
    --start_from save/anet-sup-0.05-0-0.1-run1 --id anet-sup-0.05-0-0.1-run1 \
    --seq_length 20 --language_eval --eval_obj_grounding --obj_interact

(Optional) Run single-GPU training as a further check:

python main.py --batch_size 20 --cuda --checkpoint_path save/gvd_starter --id gvd_starter --language_eval

You can now skip to the Training and Validation section!

- Clone the repo recursively:

git clone --recursive git@github.com:facebookresearch/grounded-video-description.git

Make sure all the submodules densevid_eval and coco-caption are included.

- Install CUDA 9.0 and cuDNN v7.1. Later versions should work, but the conda env file may need to be updated (e.g., for PyTorch).

- Install Miniconda (either Miniconda2 or 3, version 4.6+). We recommend using a conda environment to install the required packages, including Python 2.7, PyTorch 1.1.0, etc.:

MINICONDA_ROOT=[to your Miniconda root directory]

conda env create -f cfgs/conda_env_gvd.yml --prefix $MINICONDA_ROOT/envs/gvd_pytorch1.1

conda activate gvd_pytorch1.1

- (Optional) If you choose not to use download_all.sh, be sure to install Java and download Stanford CoreNLP for SPICE (see here). Also, download the reference file and place it under coco-caption/annotations. Download Stanford CoreNLP 3.9.1 for grounding evaluation and place the uncompressed folder under the tools directory.
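A non-recursive clone leaves the submodule directories empty, which is easy to miss. The sketch below, assuming a Python 3 interpreter, reports which of the two submodules named in the cloning step are missing or empty. It searches by directory name rather than assuming where the submodules are mounted; `missing_submodules` is a hypothetical helper, not part of this repo.

```python
from pathlib import Path

SUBMODULES = ("densevid_eval", "coco-caption")  # names from the cloning step


def missing_submodules(repo_root=".", names=SUBMODULES):
    """Return the submodule names with no non-empty directory under repo_root."""
    root = Path(repo_root)
    missing = []
    for name in names:
        # Look for a directory with this name anywhere below the repo root.
        candidates = [p for p in root.rglob(name) if p.is_dir()]
        # A submodule counts as present if any candidate has at least one entry.
        if not any(any(c.iterdir()) for c in candidates):
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("missing or empty submodules:", missing_submodules("."))
```

If the list is non-empty, running `git submodule update --init --recursive` in the repo root normally fetches the missing content.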

Download the preprocessed annotation files from here, uncompress them, and place them under data/anet. Alternatively, you can reproduce them using the data from the ActivityNet-Entities repo and the preprocessing script prepro_dic_anet.py under prepro. Then download the ground-truth caption annotations (under our val/test splits) from here and place them under data/anet as well.

The region features and detections are available for download (feature and detection). The region feature file should be decompressed and placed under your feature directory. We refer to the region feature directory as feature_root in the code. The H5 region detection (proposal) file is referred to as proposal_h5 in the code.

The frame-wise appearance (with suffix _resnet.npy) and motion (with suffix _bn.npy) feature files are available here. We refer to this directory as seg_feature_root.
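After a large download, you may want to confirm that every segment has both feature files. This is a minimal sketch, assuming a Python 3 interpreter, that the files sit directly inside seg_feature_root, and that the two files for a segment share the same name prefix before the suffix. The layout is an assumption, and `unpaired_segments` is a hypothetical helper.

```python
from pathlib import Path

APPEARANCE_SUFFIX = "_resnet.npy"  # frame-wise appearance features
MOTION_SUFFIX = "_bn.npy"          # frame-wise motion features


def unpaired_segments(seg_feature_root):
    """Return name prefixes that have only one of the two feature files."""
    root = Path(seg_feature_root)
    appearance = {p.name[:-len(APPEARANCE_SUFFIX)]
                  for p in root.glob("*" + APPEARANCE_SUFFIX)}
    motion = {p.name[:-len(MOTION_SUFFIX)]
              for p in root.glob("*" + MOTION_SUFFIX)}
    # Symmetric difference: prefixes present in exactly one of the two sets.
    return sorted(appearance ^ motion)
```

An empty result means every appearance file has a matching motion file and vice versa; it does not verify the file contents.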

Other auxiliary files, such as the weights from the Detectron fc7 layer, are available here. Uncompress them and place them under the data directory.

Modify the config file cfgs/anet_res101_vg_feat_10x100prop.yml with the correct dataset and feature paths (or through symlinks). Link tools/anet_entities to your ANet-Entities dataset root location. Create new directories log and results under the root directory to save log and result files.
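The directory and symlink steps above can be sketched as follows, assuming a Python 3 interpreter run from the repo root. The function names are hypothetical; the paths log, results, and tools/anet_entities come from the paragraph above.

```python
import os


def prepare_workspace(repo_root="."):
    """Create the log and results directories if they are missing."""
    paths = []
    for name in ("log", "results"):
        path = os.path.join(repo_root, name)
        os.makedirs(path, exist_ok=True)  # no error if it already exists
        paths.append(path)
    return paths


def link_dataset(anet_entities_root, repo_root="."):
    """Point tools/anet_entities at the ANet-Entities dataset root."""
    link = os.path.join(repo_root, "tools", "anet_entities")
    if not os.path.lexists(link):  # leave an existing link or folder untouched
        os.makedirs(os.path.dirname(link), exist_ok=True)
        os.symlink(os.path.abspath(anet_entities_root), link)
    return link
```

Both functions are safe to call repeatedly. Editing the dataset and feature paths in the config file still has to be done by hand.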

Example commands for running an 8-GPU data-parallel job:

For supervised models (with self-attention):

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 python main.py --path_opt cfgs/anet_res101_vg_feat_10x100prop.yml \
    --batch_size $batch_size --cuda --checkpoint_path save/$ID --id $ID --mGPUs \
    --language_eval --w_att2 $w_att2 --w_grd $w_grd --w_cls $w_cls --obj_interact | tee log/$ID

For unsupervised models (without self-attention):

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 python main.py --path_opt cfgs/anet_res101_vg_feat_10x100prop.yml \
    --batch_size $batch_size --cuda --checkpoint_path save/$ID --id $ID --mGPUs \
    --language_eval | tee log/$ID

Arguments: batch_size=240, w_att2=0.05, w_grd=0, w_cls=0.1; ID is the model name.

(Optional) Remove --mGPUs to run in single-GPU mode.
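To make the difference between the two training variants explicit, here is a small sketch that assembles the argument list from the values above. It uses only flags that appear in the commands in this section. The function `build_train_command` and its parameter names are hypothetical, and the sketch leaves out the CUDA_VISIBLE_DEVICES prefix and the `tee` logging.

```python
def build_train_command(model_id, supervised=True, batch_size=240,
                        w_att2=0.05, w_grd=0, w_cls=0.1, multi_gpu=True):
    """Assemble the training command as an argument list (nothing is executed)."""
    cmd = ["python", "main.py",
           "--path_opt", "cfgs/anet_res101_vg_feat_10x100prop.yml",
           "--batch_size", str(batch_size), "--cuda",
           "--checkpoint_path", "save/" + model_id, "--id", model_id]
    if multi_gpu:
        cmd.append("--mGPUs")  # drop this flag for single-GPU mode
    cmd.append("--language_eval")
    if supervised:
        # Only the supervised command passes the loss weights and --obj_interact.
        cmd += ["--w_att2", str(w_att2), "--w_grd", str(w_grd),
                "--w_cls", str(w_cls), "--obj_interact"]
    return cmd


if __name__ == "__main__":
    print(" ".join(build_train_command("anet-sup-0.05-0-0.1-run1")))
```

The returned list can be passed to `subprocess.run` from the repo root, or printed and pasted into a shell.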

The pre-trained models can be downloaded from here (1.5GB). Make sure you uncompress the file under the save directory (create one under the root directory if it does not exist).

For supervised models (ID=anet-sup-0.05-0-0.1-run1):

(standard inference: language evaluation and localization evaluation on generated sentences)

python main.py --path_opt cfgs/anet_res101_vg_feat_10x100prop.yml --batch_size 100 --cuda \
    --num_workers 6 --max_epoch 50 --inference_only --start_from save/$ID --id $ID \
    --val_split $val_split --densecap_references $references --densecap_verbose --seq_length 20 \
    --language_eval --eval_obj_grounding --obj_interact \
    | tee log/eval-$val_split-$ID-beam$beam_size-standard-inference

(GT inference: localization evaluation on GT sentences)

python main.py --path_opt cfgs/anet_res101_vg_feat_10x100prop.yml --batch_size 100 --cuda \
    --num_workers 6 --max_epoch 50 --inference_only --start_from save/$ID --id $ID \
    --val_split $val_split --seq_length 40 --eval_obj_grounding_gt --obj_interact \
    | tee log/eval-$val_split-$ID-beam$beam_size-gt-inference

For unsupervised models (ID=anet-unsup-0-0-0-run1), simply remove the --obj_interact option.

Arguments: references="./data/anet/anet_entities_val_1.json ./data/anet/anet_entities_val_2.json", val_split='validation'. To evaluate on the test split, set val_split='testing', set references accordingly, and submit the object localization output files under results to the eval server.
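The two inference modes and the supervised/unsupervised switch differ only in a few flags. The sketch below spells that out, assuming Python 3.5+ and using only flags from the commands above. `build_eval_command` and the `mode` values "standard" and "gt" are hypothetical names, and the `tee` logging is omitted.

```python
def build_eval_command(model_id, val_split="validation", mode="standard",
                       references=("./data/anet/anet_entities_val_1.json",
                                   "./data/anet/anet_entities_val_2.json"),
                       supervised=True):
    """Assemble the inference command as an argument list (nothing is executed)."""
    if mode not in ("standard", "gt"):
        raise ValueError("mode must be 'standard' or 'gt'")
    cmd = ["python", "main.py",
           "--path_opt", "cfgs/anet_res101_vg_feat_10x100prop.yml",
           "--batch_size", "100", "--cuda", "--num_workers", "6",
           "--max_epoch", "50", "--inference_only",
           "--start_from", "save/" + model_id, "--id", model_id,
           "--val_split", val_split]
    if mode == "standard":
        # Language evaluation plus localization on generated sentences.
        cmd += ["--densecap_references", *references, "--densecap_verbose",
                "--seq_length", "20", "--language_eval", "--eval_obj_grounding"]
    else:
        # Localization evaluation on ground-truth sentences.
        cmd += ["--seq_length", "40", "--eval_obj_grounding_gt"]
    if supervised:
        cmd.append("--obj_interact")  # removed for unsupervised models
    return cmd
```

Each reference file is passed as a separate argument, which matches how the shell splits the unquoted `$references` variable.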

You need at least 9GB of free GPU memory for the evaluation.
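One way to check the memory requirement before launching is to query `nvidia-smi`. This is a minimal sketch, assuming `nvidia-smi` is on the PATH and reading "9GB" as 9 × 1024 MiB (an assumption; `nvidia-smi` reports MiB). The function names are hypothetical.

```python
import subprocess

REQUIRED_MIB = 9 * 1024  # assumption: "9GB" read as 9 GiB


def parse_free_mib(smi_output):
    """Parse one free-memory value (MiB) per non-empty line."""
    return [int(line) for line in smi_output.splitlines() if line.strip()]


def query_free_mib():
    """Ask nvidia-smi for the free memory of each GPU, in MiB."""
    out = subprocess.check_output(
        ["nvidia-smi", "--query-gpu=memory.free",
         "--format=csv,noheader,nounits"],
        universal_newlines=True)
    return parse_free_mib(out)


def gpus_with_enough_memory(free_mib, required_mib=REQUIRED_MIB):
    """Return positions (in nvidia-smi's listing order) of GPUs with enough free memory."""
    return [i for i, free in enumerate(free_mib) if free >= required_mib]
```

Calling `gpus_with_enough_memory(query_free_mib())` on the evaluation machine lists the usable GPUs. Note that `nvidia-smi` ordering can differ from CUDA device ordering on some systems.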

Please acknowledge the following paper if you use the code:

@inproceedings{zhou2019grounded,
  title={Grounded Video Description},
  author={Zhou, Luowei and Kalantidis, Yannis and Chen, Xinlei and Corso, Jason J and Rohrbach, Marcus},
  booktitle={CVPR},
  year={2019}
}

We thank Jiasen Lu for his Neural Baby Talk repo. We thank Chih-Yao Ma for his helpful discussions.

This project is licensed under the license found in the LICENSE file in the root directory of this source tree.

Portions of the source code are based on the Neural Baby Talk project.