U-GAT-IT

This is an implementation of U-GAT-IT: Unsupervised Generative Attentional Networks with Adaptive Layer-Instance Normalization for Image-to-Image Translation.
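The normalization named in the title, AdaLIN (Adaptive Layer-Instance Normalization), mixes an instance-normalized and a layer-normalized copy of the features with a learnable per-channel ratio ρ in [0, 1], then applies an adaptive scale γ and shift β. Below is a minimal NumPy sketch of that formula as described in the U-GAT-IT paper. It is not the code in this repository. The function name `adalin`, the array shapes and `eps` are assumptions for illustration. γ and β are passed in directly here, whereas the paper predicts them with fully connected layers. ρ is clipped in the forward pass for simplicity, whereas the paper clips it after each parameter update.

```python
import numpy as np


def adalin(x, gamma, beta, rho, eps=1e-5):
    """Hypothetical NumPy sketch of AdaLIN.

    x:     feature map, shape (N, C, H, W)
    gamma: adaptive scale, shape (N, C)
    beta:  adaptive shift, shape (N, C)
    rho:   per-channel mixing ratio, shape (C,)
    """
    # Instance-norm statistics: per sample and per channel, over H and W.
    mu_i = x.mean(axis=(2, 3), keepdims=True)
    var_i = x.var(axis=(2, 3), keepdims=True)
    x_in = (x - mu_i) / np.sqrt(var_i + eps)

    # Layer-norm statistics: per sample, over C, H and W together.
    mu_l = x.mean(axis=(1, 2, 3), keepdims=True)
    var_l = x.var(axis=(1, 2, 3), keepdims=True)
    x_ln = (x - mu_l) / np.sqrt(var_l + eps)

    # Blend the two normalized maps; rho is kept inside [0, 1].
    r = np.clip(rho, 0.0, 1.0).reshape(1, -1, 1, 1)
    mixed = r * x_in + (1.0 - r) * x_ln

    # Adaptive affine transform, broadcast over the spatial dimensions.
    return gamma[:, :, None, None] * mixed + beta[:, :, None, None]


rng = np.random.default_rng(0)
x = rng.normal(size=(2, 4, 8, 8))
out = adalin(x, gamma=np.ones((2, 4)), beta=np.zeros((2, 4)), rho=np.full(4, 0.9))
print(out.shape)  # (2, 4, 8, 8)
```

With ρ = 1 the output reduces to instance normalization and with ρ = 0 to layer normalization, which is what lets the network choose per channel how much global (layer) versus per-channel (instance) statistics to keep.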

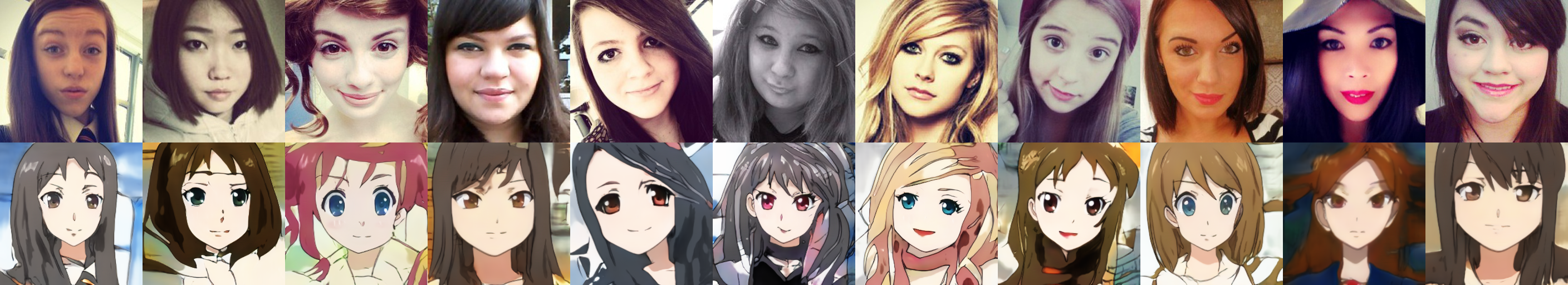

Cherry-picked examples

Notes

- The generator is slightly modified so that training fits on an 11 GB graphics card.

- Use `python train.py` for training, but set the right configs in `model.py` first.

- Use `generate.ipynb` for inference after training.

- You can download pretrained (selfie2anime) checkpoints and logs from here.

Credit

This code is based on the official implementations znxlwm/UGATIT-pytorch (PyTorch) and taki0112/UGATIT (TensorFlow).

Requirements

- PyTorch 1.3

- numpy 1.17

- tensorboard 1.15

- Pillow 6.1