MedTrinity-25M: A Large-scale Multimodal Dataset with Multigranular Annotations for Medicine

Yunfei Xie*, Ce Zhou*, Lang Gao*, Juncheng Wu*, Xianhang Li, Hong-Yu Zhou, Sheng Liu, Lei Xing, James Zou, Cihang Xie, Yuyin Zhou

- [🆕💥 August 31, 2024] A detailed tutorial for deploying MedTrinity is now available on Hugging Face. We apologize for any previous inconvenience.

- [📄💥 August 7, 2024] Our arXiv paper is released.

- [💾 July 21, 2024] Full dataset released.

- [💾 June 16, 2024] Demo dataset released.

Star 🌟 us if you think it is helpful!!

- Data processing: extracting essential information from collected data, including metadata integration to generate coarse captions, ROI locating, and medical knowledge collection.

- Multigranular textual description generation: using this information to prompt MLLMs to generate fine-grained captions.
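As a rough illustration of how the two steps fit together, the sketch below combines a coarse caption, an ROI box, and retrieved medical knowledge into a single text prompt for an MLLM. Every name here (`ProcessedSample`, `build_prompt`, the field layout, and the prompt wording) is hypothetical and is not taken from the MedTrinity-25M codebase; the sample values are placeholders.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ProcessedSample:
    """Output of the data-processing step (hypothetical layout)."""
    coarse_caption: str                                  # built from integrated metadata
    roi_box: Tuple[int, int, int, int]                   # (x_min, y_min, x_max, y_max) in pixels
    knowledge: List[str] = field(default_factory=list)   # collected medical knowledge


def build_prompt(sample: ProcessedSample) -> str:
    """Assemble a text prompt asking an MLLM for a fine-grained caption."""
    x0, y0, x1, y1 = sample.roi_box
    lines = [
        f"Coarse caption: {sample.coarse_caption}",
        f"Region of interest (pixels): ({x0}, {y0}) to ({x1}, {y1})",
    ]
    # Only add the knowledge section when something was collected.
    if sample.knowledge:
        lines.append("Relevant medical knowledge:")
        lines.extend(f"- {fact}" for fact in sample.knowledge)
    lines.append("Describe the image in detail, focusing on the region of interest.")
    return "\n".join(lines)


# Placeholder values for illustration only.
sample = ProcessedSample(
    coarse_caption="Chest X-ray, frontal view.",
    roi_box=(120, 80, 260, 210),
    knowledge=["Illustrative fact about the finding goes here."],
)
print(build_prompt(sample))
```

Keeping the processed fields in one structure makes it easy to see which pieces of information reach the model; the actual prompt templates used for the dataset may differ substantially.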

You can view detailed statistics of MedTrinity-25M from this link.

Note: sometimes a single image contains multiple biological structures. The data only reflect the number of samples in which a specific biological structure is present.
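One consequence is that per-structure counts can add up to more than the number of images. The toy example below uses made-up image IDs and structure labels (not real dataset entries) to show the counting rule:

```python
from collections import Counter

# Hypothetical annotations: each image lists the structures it contains.
image_structures = {
    "img_001": ["lung", "heart"],
    "img_002": ["lung"],
    "img_003": ["liver"],
}

# For each structure, count the number of images in which it appears.
# set() guards against a structure being listed twice for the same image.
per_structure = Counter(
    structure
    for structures in image_structures.values()
    for structure in set(structures)
)

print(dict(per_structure))
print("images:", len(image_structures))
print("sum of per-structure counts:", sum(per_structure.values()))
```

Here three images yield four per-structure counts, because `img_001` contributes to both `lung` and `heart`.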

| Dataset | 🤗 Huggingface Hub |
|---|---|
| MedTrinity-25M | UCSC-VLAA/MedTrinity-25M |
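A minimal sketch of reading a few records with the Hugging Face `datasets` library is shown below. The repository ID comes from the table above; the configuration name `25M_demo` and the `train` split are assumptions that should be checked against the dataset card, and access may require logging in to Hugging Face. The helper names (`load_medtrinity`, `preview`, `show_first_records`) are illustrative.

```python
from itertools import islice
from typing import Any, Dict, Iterable, List

DATASET_ID = "UCSC-VLAA/MedTrinity-25M"  # repository ID from the table above


def load_medtrinity(config_name: str, streaming: bool = True):
    """Open one configuration of the dataset.

    Streaming avoids downloading everything before the first record is read.
    The "train" split name is an assumption; check the dataset card.
    """
    from datasets import load_dataset  # lazy import; requires `pip install datasets`

    return load_dataset(DATASET_ID, config_name, split="train", streaming=streaming)


def preview(records: Iterable[Dict[str, Any]], n: int = 3) -> List[Dict[str, Any]]:
    """Return the first n records of any iterable of records."""
    return list(islice(records, n))


def show_first_records() -> None:
    """Print the field names of the first few records (needs network access)."""
    ds = load_medtrinity("25M_demo")  # config name is an assumption
    for record in preview(ds):
        print(sorted(record.keys()))
```

Calling `show_first_records()` prints the field names of the first three records, which is a quick way to confirm the schema before writing any processing code.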

The following steps assume a Linux system.

- Clone this repository and navigate to the folder

git clone https://github.com/UCSC-VLAA/MedTrinity-25M.git

- Install Package

conda create -n llava-med++ python=3.10 -y

conda activate llava-med++

pip install --upgrade pip # enable PEP 660 support

pip install -e .

- Install additional packages for training cases

pip install -e ".[train]"

pip install flash-attn --no-build-isolation

pip install git+https://github.com/bfshi/scaling_on_scales.git

pip install multimedeval

- Upgrade to the latest code base

git pull

pip install -e .

# if you see some import errors when you upgrade,

# please try running the command below (without #)

# pip install flash-attn --no-build-isolation --no-cache-dir

The following table provides an overview of the available models in our zoo. For each model, you can find links to its Hugging Face page or Google Drive folder.

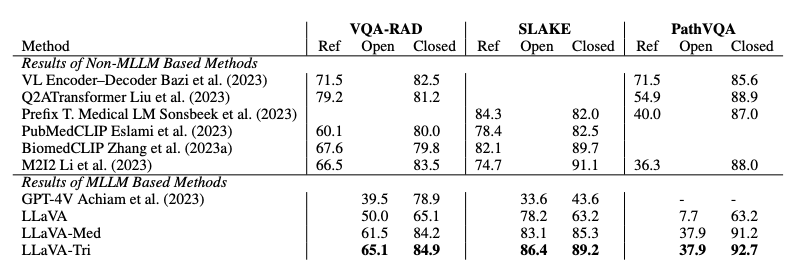

| Model Name | Link | Summary |
|---|---|---|
| LLaVA-Med++ (VQA-RAD) | Google Drive | Pretrained on LLaVA-Med data and MedTrinity-25M (specifically the VQA-RAD training set subset), then fine-tuned on the VQA-RAD training set. |
| LLaVA-Med++ (SLAKE) | Google Drive | Pretrained on LLaVA-Med data and MedTrinity-25M (specifically the SLAKE training set subset), then fine-tuned on the SLAKE training set. |
| LLaVA-Med++ (PathVQA) | Google Drive | Pretrained on LLaVA-Med data and MedTrinity-25M (specifically the PathVQA training set subset), then fine-tuned on the PathVQA training set. |
| LLaVA-Med-Captioner | Hugging Face | Captioner for generating multigranular annotations, fine-tuned on MedTrinity-Instruct-200K (coming soon). |

First, download the base model LLaVA-Meta-Llama-3-8B-Instruct-FT-S2, then download the stage1 and stage2 datasets from LLaVA-Med.

- Pre-train

# stage1 training

cd MedTrinity-25M

bash ./scripts/med/llava3_med_stage1.sh

# stage2 training

bash ./scripts/med/llava3_med_stage2.sh

- Finetune

cd MedTrinity-25M

bash ./scripts/med/llava3_med_finetune.sh

- Eval

First, download the corresponding weights from the Model Zoo and update the weight path in the evaluation script. Then run:

cd MedTrinity-25M

bash ./scripts/med/llava3_med_eval_batch_vqa_rad.sh

If you find MedTrinity-25M useful for your research and applications, please cite using this BibTeX:

@misc{xie2024medtrinity25mlargescalemultimodaldataset,
  title={MedTrinity-25M: A Large-scale Multimodal Dataset with Multigranular Annotations for Medicine},
  author={Yunfei Xie and Ce Zhou and Lang Gao and Juncheng Wu and Xianhang Li and Hong-Yu Zhou and Sheng Liu and Lei Xing and James Zou and Cihang Xie and Yuyin Zhou},
  year={2024},
  eprint={2408.02900},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2408.02900},
}

- We thank the Microsoft Accelerate Foundation Models Research Program, the OpenAI Researcher Access Program, TPU Research Cloud (TRC) program, Google Cloud Research Credits program, AWS Cloud Credit for Research program, and Lambda Cloud for supporting our computing needs.

- We thank the authors of LLaVA-pp, LLaVA-Med, and LLaVA for the codebases we built upon, and the authors of our base model LLaVA-Meta-Llama-3-8B-Instruct-FT-S2 for its strong language capabilities!