Yonggan Fu, Wuyang Chen, Haotao Wang, Haoran Li, Yingyan Lin, Zhangyang Wang

Accepted at ICML 2020 [Paper Link].

We propose the AutoGAN-Distiller (AGD) framework, one of the first AutoML frameworks dedicated to GAN compression and also one of the earliest works to explore AutoML for GANs.

- AGD is built on a specially designed search space of efficient generator building blocks, which draws on knowledge from state-of-the-art GANs for different tasks.

- It performs differentiable neural architecture search under a target compression ratio (i.e., a computational resource constraint). Knowledge distillation guides the search so that the original GAN's generation quality is preserved.

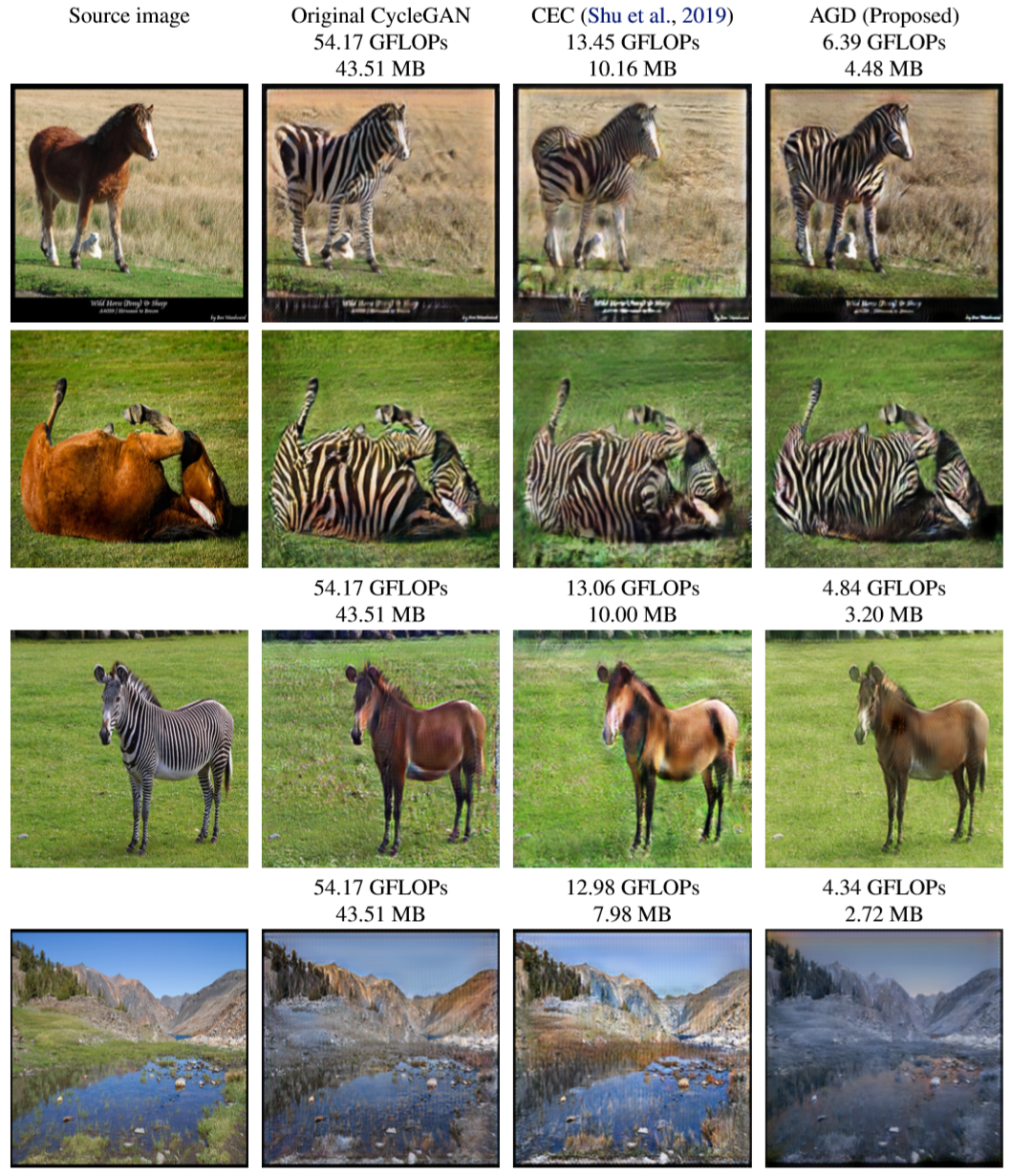

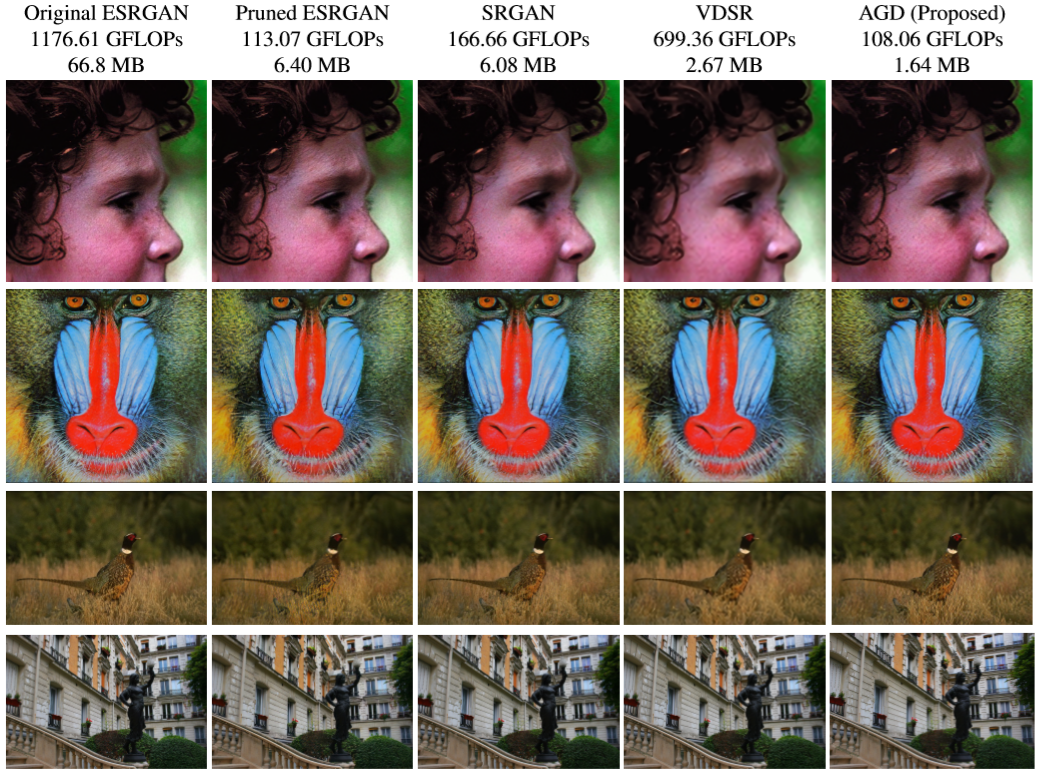

- We demonstrate AGD on two representative mobile-based GAN applications: unpaired image translation (using a CycleGAN), and super resolution (using an encoder-decoder GAN).
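To make "differentiable search under a compression ratio" concrete, here is a generic DARTS-style sketch. It is not AGD's exact formulation; the search space, loss weighting and budget handling are defined in the paper and code.

The sketch makes three assumptions:

- Each searchable layer picks among candidate ops with known MAC counts.
- A softmax over architecture weights (alphas) turns that choice into an expected cost that stays differentiable in the alphas.
- The search loss adds that cost, relative to a target budget, to a distillation loss.

All function names are hypothetical.

```python
import math


def conv_macs(c_in, c_out, k, h_out, w_out, groups=1):
    """Multiply-accumulate count of a k x k convolution (bias ignored)."""
    return c_out * h_out * w_out * (c_in // groups) * k * k


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def expected_macs(alphas, op_macs):
    """Cost of one searchable layer: the softmax(alphas)-weighted average of
    its candidate ops' costs, which is differentiable in the alphas."""
    return sum(p * c for p, c in zip(softmax(alphas), op_macs))


def search_objective(distill_loss, layers, target_macs, lam=1.0):
    """Hypothetical search loss: distillation loss plus lam times the expected
    cost relative to the target budget. Returns (loss, expected cost).

    layers: list of (alphas, op_macs) pairs, one per searchable layer.
    """
    cost = sum(expected_macs(alphas, op_macs) for alphas, op_macs in layers)
    return distill_loss + lam * cost / target_macs, cost
```

For example, consider a 64-channel layer at 64x64 resolution:

- A full 3x3 convolution costs conv_macs(64, 64, 3, 64, 64).
- A depthwise-separable alternative costs conv_macs(64, 64, 3, 64, 64, groups=64) + conv_macs(64, 64, 1, 64, 64).

In a real search the alphas would be trainable tensors (e.g. in PyTorch), so gradients of the cost term push the architecture toward cheaper ops. Plain Python here only shows the arithmetic.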


Datasets:

- Unpaired image translation (horse2zebra, zebra2horse, summer2winter, winter2summer): Unpaired-dataset
- Super resolution training (DIV2K + Flickr2K): SR-training-dataset
- Super resolution evaluation (Set5, Set14, BSD100, Urban100): SR-eval-dataset

AGD_ST and AGD_SR contain the source code for the unpaired image translation task and the super resolution task, respectively. The code for pretraining, search, training from scratch, and evaluation is in the AGD_ST/search and AGD_SR/search directories.

We use AGD_ST/search as an example. The configuration files are:

- config_search.py for pretraining and search
- config_train.py for training from scratch
- config_eval.py for evaluation

In all three config files, specify the target dataset C.dataset and change the dataset path C.dataset_path to the actual path on your machine.
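The same dataset settings must be repeated in three files, so a mismatch is easy to introduce. Below is a minimal sketch of a consistency check. read_setting and check_consistent are hypothetical names, not part of AGD. The sketch assumes each setting is a top-level line of the form C.<name> = <value>.

```python
import ast
import re


def read_setting(source, key):
    """Return the value assigned to C.<key> in a config file's source text.

    Assumes the setting sits on its own top-level line as `C.<key> = <value>`.
    Python literals are parsed; anything else is returned as raw text.
    Returns None if the setting is not found.
    """
    pattern = rf"^C\.{re.escape(key)}\s*=\s*(.+?)\s*$"
    match = re.search(pattern, source, flags=re.MULTILINE)
    if match is None:
        return None
    raw = match.group(1)
    try:
        return ast.literal_eval(raw)
    except (ValueError, SyntaxError):
        return raw


def check_consistent(sources, key):
    """Raise ValueError if the config sources disagree on C.<key>.

    sources: mapping from file name to that file's source text.
    """
    values = {name: read_setting(text, key) for name, text in sources.items()}
    if len(set(map(repr, values.values()))) > 1:
        raise ValueError(f"C.{key} differs across configs: {values}")
    return values
```

For example, from AGD_ST/search you could do the following:

1. Read config_search.py, config_train.py and config_eval.py with pathlib.Path(name).read_text().
2. Call check_consistent(sources, "dataset").
3. Call check_consistent(sources, "dataset_path").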

See env.yml for the complete conda environment. Create a new conda environment:

conda env create -f env.yml

conda activate pytorch

In particular, if the thop package runs into version conflicts, pin the thop version:

pip install thop==0.0.31.post1912272122
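You can compare the installed version with the pinned one to confirm that the pin took effect. Below is a minimal sketch using the standard library's importlib.metadata, which requires Python 3.8 or newer. The helper names are hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# Version pinned in the pip command above.
PINNED_THOP = "0.0.31.post1912272122"


def installed_thop_version():
    """Return the installed thop version string, or None if thop is absent."""
    try:
        return version("thop")
    except PackageNotFoundError:
        return None


def thop_matches_pin(pinned=PINNED_THOP):
    """True if the installed thop is exactly the pinned release."""
    return installed_thop_version() == pinned
```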

- Switch to the search directory:

cd AGD_ST/search

- Step 1 (pretrain): Set C.pretrain = True in config_search.py, then start pretraining:

python train_search.py

Checkpoints from pretraining are saved to ./ckpt/pretrain.

- Step 2 (search): Set C.pretrain = 'ckpt/pretrain' in config_search.py, then start the search:

python train_search.py

- Step 3 (train from scratch): Set C.load_path = 'ckpt/search' in config_train.py, then train the derived architecture from scratch:

python train.py

- Step 4 (evaluation): Set C.load_path = 'ckpt/search' and C.ckpt = 'ckpt/finetune/weights.pt' in config_eval.py, then evaluate on the test dataset:

python eval.py

The resulting images are saved to ./output/eval/.
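Every config edit in the workflow above is a single-line assignment of the form C.<name> = <value>. Below is a minimal sketch of a helper that applies such an edit programmatically. set_config_value and patch_config_file are hypothetical, not part of AGD.

The sketch assumes each setting is a top-level line. It overwrites the whole right-hand side, including any trailing comment, and replaces every matching line.

```python
import re
from pathlib import Path


def set_config_value(source, key, value_expr):
    """Return `source` with every top-level line `C.<key> = ...` rewritten
    to `C.<key> = <value_expr>`.

    value_expr is inserted verbatim as Python source, e.g. "True" or
    "'ckpt/pretrain'". Raises KeyError if C.<key> is not assigned.
    """
    pattern = re.compile(rf"^(C\.{re.escape(key)}\s*=\s*).*$", flags=re.MULTILINE)
    # A function replacement avoids backslash escapes in value_expr being interpreted.
    new_source, count = pattern.subn(lambda m: m.group(1) + value_expr, source)
    if count == 0:
        raise KeyError(f"C.{key} not found")
    return new_source


def patch_config_file(path, key, value_expr):
    """Apply set_config_value to a config file in place."""
    path = Path(path)
    path.write_text(set_config_value(path.read_text(), key, value_expr))
```

For example, before the search stage you could call patch_config_file("config_search.py", "pretrain", "'ckpt/pretrain'") and then run python train_search.py.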

For the super resolution task (AGD_SR), please download the checkpoint of the original ESRGAN (the teacher model) from pretrained ESRGAN and move it to the directory AGD_SR/search/ESRGAN/.

Step 3 is split into two stages: first pretrain the derived architecture with only the content loss, then finetune it with the perceptual loss:

- Pretrain: Set C.pretrain = True in config_train.py.

- Finetune: Set C.pretrain = 'ckpt/finetune_pretrain/weights.pt' in config_train.py.
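The two stages differ only in which loss drives training. Below is a minimal sketch on flat lists of pixel values. The first stage uses a pixel-wise content loss (L1 here); the second uses a perceptual loss computed on features from a fixed extractor. In ESRGAN that extractor is VGG; here it is any callable passed in.

This README does not say whether finetuning also keeps a content term or adds an adversarial term. The optional content_weight is therefore hypothetical, as are the function names, and the MSE distance on features is only one possible choice.

```python
def l1_loss(a, b):
    """Mean absolute error, a common pixel-wise content loss."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def mse_loss(a, b):
    """Mean squared error, used here as the distance between feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def stage_loss(stage, sr, hr, features, content_weight=0.0):
    """Loss for the two stages of step 3.

    stage: "pretrain" (content loss only) or "finetune" (perceptual loss).
    sr, hr: super-resolved output and ground-truth image as flat lists.
    features: stand-in for a fixed feature extractor (e.g. VGG in ESRGAN).
    content_weight: hypothetical optional content term kept during finetuning.
    """
    content = l1_loss(sr, hr)
    if stage == "pretrain":
        return content
    if stage == "finetune":
        return mse_loss(features(sr), features(hr)) + content_weight * content
    raise ValueError(f"unknown stage: {stage!r}")
```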

Pretrained models are provided at pretrained AGD.

To evaluate the pretrained models, please copy the network architecture definition and pretrained weights to the corresponding directories:

cp arch.pt ckpt/search/

cp weights.pt ckpt/finetune/

Then run the evaluation as described in step 4.
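The two cp commands above can also be scripted. Below is a minimal sketch, assuming:

- arch.pt and weights.pt were downloaded into one folder;
- the task's search directory (for example AGD_ST/search) is used as the base.

install_pretrained is a hypothetical helper. Its destinations match the C.load_path ('ckpt/search') and C.ckpt ('ckpt/finetune/weights.pt') values used for evaluation. Unlike plain cp, it creates the checkpoint folders if they do not exist yet.

```python
import shutil
from pathlib import Path


def install_pretrained(download_dir, search_dir="."):
    """Copy arch.pt to ckpt/search/ and weights.pt to ckpt/finetune/ under
    search_dir, creating the folders if needed. Returns the copied paths."""
    download_dir = Path(download_dir)
    search_dir = Path(search_dir)
    targets = {
        "arch.pt": search_dir / "ckpt" / "search",
        "weights.pt": search_dir / "ckpt" / "finetune",
    }
    copied = []
    for name, dest in targets.items():
        dest.mkdir(parents=True, exist_ok=True)
        # copy2 also preserves file metadata such as modification time.
        copied.append(Path(shutil.copy2(download_dir / name, dest / name)))
    return copied
```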

Please also check our concurrent work on a unified optimization framework combining model distillation, channel pruning and quantization for GAN compression:

Haotao Wang, Shupeng Gui, Haichuan Yang, Ji Liu, and Zhangyang Wang. "All-in-One GAN Compression by Unified Optimization." ECCV, 2020. (Spotlight) [pdf] [code]