HyperCUT: Video Sequence from a Single Blurry Image using Unsupervised Ordering (CVPR'23)

VinAI Research, Vietnam

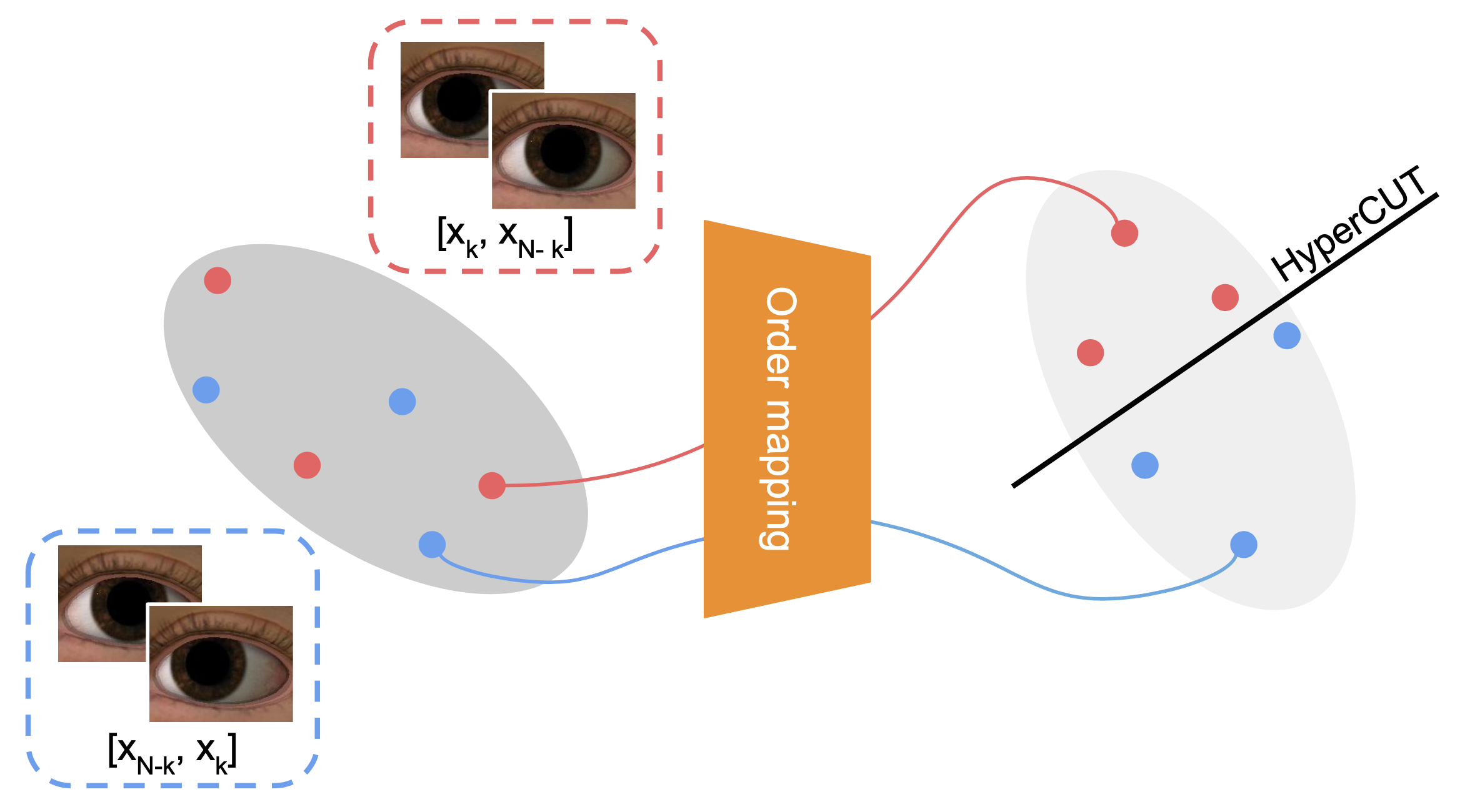

Abstract: We consider the challenging task of training models for image-to-video deblurring, which aims to recover a sequence of sharp images corresponding to a given blurry image input. A critical issue disturbing the training of an image-to-video model is the ambiguity of the frame ordering since both the forward and backward sequences are plausible solutions. This paper proposes an effective self-supervised ordering scheme that allows training high-quality image-to-video deblurring models. Unlike previous methods that rely on order-invariant losses, we assign an explicit order for each video sequence, thus avoiding the order-ambiguity issue. Specifically, we map each video sequence to a vector in a latent high-dimensional space so that there exists a hyperplane such that for every video sequence, the vectors extracted from it and its reversed sequence are on different sides of the hyperplane. The side of the vectors will be used to define the order of the corresponding sequence. Last but not least, we propose a real-image dataset for the image-to-video deblurring problem that covers a variety of popular domains, including face, hand, and street. Extensive experimental results confirm the effectiveness of our method.

Details of the model architecture and experimental results can be found in our paper:

@inproceedings{dangpb2023hypercut,
  author={Bang-Dang Pham and Phong Tran and Anh Tran and Cuong Pham and Rang Nguyen and Minh Hoai},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  title={HyperCUT: Video Sequence from a Single Blurry Image using Unsupervised Ordering},
  year={2023}
}

Please CITE our paper whenever this repository is used to help produce published results or incorporated into other software.

- Getting Started

- Datasets

- HyperCUT Ordering

- Deblurring Model

- Results

- Acknowledgments

- References

- Contacts

Prerequisites:

- Python >= 3.7

- PyTorch >= 1.9.0

- CUDA >= 10.0

Install dependencies:

git clone https://github.com/VinAIResearch/HyperCUT.git

cd HyperCUT

conda create -n hypercut python=3.9

conda activate hypercut

pip install -r requirements.txt

You can download our proposed RB2V dataset by running the following script:

chmod +x ./dataset/download_RB2V.sh

bash ./dataset/download_RB2V.sh

Table 1: Statistics of our dataset

| Dataset | Train | Test |
|---|---|---|
| RB2V-Street | 9000 | 2053 |
| RB2V-Face | 8000 | 2157 |
| RB2V-Hand | 12000 | 4722 |

By downloading this dataset, USER agrees:

- to use the dataset for research or educational purposes only.

- to not distribute the dataset or part of the dataset in any original or modified form.

- and to cite our paper whenever the dataset is used to help produce published results.

Download the REDS and B-Aist++ datasets, unzip them into the ./dataset folder, and organize each one as follows:

root
├── 000000
│   ├── blur
│   │   ├── 0000.png
│   │   └── ...
│   └── sharp
│       ├── 0000_1.png
│       ├── 0000_2.png
│       ├── ...
│       ├── 0000_6.png
│       └── 0000_7.png
├── 000001
│   ├── blur
│   │   ├── 0000.png
│   │   └── ...
│   └── sharp
│       ├── 0000_1.png
│       ├── 0000_2.png
│       ├── ...
│       ├── 0000_6.png
│       └── 0000_7.png
├── ...
└── metadata.json

where root is the name of the dataset. A metadata.json file is required for each dataset. You can create it yourself, but it must follow this format:

{

"name": "Street",

"frame_per_seq": 7,

"data": [

{

"id": "00000/0000",

"order": "ignore",

"partition": "train",

"blur_path": "00000/blur/0000.png", #path_to_image

"frame001_path": "00000/sharp/0000_1.png",

"frame002_path": "00000/sharp/0000_2.png",

"frame003_path": "00000/sharp/0000_3.png",

"frame004_path": "00000/sharp/0000_4.png",

"frame005_path": "00000/sharp/0000_5.png",

"frame006_path": "00000/sharp/0000_6.png",

"frame007_path": "00000/sharp/0000_7.png"

},

{

...

}

]

}

In this format, the order attribute takes one of three values (ignore, reverse, random) that define the order of the sharp frames. HyperCUT assigns either ignore or reverse to each sample (see the HyperCUT Ordering section for details).
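If your data already follows the directory layout above, the metadata file can be generated rather than written by hand. The following is a minimal sketch, not part of the repository: the helper names `build_metadata` and `write_metadata` are hypothetical, and it assumes that every blurry image `blur/<stem>.png` has sharp frames named `sharp/<stem>_1.png` through `sharp/<stem>_7.png` and that all samples belong to one partition.

```python
import json
from pathlib import Path


def build_metadata(root, name, partition="train", frames_per_seq=7):
    """Scan root/<sequence>/blur/*.png and return a metadata dictionary.

    Assumption: sharp frames live in root/<sequence>/sharp/ and are named
    <stem>_<k>.png for k = 1..frames_per_seq.
    """
    root = Path(root)
    samples = []
    for seq_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        blur_dir = seq_dir / "blur"
        if not blur_dir.is_dir():
            continue  # skip folders that do not follow the layout
        for blur in sorted(blur_dir.glob("*.png")):
            sample = {
                "id": f"{seq_dir.name}/{blur.stem}",
                "order": "ignore",  # default value before HyperCUT assigns an order
                "partition": partition,
                "blur_path": f"{seq_dir.name}/blur/{blur.name}",
            }
            for k in range(1, frames_per_seq + 1):
                sharp = seq_dir / "sharp" / f"{blur.stem}_{k}.png"
                if not sharp.exists():
                    raise FileNotFoundError(sharp)
                sample[f"frame{k:03d}_path"] = f"{seq_dir.name}/sharp/{sharp.name}"
            samples.append(sample)
    return {"name": name, "frame_per_seq": frames_per_seq, "data": samples}


def write_metadata(root, name, **kwargs):
    """Write metadata.json into the dataset root and return its path."""
    out = Path(root) / "metadata.json"
    out.write_text(json.dumps(build_metadata(root, name, **kwargs), indent=2))
    return out
```

For example, `write_metadata("./dataset/Street", "Street")` would create `./dataset/Street/metadata.json` with every sample set to the default order `ignore`.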

Before training HyperCUT, set the order attribute of every sample in the metadata.json file to ignore (the default), then use the following script to train the model:

python train_hypercut.py --dataset_name dataname --metadata_root path/to/metadata.json

You can run this script to evaluate the hit and con ratios reported in our paper:

python test_hypercut.py --dataset_name dataname \
    --metadata_root path/to/metadata.json \
    --pretrained_path path/to/pretrained_HyperCUT.pth

After training HyperCUT, you can use the pretrained model to generate a new order for each sample with this script:

python generate_order.py --dataset_name dataname \
    --metadata_root path/to/metadata.json \
    --save_path path/to/generated_metadata.json \
    --pretrained_path path/to/pretrained_HyperCUT.pth

We also provide pretrained models for our proposed datasets: RB2V-Street, RB2V-Hand, and RB2V-Face.
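The ordering idea can be illustrated with a toy example. The sketch below is not the repository's implementation: `embed` is a hand-written, hypothetical stand-in for the learned HyperCUT network, chosen so that reversing a sequence negates its vector, and the mapping of the positive side of the hyperplane to `ignore` (keep the stored order) is an assumption made for illustration.

```python
def embed(sequence):
    """Toy antisymmetric embedding (stand-in for the learned network).

    `sequence` is a list of frames, each frame a flat list of floats.
    Frame i is weighted by its offset from the temporal center, so the
    reversed sequence maps to the negated vector.
    """
    n = len(sequence)
    center = (n - 1) / 2.0
    dim = len(sequence[0])
    return [
        sum((i - center) * frame[d] for i, frame in enumerate(sequence))
        for d in range(dim)
    ]


def side(vector, normal, bias=0.0):
    """Value of normal . vector + bias; its sign tells the side of the hyperplane."""
    return sum(v * w for v, w in zip(vector, normal)) + bias


def assign_order(sequence, normal):
    """Return 'ignore' to keep the stored order or 'reverse' to flip it.

    With a hyperplane through the origin, a sequence and its reversal land
    on opposite sides (unless the vector lies exactly on the hyperplane).
    """
    return "ignore" if side(embed(sequence), normal) >= 0 else "reverse"


# A 3-frame sequence that brightens over time, and its reversal.
seq = [[0.0, 0.0], [1.0, 2.0], [2.0, 4.0]]
print(assign_order(seq, [1.0, 0.0]))        # ignore
print(assign_order(seq[::-1], [1.0, 0.0]))  # reverse
```

Because both directions of the same clip receive opposite labels, applying the label always yields one canonical order, which is what removes the forward/backward ambiguity during training.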

You can train deblurring networks using train_blur2vid.py. For example:

# Train baseline model
python train_blur2vid.py --dataset_name dataname \
    --metadata_root path/to/generated_metadata.json \
    --batch_size 8 \
    --backbone Jin \
    --loss_type order_inv \
    --target_frames 1 2 3 4 5 6 7

# Train baseline + HyperCUT model
python train_blur2vid.py --dataset_name dataname \
    --metadata_root path/to/generated_metadata.json \
    --batch_size 8 \
    --backbone Jin \
    --loss_type hypercut \
    --hypercut_path path/to/pretrained_HyperCUT.pth \
    --target_frames 1 2 3 4 5 6 7

In this project, we offer configurable arguments for training different baselines, either with their original settings or with our HyperCUT regularization. The key arguments to consider are:

- backbone: The current version of the code includes two baseline methods, those proposed by Jin et al. [1] and Purohit et al. [2], located under ./models/backbones/. Select one by setting backbone to Jin or Purohit, respectively.

- loss_type: We implement the three loss functions described in our main paper: Naive loss (naive), Order-Invariant loss (order_inv), and our novel HyperCUT regularization (hypercut). If you use the HyperCUT method, make sure hypercut_path is specified correctly, as shown in the example commands above.
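To see why the choice of loss matters, consider a prediction that is perfect except for being in reverse temporal order. The sketch below is a simplified illustration and not the code behind `--loss_type`: the order-invariant variant shown here simply takes the minimum over both target orders, which is an assumption and not necessarily the formulation used in the paper or by Jin et al. [1]. The HyperCUT regularization depends on the pretrained network and is not reproduced here.

```python
def mse(a, b):
    """Mean squared error between two sequences of flat frames."""
    total, count = 0.0, 0
    for frame_a, frame_b in zip(a, b):
        for x, y in zip(frame_a, frame_b):
            total += (x - y) ** 2
            count += 1
    return total / count


def naive_loss(pred, target):
    """Compares frames position by position; penalizes a reversed prediction."""
    return mse(pred, target)


def order_invariant_loss(pred, target):
    """Simplified order-invariant loss: best match over both target orders."""
    return min(mse(pred, target), mse(pred, target[::-1]))


gt = [[0.0], [1.0], [2.0]]
reversed_pred = gt[::-1]  # plausible motion, opposite direction

print(naive_loss(reversed_pred, gt) > 0)          # True
print(order_invariant_loss(reversed_pred, gt))    # 0.0
```

The naive loss treats the reversed prediction as an error even though it is an equally valid explanation of the blur, while the order-invariant loss accepts either direction.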

As with training, you can evaluate the deblurring model in two configurations:

# Evaluate baseline model
python test_blur2vid.py --dataset_name dataname \
    --metadata_root path/to/generated_metadata.json \
    --batch_size 8 \
    --backbone Jin \
    --target_frames 1 2 3 4 5 6 7

# Evaluate baseline + HyperCUT model
python test_blur2vid.py --dataset_name dataname \
    --metadata_root path/to/generated_metadata.json \
    --batch_size 8 \
    --backbone Jin \
    --hypercut_path path/to/pretrained_HyperCUT.pth \
    --target_frames 1 2 3 4 5 6 7

If you want to run inference with the Blur2Vid model, we provide sample inference code that demonstrates the process:

# Sample instruction for inference with Jin backbone
python inference.py --backbone Jin \
    --target_frames 1 2 3 4 5 6 7 \
    --pretrained_path path/to/pretrained_Blur2Vid.pth \
    --blur_path path/to/blurry_image

To ensure a fair evaluation, for each metric we take the maximum of the values obtained from the forward and backward predictions. We denote these metrics with the prefix "p" (e.g., pPSNR).
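The "p" metrics can be sketched as follows. This is an illustrative reading of the rule above, not the evaluation code in the repository: it assumes pixel values in [0, 1], frames given as flat lists, and that the direction is chosen once per sequence by the higher mean PSNR. The function names `psnr` and `p_psnr` are hypothetical.

```python
import math


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio between two flat frames."""
    err = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_val ** 2 / err)


def p_psnr(pred_seq, gt_seq, max_val=1.0):
    """Per-frame PSNR for the better of the forward and backward orders.

    Assumption: the direction is picked per sequence by mean PSNR.
    """
    forward = [psnr(p, g, max_val) for p, g in zip(pred_seq, gt_seq)]
    backward = [psnr(p, g, max_val) for p, g in zip(pred_seq[::-1], gt_seq)]
    mean_forward = sum(forward) / len(forward)
    mean_backward = sum(backward) / len(backward)
    return forward if mean_forward >= mean_backward else backward


gt = [[0.0, 0.0], [0.5, 0.5], [1.0, 1.0]]
pred = [[1.0, 0.9], [0.5, 0.4], [0.0, 0.1]]  # close to gt, but reversed in time
scores = p_psnr(pred, gt)  # scored against the reversed prediction
```

Here the prediction is accurate but runs backward in time, so the backward comparison is the one that gets reported.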

Table 2: Quantitative results (pPSNR↑) of the baseline [1] and our HyperCUT-based model on the REDS dataset

| Model | Frame 1 | Frame 2 | Frame 3 | Frame 4 | Frame 5 | Frame 6 | Frame 7 |
|---|---|---|---|---|---|---|---|
| [1] | 20.65 | 22.63 | 24.20 | 23.50 | 24.20 | 22.63 | 20.65 |
| [1] + Ours | 22.87 | 24.88 | 26.29 | 25.10 | 26.29 | 24.88 | 22.86 |

Table 3: Performance boost (pPSNR↑) for each frame on the REDS (left) and RB2V-Street (right, average of all three categories) datasets when using HyperCUT

| Model | Frame 1 | Frame 2 | Frame 3 | Frame 4 | Frame 5 | Frame 6 | Frame 7 |
|---|---|---|---|---|---|---|---|
| [2] | 22.78/26.99 | 24.47/27.99 | 26.14/29.45 | 31.50/32.08 | 26.12/29.55 | 24.49/28.06 | 22.83/27.04 |
| [2] + Ours | 26.75/28.29 | 28.30/29.20 | 29.42/30.43 | 29.97/32.08 | 29.41/30.53 | 28.30/29.22 | 26.76/28.25 |

- Results of Jin et al. [1] compared to the HyperCUT-based model

- Results of Purohit et al. [2] compared to the HyperCUT-based model

- We also compare our HyperCUT-based model with the baseline that takes additional motion guidance as input [3] on the B-Aist++ dataset

Our model code is based on the implementations of Purohit et al. (code) and Jin et al. (code). We thank the authors for making them available.

[1] Meiguang Jin, Givi Meishvili, and Paolo Favaro. Learning to Extract a Video Sequence from a Single Motion-Blurred Image. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018.

[2] Kuldeep Purohit, Anshul Shah, and AN Rajagopalan. Bringing Alive Blurred Moments. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2019.

[3] Zhihang Zhong, Xiao Sun, Zhirong Wu, Yinqiang Zheng, Stephen Lin, and Imari Sato. Animation from Blur: Multi-modal Blur Decomposition with Motion Guidance. In Proceedings of the European Conference on Computer Vision. Springer, 2022.

If you have any questions or suggestions about this repo, please feel free to contact me (bangdang2000@gmail.com).