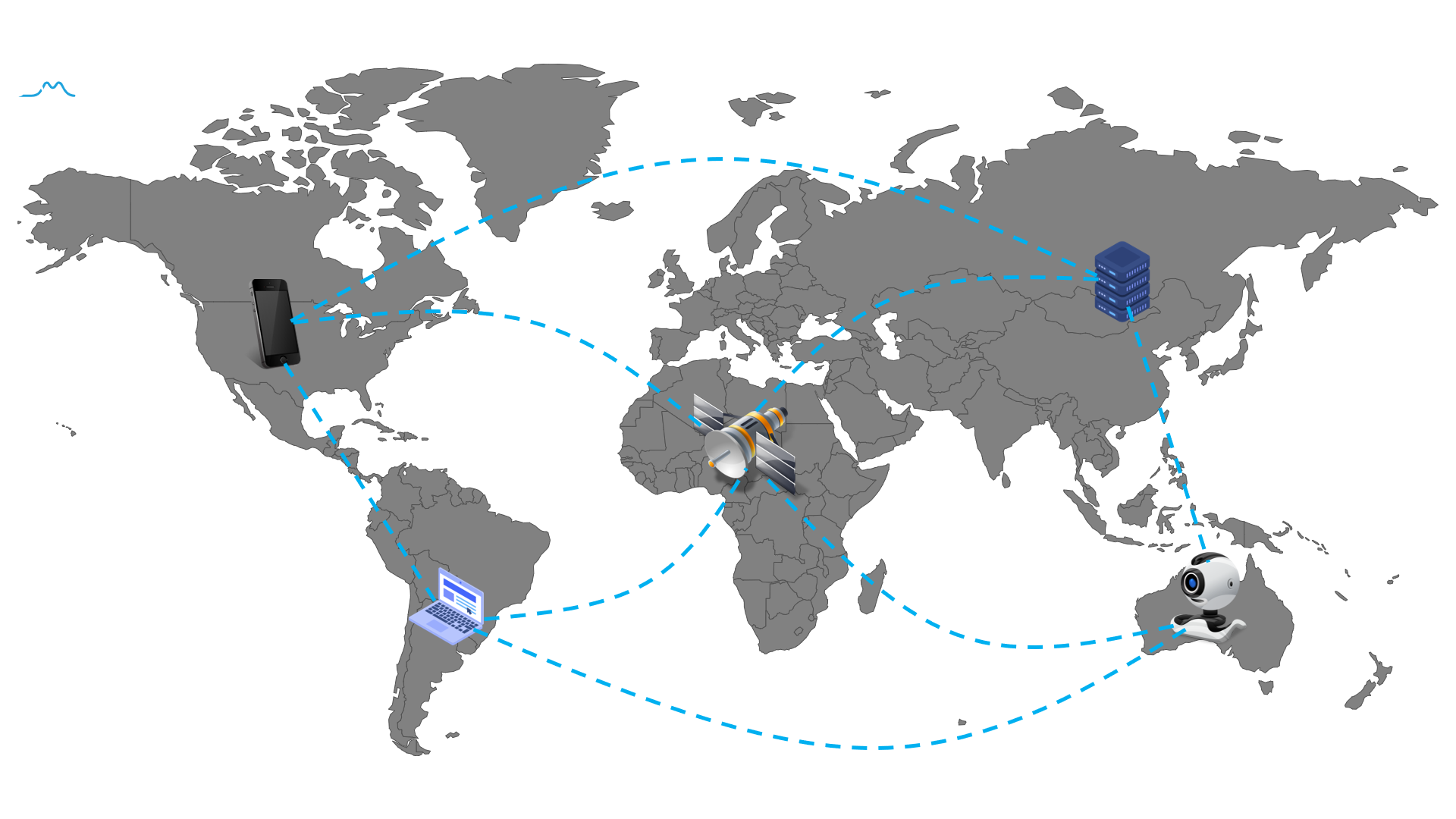

Federated Learning (FL) is a new machine learning framework that enables multiple devices to collaboratively train a shared model while keeping their training data local, preserving data privacy and security.
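To make the collaborative-training idea concrete, here is a minimal sketch of federated averaging (FedAvg), the algorithm introduced in *Communication-Efficient Learning of Deep Networks from Decentralized Data* (listed below). It is a toy under stated assumptions: a 1-D linear model, plain per-sample SGD, made-up client data, and hypothetical function names that do not come from any FL library. Each client trains on its own data, and only model weights travel to the server, which averages them weighted by each client's dataset size.

```python
def local_update(weights, data, lr=0.1, epochs=5):
    """Run a few epochs of per-sample SGD on one client's private data.

    `weights` is a (w, b) pair for a toy 1-D linear model y = w*x + b
    (an assumption for illustration). Only the updated weights are
    returned; the raw `data` stays with the client.
    """
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y   # prediction error on one sample
            w -= lr * 2 * err * x   # gradient of err**2 with respect to w
            b -= lr * 2 * err       # gradient of err**2 with respect to b
    return w, b


def fed_avg(global_weights, clients, rounds=100):
    """Server loop: broadcast weights, train locally, average by client size."""
    for _ in range(rounds):
        results = [(local_update(global_weights, data), len(data)) for data in clients]
        total = sum(n for _, n in results)
        w = sum(wk * n for (wk, _), n in results) / total
        b = sum(bk * n for (_, bk), n in results) / total
        global_weights = (w, b)
    return global_weights


# Three hypothetical clients whose private data all follow y = 2x + 1.
clients = [
    [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)],
    [(0.2, 1.4), (0.8, 2.6)],
    [(0.1, 1.2), (0.4, 1.8), (0.6, 2.2), (0.9, 2.8)],
]
w, b = fed_avg((0.0, 0.0), clients)
print(f"w={w:.3f}, b={b:.3f}")
```

Because every client's data here lies on the same line, the averaged model should approach w = 2, b = 1. When clients' data differ, a single averaged model can fit some of them poorly.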

This repository collects federated learning materials, including research papers, conferences, blogs, and beyond, and will continue to be updated.

- Top Machine Learning Conferences

- Books

- Papers

- Talks and Tutorials

- Conferences and Workshops

- Blogs

- Open Source

This section summarizes federated learning papers accepted at top machine learning conferences, including NeurIPS, ICML, and ICLR.

| Conferences | Title | Affiliation | Slide & Code |
| --- | --- | --- | --- |
| AISTATS 2020 | FedPAQ: A Communication-Efficient Federated Learning Method with Periodic Averaging and Quantization | UC Santa Barbara; UT Austin | Supplementary |
| | How To Backdoor Federated Learning | Cornell Tech | Supplementary |
| | Federated Heavy Hitters Discovery with Differential Privacy | RPI; | Supplementary |

- 联邦学习 (Federated Learning)

- 联邦学习实战 (Practicing Federated Learning)

Personalized federated learning refers to training a model for each client, based on the client's own dataset and the datasets of other clients. There are two major motivations for personalized federated learning:

- Due to statistical heterogeneity across clients, a single global model would not be a good choice for all clients. Sometimes, the local models trained solely on their private data perform better than the global shared model.

- Different clients need models customized to their own environment. As an example of model heterogeneity, consider the sentence "I live in ...": a next-word prediction model applied to this sentence must predict a different answer for each user. Different clients may assign different labels to the same data.
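One simple way to act on these motivations is model interpolation: each client blends the shared global model with a model trained only on its own data, and picks the mixing weight that works best on its own held-out data. Model interpolation is one of the strategies studied in *Three Approaches for Personalization with Applications to Federated Learning*, but the sketch below is a simplified illustration, not that paper's algorithm. It assumes a 1-D linear model, a fixed grid of mixing weights, made-up numbers, and hypothetical function names.

```python
def mse(model, data):
    """Mean squared error of a toy 1-D linear model (w, b) on (x, y) pairs."""
    w, b = model
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def interpolate(local, global_, alpha):
    """Blend parameters: alpha=1 is purely local, alpha=0 is purely global."""
    return tuple(alpha * p_local + (1 - alpha) * p_global
                 for p_local, p_global in zip(local, global_))


def personalize(local, global_, val_data, grid=(0.0, 0.25, 0.5, 0.75, 1.0)):
    """Pick the mixing weight with the lowest loss on the client's held-out data."""
    best = min(grid, key=lambda a: mse(interpolate(local, global_, a), val_data))
    return best, interpolate(local, global_, best)


# Hypothetical setup: the global model fits the population trend y = 2x + 1,
# this client's data follows y = 3x + 1, and its local model is noisy.
global_model = (2.0, 1.0)
local_model = (4.0, 0.5)
val_data = [(0.0, 1.0), (0.5, 2.5), (1.0, 4.0)]

alpha, model = personalize(local_model, global_model, val_data)
print(alpha, model)  # 0.75 (3.5, 0.625)
```

Here neither the global model nor the noisy local model is best on its own; the blend with alpha = 0.75 has the lowest held-out error for this client.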

Personalized federated learning survey papers:

- Three Approaches for Personalization with Applications to Federated Learning

- Personalized Federated Learning for Intelligent IoT Applications: A Cloud-Edge based Framework

Recommender systems (RecSys) are widely used to address information overload. In general, the more data a RecSys uses, the better its recommendation performance.

Traditionally, a RecSys requires data distributed across many devices to be uploaded to a central database for model training. However, because of privacy and security concerns, strategies that share raw user data directly are no longer appropriate.

Combining federated learning with RecSys is a promising approach that can reduce the risk of privacy leakage.
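As a rough illustration of how the two can be combined, the sketch below follows a common federated matrix factorization pattern: the server keeps the shared item embeddings, each client keeps its own user embedding and ratings, and clients upload only gradients for the items they rated. It is a toy under stated assumptions (plain gradient descent, latent dimension 2, made-up ratings, hypothetical function names), not the method of any specific paper in the table below. Raw gradients can still leak information about ratings, so practical systems add protection on top, such as the homomorphic encryption used in *Secure federated matrix factorization*.

```python
import random

K = 2  # latent dimension; a hypothetical choice for this toy example


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def client_step(user_vec, ratings, item_vecs, lr=0.05, reg=0.01):
    """Local pass over one client's private ratings {item_id: rating}.

    Updates the private user vector in place and returns gradients for the
    item vectors this client rated. The ratings themselves are never returned.
    """
    item_grads = {}
    for item, r in ratings.items():
        v = item_vecs[item]
        err = dot(user_vec, v) - r
        # Both gradients use the user vector from before this step's update.
        item_grads[item] = [err * user_vec[k] + reg * v[k] for k in range(K)]
        grad_u = [err * v[k] + reg * user_vec[k] for k in range(K)]
        for k in range(K):
            user_vec[k] -= lr * grad_u[k]
    return item_grads


def server_round(item_vecs, clients, lr=0.05):
    """Sum item gradients from all clients, then update the shared item vectors."""
    summed = {}
    for user_vec, ratings in clients:
        for item, g in client_step(user_vec, ratings, item_vecs, lr).items():
            acc = summed.setdefault(item, [0.0] * K)
            for k in range(K):
                acc[k] += g[k]
    for item, g in summed.items():
        for k in range(K):
            item_vecs[item][k] -= lr * g[k]


def loss(item_vecs, clients):
    """MSE over all observed ratings (computed centrally here only for the demo)."""
    errs = [(dot(u, item_vecs[i]) - r) ** 2
            for u, ratings in clients for i, r in ratings.items()]
    return sum(errs) / len(errs)


rng = random.Random(0)
item_vecs = {i: [rng.uniform(0.0, 0.1) for _ in range(K)] for i in range(3)}
# Each hypothetical client: (private user vector, private ratings {item_id: rating}).
clients = [
    ([rng.uniform(0.0, 0.1) for _ in range(K)], {0: 5.0, 1: 4.0, 2: 1.0}),
    ([rng.uniform(0.0, 0.1) for _ in range(K)], {0: 4.0, 1: 5.0}),
    ([rng.uniform(0.0, 0.1) for _ in range(K)], {1: 1.0, 2: 5.0}),
]
initial = loss(item_vecs, clients)
for _ in range(1000):
    server_round(item_vecs, clients)
final = loss(item_vecs, clients)
print(f"MSE before: {initial:.3f}, after: {final:.3f}")
```

Keeping user vectors on the clients means the server never sees who rated what directly; it only sees aggregated item gradients, which is why the leakage caveat above still matters.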

| Methodology | Title | Conferences | Slide & Code |
| --- | --- | --- | --- |
| Matrix Factorization | Secure federated matrix factorization | IEEE Intelligent Systems | |
| | Federated Multi-view Matrix Factorization for Personalized Recommendations | ECML-PKDD 2020 | video |
| | Decentralized Recommendation Based on Matrix Factorization: A Comparison of Gossip and Federated Learning | ECML-PKDD 2019 | |
| | Towards Privacy-preserving Mobile Applications with Federated Learning: The Case of Matrix Factorization | MobiSys 2019 | |
| | Meta Matrix Factorization for Federated Rating Predictions | ACM SIGIR 2020 | code |
| | Federated Collaborative Filtering for Privacy-Preserving Personalized Recommendation System | arXiv | |
| GNN | FedGNN: Federated Graph Neural Network for Privacy-Preserving Recommendation | arXiv | |

| Methodology | Title | Conferences | Slide & Code |
| --- | --- | --- | --- |
| Backdoor Attack | How To Backdoor Federated Learning | AISTATS 2020 | code |
| | Can You Really Backdoor Federated Learning? | arXiv | |
| | Attack of the Tails: Yes, You Really Can Backdoor Federated Learning | NeurIPS 2020 | code |
| | DBA: Distributed Backdoor Attacks against Federated Learning | ICLR 2020 | code |

| Methodology | Title | Conferences | Slide & Code |
| --- | --- | --- | --- |
| Differential Privacy | Federated Learning With Differential Privacy: Algorithms and Performance Analysis | IEEE Transactions on Information Forensics and Security | |
| | Differentially Private Federated Learning: A Client Level Perspective | arXiv | code |
| | Learning Differentially Private Recurrent Language Models | ICLR 2018 | |
| Homomorphic Encryption | Private federated learning on vertically partitioned data via entity resolution and additively homomorphic encryption | arXiv | |
| | A Little Is Enough: Circumventing Defenses For Distributed Learning | NeurIPS 2019 | |

- Advances and Open Problems in Federated Learning - arXiv Dec 2019

- A Survey on Federated Learning Systems: Vision, Hype and Reality for Data Privacy and Protection - arXiv Apr 2020

- Federated Learning in Mobile Edge Networks: A Comprehensive Survey - arXiv Sep 2019

- Federated Learning for Wireless Communications: Motivation, Opportunities and Challenges - arXiv Sep 2019

- Federated Learning: Challenges, Methods, and Future Directions - arXiv Aug 2019

- Federated Machine Learning: Concept and Applications - ACM TIST 2018

- Towards Federated Learning at Scale: System Design - SysML 2019

- A generic framework for privacy preserving deep learning - arXiv 2018

- Communication-Efficient Learning of Deep Networks from Decentralized Data - AISTATS 2017 (the first paper to propose the federated learning concept)

- RPN: A Residual Pooling Network for Efficient Federated Learning - ECAI 2020

- Federated Learning: Strategies for Improving Communication Efficiency - arXiv 2017

- Federated Learning with Matched Averaging - ICLR 2020

- One-Shot Federated Learning - arXiv 2019

- cpSGD: Communication-efficient and differentially-private distributed SGD - NeurIPS 2018

- Federated Optimization: Distributed Machine Learning for On-Device Intelligence - arXiv 2016

- Fair Resource Allocation in Federated Learning - arXiv 2019

- Agnostic Federated Learning - ICML 2019

| Applications | Title | Conferences | Slide & Code |
| --- | --- | --- | --- |
| Computer Vision | FedVision: An Online Visual Object Detection Platform Powered by Federated Learning | AAAI 2020 | code |
| Natural Language Processing | Federated Topic Modeling | CIKM 2019 | |
| | Federated Learning for Mobile Keyboard Prediction | arXiv | |
| | Applied federated learning: Improving Google keyboard query suggestions | arXiv | |
| | Federated Learning Of Out-Of-Vocabulary Words | arXiv | |
| Healthcare | Privacy-preserving Federated Brain Tumour Segmentation | MICCAI MLMI 2019 | |
| | FedHealth: A Federated Transfer Learning Framework for Wearable Healthcare | arXiv | |
| Blockchain | FedCoin: A Peer-to-Peer Payment System for Federated Learning | arXiv | |
| | Blockchained On-Device Federated Learning | IEEE Communications Letters 2019 | |

- TensorFlow Federated (TFF): Machine Learning on Decentralized Data - Google, TF Dev Summit 2019

- Federated Learning: Machine Learning on Decentralized Data - Google, Google I/O 2019

- Federated Learning - Cloudera Fast Forward Labs, DataWorks Summit 2019

- GDPR, Data Shortage and AI - Qiang Yang, AAAI 2019 Invited Talk

- Making every phone smarter with Federated Learning - Google, 2018

- FL-ICML 2020 - Organized by IBM Watson Research.

- FL-IBM 2020 - Organized by IBM Watson Research and Webank.

- FL-NeurIPS 2019 - Organized by Google, Webank, NTU, CMU.

- FL-IJCAI 2019 - Organized by Webank.

- Google Federated Learning workshop - Organized by Google.

- What is Federated Learning - Nvidia 2019

- Online Federated Learning Comic - Google 2019

- Federated Learning: Collaborative Machine Learning without Centralized Training Data - Google AI Blog 2017