
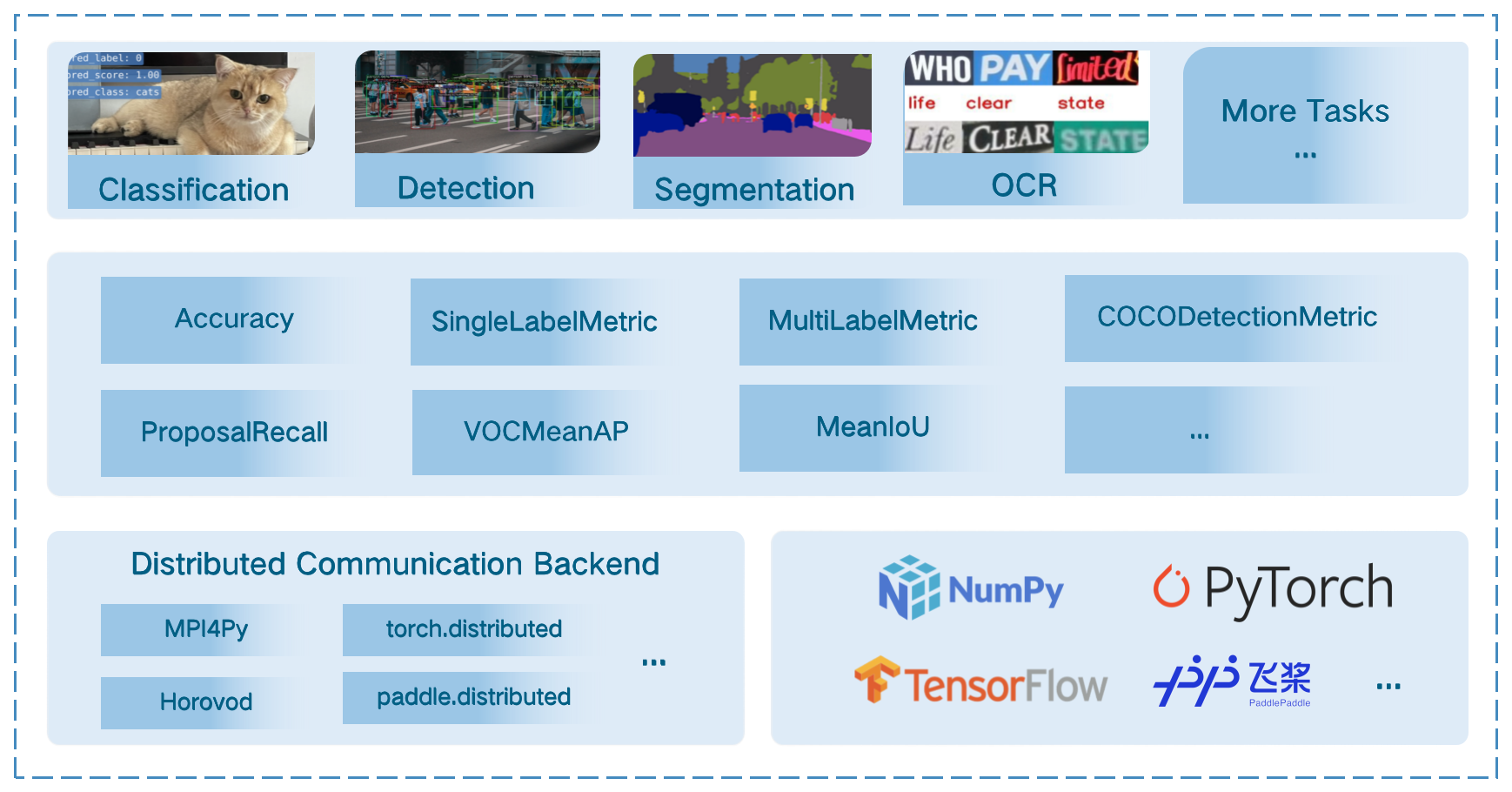

MMEval is a machine learning evaluation library that supports efficient and accurate distributed evaluation on a variety of machine learning frameworks.

Major features:

- Comprehensive metrics for various computer vision tasks (NLP will be covered soon!)

- Efficient and accurate distributed evaluation, backed by multiple distributed communication backends

- Supports multiple machine learning frameworks via a dynamic input dispatching mechanism
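As a rough illustration of what dispatching on input type can look like, the sketch below uses `functools.singledispatch` from the Python standard library. This is not MMEval's own dispatcher, which this README does not show. The function name `top1_correct` is hypothetical, and plain lists and tuples stand in for the tensor types of two different frameworks.

```python
from functools import singledispatch


@singledispatch
def top1_correct(preds, labels):
    """Fallback: reached when no implementation is registered for the input type."""
    raise NotImplementedError(f"unsupported input type: {type(preds).__name__}")


@top1_correct.register
def _(preds: list, labels):
    # Lists stand in for one framework's tensor type (assumption for this sketch).
    return sum(int(p == l) for p, l in zip(preds, labels))


@top1_correct.register
def _(preds: tuple, labels):
    # Tuples stand in for a second framework's tensor type (assumption for this sketch).
    return top1_correct(list(preds), list(labels))


# The same call site works for both input types.
print(top1_correct([0, 2, 1, 3], [0, 1, 2, 3]))
print(top1_correct((0, 2, 1, 3), (0, 1, 2, 3)))
```

The point of the pattern is that the caller never branches on the framework; the implementation is selected from the type of the first argument.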

Supported distributed communication backends

| MPI4Py | torch.distributed | Horovod | paddle.distributed |
|---|---|---|---|
| MPI4PyDist | TorchCPUDist, TorchCUDADist | TFHorovodDist | PaddleDist |
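To make the idea of distributed evaluation concrete, here is a minimal single-process simulation. It does not use any of the backends listed above: the `all_gather` function is a hypothetical stand-in for what a communication backend would provide, and the two "ranks" are just Python lists. It shows one reason to gather per-sample results before computing a metric: averaging per-rank metrics can give a different number when shards are unevenly sized.

```python
def local_results(preds, labels):
    """Per-sample correctness computed by one process on its own shard."""
    return [int(p == l) for p, l in zip(preds, labels)]


def all_gather(per_rank_results):
    """Stand-in for a communication backend: concatenate results in rank order."""
    gathered = []
    for results in per_rank_results:
        gathered.extend(results)
    return gathered


# Two simulated ranks holding shards of different sizes.
rank0 = local_results(preds=[0, 2, 1], labels=[0, 1, 2])
rank1 = local_results(preds=[3], labels=[3])

# Gather per-sample results first, then compute the metric once.
gathered = all_gather([rank0, rank1])
global_accuracy = sum(gathered) / len(gathered)

# Averaging per-rank accuracies instead weights the small shard too heavily.
naive_average = (sum(rank0) / len(rank0) + sum(rank1) / len(rank1)) / 2

print(global_accuracy, naive_average)
```

In this toy setup the gathered accuracy is 2 correct out of 4 samples, while the naive average of per-rank accuracies is higher because the single-sample shard counts as much as the three-sample shard.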

Supported metrics and ML frameworks

NOTE: MMEval has been tested with PyTorch 1.6+, TensorFlow 2.4+ and Paddle 2.2+.

| Metric | numpy.ndarray | torch.Tensor | tensorflow.Tensor | paddle.Tensor |
|---|---|---|---|---|
| Accuracy | ✔ | ✔ | ✔ | ✔ |
| SingleLabelMetric | ✔ | ✔ | | |
| MultiLabelMetric | ✔ | ✔ | | |
| AveragePrecision | ✔ | ✔ | | |
| MeanIoU | ✔ | ✔ | ✔ | ✔ |
| VOCMeanAP | ✔ | | | |
| OIDMeanAP | ✔ | | | |
| CocoDetectionMetric | ✔ | | | |
| ProposalRecall | ✔ | | | |
| F1Metric | ✔ | ✔ | | |
| HmeanIoU | ✔ | | | |
| PCKAccuracy | ✔ | | | |
| MpiiPCKAccuracy | ✔ | | | |
| JhmdbPCKAccuracy | ✔ | | | |
| EndPointError | ✔ | ✔ | | |
| AVAMeanAP | ✔ | | | |
| SSIM | ✔ | | | |
| SNR | ✔ | | | |
| PSNR | ✔ | | | |
| MAE | ✔ | | | |
| MSE | ✔ | | | |

MMEval requires Python 3.6+ and can be installed via pip.

```shell
pip install mmeval
```

To install the dependencies required by all the metrics provided in MMEval, run the following command.

```shell
pip install 'mmeval[all]'
```

There are two ways to use MMEval's metrics, using Accuracy as an example:

```python
from mmeval import Accuracy
import numpy as np

accuracy = Accuracy()
```

The first way is to directly call the instantiated Accuracy object to calculate the metric.

```python
labels = np.asarray([0, 1, 2, 3])
preds = np.asarray([0, 2, 1, 3])
accuracy(preds, labels)
# {'top1': 0.5}
```

The second way is to calculate the metric after accumulating data from multiple batches.

```python
for i in range(10):
    labels = np.random.randint(0, 4, size=(100, ))
    predicts = np.random.randint(0, 4, size=(100, ))
    accuracy.add(predicts, labels)

accuracy.compute()
# {'top1': ...}
```

Examples
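The two usage styles described above (direct call versus accumulate-then-compute) can be sketched with a small stateful class. `ToyAccuracy` is a hypothetical stand-in written for this illustration, not MMEval's `Accuracy`; in particular, resetting state around a direct call is an assumption of this sketch rather than documented behavior.

```python
class ToyAccuracy:
    """Hypothetical stand-in for a stateful metric; not MMEval's Accuracy."""

    def __init__(self):
        self._results = []

    def add(self, preds, labels):
        # Store per-sample correctness for this batch.
        self._results.extend(int(p == l) for p, l in zip(preds, labels))

    def compute(self):
        # Reduce everything accumulated so far to the final metric.
        return {"top1": sum(self._results) / len(self._results)}

    def reset(self):
        self._results.clear()

    def __call__(self, preds, labels):
        # One-shot evaluation: score only this batch and leave no state behind
        # (an assumption of this sketch).
        self.reset()
        self.add(preds, labels)
        result = self.compute()
        self.reset()
        return result


metric = ToyAccuracy()

# First way: direct call on a single batch.
single = metric([0, 2, 1, 3], [0, 1, 2, 3])

# Second way: accumulate several batches, then compute once.
metric.add([0, 1], [0, 1])
metric.add([1, 0], [0, 0])
accumulated = metric.compute()

print(single, accumulated)
```

Keeping per-sample results rather than a running average means the final value does not depend on how the data was split into batches.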

- Continue to add more metrics and expand more tasks (e.g. NLP, audio).

- Support more ML frameworks and explore multiple ML framework support paradigms.

We appreciate all contributions to improve MMEval. Please refer to CONTRIBUTING.md for the contributing guideline.

This project is released under the Apache 2.0 license.

- MMEngine: OpenMMLab foundational library for training deep learning models.

- MIM: MIM installs OpenMMLab packages.

- MMCV: OpenMMLab foundational library for computer vision.

- MMClassification: OpenMMLab image classification toolbox and benchmark.

- MMDetection: OpenMMLab detection toolbox and benchmark.

- MMDetection3D: OpenMMLab's next-generation platform for general 3D object detection.

- MMRotate: OpenMMLab rotated object detection toolbox and benchmark.

- MMYOLO: OpenMMLab YOLO series toolbox and benchmark.

- MMSegmentation: OpenMMLab semantic segmentation toolbox and benchmark.

- MMOCR: OpenMMLab text detection, recognition, and understanding toolbox.

- MMPose: OpenMMLab pose estimation toolbox and benchmark.

- MMHuman3D: OpenMMLab 3D human parametric model toolbox and benchmark.

- MMSelfSup: OpenMMLab self-supervised learning toolbox and benchmark.

- MMRazor: OpenMMLab model compression toolbox and benchmark.

- MMFewShot: OpenMMLab fewshot learning toolbox and benchmark.

- MMAction2: OpenMMLab's next-generation action understanding toolbox and benchmark.

- MMTracking: OpenMMLab video perception toolbox and benchmark.

- MMFlow: OpenMMLab optical flow toolbox and benchmark.

- MMEditing: OpenMMLab image and video editing toolbox.

- MMGeneration: OpenMMLab image and video generative models toolbox.

- MMDeploy: OpenMMLab model deployment framework.