TensorFlow implementation of a Restricted Boltzmann Machine for layer-wise pretraining of deep autoencoders.

This is a fork of https://github.com/Cospel/rbm-ae-tf with some corrections and improvements:

- scripts are in the package now

- implemented momentum for RBM

- using probabilities instead of samples for training

- implemented both Bernoulli-Bernoulli RBM and Gaussian-Bernoulli RBM
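The momentum and "probabilities instead of samples" items can be illustrated with a single contrastive-divergence (CD-1) step. The following is a NumPy sketch under stated assumptions, not the package's TensorFlow code: the helper name `cd1_step`, the exact form of the momentum update, and the choice to keep every activation as a probability are illustrative.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def cd1_step(v0, w, b_v, b_h, velocity, learning_rate=0.01, momentum=0.95):
    """One CD-1 update on a batch v0 of shape (n_data, n_visible).

    Hypothetical helper, not the package's API. Activations are kept as
    probabilities throughout instead of being sampled to 0/1.
    """
    n_data = v0.shape[0]
    h0 = sigmoid(v0 @ w + b_h)      # p(h = 1 | v0)
    v1 = sigmoid(h0 @ w.T + b_v)    # reconstruction probabilities
    h1 = sigmoid(v1 @ w + b_h)      # p(h = 1 | v1)

    # Positive-phase minus negative-phase statistics, averaged over the batch.
    grads = (
        (v0.T @ h0 - v1.T @ h1) / n_data,
        (v0 - v1).mean(axis=0),
        (h0 - h1).mean(axis=0),
    )

    # Assumed momentum form: keep a fraction of the previous update
    # and add the new gradient step.
    velocity = tuple(momentum * vel + learning_rate * g
                     for vel, g in zip(velocity, grads))
    w, b_v, b_h = (p + vel for p, vel in zip((w, b_v, b_h), velocity))

    err = float(np.mean((v0 - v1) ** 2))  # reconstruction MSE before the update
    return (w, b_v, b_h), velocity, err
```

The velocity tuple starts as zeros with the same shapes as `w`, `b_v` and `b_h`, and is passed back in on every call.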

A Bernoulli-Bernoulli RBM suits Bernoulli-distributed binary input data, such as MNIST.

Load data and train RBM:

```python
import numpy as np
import matplotlib.pyplot as plt
from tfrbm import BBRBM, GBRBM
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data/', one_hot=True)
mnist_images = mnist.train.images

bbrbm = BBRBM(n_visible=784, n_hidden=64, learning_rate=0.01, momentum=0.95)
errs = bbrbm.fit(mnist_images, n_epoches=20, batch_size=10, tqdm='notebook')
plt.plot(errs)
plt.show()
```

Output:

```
Epoch: 0, error: 0.069226
Epoch: 1, error: 0.042563
Epoch: 2, error: 0.036503
Epoch: 3, error: 0.033372
Epoch: 4, error: 0.031310
Epoch: 5, error: 0.029698
...
Epoch: 17, error: 0.022318
Epoch: 18, error: 0.022065
Epoch: 19, error: 0.021828
```

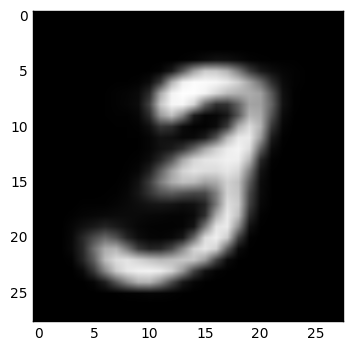

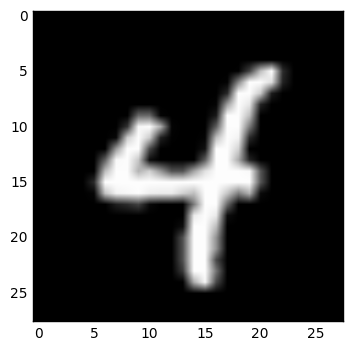

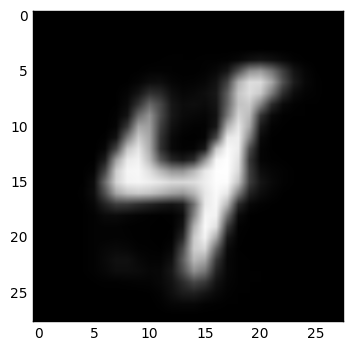

Examine some reconstructed data:

```python
IMAGE = 1

def show_digit(x):
    plt.imshow(x.reshape((28, 28)), cmap=plt.cm.gray)
    plt.show()

image = mnist_images[IMAGE]
image_rec = bbrbm.reconstruct(image.reshape(1,-1))

show_digit(image)
show_digit(image_rec)
```

Examples:

`rbm = BBRBM(n_visible, n_hidden, learning_rate=1.0, momentum=1.0, xavier_const=1.0, err_function='mse')`

or

`rbm = GBRBM(n_visible, n_hidden, learning_rate=1.0, momentum=1.0, xavier_const=1.0, err_function='mse', sample_visible=False, sigma=1)`

Initialization.

- `n_visible`: number of neurons in the visible layer
- `n_hidden`: number of neurons in the hidden layer
- `xavier_const`: constant used in weight initialization; 1.0 is a good value
- `err_function`: error function, either `mse` or `cosine` (it is not used in the training process, only in the `get_err` function)
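To make `xavier_const` and `err_function` more concrete, here is a NumPy sketch of one common way such quantities are defined. The formulas are assumptions for illustration (a Xavier/Glorot-style uniform range scaled by the constant, mean squared error, and a cosine-based error scaled to the range 0 to 1); the function names are hypothetical and the package's actual definitions may differ.

```python
import numpy as np


def xavier_uniform(n_visible, n_hidden, const=1.0, seed=0):
    # Assumed form: uniform in [-limit, limit] with
    # limit = const * sqrt(6 / (fan_in + fan_out)).
    limit = const * np.sqrt(6.0 / (n_visible + n_hidden))
    rng = np.random.default_rng(seed)
    return rng.uniform(-limit, limit, size=(n_visible, n_hidden))


def mse_error(x, x_rec):
    # Mean squared difference between the input and its reconstruction.
    return float(np.mean((x - x_rec) ** 2))


def cosine_error(x, x_rec, eps=1e-12):
    # Normalize each row, average the row-wise cosine similarity,
    # then map the corresponding angle from [0, pi] to [0, 1].
    x_n = x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)
    r_n = x_rec / (np.linalg.norm(x_rec, axis=1, keepdims=True) + eps)
    cos = np.clip(np.mean(np.sum(x_n * r_n, axis=1)), -1.0, 1.0)
    return float(np.arccos(cos) / np.pi)
```

Under these definitions both errors are close to zero for a perfect reconstruction, and the cosine error approaches 1 when the reconstruction points in the opposite direction.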

Only for GBRBM:

- `sample_visible`: whether to sample the reconstructed data from a Gaussian distribution (with the reconstructed value as the mean and the `sigma` parameter as the standard deviation); if not, each Gaussian is collapsed to a single point, its mean
- `sigma`: standard deviation of the input data
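The effect of `sample_visible` can be sketched in a few lines of NumPy. This is an illustration of the idea only; `reconstruct_visible` is a hypothetical helper, not a function of the package.

```python
import numpy as np


def reconstruct_visible(mean, sigma=1.0, sample_visible=False, rng=None):
    """Return the visible reconstruction for a Gaussian visible layer.

    `mean` is the reconstructed value for each visible unit. Without
    sampling, the mean itself is returned; with sampling, Gaussian noise
    with standard deviation `sigma` is drawn around it.
    """
    if not sample_visible:
        return mean
    rng = np.random.default_rng() if rng is None else rng
    return rng.normal(loc=mean, scale=sigma)
```

Passing a seeded generator as `rng` makes the sampled reconstruction reproducible.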

Advice:

- Use BBRBM for Bernoulli-distributed data. Input values in this case must be in the interval from `0` to `1`.
- Use GBRBM for normally distributed data with `0` mean and `sigma` standard deviation. If the data is not distributed this way, normalize it first.
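A minimal preprocessing sketch for the two cases above, assuming the data is a NumPy array of shape `(n_data, data_dim)`. The helper names are hypothetical. Note that min-max scaling only puts values into the required range; it does not by itself make the data Bernoulli-distributed.

```python
import numpy as np


def scale_to_unit_interval(x, eps=1e-8):
    # Per-feature min-max scaling into [0, 1], e.g. before using BBRBM.
    x_min = x.min(axis=0)
    x_max = x.max(axis=0)
    return (x - x_min) / (x_max - x_min + eps)


def standardize(x, eps=1e-8):
    # Per-feature zero mean and unit standard deviation,
    # e.g. before using GBRBM with sigma=1.
    return (x - x.mean(axis=0)) / (x.std(axis=0) + eps)
```

The small `eps` term guards against division by zero for constant features.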

`rbm.fit(data_x, n_epoches=10, batch_size=10, shuffle=True, verbose=True, tqdm=None)`

Fit the model.

- `data_x`: data of shape `(n_data, data_dim)`
- `n_epoches`: number of epochs
- `batch_size`: batch size, should be as small as possible
- `shuffle`: whether to shuffle the data
- `verbose`: whether to print output to stdout
- `tqdm`: whether to use the tqdm package; should be `None`, `True` or `'notebook'`

Returns an array of errors.
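The roles of `n_epoches`, `batch_size` and `shuffle` can be pictured with a generic mini-batch loop. This is a sketch, not the package's `fit` implementation: `iterate_batches` and `fit_sketch` are hypothetical names, the two callables stand in for `partial_fit` and `get_err`, and recording one error per batch and keeping a smaller final batch are assumptions.

```python
import numpy as np


def iterate_batches(data_x, batch_size=10, shuffle=True, rng=None):
    """Yield consecutive mini-batches, optionally in shuffled row order."""
    indices = np.arange(data_x.shape[0])
    if shuffle:
        rng = np.random.default_rng() if rng is None else rng
        rng.shuffle(indices)
    for start in range(0, len(indices), batch_size):
        yield data_x[indices[start:start + batch_size]]


def fit_sketch(data_x, partial_fit, get_err, n_epoches=10, batch_size=10,
               shuffle=True):
    """Run `partial_fit` on every batch of every epoch and collect errors."""
    errs = []
    for _ in range(n_epoches):
        for batch in iterate_batches(data_x, batch_size, shuffle):
            partial_fit(batch)
            errs.append(get_err(batch))  # assumed: one error per batch
    return np.array(errs)
```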

`rbm.partial_fit(batch_x)`

Fit the model on one batch.

`rbm.reconstruct(batch_x)`

Reconstruct data. Input and output shapes are `(n_data, n_visible)`.

`rbm.transform(batch_x)`

Transform data. Input shape is `(n_data, n_visible)`, output shape is `(n_data, n_hidden)`.

`rbm.transform_inv(batch_y)`

Inverse transform data. Input shape is `(n_data, n_hidden)`, output shape is `(n_data, n_visible)`.

`rbm.get_err(batch_x)`

Returns the error on a batch.

`rbm.get_weights()`

Get the RBM's weights as NumPy arrays. Returns `(W, Bv, Bh)`, where `W` is the weight matrix of shape `(n_visible, n_hidden)`, `Bv` is the visible layer bias of shape `(n_visible,)` and `Bh` is the hidden layer bias of shape `(n_hidden,)`.

Note: when initializing a deep network layer with these weights, use `W` as the weights and `Bh` as the bias, and ignore `Bv`.
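As an illustration of the note above, the following NumPy sketch stacks two layers initialized from `(W, Bh)` pairs into a small encoder. The arrays are random placeholders standing in for the output of `get_weights()` on two trained RBMs, the sigmoid activation is an assumption, and `encode` is a hypothetical helper.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def encode(x, layers):
    """Forward pass through a stack of layers given as (W, Bh) pairs."""
    for w, b_h in layers:
        x = sigmoid(x @ w + b_h)
    return x


rng = np.random.default_rng(0)
# Placeholders with the shapes of (W, Bv, Bh) from two RBMs: 784 -> 64 -> 16.
w1, bv1, bh1 = rng.normal(0, 0.01, (784, 64)), np.zeros(784), np.zeros(64)
w2, bv2, bh2 = rng.normal(0, 0.01, (64, 16)), np.zeros(64), np.zeros(16)

# Only W and Bh are used; the visible biases are ignored.
codes = encode(rng.random((5, 784)), [(w1, bh1), (w2, bh2)])
print(codes.shape)  # (5, 16)
```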

`rbm.set_weights(w, visible_bias, hidden_bias)`

Set the RBM's weights from NumPy arrays.

`rbm.save_weights(filename, name)`

Save the RBM's weights to the file `filename` with the unique prefix `name`.

`rbm.load_weights(filename, name)`

Load the RBM's weights from the file `filename` with the unique prefix `name`.

TensorFlow implementation of a Restricted Boltzmann Machine and an autoencoder for layer-wise pretraining of deep autoencoders with RBMs. The idea is to first train RBMs to pretrain the weights for the autoencoder. The pretrained weights are then loaded into the autoencoder, and the autoencoder is trained again. In this implementation you can also use tied weights for the autoencoder (that is, the encoding and decoding layers share the same weights, transposed).
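Tied weights can be sketched as a single-layer autoencoder whose decoder reuses the transposed encoder matrix. This is a NumPy illustration under assumptions (sigmoid activations, one layer, separate encoder and decoder biases); `tied_autoencoder` is a hypothetical name, not part of either repository.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def tied_autoencoder(x, w, b_enc, b_dec):
    """Encode with W and decode with W transposed (tied weights)."""
    code = sigmoid(x @ w + b_enc)          # (n_data, n_hidden)
    recon = sigmoid(code @ w.T + b_dec)    # (n_data, n_visible)
    return code, recon
```

With tied weights only one matrix per layer is trained, so the decoder adds no weight parameters beyond its bias.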

I was inspired by these implementations, but I needed to refactor and improve them. I also tried to use an API similar to the one in tensorflow/models:

Thank you for your gists!

More about pretraining of weights in this paper:

Feel free to make updates and repairs. You can enhance the implementation with some tips from: