This repository is the official implementation of DiffAttack (accepted by TPAMI 2024). The newest version of the paper is available on IEEE, and the previous version is available on arXiv.

If you have any questions, please feel free to contact us: you can open an issue or send an email to me at windvchen@gmail.com. Ideas and discussions are also welcome.

[10/20/2024] This paper has finally been accepted by TPAMI. 👋 You can find the newest version of the paper here (with additional results and experiments). For the previous version, please see here. Please note that the attack methods compared in the two versions differ slightly; for instance, the TPAMI version includes more recent methods, while some older ones were omitted. We recommend reviewing both versions for a comprehensive understanding of the comparisons with existing approaches.

[10/14/2024] Thanks to contributions from @AndPuQing and @yuangan, DiffAttack now supports the newest version of diffusers (0.30.3). Please note that due to differences in package versions, the final evaluated results may vary slightly. To reproduce the results from our paper, we recommend installing diffusers==0.9.0 and using the backed-up script diff_latent_attack-0.9.0.py.

[11/30/2023] Access the latest version, v2, of our paper on arXiv. 👋👋 In this updated release, we have enriched the content with additional discussions and experiments. Noteworthy additions include comprehensive experiments on diverse datasets (refer to Appendix I), exploration of various model structures (refer to Appendix H), and insightful comparisons with ensemble attacks (refer to Appendix G & K) as well as GAN-based methods (refer to Appendix J). Furthermore, we provide expanded details on the current limitations and propose potential directions for future research on diffusion-based methods (refer to Section 5).

[09/07/2023] Besides ImageNet-Compatible, the code now also supports generating adversarial examples on the CUB_200_2011 and Stanford Cars datasets. 🚀🚀 Please refer to Requirements for more details.

[05/16/2023] Code is public.

[05/14/2023] Paper is publicly accessible on arXiv now.

[04/30/2023] Code cleanup done. Waiting to be made public.

- Abstract

- Requirements

- Crafting Adversarial Examples

- Evaluation

- Results

- Citation & Acknowledgments

- License

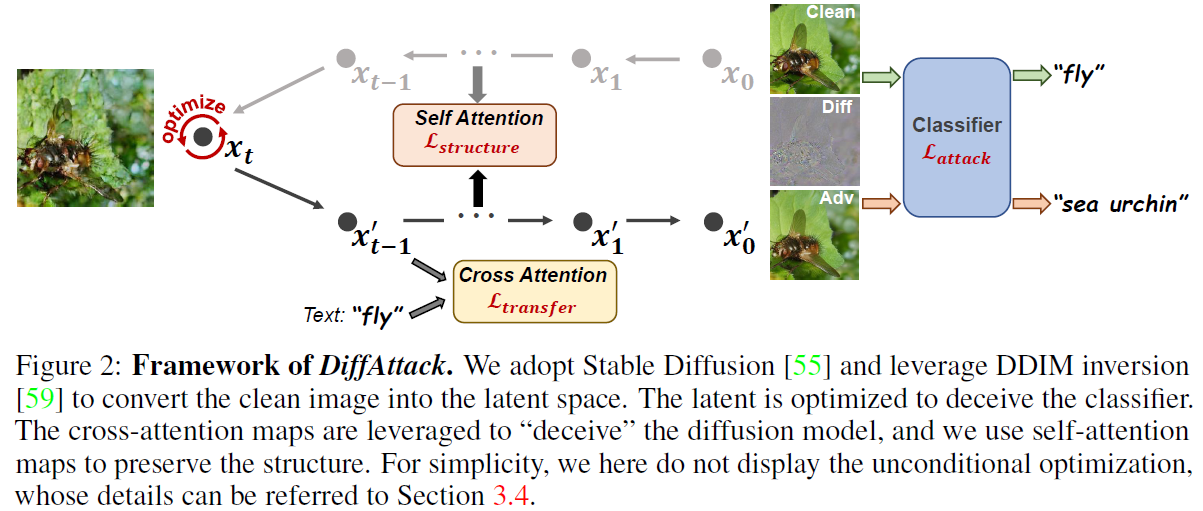

Many existing adversarial attacks generate


Hardware Requirements

- GPU: 1x high-end NVIDIA GPU with at least 16GB memory


Software Requirements

- Python: 3.8

- CUDA: 11.3

- cuDNN: 8.4.1

To install other requirements:

pip install -r requirements.txt

Datasets

- Demo datasets are provided in demo, so you can directly run the optimization code below to see the results.

- If you want to test on the full ImageNet-Compatible dataset, please download the ImageNet-Compatible dataset and then change the settings of --images_root and --label_path in main.py.


Pre-trained Models

- We adopt Stable Diffusion 2.0 as our diffusion model. You can load the pretrained weights by setting --pretrained_diffusion_path="stabilityai/stable-diffusion-2-base" in main.py.

- For the pretrained weights of the adversarially trained models (Adv-Inc-v3, Inc-v3ens3, Inc-v3ens4, IncRes-v2ens) used in Section 4.2.2 of our paper, you can download them from here and then place them in the directory pretrained_models.


(Supplement) Attacking the CUB_200_2011 and Stanford Cars datasets

- Dataset: Aligned with ImageNet-Compatible, we randomly select 1K images from each of the CUB_200_2011 and Stanford Cars datasets. You can download the datasets here [CUB_200_2011 | Stanford Cars] and then change the settings of --images_root and --label_path in main.py. Note that you should also set --dataset_name to cub_200_2011 or standford_car when running the code.

- Pre-trained Models: You can download models (ResNet50, SENet154, and SE-ResNet101) pretrained on CUB_200_2011 and Stanford Cars from the Beyond-ImageNet-Attack repository. Then place them in the directory pretrained_models.


To craft adversarial examples, run this command:

python main.py --model_name <surrogate model> --save_dir <save path> --images_root <clean images' path> --label_path <clean images' label.txt>

The supported surrogate models can be found in the model_selection function in other_attacks.py. You can also use the --dataset_name parameter to generate adversarial examples on other datasets, such as cub_200_2011 and standford_car.

The results will be saved in the directory <save path>, including adversarial examples, perturbations, original images, and logs.

For images that become overly distorted, you can weaken the inversion strength by setting --start_step to a larger value, or use pseudo masks by setting --is_apply_mask=True.
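When sweeping several surrogate models or datasets, it can help to assemble the crafting command programmatically. The sketch below only uses flags mentioned in this README; the helper name build_attack_args and the KNOWN_DATASETS whitelist are hypothetical, and leaving --dataset_name unset is assumed to fall back to main.py's default (ImageNet-Compatible).

```python
from typing import List, Optional

# Dataset names mentioned in this README. None leaves --dataset_name unset,
# assuming main.py then uses its own default (ImageNet-Compatible).
KNOWN_DATASETS = {None, "cub_200_2011", "standford_car"}


def build_attack_args(
    model_name: str,
    save_dir: str,
    images_root: str,
    label_path: str,
    dataset_name: Optional[str] = None,
    start_step: Optional[int] = None,
    apply_mask: bool = False,
) -> List[str]:
    """Hypothetical helper: build the argv for one crafting run of main.py."""
    if dataset_name not in KNOWN_DATASETS:
        raise ValueError(f"unknown dataset_name: {dataset_name!r}")
    args = [
        "python", "main.py",
        "--model_name", model_name,    # surrogate model (see model_selection)
        "--save_dir", save_dir,        # adversarial examples, perturbations, logs
        "--images_root", images_root,  # clean images
        "--label_path", label_path,    # clean images' label file
    ]
    if dataset_name is not None:
        args += ["--dataset_name", dataset_name]
    if start_step is not None:
        # A larger --start_step weakens the inversion strength, which can
        # help images that become overly distorted.
        args += ["--start_step", str(start_step)]
    if apply_mask:
        # Pseudo masks, passed in the same form this README uses.
        args.append("--is_apply_mask=True")
    return args
```

From the repository root, subprocess.run(build_attack_args(...), check=True) would launch a single crafting run; returning an argv list rather than a shell string avoids quoting problems with paths that contain spaces.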

To evaluate the crafted adversarial examples on other black-box models, run:

python main.py --is_test True --save_dir <save path> --images_root <outputs' path> --label_path <clean images' label.txt>

Here, --save_dir denotes the path where only the logs are saved, while --images_root should be set to the --save_dir used in Crafting Adversarial Examples above.
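The chaining rule above (the crafting run's --save_dir becomes the evaluation run's --images_root) can be made explicit in a small script. This is a minimal sketch that derives the evaluation argv from a crafting argv list; the helper names are hypothetical, and reusing the crafting run's --label_path assumes the same clean-image label file is used for both steps, as in the commands above.

```python
from typing import List


def _flag_value(argv: List[str], flag: str) -> str:
    """Return the value that follows `flag` in an argv list."""
    try:
        return argv[argv.index(flag) + 1]
    except (ValueError, IndexError):
        raise ValueError(f"{flag} not found in crafting arguments") from None


def build_eval_args(craft_argv: List[str], log_dir: str) -> List[str]:
    """Hypothetical helper: derive the evaluation argv from a crafting argv.

    The crafting run's --save_dir (where the adversarial examples were
    written) becomes --images_root; the new --save_dir only receives logs.
    """
    adv_dir = _flag_value(craft_argv, "--save_dir")
    label_path = _flag_value(craft_argv, "--label_path")
    return [
        "python", "main.py",
        "--is_test", "True",
        "--save_dir", log_dir,
        "--images_root", adv_dir,
        "--label_path", label_path,
    ]
```

Deriving the paths from the crafting arguments, rather than typing them twice, keeps the two steps from silently pointing at different directories.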

Apart from the adversarially trained models, we also evaluate our attack's ability to deceive other defense approaches, as reported in Section 4.2.2 of our paper. Their implementations are as follows:

- Adversarially trained models (Adv-Inc-v3, Inc-v3ens3, Inc-v3ens4, IncRes-v2ens): Run the code in Robustness on other normally trained models.

- HGD: Change the input size to 224, and then directly run the original code.

- R&P: Since our target size is 224, we proportionally rescale the range of the image scale augmentation to 232~248, then run the original code.

- NIPS-r3: Since its ensembled models fail to process inputs of size 224, we run its original code, which resizes the inputs to 299.

- RS: Change the input size to 224 and set sigma=0.25, skip=1, max=-1, N0=100, N=100, alpha=0.001, then run the original code.

- NRP: Change the input size to 224 and set purifier=NRP, dynamic=True, then run the original code.

- DiffPure: Modify the original code to evaluate the existing adversarial examples instead of crafting new ones.
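For the RS entry above, the listed parameters correspond to the certification procedure of randomized smoothing (Cohen et al.): N0 noisy samples select the top class, N further samples count its votes n_A, and alpha sets the confidence of a one-sided Clopper-Pearson lower bound on that class's probability; sigma then scales the certified L2 radius. The sketch below covers only that final decision step, assuming the vote counts are already available. It is a stdlib illustration with hypothetical function names, not the original RS code, which also runs the noisy sampling through the base classifier.

```python
import math
from statistics import NormalDist
from typing import Optional


def binom_tail(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    if k <= 0:
        return 1.0
    if p <= 0.0:
        return 0.0
    if p >= 1.0:
        return 1.0
    log_p, log_q = math.log(p), math.log1p(-p)
    log_n_fact = math.lgamma(n + 1)
    total = 0.0
    for i in range(k, n + 1):
        log_term = (log_n_fact - math.lgamma(i + 1) - math.lgamma(n - i + 1)
                    + i * log_p + (n - i) * log_q)
        total += math.exp(log_term)
    return total


def clopper_pearson_lower(k: int, n: int, alpha: float, iters: int = 100) -> float:
    """One-sided (1 - alpha) Clopper-Pearson lower bound, found by bisection."""
    if k == 0:
        return 0.0
    lo, hi = 0.0, 1.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        # The tail probability grows with p; the bound is where it equals alpha.
        if binom_tail(k, n, mid) < alpha:
            lo = mid
        else:
            hi = mid
    return lo


def certify(n_a: int, n: int, sigma: float, alpha: float) -> Optional[float]:
    """Certified L2 radius for the top class, or None to abstain."""
    p_a_lower = clopper_pearson_lower(n_a, n, alpha)
    if p_a_lower < 0.5:
        return None  # not confident the top class has probability above 1/2
    return sigma * NormalDist().inv_cdf(p_a_lower)
```

With the settings above (sigma=0.25, N=100, alpha=0.001), even a unanimous vote gives a lower bound of 0.001^(1/100), roughly 0.933, so the certified radius stays modest; a small N mainly keeps the evaluation cheap.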

If you find this paper useful in your research, please consider citing:

@ARTICLE{10716799,

author={Chen, Jianqi and Chen, Hao and Chen, Keyan and Zhang, Yilan and Zou, Zhengxia and Shi, Zhenwei},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

title={Diffusion Models for Imperceptible and Transferable Adversarial Attack},

year={2024},

volume={},

number={},

pages={1-17},

keywords={Diffusion models;Perturbation methods;Closed box;Noise reduction;Solid modeling;Image color analysis;Glass box;Semantics;Gaussian noise;Purification;Adversarial attack;diffusion model;imperceptible attack;transferable attack},

doi={10.1109/TPAMI.2024.3480519}}

We also thank the authors of Prompt-to-Prompt for their open-source code, on which parts of our code are based.

This project is licensed under the Apache-2.0 license. See LICENSE for details.