PyTorch code for "Frequency-Assisted Mamba for Remote Sensing Image Super-Resolution", IEEE Transactions on Multimedia (TMM), 2024.

- Authors: Yi Xiao, Qiangqiang Yuan*, Kui Jiang, Yuzeng Chen, Qiang Zhang, and Chia-Wen Lin

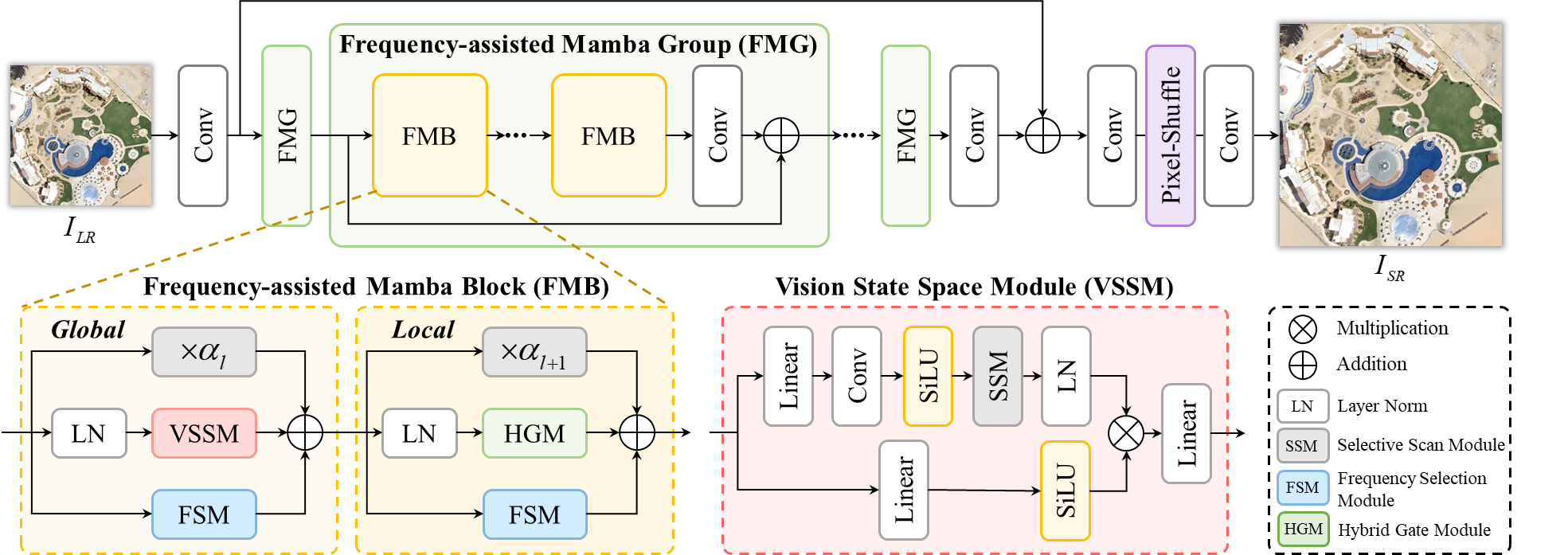

Recent progress in remote sensing image (RSI) super-resolution (SR) has exhibited remarkable performance using deep neural networks, e.g., Convolutional Neural Networks and Transformers. However, existing SR methods often suffer from either a limited receptive field or quadratic computational overhead, resulting in sub-optimal global representation and unacceptable computational costs in large-scale RSI. To alleviate these issues, we make the first attempt to integrate the Vision State Space Model (Mamba) for RSI-SR, which specializes in processing large-scale RSI by capturing long-range dependency with linear complexity. To achieve better SR reconstruction, building upon Mamba, we devise a Frequency-assisted Mamba framework, dubbed FMSR, to explore the spatial and frequency correlations. In particular, our FMSR features a multi-level fusion architecture equipped with the Frequency Selection Module (FSM), Vision State Space Module (VSSM), and Hybrid Gate Module (HGM) to grasp their merits for effective spatial-frequency fusion. Recognizing that global and local dependencies are complementary and both beneficial for SR, we further recalibrate these multi-level features for accurate feature fusion via learnable scaling adaptors. Extensive experiments on the AID, DOTA, and DIOR benchmarks demonstrate that our FMSR outperforms the state-of-the-art Transformer-based method HAT-L in terms of PSNR by 0.11 dB on average, while requiring only 28.05% of its memory and 19.08% of its computational complexity.
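For readers unfamiliar with the PSNR metric quoted above, the following is a minimal sketch of the standard definition, PSNR = 10 · log10(MAX² / MSE). The function name `psnr`, the flat-sequence input format, and the default peak value of 255 are assumptions made for illustration. The evaluation script in this repository may differ, for example in colour-space conversion or border cropping.

```python
import math


def psnr(reference, estimate, max_value=255.0):
    """Return the PSNR in dB between two equally sized flat sequences of pixel values.

    Illustrative helper only; it is not taken from this repository.
    """
    if len(reference) != len(estimate):
        raise ValueError("inputs must contain the same number of pixels")
    if len(reference) == 0:
        raise ValueError("inputs must not be empty")

    # Mean squared error over all pixels.
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)

    # Identical images have zero error, so PSNR is unbounded.
    if mse == 0:
        return math.inf

    return 10.0 * math.log10(max_value ** 2 / mse)
```

Because PSNR is logarithmic in the mean squared error, a gain of 0.11 dB corresponds to a reduction in MSE of roughly 2.5%.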

git clone https://github.com/XY-boy/FreMamba.git

Please download the following remote sensing benchmarks:

| Data Type | AID | DOTA-v1.0 | DIOR | NWPU-RESISC45 |
|---|---|---|---|---|
| Training | Download | None | None | None |
| Testing | Download | Download | Download | Download |

- CUDA 11.1

- Python 3.9.13

- PyTorch 1.9.1

- Torchvision 0.10.1

- causal_conv1d==1.0.0

- mamba_ssm==1.0.1
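A quick way to compare an existing environment against the versions listed above is sketched below. It uses only the standard library. The distribution names in `EXPECTED` (for example `causal-conv1d` and `mamba-ssm`) are assumed to match the names the packages are installed under, and `check_versions` is a hypothetical helper, not part of this repository.

```python
from importlib import metadata

# Versions from the requirements list above.
# The keys are assumed distribution names; adjust them if your install differs.
EXPECTED = {
    "torch": "1.9.1",
    "torchvision": "0.10.1",
    "causal-conv1d": "1.0.0",
    "mamba-ssm": "1.0.1",
}


def check_versions(expected=EXPECTED, lookup=metadata.version):
    """Return {name: (wanted, found, ok)} for each expected package."""
    report = {}
    for name, wanted in expected.items():
        try:
            found = lookup(name)
        except metadata.PackageNotFoundError:
            found = None  # package is not installed
        # Ignore local build tags such as "+cu111" when comparing versions.
        ok = found is not None and found.split("+")[0] == wanted
        report[name] = (wanted, found, ok)
    return report


if __name__ == "__main__":
    for name, (wanted, found, ok) in check_versions().items():
        status = "OK" if ok else "MISMATCH"
        print(f"{name}: wanted {wanted}, found {found} [{status}]")
```

The CUDA and Python versions are not checked here; `python --version` and `nvcc --version` cover those.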

- Step I. Use the structure below to prepare your dataset, e.g., DOTA and DIOR.

      /xxxx/xxx/ (your data path)
          /GT/
              /000.png
              /···.png
              /099.png
          /LR/
              /000.png
              /···.png
              /099.png

- Step II. Change `--data_dir` to your data path.
- Step III. Change `--pretrained_sr` to your pre-trained model path.
- Step IV. Run `eval_4x.py`:

python eval_4x.py
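Before running the evaluation, the folder layout from Step I can be sanity-checked with a short script such as the sketch below. It assumes that each image in `GT/` has a counterpart with the same file name in `LR/`, as the layout in Step I suggests. `check_pairs` is a hypothetical helper and is not part of this repository.

```python
from pathlib import Path


def check_pairs(data_dir, gt_name="GT", lr_name="LR", suffix=".png"):
    """Compare file names in <data_dir>/GT and <data_dir>/LR.

    Returns three sorted lists: names present in both folders,
    names missing from LR, and names missing from GT.
    """
    root = Path(data_dir)
    gt_files = {p.name for p in (root / gt_name).glob(f"*{suffix}")}
    lr_files = {p.name for p in (root / lr_name).glob(f"*{suffix}")}

    paired = sorted(gt_files & lr_files)
    missing_lr = sorted(gt_files - lr_files)  # GT image without an LR input
    missing_gt = sorted(lr_files - gt_files)  # LR input without a GT target
    return paired, missing_lr, missing_gt
```

Calling `check_pairs` with the same path passed to `--data_dir` and confirming that the second and third lists are empty catches naming mismatches early.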

python train_4x.py

Our work is built upon MambaIR. Thanks to the author for sharing this awesome work!

If you find our work helpful in your research, please consider citing it!

@ARTICLE{xiao2024fmsr,
  author={Xiao, Yi and Yuan, Qiangqiang and Jiang, Kui and Chen, Yuzeng and Zhang, Qiang and Lin, Chia-Wen},
  journal={IEEE Transactions on Multimedia},
  title={Frequency-Assisted Mamba for Remote Sensing Image Super-Resolution},
  year={2024},
  volume={26},
  number={},
  pages={1-14},
}