LibMTL is an open-source library built on PyTorch for Multi-Task Learning (MTL). See the latest documentation for detailed introductions and API instructions.

⭐ Star us on GitHub — it motivates us a lot!

- [Jul 22 2022]: Added support for Nash-MTL (ICML 2022).

- [Jul 21 2022]: Added support for Learning to Branch (ICML 2020). Many thanks to @yuezhixiong (#14).

- [Mar 29 2022]: The paper is now available on arXiv.

- Features

- Overall Framework

- Supported Algorithms

- Installation

- Quick Start

- Citation

- Contributors

- Contact Us

- Acknowledgements

- License

- Unified:

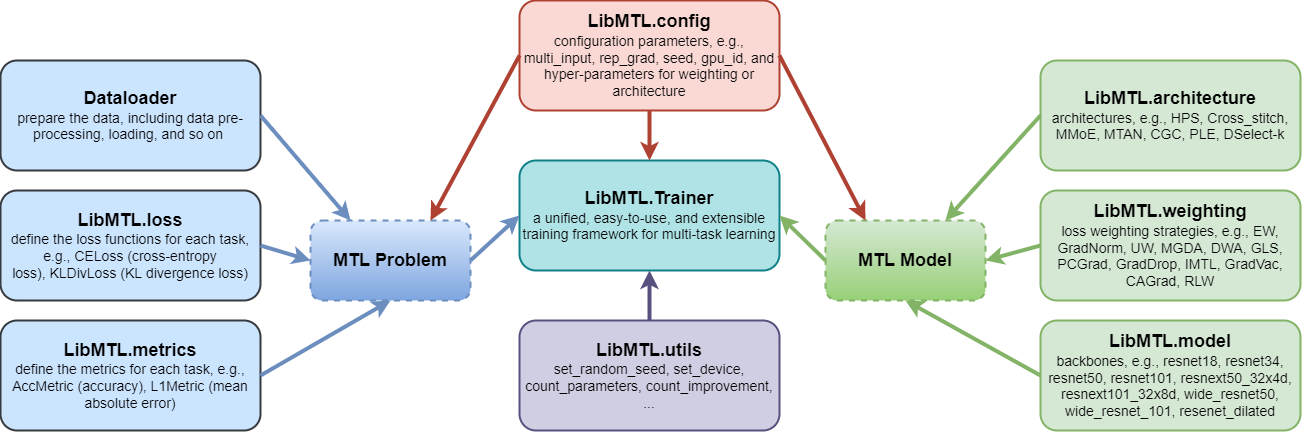

LibMTLprovides a unified code base to implement and a consistent evaluation procedure including data processing, metric objectives, and hyper-parameters on several representative MTL benchmark datasets, which allows quantitative, fair, and consistent comparisons between different MTL algorithms. - Comprehensive:

LibMTLsupports 104 MTL models combined by 8 architectures and 13 loss weighting strategies. Meanwhile,LibMTLprovides a fair comparison on 3 computer vision datasets. - Extensible:

LibMTLfollows the modular design principles, which allows users to flexibly and conveniently add customized components or make personalized modifications. Therefore, users can easily and fast develop novel loss weighting strategies and architectures or apply the existing MTL algorithms to new application scenarios with the support ofLibMTL.

Each module is introduced in Docs.

LibMTL currently supports the following algorithms:

- 13 loss weighting strategies.

| Weighting Strategy | Venues | Comments |
|---|---|---|
| Equal Weighting (EW) | - | Implemented by us |
| Gradient Normalization (GradNorm) | ICML 2018 | Implemented by us |
| Uncertainty Weights (UW) | CVPR 2018 | Implemented by us |
| MGDA | NeurIPS 2018 | Referenced from official PyTorch implementation |
| Dynamic Weight Average (DWA) | CVPR 2019 | Referenced from official PyTorch implementation |
| Geometric Loss Strategy (GLS) | CVPR 2019 workshop | Implemented by us |
| Projecting Conflicting Gradient (PCGrad) | NeurIPS 2020 | Implemented by us |
| Gradient sign Dropout (GradDrop) | NeurIPS 2020 | Implemented by us |
| Impartial Multi-Task Learning (IMTL) | ICLR 2021 | Implemented by us |
| Gradient Vaccine (GradVac) | ICLR 2021 Spotlight | Implemented by us |
| Conflict-Averse Gradient descent (CAGrad) | NeurIPS 2021 | Referenced from official PyTorch implementation |
| Nash-MTL | ICML 2022 | Referenced from official PyTorch implementation |
| Random Loss Weighting (RLW) | arXiv | Implemented by us |

- 8 architectures.

| Architecture | Venues | Comments |
|---|---|---|
| Hard Parameter Sharing (HPS) | ICML 1993 | Implemented by us |
| Cross-stitch Networks (Cross_stitch) | CVPR 2016 | Implemented by us |
| Multi-gate Mixture-of-Experts (MMoE) | KDD 2018 | Implemented by us |
| Multi-Task Attention Network (MTAN) | CVPR 2019 | Referenced from official PyTorch implementation |
| Customized Gate Control (CGC), Progressive Layered Extraction (PLE) | ACM RecSys 2020 Best Paper | Implemented by us |
| Learning to Branch (LTB) | ICML 2020 | Implemented by us |
| DSelect-k | NeurIPS 2021 | Referenced from official TensorFlow implementation |

- Any combination of the architectures and loss weighting strategies listed above.
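The count of 104 supported models follows from pairing each of the 8 architectures with each of the 13 loss weighting strategies. The sketch below only illustrates that arithmetic. The strings are the abbreviations from the two tables, used here as display labels, and may not match the exact identifiers the library expects. The helper `all_combinations` is hypothetical and not part of LibMTL.

```python
from itertools import product

# Labels copied from the tables above. They are display names for this
# illustration, not necessarily the identifiers LibMTL itself accepts.
WEIGHTINGS = ["EW", "GradNorm", "UW", "MGDA", "DWA", "GLS", "PCGrad",
              "GradDrop", "IMTL", "GradVac", "CAGrad", "Nash-MTL", "RLW"]
ARCHITECTURES = ["HPS", "Cross_stitch", "MMoE", "MTAN", "CGC", "PLE",
                 "LTB", "DSelect-k"]


def all_combinations(architectures, weightings):
    """Return every (architecture, weighting) pair as a list of tuples."""
    return list(product(architectures, weightings))


combos = all_combinations(ARCHITECTURES, WEIGHTINGS)
print(len(combos))  # 8 * 13 = 104
```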

The simplest way to install LibMTL is using pip.

    pip install -U LibMTL

More details about environment configuration are provided in Docs.
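One way to confirm that the installation succeeded is to ask Python's packaging metadata for the installed version. This is a minimal sketch using only the standard library. It assumes the distribution is registered under the name `LibMTL`, as in the pip command, and `installed_version` is a hypothetical helper, not part of LibMTL.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(dist_name="LibMTL"):
    """Return the installed version string, or None if no such distribution is found."""
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


found = installed_version()
print(f"LibMTL {found}" if found else "LibMTL is not installed")
```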

We use the NYUv2 dataset as an example to show how to use LibMTL.

The NYUv2 dataset we used is pre-processed by mtan. You can download this dataset here.

The complete training code for the NYUv2 dataset is provided in examples/nyu. The file train_nyu.py is the main file for training on the NYUv2 dataset.

You can find the command-line arguments by running the following command.

    python train_nyu.py -h

For instance, the following command trains an MTL model with EW and HPS on the NYUv2 dataset.

    python train_nyu.py --weighting EW --arch HPS --dataset_path /path/to/nyuv2 --gpu_id 0 --scheduler step

More details are provided in Docs.

If you find LibMTL useful for your research or development, please cite the following:

    @article{LibMTL,
      title={{LibMTL}: A Python Library for Multi-Task Learning},
      author={Baijiong Lin and Yu Zhang},
      journal={arXiv preprint arXiv:2203.14338},
      year={2022}
    }

LibMTL is developed and maintained by Baijiong Lin and Yu Zhang.

If you have any questions or suggestions, please feel free to contact us by raising an issue or sending an email to bj.lin.email@gmail.com.

We would like to thank the authors who released the following public repositories (listed alphabetically): CAGrad, dselect_k_moe, MultiObjectiveOptimization, mtan, and nash-mtl.

LibMTL is released under the MIT license.