Supreme Court of India Hackathon 2024 Project

- Introduction

- Problem Statement

- Solution Overview

- Technologies Used

- Architecture

- Future Improvements

- Data Privacy and Security

- Key Issues Foreseen

- Getting Started

- Acknowledgements

The Legal Assist Chatbot is a multilingual conversational AI tool designed to provide legal information and summarize court documents in English and various scheduled Indian languages. This project was developed for the Supreme Court of India Hackathon 2024.

The challenge is to develop an AI-based conversational chatbot, in English and the scheduled languages of the Constitution of India, 1950, that answers queries about case-related information and summarizes judgments, court documents, and similar material.

Our solution is a multilingual legal chatbot that combines a Google Gemini model with Retrieval-Augmented Generation (RAG). The chatbot is designed to:

- Provide accurate answers to legal queries.

- Summarize judgments and retrieve relevant court documents.

- Support multiple Indian languages for wider accessibility.

- Ensure compliance with data privacy regulations.

The chatbot is built using a scalable cloud platform, enabling it to handle large volumes of queries efficiently and provide a seamless user experience.
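As one illustration of the multilingual requirement, a chatbot can guess the script of an incoming query before deciding how to respond. The sketch below is an assumption, not the project's implementation: `detect_script` is a hypothetical helper that counts Unicode script names using only the Python standard library. A script is not a language (Devanagari alone is shared by Hindi, Marathi, and others), so a real system would likely need a proper language-identification step.

```python
import unicodedata
from collections import Counter


def detect_script(text: str) -> str:
    """Return the dominant Unicode script among the letters in `text`.

    Hypothetical helper for illustration: the first word of a character's
    Unicode name (e.g. "DEVANAGARI", "TAMIL", "LATIN") is treated as its script.
    """
    scripts = Counter()
    for ch in text:
        if ch.isalpha():  # skip digits, spaces, punctuation, and combining marks
            name = unicodedata.name(ch, "")
            if name:
                scripts[name.split()[0]] += 1
    if not scripts:
        return "UNKNOWN"
    return scripts.most_common(1)[0][0]


print(detect_script("What is anticipatory bail?"))
print(detect_script("जमानत क्या है?"))
```

The detected script could then be used to pick the response language or to route the query, though how the project actually handles this is not stated here.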

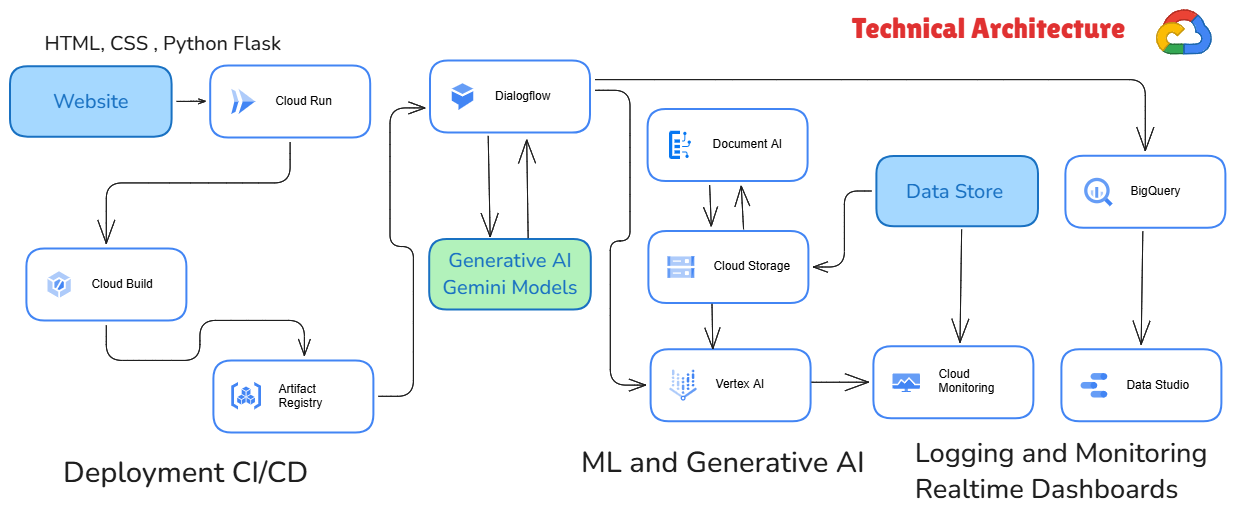

- Retrieval-Augmented Generation (RAG): Grounds generated answers in retrieved legal documents instead of relying on the model's training data alone.

- Google Gemini: The large language model that generates the responses.

- Vertex AI: For orchestrating the retrieval, transformation, and generation processes.

- Flask: Used for creating the chatbot interface.

- Vector Database: For efficient semantic search and document retrieval.

- Machine Learning Models: Used to generate context-aware responses.
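To show how Flask could expose the chatbot, here is a minimal sketch of a JSON endpoint. Everything specific in it is an assumption made for illustration: the `/chat` route, the `question` and `language` fields, and the `answer_question` placeholder, which stands in for the real retrieval and generation steps.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def answer_question(question: str, language: str) -> str:
    """Hypothetical placeholder for the RAG pipeline (retrieval + generation)."""
    return f"[{language}] No answer available yet for: {question}"


@app.route("/chat", methods=["POST"])
def chat():
    # Parse the JSON body leniently so malformed requests get a clean 400.
    payload = request.get_json(silent=True) or {}
    question = str(payload.get("question", "")).strip()
    language = str(payload.get("language", "en"))
    if not question:
        return jsonify({"error": "The 'question' field is required."}), 400
    answer = answer_question(question, language)
    return jsonify({"answer": answer, "language": language})
```

If this were saved as `app.py`, `flask run` would serve it on port 5000 by default, and a POST to `/chat` with a JSON body such as `{"question": "What is bail?", "language": "en"}` would return the placeholder answer.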

The Legal Assist Chatbot follows a multi-stage RAG architecture:

- Question Input: The user poses a legal question.

- Semantic Search: Using embeddings to find relevant documents or data.

- Vector Database: Storing data as mathematical representations for efficient retrieval.

- Large Language Model (LLM): Generates natural language responses based on retrieved context.

- Post-Processing: Refining and ensuring the quality of the generated response.

- Response Output: Presents the final answer to the user.
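The stages above can be sketched end to end in a few lines of Python. This is a toy under stated assumptions, not the project's code: word-count vectors stand in for learned embeddings, an in-memory list stands in for the vector database, and `generate` is a stub where a call to the language model would go. All names (`VectorStore`, `embed`, `generate`, `post_process`, `answer_question`) and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self):
        self.items = []  # list of (doc_id, text, vector)

    def add(self, doc_id: str, text: str) -> None:
        self.items.append((doc_id, text, embed(text)))

    def search(self, query: str, k: int = 2):
        """Semantic search step: return the k most similar documents."""
        q = embed(query)
        scored = [(doc_id, text, cosine(q, vec)) for doc_id, text, vec in self.items]
        return sorted(scored, key=lambda item: item[2], reverse=True)[:k]


def generate(question: str, context: str) -> str:
    """Stub for the LLM call; a real system would prompt the model here."""
    return f"Based on the retrieved context:   {context}"


def post_process(answer: str) -> str:
    """Post-processing step: here, just normalize whitespace."""
    return " ".join(answer.split())


def answer_question(store: VectorStore, question: str) -> str:
    hits = store.search(question, k=2)
    context = " ".join(text for _, text, _ in hits)
    return post_process(generate(question, context))


store = VectorStore()
store.add("bail", "Bail is the conditional release of an accused person pending trial.")
store.add("fir", "A First Information Report is the written record police prepare about a cognizable offence.")
store.add("appeal", "An appeal asks a higher court to review the decision of a lower court.")

print(answer_question(store, "What is bail?"))
```

Keeping retrieval, generation, and post-processing as separate functions mirrors the staged design and lets each stage be swapped out independently, for example replacing `embed` with a real embedding model without touching the rest.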

- Expand Language Support: Include more Indian languages and dialects.

- Advanced NLP Models: Integrate more sophisticated models for better context understanding.

- Continuous Learning: Implement feedback loops for model improvement based on user interactions.

- Voice Integration: Add voice support for enhanced user experience.

- Real-Time Updates: Incorporate real-time legal updates to ensure the most current information.

- Cross-Platform Deployment: Extend accessibility by deploying the chatbot on various platforms like mobile apps and social media.

Ensuring the protection of sensitive legal information is crucial. We implement robust encryption, access controls, and compliance with data privacy laws to safeguard user data.

- Real-Time Accuracy: Ensuring real-time and accurate responses is challenging. We use efficient indexing and retrieval algorithms to enhance performance.

- Model Bias: Bias in language models can lead to incorrect or unfair responses. Regular audits and diverse training data are used to minimize biases.

To get a copy of the project up and running on your local machine, follow these steps:

- Clone the Repository: `git clone https://github.com/Shrutika-211998/Legal-Assist-Chatbot.git`

- Install Dependencies: `cd Legal-Assist-Chatbot`, then `pip install -r requirements.txt`

- Run the Application: `flask run`

The chatbot should now be running on http://localhost:5000.

We would like to thank the Supreme Court of India Hackathon 2024 for providing the opportunity to develop this project. Special thanks to our team members:

- Shreeja Sharma

- Shrutika Shripat

- Vijay Kumar

- Vishwas Dubey

- Yash Kavaiya