EMOPIA_cls

This is the official repository of EMOPIA: A Multi-Modal Pop Piano Dataset For Emotion Recognition and Emotion-based Music Generation. The paper has been accepted at the International Society for Music Information Retrieval Conference (ISMIR) 2021. This repository covers the emotion recognition part (audio and MIDI domains).

News!

2021-10-29 Updated MATLAB features (key, tempo, note density)

2021-07-21 Updated dataset

2021-07-20 Uploaded all pretrained weights

You can check the ML performance in the notebook.

Environment

- Install Python and PyTorch:

- python==3.8.5

- torch==1.8.0 (Please install it according to your CUDA version.)

- Other requirements:

- pip install -r requirements.txt

- Clone the MIDI processors (already included in this repository):

- MIDI-like (Magenta)

- REMI

- If you want to build a new REMI corpus or vocabulary from another dataset, please check the official repo of compound-word-transformer and EMOPIA_cls/midi_cls/midi_helper/remi/src

Usage

Inference

Download the model weights from Here and unzip them in the project directory.

- MIDI domain inference

python inference.py --types {midi_like or remi} --task ar_va --file_path {your_midi} --cuda {cuda}

python inference.py --types {midi_like or remi} --task arousal --file_path {your_midi} --cuda {cuda}

python inference.py --types {midi_like or remi} --task valence --file_path {your_midi} --cuda {cuda}

- Audio domain inference

python inference.py --types wav --task ar_va --file_path {your_mp3} --cuda {cuda}

python inference.py --types wav --task arousal --file_path {your_mp3} --cuda {cuda}

python inference.py --types wav --task valence --file_path {your_mp3} --cuda {cuda}
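When running inference on many files, it can help to build the command from the file name. The following is a minimal sketch, not part of this repository: the helper name `build_inference_command` and the extension-to-type mapping are illustrative assumptions, while the flags (`--types`, `--task`, `--file_path`, `--cuda`) are the ones listed above. The accepted format of the `--cuda` value is not specified here, so the sketch passes it through unchanged.

```python
import shlex
from pathlib import Path

# Task names and flags are taken from the commands in this README.
TASKS = ("ar_va", "arousal", "valence")
MIDI_TYPES = ("midi_like", "remi")


def build_inference_command(file_path, task="ar_va", midi_type="midi_like", cuda=None):
    """Return the argument list for one inference.py call (hypothetical helper)."""
    if task not in TASKS:
        raise ValueError(f"unknown task: {task}")
    if midi_type not in MIDI_TYPES:
        raise ValueError(f"unknown MIDI representation: {midi_type}")

    # Assumption: .mid files go to the MIDI models, .mp3 files to the audio model.
    suffix = Path(file_path).suffix.lower()
    if suffix == ".mid":
        types = midi_type
    elif suffix == ".mp3":
        types = "wav"
    else:
        raise ValueError(f"unsupported file extension: {suffix}")

    cmd = ["python", "inference.py", "--types", types,
           "--task", task, "--file_path", str(file_path)]
    if cuda is not None:
        cmd += ["--cuda", str(cuda)]
    return cmd


if __name__ == "__main__":
    sample = "./dataset/sample_data/Sakamoto_MerryChristmasMr_Lawrence.mp3"
    for task in TASKS:
        print(shlex.join(build_inference_command(sample, task)))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` from the project directory.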

Inference results

python inference.py --types wav --task ar_va --file_path ./dataset/sample_data/Sakamoto_MerryChristmasMr_Lawrence.mp3

./dataset/sample_data/Sakamoto_MerryChristmasMr_Lawrence.mp3 is emotion Q3

Inference values: [0.33273646 0.17223473 0.63210356 0.07314324]

python inference.py --types midi_like --task ar_va --file_path ./dataset/sample_data/Sakamoto_MerryChristmasMr_Lawrence.mid

./dataset/sample_data/Sakamoto_MerryChristmasMr_Lawrence.mid is emotion Q3

Inference values: [-1.3685153 -1.3001229 2.2495744 -0.873877 ]
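The two results above are consistent with reading the four inference values as per-class scores ordered Q1 to Q4 and reporting the class with the highest score. The sketch below illustrates that reading; the ordering and the argmax rule are assumptions inferred from the printed output, and `predicted_quadrant` is a hypothetical helper, not a function from `inference.py`.

```python
# Assumption: values are ordered Q1, Q2, Q3, Q4 and the label is the argmax.
QUADRANTS = ("Q1", "Q2", "Q3", "Q4")


def predicted_quadrant(values):
    """Return the quadrant label whose score is highest."""
    if len(values) != len(QUADRANTS):
        raise ValueError("expected one score per quadrant")
    best = max(range(len(values)), key=lambda i: values[i])
    return QUADRANTS[best]


# Values copied from the sample outputs above.
audio_values = [0.33273646, 0.17223473, 0.63210356, 0.07314324]
midi_values = [-1.3685153, -1.3001229, 2.2495744, -0.873877]

print(predicted_quadrant(audio_values))
print(predicted_quadrant(midi_values))
```

Both lists have their largest value in the third position, which matches the reported Q3 label for the audio and MIDI samples.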

Training from scratch

- Download the data files from HERE.

- Preprocessing

a. audio: resampling to 22050 Hz

b. midi: Magenta (MIDI-like) feature extraction, REMI feature extraction

python preprocessing.py

- Training options:

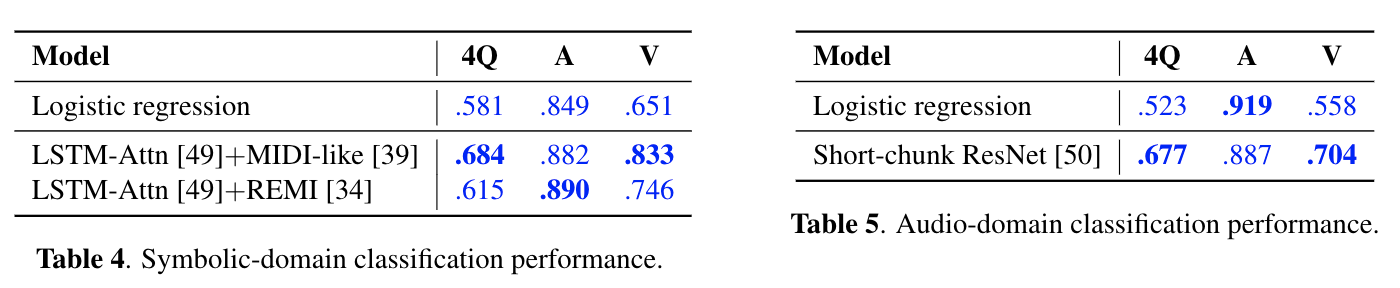

a. MIDI domain classification

cd midi_cls

python train_test.py --midi {midi_like or remi} --task ar_va

python train_test.py --midi {midi_like or remi} --task arousal

python train_test.py --midi {midi_like or remi} --task valence

b. Wav domain classification

cd audio_cls

python train_test.py --wav sr22k --task ar_va

python train_test.py --wav sr22k --task arousal

python train_test.py --wav sr22k --task valence
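To train every configuration listed above in one pass, the runs can be enumerated programmatically. This is a minimal sketch under the assumption that each `train_test.py` is launched from its own directory (`midi_cls` or `audio_cls`) relative to the project root; `training_runs` is a hypothetical helper, and only flags shown in this README are used.

```python
from itertools import product

TASKS = ("ar_va", "arousal", "valence")
MIDI_REPRESENTATIONS = ("midi_like", "remi")


def training_runs():
    """Yield (working_dir, argv) pairs for each train_test.py call in this README."""
    # MIDI domain: every representation crossed with every task.
    for midi, task in product(MIDI_REPRESENTATIONS, TASKS):
        yield "midi_cls", ["python", "train_test.py", "--midi", midi, "--task", task]
    # Audio domain: a single input type (sr22k) for every task.
    for task in TASKS:
        yield "audio_cls", ["python", "train_test.py", "--wav", "sr22k", "--task", task]


if __name__ == "__main__":
    for cwd, argv in training_runs():
        print(f"(cd {cwd} && {' '.join(argv)})")
```

Each pair can be executed with `subprocess.run(argv, cwd=cwd, check=True)`, which avoids changing the shell's working directory between the MIDI and audio runs.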

Authors

The paper is a collaboration with Anna, Joann, and Nabin. This repository is maintained by me.

License

The EMOPIA dataset is released under Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0). It is provided primarily for research purposes and must not be used for commercial purposes. When sharing results based on EMOPIA, any act that defames the original music owner is strictly prohibited.

Cite the dataset

@inproceedings{EMOPIA,

author = {Hung, Hsiao-Tzu and Ching, Joann and Doh, Seungheon and Kim, Nabin and Nam, Juhan and Yang, Yi-Hsuan},

title = {{EMOPIA}: A Multi-Modal Pop Piano Dataset For Emotion Recognition and Emotion-based Music Generation},

booktitle = {Proc. Int. Society for Music Information Retrieval Conf.},

year = {2021}

}