Official implementation of the ICML 2023 paper "On Pitfalls of Test-time Adaptation".

TTAB is a benchmark for standardizing and comprehensively evaluating Test-time Adaptation algorithms on a diverse array of distribution shifts.

The TTAB package contains:

- Data loaders that automatically handle data processing and splitting to cover multiple significant evaluation settings considered in prior work.

- Unified dataset evaluators that standardize model evaluation for each dataset and setting.

- Multiple representative Test-time Adaptation (TTA) algorithms.

In addition, the example scripts contain default models, optimizers, and evaluation code. New algorithms can be easily added and run on all of the TTAB datasets.

To run a baseline test, please prepare the relevant pre-trained checkpoints for the base model and place them in pretrain/ckpt/.

The TTAB package depends on the following requirements:

- numpy>=1.21.5

- pandas>=1.1.5

- pillow>=9.0.1

- pytz>=2021.3

- torch>=1.7.1

- torchvision>=0.8.2

- timm>=0.6.11

- scikit-learn>=1.0.3

- scipy>=1.7.3

- tqdm>=4.56.2
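As a quick sanity check before running the benchmark, the minimum versions listed above can be compared against the current environment. The snippet below is only a sketch: `parse_version` and `check_requirements` are hypothetical helpers written for this illustration, not part of TTAB, and the version parsing is deliberately simple (it ignores pre-release tags and local build suffixes).

```python
from importlib import metadata

# Minimum versions copied from the requirements list above.
REQUIREMENTS = {
    "numpy": "1.21.5",
    "pandas": "1.1.5",
    "pillow": "9.0.1",
    "pytz": "2021.3",
    "torch": "1.7.1",
    "torchvision": "0.8.2",
    "timm": "0.6.11",
    "scikit-learn": "1.0.3",
    "scipy": "1.7.3",
    "tqdm": "4.56.2",
}


def parse_version(text):
    """Turn '1.21.5' or '2.1.0+cu118' into a tuple of ints for comparison."""
    parts = []
    for piece in text.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def check_requirements(requirements=REQUIREMENTS):
    """Return a dict mapping each package name to a short status string."""
    report = {}
    for name, minimum in requirements.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "missing"
            continue
        ok = parse_version(installed) >= parse_version(minimum)
        report[name] = "ok" if ok else f"too old ({installed} < {minimum})"
    return report


if __name__ == "__main__":
    for name, status in check_requirements().items():
        print(f"{name}: {status}")
```

Running the file directly prints one status line per package, which makes it easy to spot a missing or outdated dependency.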

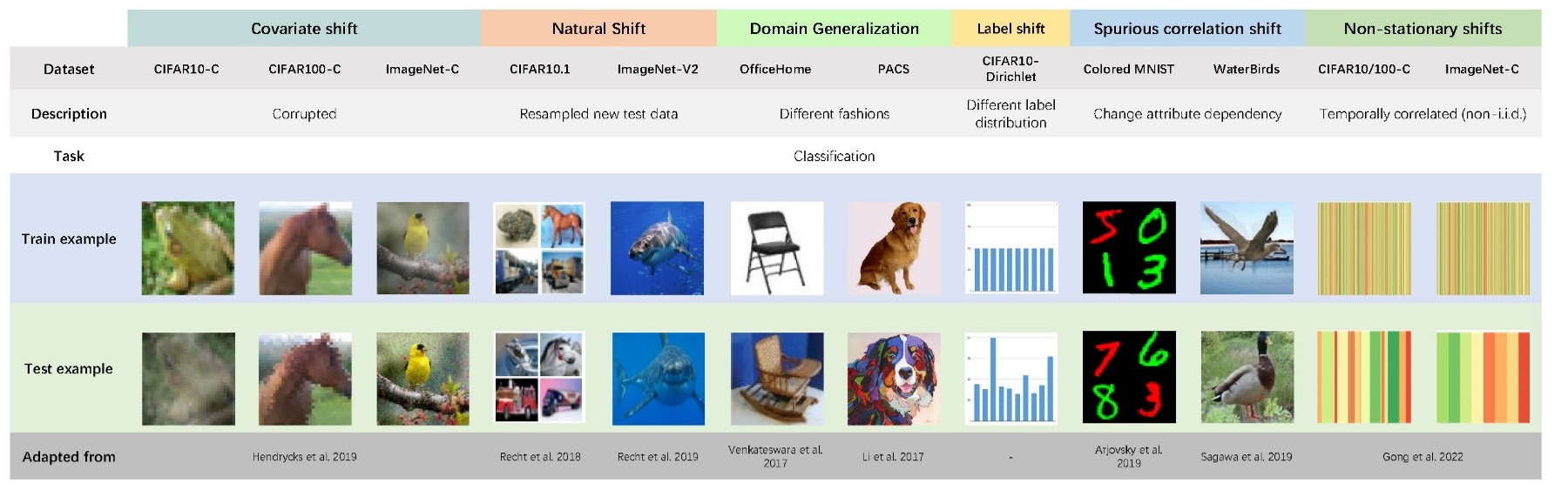

Distribution shift occurs when the test distribution differs from the training distribution, and it can considerably degrade the performance of machine learning models deployed in the real world. The form of distribution shift varies greatly across applications. In TTAB, we collect 10 datasets and systematically sort them into 5 types of distribution shifts:

- Covariate Shift

- Natural Shift

- Domain Generalization

- Label Shift

- Spurious Correlation Shift

The TTAB package provides a simple, standardized interface for all TTA algorithms and datasets in the benchmark. This short Python snippet covers all of the steps of getting started with a user-customizable configuration, including the choice of TTA algorithms, datasets, base models, model selection methods, experimental setups, evaluation scenarios (we will discuss evaluation scenarios in more detail in Scenario) and protocols.

    # Assumes the TTAB helpers used below (configs_utils, define_dataset, ...)
    # are already imported and that init_config holds the parsed arguments.
    config, scenario = configs_utils.config_hparams(config=init_config)

    # Dataset.
    test_data_cls = define_dataset.ConstructTestDataset(config=config)
    test_loader = test_data_cls.construct_test_loader(scenario=scenario)

    # Base model.
    model = define_model(config=config)
    load_pretrained_model(config=config, model=model)

    # Algorithms.
    model_adaptation_cls = get_model_adaptation_method(
        adaptation_name=scenario.model_adaptation_method
    )(meta_conf=config, model=model)
    model_selection_cls = get_model_selection_method(
        selection_name=scenario.model_selection_method
    )(meta_conf=config, model=model)

    # Evaluate.
    benchmark = Benchmark(
        scenario=scenario,
        model_adaptation_cls=model_adaptation_cls,
        model_selection_cls=model_selection_cls,
        test_loader=test_loader,
        meta_conf=config,
    )
    benchmark.eval()

For evaluation, the TTAB package provides two types of dataset objects. The standard dataset object stores data, labels, and indices, and exposes several APIs that support high-level manipulation, such as mixing the source and target domains. It supports common evaluation metrics such as Top-1 accuracy and cross-entropy.

To support other metrics, such as worst-group accuracy, for more robust evaluation, we provide a group-wise dataset object that records additional group information.
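To make the difference between the two kinds of metric concrete, here is a minimal sketch in plain Python. It does not use the TTAB dataset objects; `top1_accuracy` and `worst_group_accuracy` are hypothetical helpers, and the group labels stand in for the additional group information that a group-wise dataset would record.

```python
from collections import defaultdict


def top1_accuracy(predictions, labels):
    """Fraction of samples whose predicted class equals the label."""
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return correct / len(labels)


def worst_group_accuracy(predictions, labels, groups):
    """Return (lowest per-group accuracy, dict of accuracy per group)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for p, y, g in zip(predictions, labels, groups):
        total[g] += 1
        correct[g] += int(p == y)
    per_group = {g: correct[g] / total[g] for g in total}
    return min(per_group.values()), per_group


# Toy data: group "a" is classified perfectly, group "b" only half the time.
predictions = [0, 1, 1, 0, 1, 0]
labels = [0, 1, 0, 0, 1, 0]
groups = ["a", "a", "b", "a", "a", "b"]

overall = top1_accuracy(predictions, labels)
worst, per_group = worst_group_accuracy(predictions, labels, groups)
print(overall, worst, per_group)
```

In this toy example the overall accuracy hides the weak group: five of six predictions are correct, yet group "b" reaches only 0.5, which is what the worst-group metric reports.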

To provide a more seamless user experience, we have designed a unified data loader that supports all dataset objects. To load data in TTAB, simply run the following command with config and scenario as inputs.

    test_data_cls = define_dataset.ConstructTestDataset(config=config)
    test_loader = test_data_cls.construct_test_loader(scenario=scenario)

In the scenario section, we outline all relevant parameters for defining a distribution shift problem in practice, such as test_domain and test_case. The test_domain specifies the test data and the distribution shift applied to it, while test_case determines how we organize the dataset corresponding to test_domain into a data stream that will be fed to TTA methods. We also define the model architecture, TTA method, and model selection method used for the defined distribution shift problem.
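The idea of organizing a dataset into a data stream can be illustrated with a small sketch. This is not the TTAB data loader: `make_stream` is a hypothetical helper, and its `batch_size` and `shuffle` arguments are only assumed to play roles similar to batch-size and shuffling options in a test case.

```python
import random


def make_stream(samples, batch_size, shuffle=True, seed=0):
    """Yield consecutive batches from `samples` as one online data stream."""
    order = list(range(len(samples)))
    if shuffle:
        # Shuffle sample order with a fixed seed so the stream is reproducible.
        random.Random(seed).shuffle(order)
    for start in range(0, len(order), batch_size):
        yield [samples[i] for i in order[start:start + batch_size]]


samples = list(range(10))

# Without shuffling, batches follow the original dataset order.
ordered_batches = list(make_stream(samples, batch_size=4, shuffle=False))

# With shuffling, every sample still appears exactly once in the stream.
shuffled_batches = list(make_stream(samples, batch_size=4, shuffle=True))
print(ordered_batches)
```

A TTA method would then consume such a stream one batch at a time, adapting the model as each batch arrives.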

Here, we present an example scenario. Please feel free to suggest a new scenario for your research.

    "S1": Scenario(
        task="classification",
        model_name="resnet26",
        model_adaptation_method="tent",
        model_selection_method="last_iterate",
        base_data_name="cifar10",
        test_domains=[
            TestDomain(
                base_data_name="cifar10",
                data_name="cifar10_c_deterministic-gaussian_noise-5",
                shift_type="synthetic",
                shift_property=SyntheticShiftProperty(
                    shift_degree=5,
                    shift_name="gaussian_noise",
                    version="deterministic",
                    has_shift=True,
                ),
                domain_sampling_name="uniform",
                domain_sampling_value=None,
                domain_sampling_ratio=1.0,
            )
        ],
        test_case=TestCase(
            inter_domain=HomogeneousNoMixture(has_mixture=False),
            batch_size=64,
            data_wise="batch_wise",
            offline_pre_adapt=False,
            episodic=False,
            intra_domain_shuffle=True,
        ),
    ),

We provide an example script that can be used to adapt to distribution shifts on the TTAB datasets.

    python run_exp.py

Currently, before using the example script, you need to manually set up the args object in parameters.py. This script is configured to use the default base model, dataset, evaluation protocol, and reasonable hyperparameters.

We also provide a set of scripts that can be used to pre-train models on the in-distribution TTAB datasets. These pre-trained models were used to benchmark the baselines in our paper. Note that, for a fair comparison, the baseline models in our paper are trained with an additional self-supervised rotation prediction task. In practice, feel free to choose whatever pre-training method you prefer, but make sure it is compatible with the setup of the TTA methods you plan to evaluate.
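For readers unfamiliar with rotation prediction, the following sketch shows how the self-supervised task is typically constructed: each image is rotated by 0, 90, 180, and 270 degrees, and the model is trained to predict which rotation was applied. This is a generic illustration on nested lists, not the pre-training code used in the paper; `rotate90` and `rotation_task` are hypothetical names.

```python
def rotate90(image):
    """Rotate a 2D image (list of rows) by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def rotation_task(image):
    """Return four (rotated_image, label) pairs; label k means k * 90 degrees."""
    examples = []
    current = [list(row) for row in image]
    for label in range(4):
        examples.append((current, label))
        current = rotate90(current)
    return examples


image = [[1, 2],
         [3, 4]]
examples = rotation_task(image)
for rotated, label in examples:
    print(label, rotated)
```

The labels come for free from the transformation itself, so the task needs no human annotation and can be trained alongside the main classification objective.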

If you find this repository helpful for your project, please consider citing:

    @inproceedings{zhao2023on,
      title = {On Pitfalls of Test-time Adaptation},
      author = {Zhao, Hao and Liu, Yuejiang and Alahi, Alexandre and Lin, Tao},
      booktitle = {International Conference on Machine Learning (ICML)},
      year = {2023},
    }