Introduction

Bolt is a lightweight library for deep learning. As a universal deployment tool for all kinds of neural networks, Bolt aims to minimize inference runtime as much as possible. It has been widely deployed across many departments of HUAWEI, such as the 2012 Laboratory, CBG, and HUAWEI product lines. If you have questions or suggestions, please submit an issue. QQ group: 833345709

Why is Bolt what you need?

- High Performance: 15%+ faster than existing open-source acceleration libraries.

- Rich Model Conversion: supports Caffe, ONNX, TFLite, and TensorFlow.

- Various Inference Precision: supports FP32, FP16, INT8, and 1-BIT.

- Multiple Platforms: ARM CPU (v7, v8, v8.2), Mali GPU, Qualcomm GPU, x86 CPU (AVX2, AVX512).

- NLP and CV: Bolt was the first to support NLP, and it also supports common CV applications.

- Minimized ROM/RAM Usage

- Rich Graph Optimization

- Efficient Thread Affinity Setting

- Auto Algorithm Tuning

- Time-Series Data Acceleration

See more excellent features and details here
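To make the "Various Inference Precision" bullet more concrete, here is a minimal, generic Python sketch of two textbook low-precision techniques: symmetric per-tensor INT8 quantization with integer accumulation, and the XNOR + popcount trick commonly used by binary (1-bit) neural networks such as BiRealNet and ReActNet. This is an illustration under our own assumptions, not Bolt's API or kernel code; every function name below is hypothetical.

```python
"""Generic sketch of INT8 and 1-bit arithmetic (hypothetical helpers, not Bolt code)."""


def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization: q = round(x / scale), scale = max|x| / 127."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def int8_dot(a, b):
    """Approximate dot product: multiply INT8 codes, accumulate as integers, then rescale."""
    qa, sa = quantize_int8(a)
    qb, sb = quantize_int8(b)
    acc = sum(x * y for x, y in zip(qa, qb))  # integer accumulator (INT32 on real hardware)
    return acc * sa * sb


def pack_signs(values):
    """Pack one sign bit per value: bit i is 1 if values[i] >= 0 (+1), else 0 (-1)."""
    bits = 0
    for i, v in enumerate(values):
        if v >= 0:
            bits |= 1 << i
    return bits


def binary_dot(a, b):
    """Dot product of sign(a) and sign(b) using XNOR + popcount.

    With n elements, matches = popcount(XNOR(a, b)), so
    dot = matches - (n - matches) = 2 * matches - n.
    """
    n = len(a)
    mask = (1 << n) - 1  # keep only the n meaningful bits after bitwise NOT
    xnor = ~(pack_signs(a) ^ pack_signs(b)) & mask
    matches = bin(xnor).count("1")
    return 2 * matches - n
```

For example, `int8_dot([0.5, -1.2, 3.0, -0.1], [1.0, 2.0, -0.5, -4.0])` lands close to the exact FP32 result of -3.0, while `binary_dot` on the same inputs only sees signs. The 1-bit version replaces n multiplications with a single XOR, a NOT, and a bit count, which is why binarized layers can be so cheap on CPUs.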

Building Status

Several build options are provided for compiling Bolt. Choose the one that suits your environment.

NOTE: By default, Bolt links static libraries, which may cause problems on some platforms. You can use the `--shared` option to link shared libraries instead.

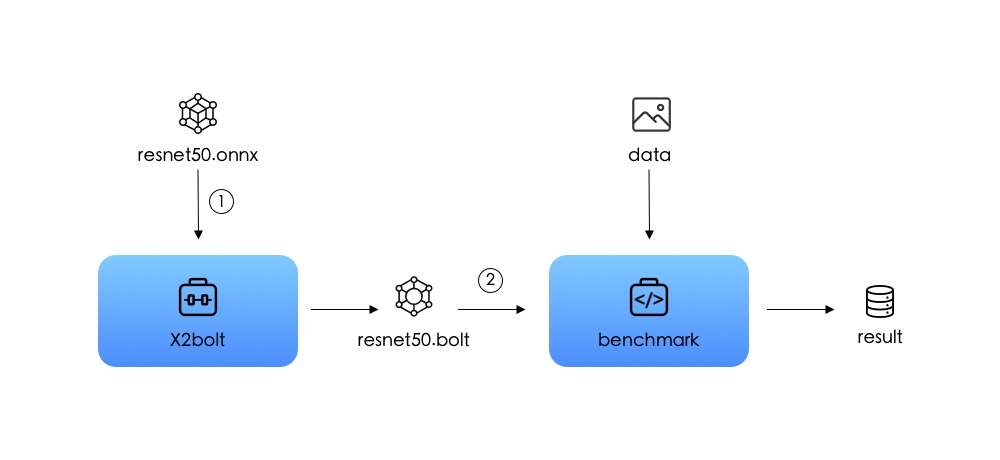

Quick Start

Get started with Bolt in two steps:

- Conversion: use X2bolt to convert your Caffe, ONNX, TFLite, or TensorFlow model to the .bolt format.

- Inference: run benchmark with the .bolt model and your input data to get the inference result.

For more details about the usage of X2bolt and benchmark tools, see docs/USER_HANDBOOK.md.
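The two Quick Start steps can be scripted. The Python sketch below only assembles the two command lines and, when `dry_run=False`, runs them in order with `subprocess.run`. The helper names are hypothetical, and the flag names (`-d`, `-m`, `-i`) and the `FP16` precision value are assumptions based on our reading of docs/USER_HANDBOOK.md; check each tool's usage message before relying on them.

```python
"""Hypothetical driver for the Quick Start workflow: convert with X2bolt, then run benchmark.

ASSUMPTION: the flags below (-d, -m, -i) follow our reading of docs/USER_HANDBOOK.md;
verify them against the usage output of your X2bolt and benchmark builds.
"""
import shlex
import subprocess


def x2bolt_command(model_dir, model_name, precision="FP16"):
    # Assumed flags: -d model directory, -m model name without suffix, -i inference precision.
    return ["./X2bolt", "-d", str(model_dir), "-m", model_name, "-i", precision]


def benchmark_command(bolt_model, input_dir=None):
    # Assumed flags: -m path to the converted .bolt model, -i optional input data path.
    cmd = ["./benchmark", "-m", str(bolt_model)]
    if input_dir is not None:
        cmd += ["-i", str(input_dir)]
    return cmd


def run_quick_start(model_dir, model_name, bolt_model, input_dir=None, dry_run=True):
    """Print both commands; execute them in order only when dry_run is False."""
    steps = [x2bolt_command(model_dir, model_name), benchmark_command(bolt_model, input_dir)]
    for cmd in steps:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop on the first failing step
    return steps
```

Keep `dry_run=True` until the printed commands match the handbook. Pass `bolt_model` explicitly as whatever file X2bolt actually wrote, rather than guessing its name from the precision.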

DL Applications in Bolt

Here we show some interesting and useful applications in Bolt.

| Face Detection | ASR | Semantics Analysis | Image Classification |
|---|---|---|---|
| demo_link: face detection | demo_link: asr | demo_link: semantics analysis | demo_link: image classification |

Verified Networks

Bolt has demonstrated high inference performance on common CV and NLP neural networks. Some of the representative networks that we have verified are listed below. You can find detailed benchmark information in docs/BENCHMARK.md.

| Application | Models |
|---|---|
| CV | Resnet50, Shufflenet, Squeezenet, Densenet, Efficientnet, Mobilenet_v1, Mobilenet_v2, Mobilenet_v3, BiRealNet, ReActNet, Ghostnet, SSD, Yolov3, Pointnet, ViT, TNT ... |
| NLP | Bert, Albert, Neural Machine Translation, Text To Speech, Automatic Speech Recognition, Tdnn ... |
| Recommendation | MLP |
| More DL Tasks | ... |

Many more models than those listed above are supported; users are encouraged to explore further.

Documentation

Everything you want to know about Bolt is recorded in the detailed documentation stored in docs:

- How to install Bolt with different compilers.

- How to use Bolt to run inference on your ML models.

- How to extend Bolt to support more models.

- Operators documentation.

- Benchmark results on some common models.

- How to build demos/examples with kit.

- Frequently Asked Questions (FAQ).

Articles

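- Deep Learning Acceleration Library Bolt Leads On-Device AI (深度学习加速库Bolt领跑端侧AI)

- Why Is Bolt So Fast: The Implementation of Matrix-Vector Multiplication (为什么 Bolt 这么快:矩阵向量乘的实现)

- Exploiting Hardware Features to Accelerate TinyBert: First 6 ms Bert Inference on a Mobile Phone (深入硬件特性加速TinyBert,首次实现手机上Bert 6ms推理)

- Bolt GPU Performance Optimization: Letting God Help Roll the Dice (Bolt GPU性能优化,让上帝帮忙掷骰子)

- Bolt Powers HMS Machine Translation, Another Win for Natural Language Processing (Bolt助力HMS机器翻译,自然语言处理又下一城)

- 1-bit Inference on ARM CPU: The Road to the Extreme (ARM CPU 1-bit推理,走向极致的道路)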

Tutorials

- Image Classification: Android Demo, iOS Demo

- Image Enhancement: Android Demo, iOS Demo

- Sentiment Classification: Android Demo

- Chinese Speech Recognition: Android Demo, iOS Demo

- Face Detection: Android Demo, iOS Demo

Acknowledgement

Bolt draws on the following projects: Caffe, ONNX, TensorFlow, ncnn, MNN, dabnn.

License

The MIT License (MIT)