Code for paper: Hierarchical Graph Pattern Understanding for Zero-Shot Video Object Segmentation

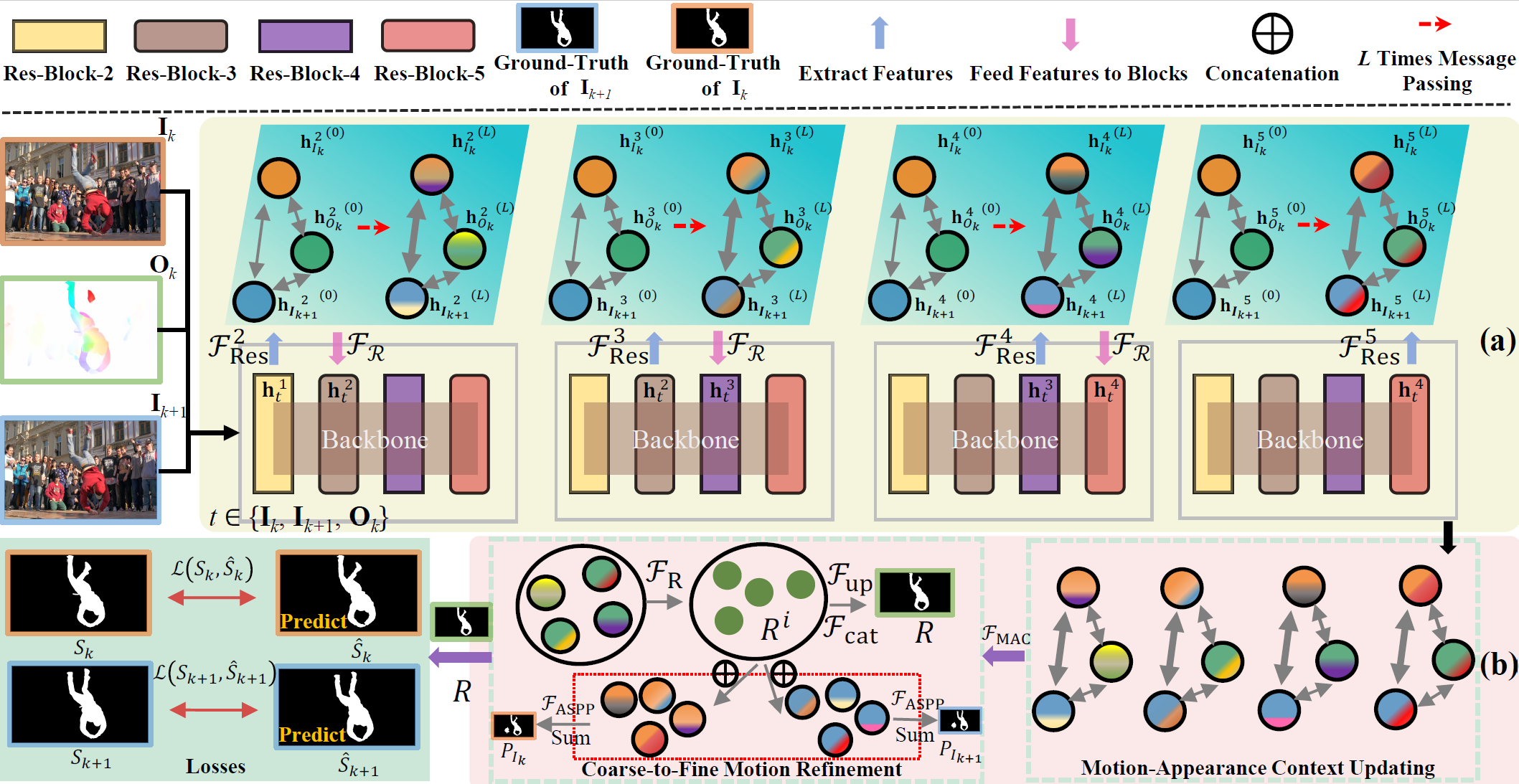

Figure 1. Architecture of HGPU. Frames (I_k, I_{k+1}) and their corresponding optical flow (O_k) are used as inputs to generate high-order feature representations with multiple modalities by (a) HGPE. Motion-appearance features are parsed through (b) MAUD to output coarse-to-fine segmentation results.

- Python (3.6.12)

- PyTorch (version: 1.7.0)

- Apex (https://github.com/NVIDIA/apex, version: 0.1)

- Requirements in the requirements.txt file.
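As a quick sanity check before training, the versions listed above can be compared against the active environment. The snippet below is a minimal sketch and not part of this repository: the `EXPECTED` table simply copies the versions quoted above, and the helper names are hypothetical.

```python
import importlib
import sys

# Versions quoted in the list above, treated here as the tested configuration.
EXPECTED = {"python": "3.6.12", "torch": "1.7.0", "apex": "0.1"}


def installed_version(module_name):
    """Return the module's __version__, or None if it cannot be imported."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return None
    # Some packages do not expose __version__; report that explicitly.
    return getattr(module, "__version__", "unknown")


def environment_report():
    """Map each dependency name to a (found, expected) pair."""
    found = {
        "python": ".".join(str(part) for part in sys.version_info[:3]),
        "torch": installed_version("torch"),
        "apex": installed_version("apex"),
    }
    return {name: (found[name], EXPECTED[name]) for name in EXPECTED}


if __name__ == "__main__":
    for name, (found, expected) in environment_report().items():
        matches = found is not None and found.startswith(expected)
        status = "ok" if matches else "check"
        print(f"{name:8s} found={found} expected={expected} [{status}]")
```

A "check" status only means the environment differs from the one listed here; other versions may still work.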

- Download the DAVIS-2017 dataset from DAVIS.

- Download the YouTube-VOS dataset from YouTube-VOS; downloading the validation set is optional.

- Download the YouTube2018-hed and davis-hed datasets.

- The optical flow files are obtained with RAFT; we provide demo code that can be run directly from the flow directory. We also provide the optical flow of YouTube-VOS (18G) on GoogleDrive; the optical flow of DAVIS can also be found on GoogleDrive.

Please ensure the datasets are organized in the following format.

    YouTube-VOS
    |----train
          |----Annotations
          |----JPEGImages
          |----YouTube-flow
          |----YouTube-hed
          |----meta.json

    DAVIS
          |----Annotations
          |----ImageSets
          |----JPEGImages
          |----davis-flow
          |----davis-hed
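The layout above can be verified before training starts. The following is a minimal sketch, not a script shipped with this repository: it assumes the five YouTube-VOS entries sit under train/, and the dataset roots in the usage section are hypothetical placeholders.

```python
from pathlib import Path

# Expected entries, taken from the layout listed above. Nesting the five
# YouTube-VOS entries under train/ is an assumption about that listing.
EXPECTED_LAYOUT = {
    "YouTube-VOS": [
        "train/Annotations",
        "train/JPEGImages",
        "train/YouTube-flow",
        "train/YouTube-hed",
        "train/meta.json",
    ],
    "DAVIS": [
        "Annotations",
        "ImageSets",
        "JPEGImages",
        "davis-flow",
        "davis-hed",
    ],
}


def missing_entries(dataset_root, dataset_name):
    """Return the expected relative paths that do not exist under dataset_root."""
    root = Path(dataset_root)
    return [rel for rel in EXPECTED_LAYOUT[dataset_name] if not (root / rel).exists()]


if __name__ == "__main__":
    # Hypothetical locations; replace them with your own dataset roots.
    for name, root in [("YouTube-VOS", "data/YouTube-VOS"), ("DAVIS", "data/DAVIS")]:
        missing = missing_entries(root, name)
        print(name, "OK" if not missing else f"missing: {missing}")
```

An empty list from `missing_entries` means every expected folder or file was found; it does not check the contents of those folders.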

We provide multi-GPU parallel code based on apex.

Run CUDA_VISIBLE_DEVICES="0,1,2,3" python -m torch.distributed.launch --nproc_per_node 4 train_HGPU.py for distributed training in PyTorch.

Please change your data path in two files (libs/utils/config_davis.py, line 52, and libs/utils/config_youtubevos.py, line 38).
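With the launch command above, torch.distributed.launch starts one process per GPU, appends a `--local_rank` argument to each process's command line, and exports `RANK` and `WORLD_SIZE` as environment variables. The sketch below illustrates how a script can read those values; it is not taken from train_HGPU.py, and the function name `parse_launch_context` is hypothetical.

```python
import argparse
import os


def parse_launch_context(argv=None, env=None):
    """Collect the per-process settings provided by torch.distributed.launch."""
    env = os.environ if env is None else env
    parser = argparse.ArgumentParser()
    # The launcher appends --local_rank=<i> to each worker's command line.
    parser.add_argument("--local_rank", type=int, default=0)
    # Ignore any other arguments the training script may define itself.
    args, _unknown = parser.parse_known_args(argv)
    return {
        "local_rank": args.local_rank,
        # RANK and WORLD_SIZE are exported by the launcher; the defaults
        # describe a plain single-process run.
        "rank": int(env.get("RANK", 0)),
        "world_size": int(env.get("WORLD_SIZE", 1)),
    }


if __name__ == "__main__":
    ctx = parse_launch_context()
    # A training script would typically continue with calls such as
    # torch.cuda.set_device(ctx["local_rank"]) and
    # torch.distributed.init_process_group(backend="nccl", init_method="env://").
    print(ctx)
```

With `--nproc_per_node 4` on a single machine, the four workers receive local ranks 0 to 3 and a world size of 4.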

If you want to test the model results directly, you can follow the settings below.

- Pre-trained models and pre-calculated results.

| Dataset | J Mean | Pre-trained Model | Pre-calculated Mask |
|---|---|---|---|
| DAVIS-16 | 86.0 (full-training); 78.6 (pre-training); 80.3 (main-training) | [full-training]; [pre-training]; [main-training] | [DAVIS-16] |
| YouTube-Objects | 73.9 (average over 10 categories) | without fine-tuning | [YouTube-Objects] |
| Long-Videos | 74.0 | without fine-tuning | [Long-Videos] |
| DAVIS-17 | 67.0 | [DAVIS-17] | [DAVIS-17] |

- Change your path in test_HGPU.py, then run python test_HGPU.py.

- Run python apply_densecrf_davis.py (change your path in line 16) to get binary masks.
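The J Mean column in the table above is the region similarity, that is, the intersection over union between a predicted binary mask and its ground-truth mask, averaged over frames. The following is a minimal pure-Python sketch of that measure for a single sequence, not the official evaluation code: masks are assumed to be rows of 0/1 values, and two empty masks are assumed to score 1. The benchmark toolkits additionally average across sequences, and the table reports the result as a percentage.

```python
def jaccard(pred_mask, gt_mask):
    """Intersection over union of two binary masks given as rows of 0/1 values."""
    intersection = 0
    union = 0
    for pred_row, gt_row in zip(pred_mask, gt_mask):
        for p, g in zip(pred_row, gt_row):
            intersection += 1 if (p and g) else 0
            union += 1 if (p or g) else 0
    # Convention assumed here: two empty masks count as a perfect match.
    return 1.0 if union == 0 else intersection / union


def j_mean(pred_masks, gt_masks):
    """Average the per-frame Jaccard index over the frames of one sequence."""
    scores = [jaccard(p, g) for p, g in zip(pred_masks, gt_masks)]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    pred = [[1, 1, 0],
            [0, 1, 0]]
    gt = [[1, 0, 0],
          [0, 1, 1]]
    # Two pixels overlap and four pixels are covered by either mask.
    print(jaccard(pred, gt))
```

For real evaluation, use the toolkits referenced in this README, which handle per-sequence averaging and file loading.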

The code directory structure is as follows.

    HGPU
    |----flow
    |----libs
    |----model
    |----HGPU
          |----encoder_0.8394088008745166.pt
          |----decoder_0.8394088008745166.pt
          |....
    |----apply_densecrf_davis.py
    |----train_HGPU.py
    |----test_HGPU.py
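The encoder and decoder weights in the listing above share the same numeric suffix. As a hedged illustration, the sketch below pairs such files by that suffix so that an incomplete download is easy to spot. It is not part of the repository: the helper name `paired_checkpoints` is hypothetical, and the suffix is treated as an opaque tag rather than interpreted as a score.

```python
import re
from pathlib import Path

# Matches names such as encoder_0.8394088008745166.pt; the tag after the
# underscore is treated as an opaque string shared by both files.
CHECKPOINT_PATTERN = re.compile(r"^(encoder|decoder)_(.+)\.pt$")


def paired_checkpoints(model_dir):
    """Return {tag: {"encoder": Path, "decoder": Path}} for complete pairs only."""
    found = {}
    for path in sorted(Path(model_dir).glob("*.pt")):
        match = CHECKPOINT_PATTERN.match(path.name)
        if match:
            role, tag = match.groups()
            found.setdefault(tag, {})[role] = path
    # Keep only tags for which both the encoder and the decoder are present.
    return {tag: files for tag, files in found.items() if len(files) == 2}
```

For example, `paired_checkpoints("HGPU")` would be called on whichever folder holds the downloaded .pt files; adjust the path to your own checkout.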

- Evaluation code is from DAVIS_Evaluation; a Python version is available at PyDavis16EvalToolbox.

- The YouTube-Objects dataset can be downloaded from here and the annotations can be found here.

- The Long-Videos dataset can be downloaded from here.

- PWCNet is used to compute optical flow for HGPU training; the pre-trained model and inference results are available here.

- Zero-shot Video Object Segmentation via Attentive Graph Neural Networks, ICCV 2019 (https://github.com/carrierlxk/AGNN)

- Motion-Attentive Transition for Zero-Shot Video Object Segmentation, AAAI 2020 (https://github.com/tfzhou/MATNet)

- Video Object Segmentation Using Space-Time Memory Networks, ICCV 2019 (https://github.com/seoungwugoh/STM)

- Video Object Segmentation with Adaptive Feature Bank and Uncertain-Region Refinement, NeurIPS 2020 (https://github.com/xmlyqing00/AFB-URR)