This repository is an official implementation of ADAPT: Action-aware Driving Caption Transformer, accepted by ICRA 2023.

Created by Bu Jin, Xinyu Liu, Yupeng Zheng, Pengfei Li, Hao Zhao, Tong Zhang, Yuhang Zheng, Guyue Zhou and Jingjing Liu from the Institute for AI Industry Research (AIR), Tsinghua University.

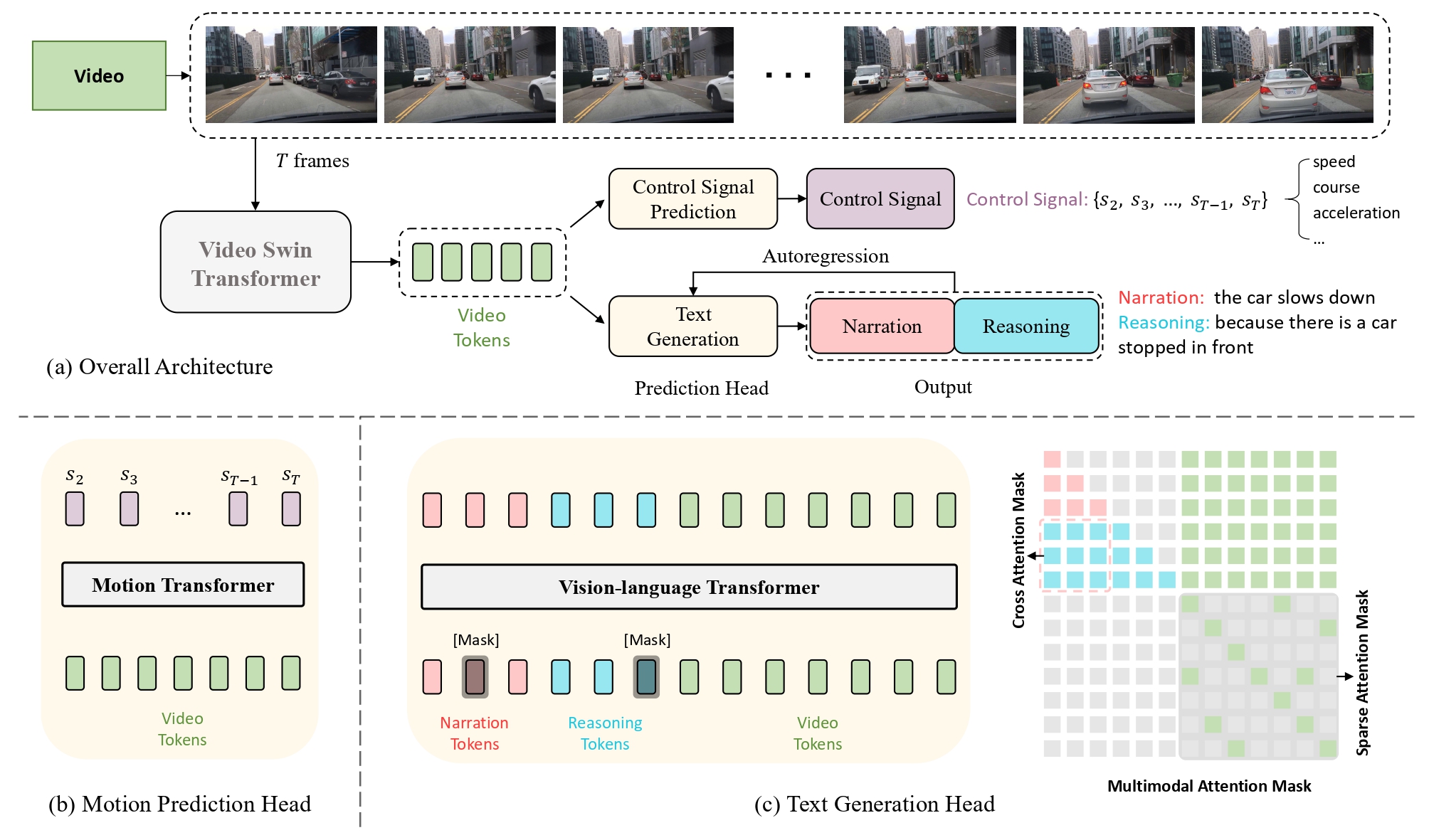

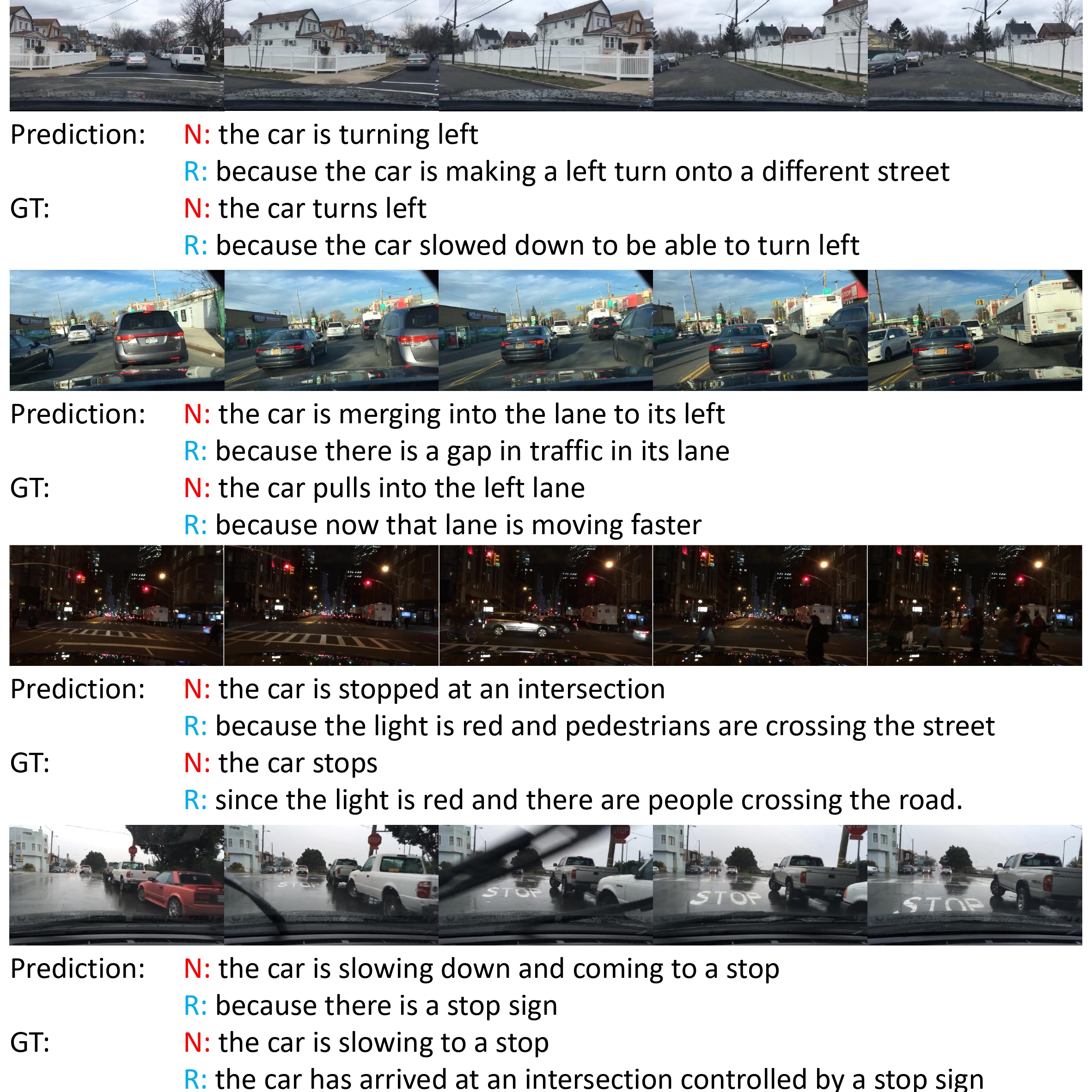

We propose an end-to-end transformer-based architecture, ADAPT (Action-aware Driving cAPtion Transformer), which provides user-friendly natural language narrations and reasoning for autonomous vehicular control and action. ADAPT jointly trains both the driving caption task and the vehicular control prediction task, through a shared video representation.

This repository contains the training and testing code for the framework proposed in the paper, as well as the demo in a simulator environment and in the real world.

This repository will be updated soon, including:

- Uploading the Preprocessed Data of BDDX.

- Uploading the Raw Data of BDDX, along with an easier processing script.

- Uploading the Visualization Codes of raw data and results.

- Updating the Experiment Codes to make them easier to get started with.

- Uploading the Conda Environments of ADAPT.


Create conda environment:

conda create --name ADAPT python=3.8

Install torch:

pip install torch==1.13.1+cu116 torchaudio==0.13.1+cu116 torchvision==0.14.1+cu116 -f https://download.pytorch.org/whl/torch_stable.html

Install apex:

git clone https://github.com/NVIDIA/apex

cd apex

pip install -v --no-cache-dir ./

cd ..

rm -rf apex

Then install the other dependencies:

pip install -r requirements.txt
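After the steps above, a quick import check can confirm that the packages landed in the active environment. The snippet below is only a sketch and is not part of this repository: `has_module` and the `PYTHON` variable are hypothetical names chosen for illustration, and the module list covers only the packages named in the commands above.

```shell
# Interpreter to test; override with e.g. PYTHON=python3 if needed.
PYTHON="${PYTHON:-python}"

# Succeeds when the given module can be imported by $PYTHON.
has_module() {
    "$PYTHON" -c "import $1" >/dev/null 2>&1
}

# Report the status of each package installed in the steps above.
for mod in torch torchvision torchaudio apex; do
    if has_module "$mod"; then
        echo "ok:      $mod"
    else
        echo "missing: $mod"
    fi
done
```

A `missing` line usually means the install ran in a different environment than the one currently activated.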

We provide a Docker image to make it easy to get started. Before you run launch_container.sh, please check that the directory names set in launch_container.sh are correct and that you run it from the right directory.

sh launch_container.sh

Our latest Docker image jxbbb/adapt:latest is adapted from linjieli222/videocap_torch1.7:fairscale, which supports the following mixed-precision training options:

- Torch.amp

- Nvidia Apex O2

- deepspeed

- fairscale

- We release our best performing checkpoints. You can download these models at [ Google Drive ] and place them under the checkpoints directory. If the directory does not exist, you can create one.

- We release the base video-swin models we used during training in [ Google Drive ]. If you want to use other pretrained video-swin models, you can refer to Video-Swin-Transformer.

We provide a Docker image for easier reproduction. Please install the following:

- nvidia driver (418+),

- Docker (19.03+),

- nvidia-container-toolkit.

We only support Linux with NVIDIA GPUs. We test on Ubuntu 18.04 and V100 cards. We use mixed-precision training, so GPUs with Tensor Cores are recommended. Our scripts require the user to be a member of the docker group so that docker commands can be run without sudo.
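The requirements above can be partly checked from a shell before launching the container. This is a minimal sketch, not a script shipped with the repository: `have_cmd` and `in_group` are hypothetical helper names, and the check covers only whether the commands are on the PATH and whether the current user is in the docker group. It does not verify the driver or Docker versions, or the presence of nvidia-container-toolkit.

```shell
# Succeeds when the named command is available on the PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# Succeeds when group $1 appears in the space-separated list $2
# (defaults to the groups of the current user).
in_group() {
    groups_list="${2:-$(id -nG)}"
    case " $groups_list " in
        *" $1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

for cmd in docker nvidia-smi; do
    if have_cmd "$cmd"; then
        echo "found:   $cmd"
    else
        echo "missing: $cmd"
    fi
done

if in_group docker; then
    echo "current user is in the docker group"
else
    echo "current user is NOT in the docker group (docker would need sudo)"
fi
```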

Then download the code and files for caption evaluation from Google Drive and put them under src/evalcap.

You can either download the preprocessed data from this site, or download the raw videos and car information from this site and preprocess them with the code in src/prepro.

The resulting data structure should follow the hierarchy as below.

${REPO_DIR}

|-- checkpoints

|-- datasets

| |-- BDDX

| | |-- frame_tsv

| | |-- captions_BDDX.json

| | |-- training_32frames_caption_coco_format.json

| | |-- training_32frames.yaml

| | |-- training.caption.lineidx

| | |-- training.caption.lineidx.8b

| | |-- training.caption.linelist.tsv

| | |-- training.caption.tsv

| | |-- training.img.lineidx

| | |-- training.img.lineidx.8b

| | |-- training.img.tsv

| | |-- training.label.lineidx

| | |-- training.label.lineidx.8b

| | |-- training.label.tsv

| | |-- training.linelist.lineidx

| | |-- training.linelist.lineidx.8b

| | |-- training.linelist.tsv

| | |-- validation...

| | |-- ...

| | |-- validation...

| | |-- testing...

| | |-- ...

| | |-- testing...

|-- datasets_part

|-- docs

|-- models

| |-- basemodel

| |-- captioning

| |-- video_swin_transformer

|-- scripts

|-- src

|-- README.md

|-- ...

|-- ...

We provide a demo to run end-to-end inference on the test video.
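Because the scripts in this README expect the layout shown above, it can be worth confirming that the main entries exist before running anything. The following is a sketch under stated assumptions: `missing_entries` is a hypothetical helper, the list covers only a subset of the entries in the tree (the training split and top-level directories), and `REPO_DIR` is assumed to default to the current directory when unset.

```shell
# Print every expected entry that is missing under the given repository root.
missing_entries() {
    root="$1"
    for rel in \
        checkpoints \
        datasets/BDDX/frame_tsv \
        datasets/BDDX/captions_BDDX.json \
        datasets/BDDX/training_32frames.yaml \
        datasets/BDDX/training.caption.tsv \
        datasets/BDDX/training.img.tsv \
        datasets/BDDX/training.label.tsv \
        datasets/BDDX/training.linelist.tsv \
        models/basemodel \
        models/captioning \
        models/video_swin_transformer \
        scripts \
        src
    do
        [ -e "$root/$rel" ] || echo "$rel"
    done
}

# REPO_DIR falls back to the current directory when it is not set.
missing="$(missing_entries "${REPO_DIR:-.}")"
if [ -z "$missing" ]; then
    echo "layout looks complete"
else
    echo "missing entries:"
    echo "$missing"
fi
```

The validation and testing files can be added to the list in the same way once their exact names are known.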

Our inference code takes a video as input and generates a caption for it.

sh scripts/inference.sh

The prediction should look like:

Prediction: The car is stopped because the traffic light turns red.

We provide example scripts to evaluate pre-trained checkpoints.

# Assume in the docker container

sh scripts/BDD_test.sh

We provide example scripts to train our model in different settings.

# Assume in the docker container

sh scripts/BDDX_multitask.sh

# Assume in the docker container

sh scripts/BDDX_only_caption.sh

# Assume in the docker container

sh scripts/BDDX_only_signal.sh

# Assume in the docker container

sh scripts/BDDX_multitask_des.sh

sh scripts/BDDX_multitask_exp.sh

Remember that these two commands require two additional testing datasets. The data structure should be:

${REPO_DIR}

|-- datasets

| |-- BDDX

| |-- BDDX_des

| |-- BDDX_exp

If you find our work useful in your research, please consider citing:

@article{jin2023adapt,

title={ADAPT: Action-aware Driving Caption Transformer},

author={Jin, Bu and Liu, Xinyu and Zheng, Yupeng and Li, Pengfei and Zhao, Hao and Zhang, Tong and Zheng, Yuhang and Zhou, Guyue and Liu, Jingjing},

journal={arXiv preprint arXiv:2302.00673},

year={2023}

}

Our code is built on top of open-source GitHub repositories. We thank all the authors who made their code public, which tremendously accelerated our project's progress. If you find these works helpful, please consider citing them as well.