Official repository for the paper "MAVIS: Mathematical Visual Instruction Tuning".

[📖 Paper] [🤗 MAVIS-Caption] [🤗 MAVIS-Instruct] [🏆 Leaderboard]

🌟 Our model is mainly evaluated on MathVerse, a comprehensive visual mathematical benchmark for MLLMs.

- [2024.08.05] The new official LLaVA model, LLaVA-OneVision, adopts MAVIS-Instruct as training data 🔥, achieving new SoTA on the MathVerse and MathVista benchmarks.

- [2024.07.30] We release a temporary version of MAVIS-Caption & MAVIS-Instruct on Google Drive

- [2024.07.11] 🚀 We release MAVIS to advance the visual mathematical capabilities of MLLMs 📐

- [2024.07.01] 🎉 MathVerse is accepted by ECCV 2024 🎉

- [2024.03.22] 🔥 We release the MathVerse benchmark (🌐 Webpage, 📑 Paper, and 🤗 Dataset)

- Coming soon: dataset and models

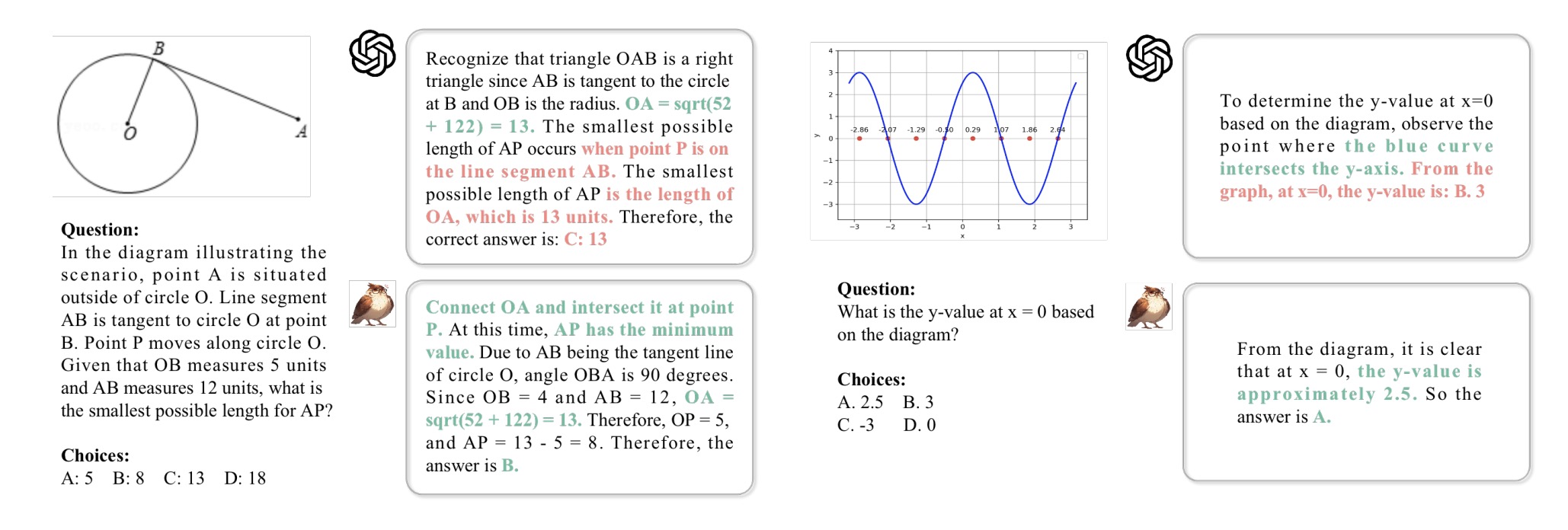

We identify three key areas within Multi-modal Large Language Models (MLLMs) for visual math problem-solving that need to be improved: visual encoding of math diagrams, diagram-language alignment, and mathematical reasoning skills.

In this paper, we propose MAVIS, the first MAthematical VISual instruction tuning paradigm for MLLMs, including two newly curated datasets, a mathematical vision encoder, and a mathematical MLLM:

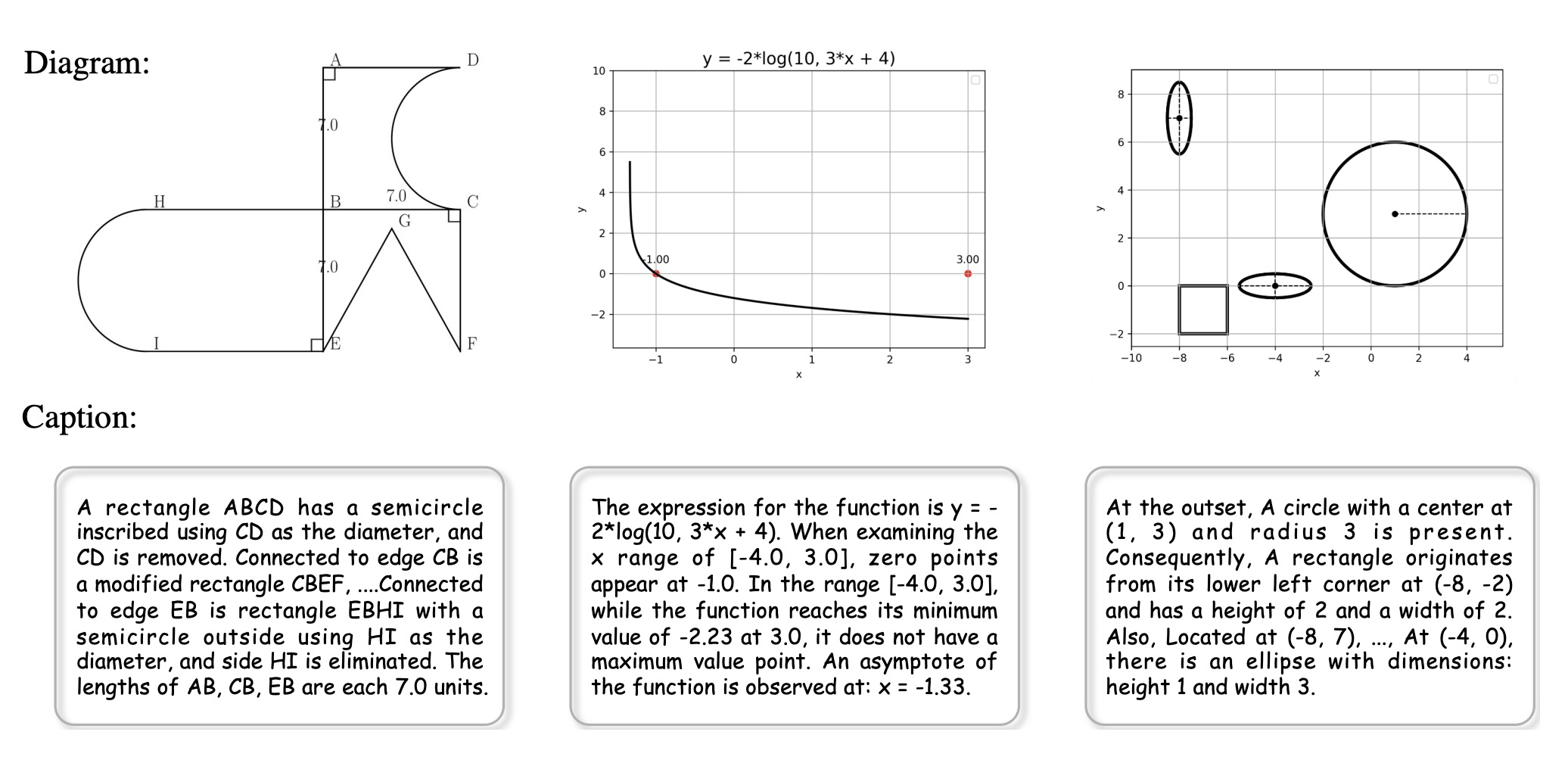

- MAVIS-Caption: 588K high-quality caption-diagram pairs, spanning geometry and function

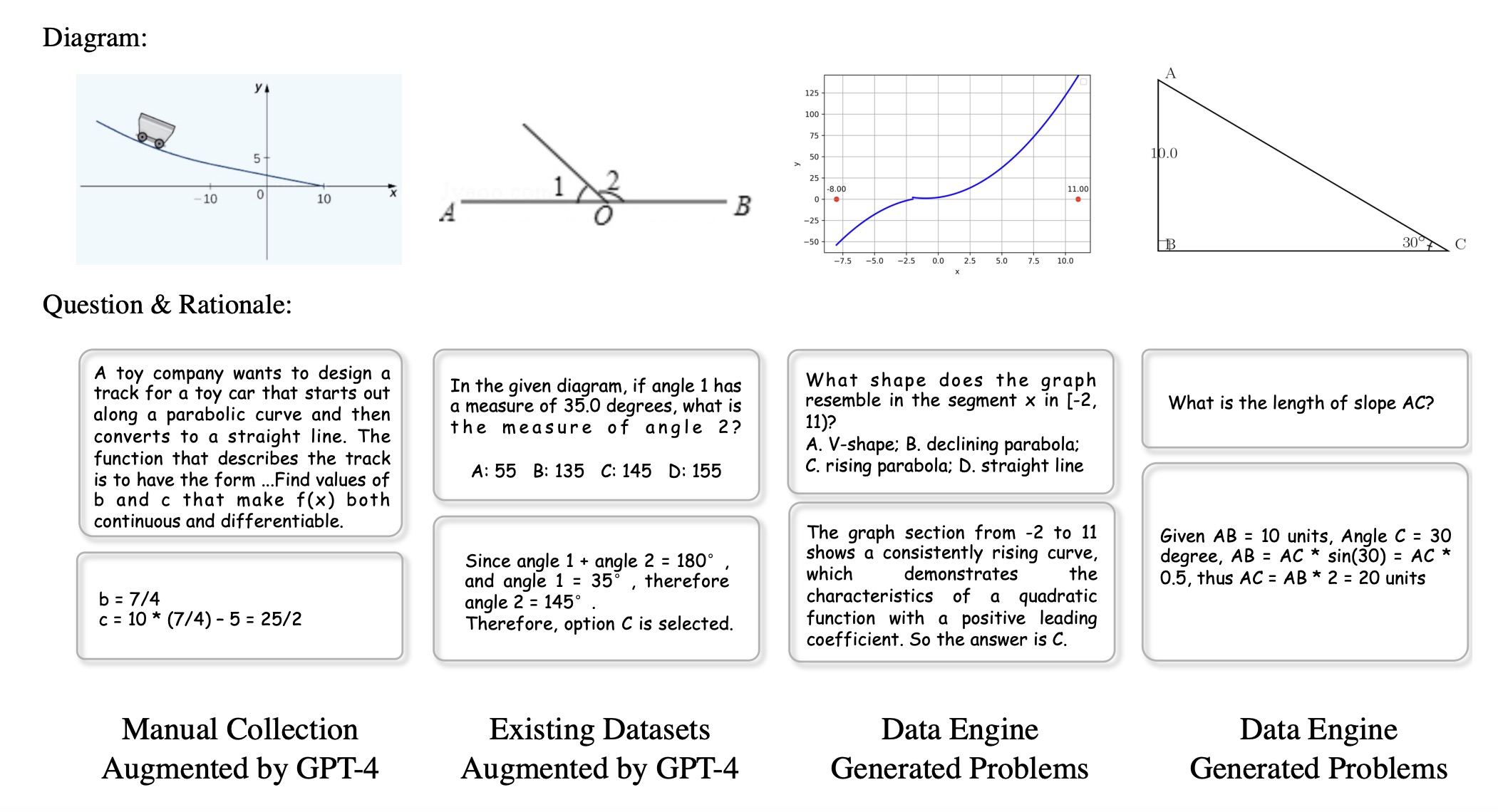

- MAVIS-Instruct: 834K instruction-tuning samples with CoT rationales, in a text-lite version

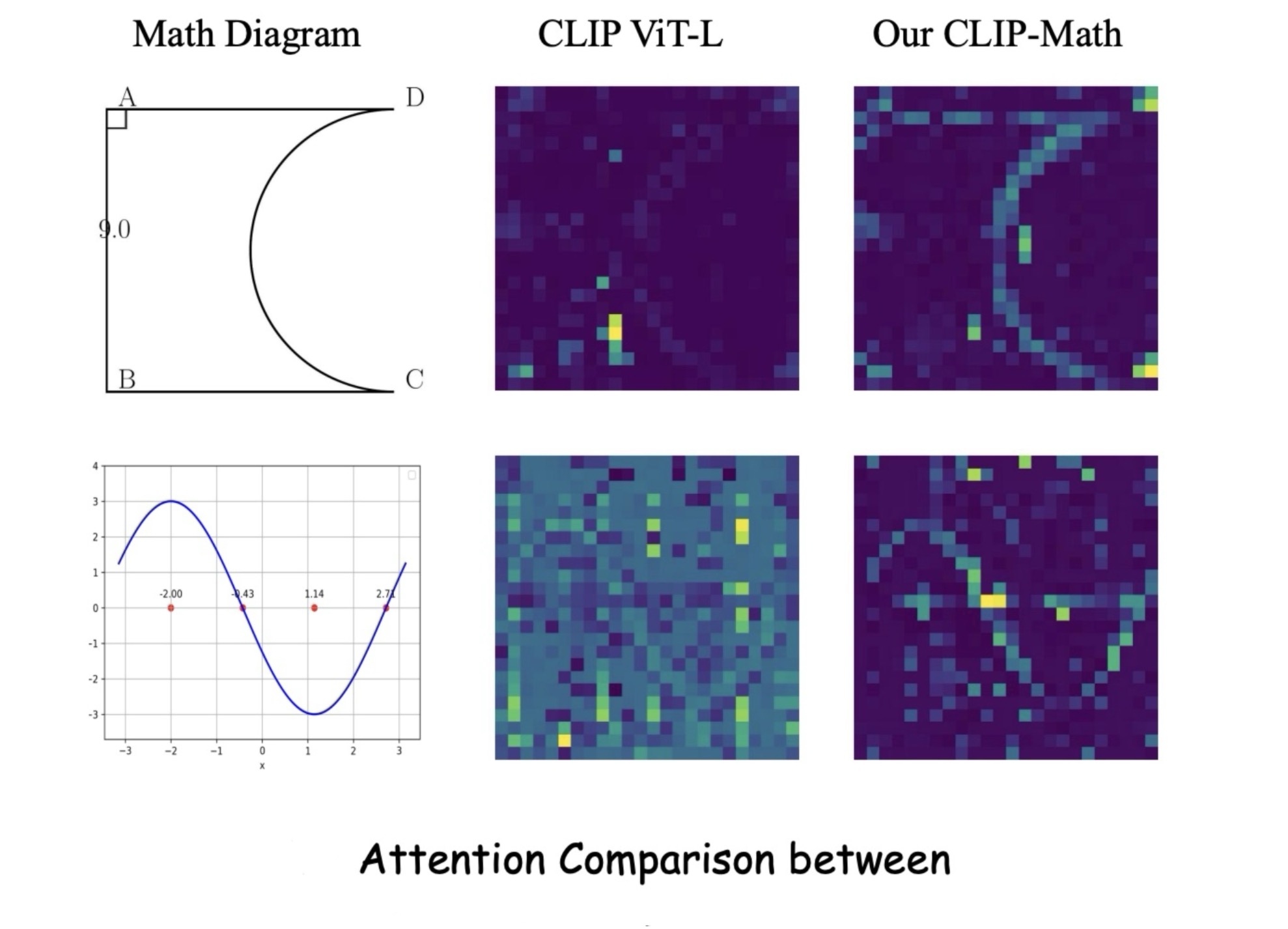

- Math-CLIP: a vision encoder specifically for understanding mathematical diagrams within MLLMs

- MAVIS-7B: an MLLM with a three-stage training paradigm, achieving leading performance on the MathVerse benchmark

Coming in a week!

A temporary version of the data is released on Google Drive.

We will soon release the final data with much higher quality.

If you find MAVIS useful for your research and applications, please kindly cite using this BibTeX:

@misc{zhang2024mavismathematicalvisualinstruction,
  title={MAVIS: Mathematical Visual Instruction Tuning},
  author={Renrui Zhang and Xinyu Wei and Dongzhi Jiang and Yichi Zhang and Ziyu Guo and Chengzhuo Tong and Jiaming Liu and Aojun Zhou and Bin Wei and Shanghang Zhang and Peng Gao and Hongsheng Li},
  year={2024},
  eprint={2407.08739},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2407.08739},
}

Explore our additional research on Vision-Language Large Models, focusing on multi-modal LLMs and mathematical reasoning:

- [MathVerse] MathVerse: Does Your Multi-modal LLM Truly See the Diagrams in Visual Math Problems?

- [LLaVA-NeXT-Interleave] Tackling Multi-image, Video, and 3D in Large Multimodal Models

- [MathVista] MathVista: Evaluating Mathematical Reasoning of Foundation Models in Visual Contexts

- [LLaMA-Adapter] LLaMA-Adapter: Efficient Fine-tuning of Language Models with Zero-init Attention

- [ImageBind-LLM] ImageBind-LLM: Multi-modality Instruction Tuning

- [SPHINX-X] Scaling Data and Parameters for a Family of Multi-modal Large Language Models

- [Point-Bind & Point-LLM] Multi-modality 3D Understanding, Generation, and Instruction Following

- [PerSAM] Personalize Segment Anything Model with One Shot

- [MathCoder] MathCoder: Seamless Code Integration in LLMs for Enhanced Mathematical Reasoning

- [MathVision] Measuring Multimodal Mathematical Reasoning with the MATH-Vision Dataset

- [CSV] Solving Challenging Math Word Problems Using GPT-4 Code Interpreter