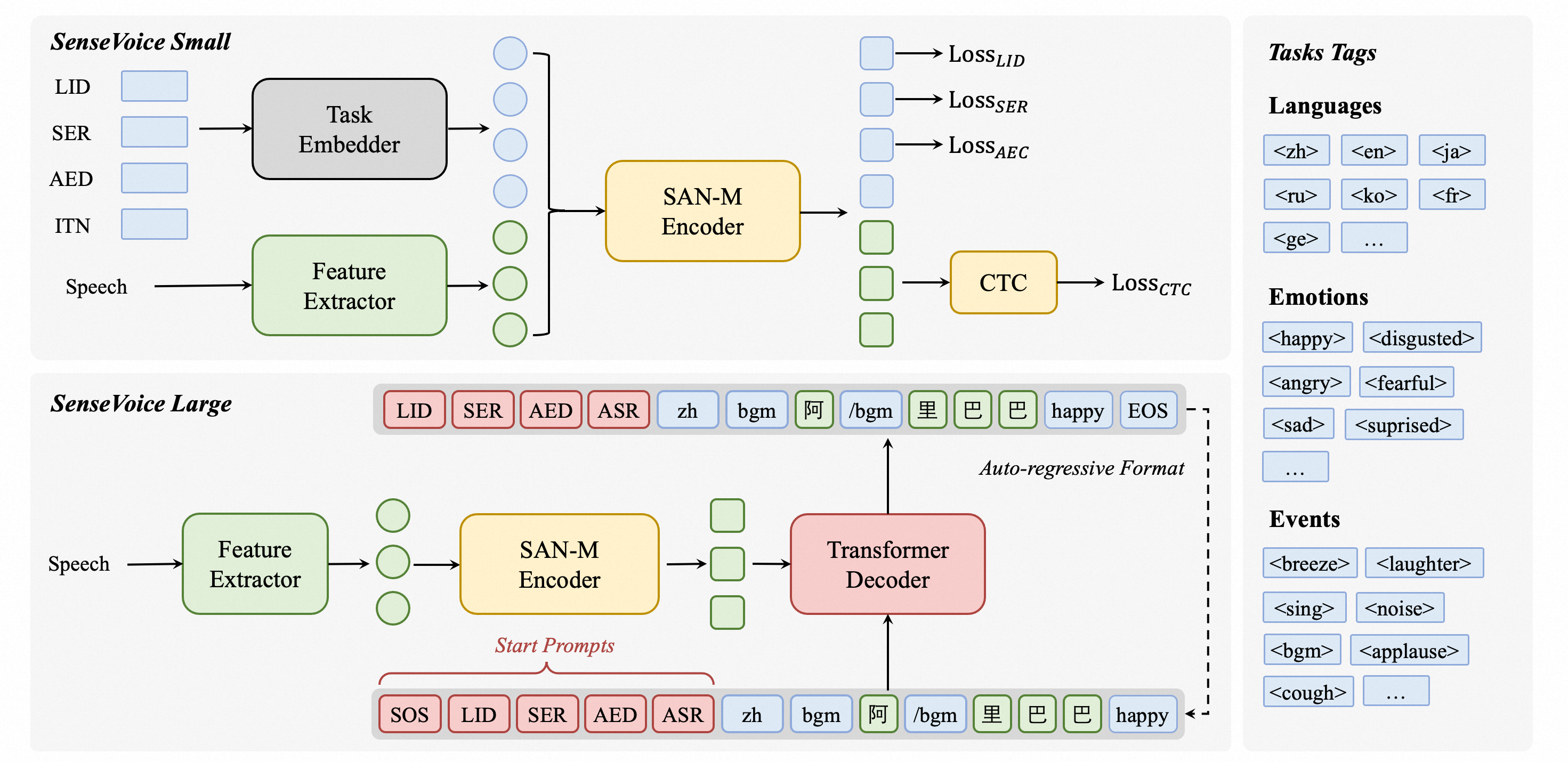

SenseVoice is a speech foundation model with multiple speech understanding capabilities, including automatic speech recognition (ASR), spoken language identification (LID), speech emotion recognition (SER), and audio event detection (AED).

Homepage | What's New | Benchmarks | Install | Usage | Community

Model Zoo: modelscope, huggingface

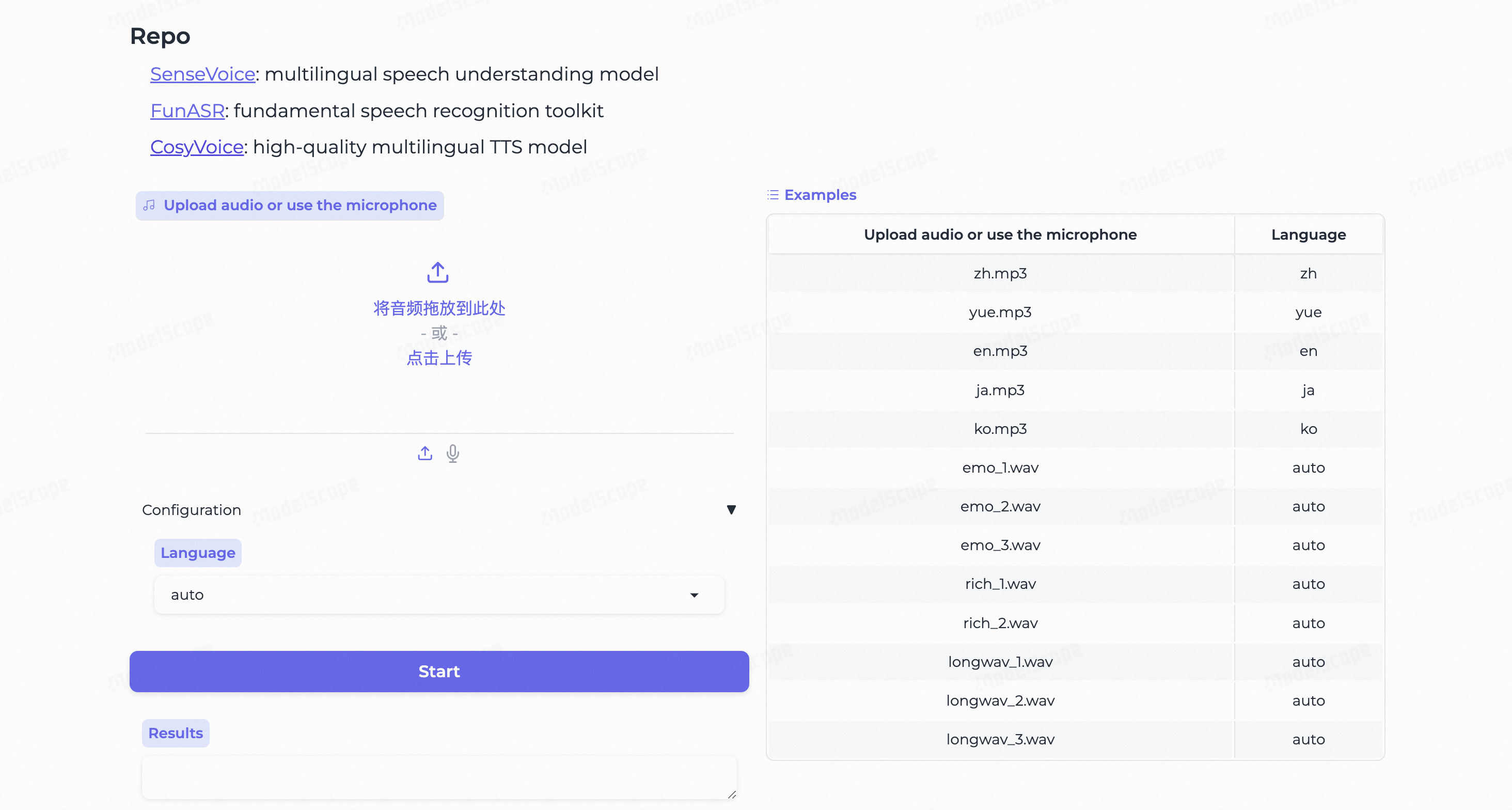

Online Demo: modelscope demo, huggingface space

SenseVoice focuses on high-accuracy multilingual speech recognition, speech emotion recognition, and audio event detection.

- Multilingual Speech Recognition: Trained on over 400,000 hours of data and supporting more than 50 languages, SenseVoice achieves recognition performance that surpasses the Whisper model.

- Rich Transcription:

  - Possesses excellent emotion recognition capabilities, matching or surpassing the current best emotion recognition models on test data.

  - Offers sound event detection capabilities, supporting the detection of common human-computer interaction events such as background music (BGM), applause, laughter, crying, coughing, and sneezing.

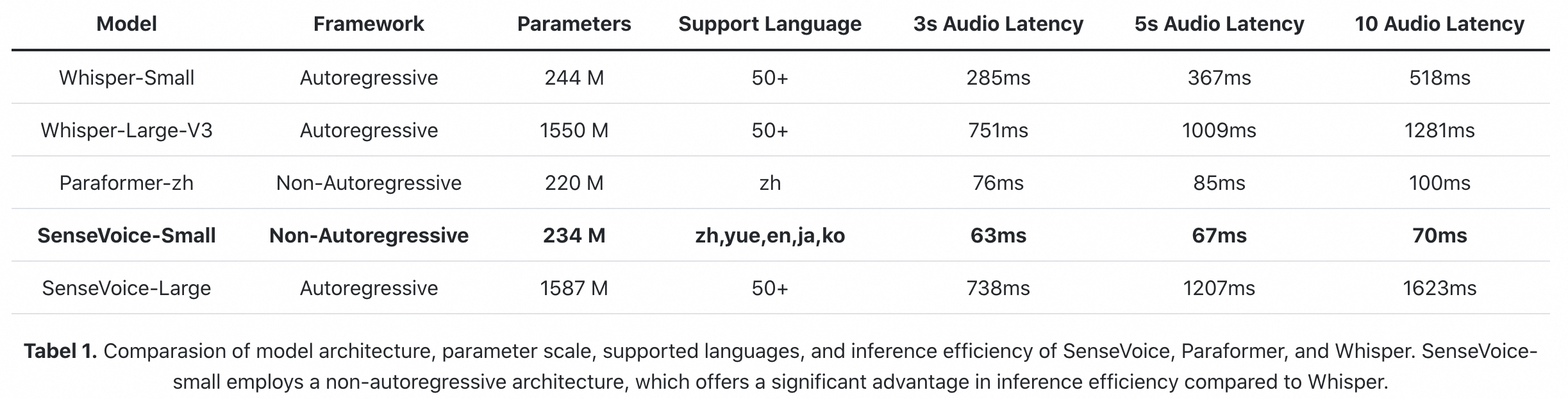

- Efficient Inference: The SenseVoice-Small model utilizes a non-autoregressive end-to-end framework, leading to exceptionally low inference latency. It requires only 70ms to process 10 seconds of audio, which is 15 times faster than Whisper-Large.

- Convenient Finetuning: Provides convenient finetuning scripts and strategies, allowing users to address long-tail sample issues according to their business scenarios.

- Service Deployment: Offers a service deployment pipeline that supports concurrent requests, with client-side languages including Python, C++, HTML, Java, and C#, among others.

- 2024/7: Added export features for ONNX and libtorch, as well as Python runtimes: funasr-onnx-0.4.0, funasr-torch-0.1.1.

- 2024/7: The SenseVoice-Small voice understanding model is open-sourced. It offers high-precision multilingual speech recognition, emotion recognition, and audio event detection for Mandarin, Cantonese, English, Japanese, and Korean, with exceptionally low inference latency.

- 2024/7: CosyVoice offers natural speech generation with multi-language, timbre, and emotion control. It excels in multilingual voice generation, zero-shot voice generation, cross-lingual voice cloning, and instruction following. See the CosyVoice repo and CosyVoice space.

- 2024/7: FunASR is a fundamental speech recognition toolkit that offers a variety of features, including speech recognition (ASR), Voice Activity Detection (VAD), Punctuation Restoration, Language Models, Speaker Verification, Speaker Diarization and multi-talker ASR.

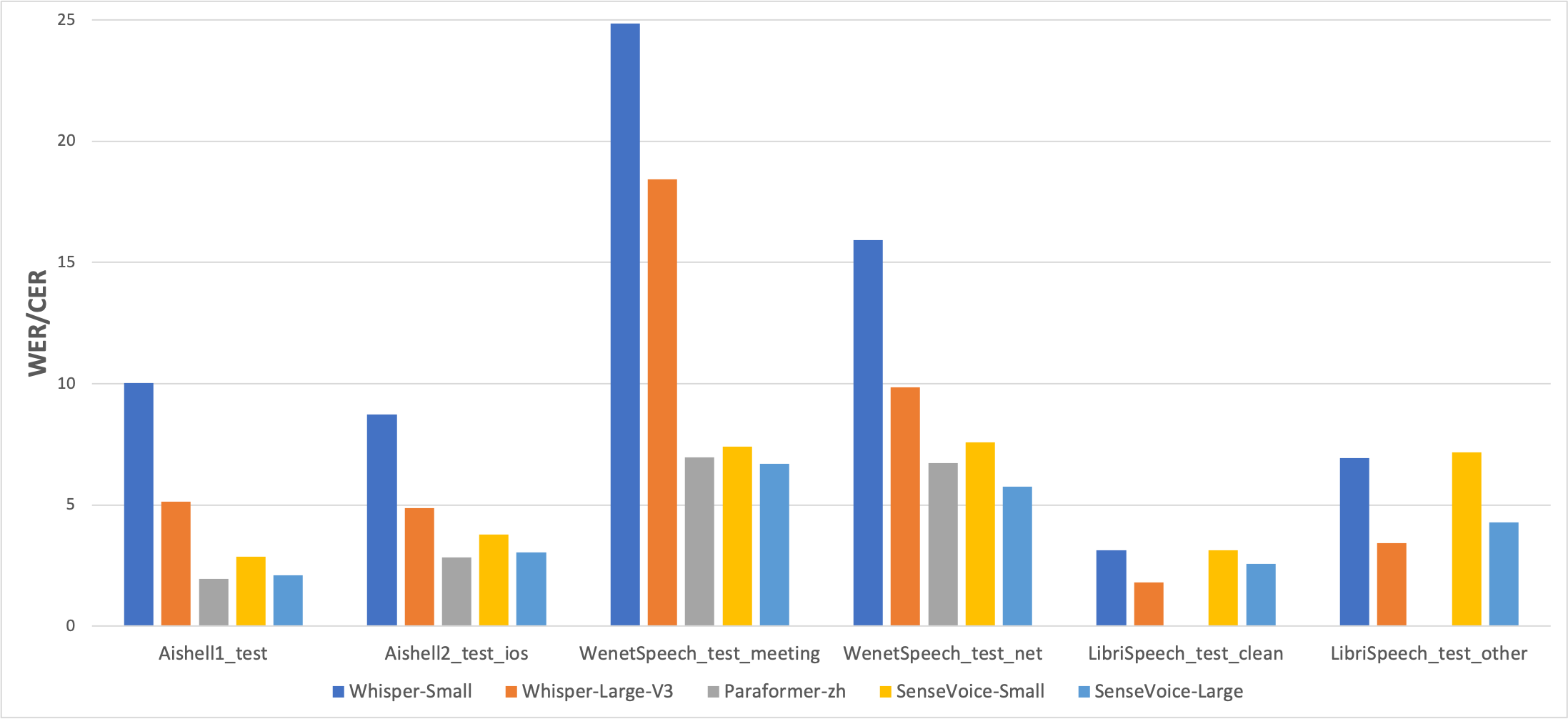

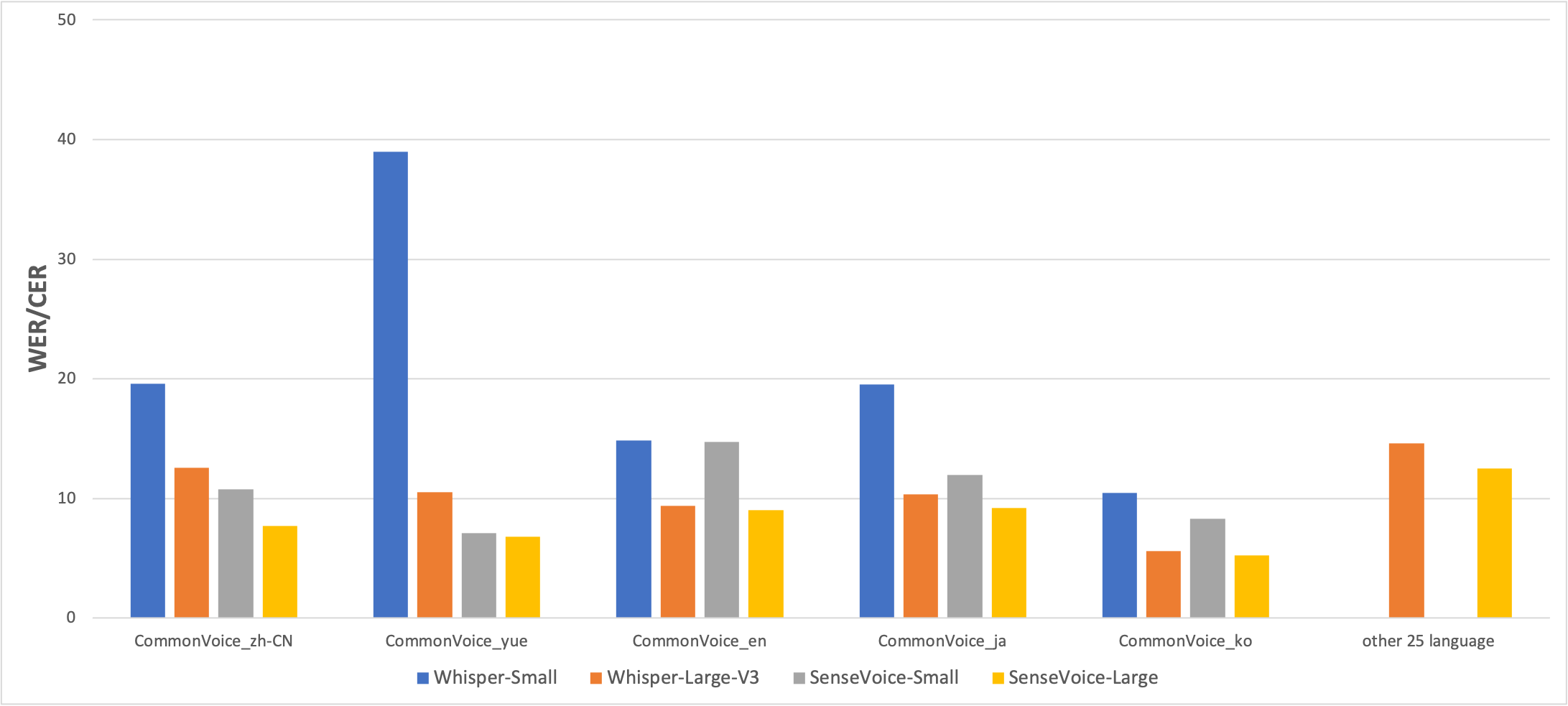

We compared the multilingual speech recognition performance of SenseVoice and Whisper on open-source benchmark datasets, including AISHELL-1, AISHELL-2, WenetSpeech, LibriSpeech, and Common Voice. For Chinese and Cantonese recognition, the SenseVoice-Small model has an advantage.

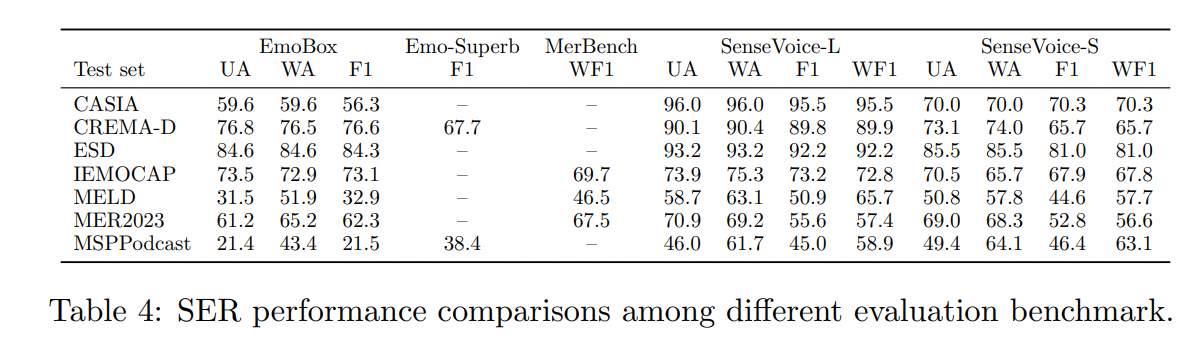

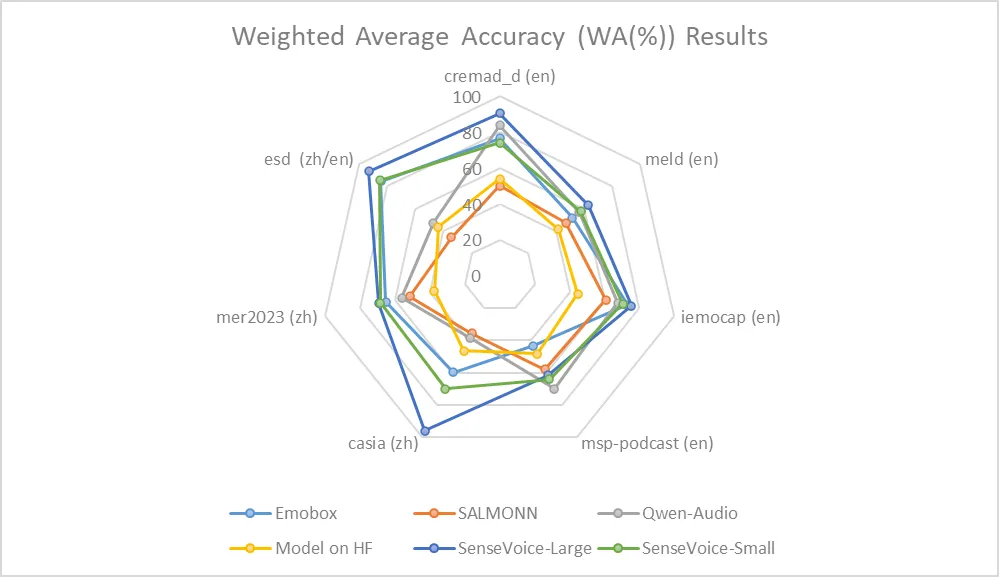

Because widely used benchmarks and methods for speech emotion recognition are currently lacking, we evaluated several metrics on multiple test sets and compared the results comprehensively with numerous results from recent benchmarks. The selected test sets cover both Chinese and English and include multiple styles such as performances, films, and natural conversations. Without finetuning on the target data, SenseVoice matched or exceeded the performance of the current best speech emotion recognition models.

Furthermore, we compared multiple open-source speech emotion recognition models on the test sets, and the results indicate that the SenseVoice-Large model achieved the best performance on nearly all datasets, while the SenseVoice-Small model also surpassed other open-source models on the majority of the datasets.

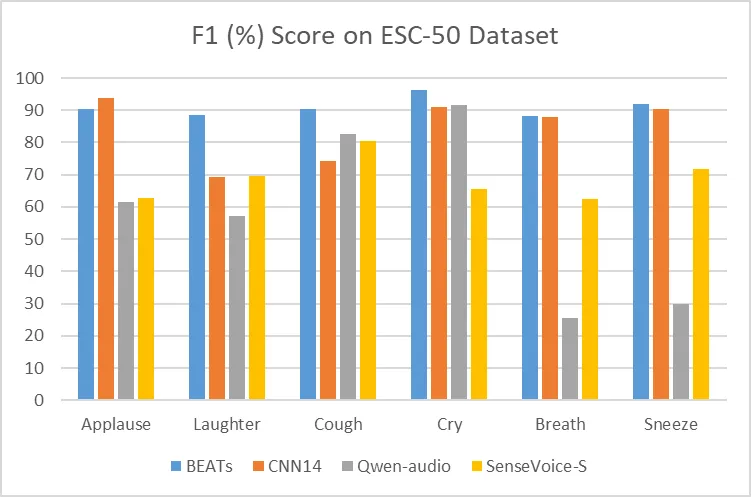

Although trained exclusively on speech data, SenseVoice can still function as a standalone event detection model. We compared its performance on the ESC-50 environmental sound classification dataset against the widely used industry models BEATs and PANNs. SenseVoice achieved commendable results on this task. However, due to limitations in training data and methodology, its event classification performance still lags behind specialized AED models.

The SenseVoice-Small model employs a non-autoregressive end-to-end architecture, resulting in extremely low inference latency. With a parameter count similar to Whisper-Small, it runs inference more than 5 times faster than Whisper-Small and 15 times faster than Whisper-Large.
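The latency figure quoted earlier in this README (70 ms to process 10 seconds of audio) can be restated as a real-time factor (RTF), the ratio of processing time to audio duration. The snippet below is only a worked calculation from those two numbers; the function name `real_time_factor` is illustrative and not part of FunASR.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Return processing time divided by audio duration (lower is faster)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


# Figures quoted in this README: 70 ms of compute for 10 s of audio.
rtf = real_time_factor(0.070, 10.0)
speedup = 1.0 / rtf
print(f"RTF = {rtf:.3f}, about {speedup:.0f}x faster than real time")
```

An RTF well below 1 means the model processes audio much faster than it plays back, which is what makes batch and streaming-style deployments practical.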

```shell
pip install -r requirements.txt
```

Supports input of audio in any format and of any duration.

```python
from funasr import AutoModel
from funasr.utils.postprocess_utils import rich_transcription_postprocess

model_dir = "iic/SenseVoiceSmall"

model = AutoModel(
    model=model_dir,
    trust_remote_code=True,
    remote_code="./model.py",
    vad_model="fsmn-vad",
    vad_kwargs={"max_single_segment_time": 30000},
    device="cuda:0",
)

# en
res = model.generate(
    input=f"{model.model_path}/example/en.mp3",
    cache={},
    language="auto",  # "zh", "en", "yue", "ja", "ko", "nospeech"
    use_itn=True,
    batch_size_s=60,
    merge_vad=True,
    merge_length_s=15,
)
text = rich_transcription_postprocess(res[0]["text"])
print(text)
```

Parameter description:

- `model_dir`: The name of the model, or the path to the model on the local disk.

- `trust_remote_code`:

  - When `True`, the model's code implementation is loaded from `remote_code`, which specifies the exact location of the model code (for example, `model.py` in the current directory). It supports absolute paths, relative paths, and network URLs.

  - When `False`, the model's code implementation is the version integrated within FunASR. In this case, modifications made to `model.py` in the current directory have no effect, because the version loaded is the internal one from FunASR. For the model code, click here to view.

- `vad_model`: Activates VAD (Voice Activity Detection), which splits long audio into shorter clips. In this case, the inference time includes both VAD and SenseVoice and represents the end-to-end latency. To measure the SenseVoice model's inference time separately, disable the VAD model.

- `vad_kwargs`: Specifies the configuration for the VAD model.

  - `max_single_segment_time`: The maximum duration of a segment produced by the `vad_model`, in milliseconds (ms).

- `use_itn`: Whether the output includes punctuation and inverse text normalization.

- `batch_size_s`: Enables dynamic batching, where the total duration of audio in a batch is measured in seconds (s).

- `merge_vad`: Whether to merge short audio fragments segmented by the VAD model, with the merged length being `merge_length_s`, in seconds (s).

- `ban_emo_unk`: Whether to ban the output of the `emo_unk` token.
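To make `merge_vad` and `merge_length_s` more concrete, the following is a minimal sketch of one way short VAD segments could be merged greedily into chunks. It is an illustration under assumptions, not FunASR's actual implementation: the function `merge_segments` and the `(start_ms, end_ms)` tuple format are hypothetical, and `merge_length_s` is treated here as an upper bound on the merged span.

```python
from typing import List, Tuple

Segment = Tuple[int, int]  # (start_ms, end_ms), a hypothetical format


def merge_segments(segments: List[Segment], merge_length_s: float) -> List[Segment]:
    """Greedily merge adjacent segments while the merged span stays within the limit."""
    limit_ms = int(merge_length_s * 1000)
    merged: List[Segment] = []
    for start, end in segments:
        if merged and end - merged[-1][0] <= limit_ms:
            # Extend the current chunk so that it also covers this segment.
            merged[-1] = (merged[-1][0], end)
        else:
            # The chunk would become too long (or none exists yet): start a new one.
            merged.append((start, end))
    return merged


vad_output = [(0, 4000), (4500, 9000), (9500, 16000), (16500, 20000)]
print(merge_segments(vad_output, merge_length_s=15))
```

Fewer, longer chunks generally mean fewer forward passes, at the cost of more audio per call.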

If all inputs are short audios (<30s), and batch inference is needed to speed up inference efficiency, the VAD model can be removed, and batch_size can be set accordingly.

```python
model = AutoModel(model=model_dir, trust_remote_code=True, device="cuda:0")

res = model.generate(
    input=f"{model.model_path}/example/en.mp3",
    cache={},
    language="auto",  # "zh", "en", "yue", "ja", "ko", "nospeech"
    use_itn=False,
    batch_size=64,
)
```

For more usage details, please refer to the docs.
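The example above uses a fixed `batch_size`, whereas the earlier `batch_size_s` parameter describes dynamic batching by total audio duration. As a rough sketch of that idea (not FunASR's internal batching code), the hypothetical helper `batch_by_duration` below groups clips so that each batch holds at most `batch_size_s` seconds of audio.

```python
from typing import List


def batch_by_duration(durations_s: List[float], batch_size_s: float) -> List[List[int]]:
    """Group clip indices so each batch totals at most batch_size_s seconds."""
    # Sort by duration so clips of similar length share a batch (less padding).
    order = sorted(range(len(durations_s)), key=lambda i: durations_s[i])
    batches: List[List[int]] = []
    current: List[int] = []
    total = 0.0
    for i in order:
        if current and total + durations_s[i] > batch_size_s:
            # Adding this clip would exceed the budget: close the current batch.
            batches.append(current)
            current, total = [], 0.0
        current.append(i)
        total += durations_s[i]
    if current:
        batches.append(current)
    return batches


durations = [12.0, 3.5, 28.0, 7.0, 15.0, 9.5]
print(batch_by_duration(durations, batch_size_s=60))
```

In this sketch a clip longer than the budget still gets a batch of its own rather than being dropped.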

Supports input of audio in any format, with an input duration limit of 30 seconds or less.

```python
from model import SenseVoiceSmall
from funasr.utils.postprocess_utils import rich_transcription_postprocess

model_dir = "iic/SenseVoiceSmall"
m, kwargs = SenseVoiceSmall.from_pretrained(model=model_dir, device="cuda:0")
m.eval()

res = m.inference(
    data_in=f"{kwargs['model_path']}/example/en.mp3",
    language="auto",  # "zh", "en", "yue", "ja", "ko", "nospeech"
    use_itn=False,
    ban_emo_unk=False,
    **kwargs,
)

text = rich_transcription_postprocess(res[0][0]["text"])
print(text)
```

ONNX and Libtorch export:

```python
# pip3 install -U funasr funasr-onnx
from pathlib import Path
from funasr_onnx import SenseVoiceSmall
from funasr_onnx.utils.postprocess_utils import rich_transcription_postprocess

model_dir = "iic/SenseVoiceSmall"

model = SenseVoiceSmall(model_dir, batch_size=10, quantize=True)

# inference
wav_or_scp = ["{}/.cache/modelscope/hub/{}/example/en.mp3".format(Path.home(), model_dir)]

res = model(wav_or_scp, language="auto", use_itn=True)
print([rich_transcription_postprocess(i) for i in res])
```

Note: The ONNX model is exported to the original model directory.

```python
from pathlib import Path
from funasr_torch import SenseVoiceSmall
from funasr_torch.utils.postprocess_utils import rich_transcription_postprocess

model_dir = "iic/SenseVoiceSmall"

model = SenseVoiceSmall(model_dir, batch_size=10, device="cuda:0")

wav_or_scp = ["{}/.cache/modelscope/hub/{}/example/en.mp3".format(Path.home(), model_dir)]

res = model(wav_or_scp, language="auto", use_itn=True)
print([rich_transcription_postprocess(i) for i in res])
```

Note: The Libtorch model is exported to the original model directory.
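The examples above pass raw model output through `rich_transcription_postprocess` to obtain display text. When the language, emotion, and event labels are needed as separate fields, they can be split off with a regular expression. The sketch below assumes the raw text begins with `<|...|>` tokens in the same form as the training labels described in this README (language, emotion, event, ITN flag, in that order). That ordering, the sample string, and the helper `parse_rich_text` are assumptions for illustration, not a documented FunASR API.

```python
import re
from typing import Dict

TAG_PATTERN = re.compile(r"<\|([^|]+)\|>")
FIELDS = ("language", "emotion", "event", "itn")  # assumed tag order


def parse_rich_text(raw: str) -> Dict[str, str]:
    """Split leading <|...|> tags from the transcript text."""
    result: Dict[str, str] = {}
    pos = 0
    for field in FIELDS:
        match = TAG_PATTERN.match(raw, pos)
        if match is None:
            break  # fewer tags than expected; keep what was found
        result[field] = match.group(1)
        pos = match.end()
    result["text"] = raw[pos:].strip()
    return result


# Hypothetical raw output, built from the label tokens used in this README.
sample = "<|en|><|NEUTRAL|><|Speech|><|withitn|>Including legal due diligence."
print(parse_rich_text(sample))
```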


```shell
git clone https://github.com/alibaba/FunASR.git && cd FunASR
pip3 install -e ./
```

Data examples:

{"key": "YOU0000008470_S0000238_punc_itn", "text_language": "<|en|>", "emo_target": "<|NEUTRAL|>", "event_target": "<|Speech|>", "with_or_wo_itn": "<|withitn|>", "target": "Including legal due diligence, subscription agreement, negotiation.", "source": "/cpfs01/shared/Group-speech/beinian.lzr/data/industrial_data/english_all/audio/YOU0000008470_S0000238.wav", "target_len": 7, "source_len": 140}

{"key": "AUD0000001556_S0007580", "text_language": "<|en|>", "emo_target": "<|NEUTRAL|>", "event_target": "<|Speech|>", "with_or_wo_itn": "<|woitn|>", "target": "there is a tendency to identify the self or take interest in what one has got used to", "source": "/cpfs01/shared/Group-speech/beinian.lzr/data/industrial_data/english_all/audio/AUD0000001556_S0007580.wav", "target_len": 18, "source_len": 360}

For the full example, refer to `data/train_example.jsonl`.

Data Preparation Details

Description:

- `key`: unique ID of the audio file

- `source`: path to the audio file

- `source_len`: number of fbank frames of the audio file

- `target`: transcription

- `target_len`: length of the target

- `text_language`: language ID of the audio file

- `emo_target`: emotion label of the audio file

- `event_target`: event label of the audio file

- `with_or_wo_itn`: whether the transcription includes punctuation and inverse text normalization
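Before finetuning, it can help to sanity-check each JSONL record against the field list above. The following is a minimal sketch, not part of the FunASR tooling: `validate_record` is a hypothetical helper, the example record is made up, and the allowed label sets are copied from the language, emotion, and event labels listed in this README plus the two ITN flags seen in the data examples.

```python
import json
from typing import List

REQUIRED_KEYS = {
    "key", "source", "source_len", "target", "target_len",
    "text_language", "emo_target", "event_target", "with_or_wo_itn",
}
LANGUAGES = {"<|zh|>", "<|en|>", "<|yue|>", "<|ja|>", "<|ko|>"}
EMOTIONS = {"<|HAPPY|>", "<|SAD|>", "<|ANGRY|>", "<|NEUTRAL|>",
            "<|FEARFUL|>", "<|DISGUSTED|>", "<|SURPRISED|>"}
EVENTS = {"<|BGM|>", "<|Speech|>", "<|Applause|>", "<|Laughter|>",
          "<|Cry|>", "<|Sneeze|>", "<|Breath|>", "<|Cough|>"}
ITN_FLAGS = {"<|withitn|>", "<|woitn|>"}


def validate_record(line: str) -> List[str]:
    """Return a list of problems found in one JSONL line (empty if none)."""
    record = json.loads(line)
    problems = [f"missing key: {k}" for k in sorted(REQUIRED_KEYS - set(record))]
    checks = (("text_language", LANGUAGES), ("emo_target", EMOTIONS),
              ("event_target", EVENTS), ("with_or_wo_itn", ITN_FLAGS))
    for field, allowed in checks:
        if field in record and record[field] not in allowed:
            problems.append(f"unexpected {field}: {record[field]}")
    return problems


# Made-up record for illustration only.
example = json.dumps({
    "key": "utt_0001", "source": "/path/to/utt_0001.wav", "source_len": 140,
    "target": "hello world", "target_len": 2, "text_language": "<|en|>",
    "emo_target": "<|NEUTRAL|>", "event_target": "<|Speech|>",
    "with_or_wo_itn": "<|woitn|>",
})
print(validate_record(example))
```

Running such a check over every line of a training file before launching a job is cheap compared with a failed finetuning run.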

`train_text.txt`

```text
BAC009S0764W0121 甚至出现交易几乎停滞的情况
BAC009S0916W0489 湖北一公司以员工名义贷款数十员工负债千万
asr_example_cn_en 所有只要处理 data 不管你是做 machine learning 做 deep learning 做 data analytics 做 data science 也好 scientist 也好通通都要都做的基本功啊那 again 先先对有一些>也许对
ID0012W0014 he tried to think how it could be
```

`train_wav.scp`

```text
BAC009S0764W0121 https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/BAC009S0764W0121.wav
BAC009S0916W0489 https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/BAC009S0916W0489.wav
asr_example_cn_en https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/asr_example_cn_en.wav
ID0012W0014 https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/asr_example_en.wav
```

`train_text_language.txt`

The language IDs include `<|zh|>`, `<|en|>`, `<|yue|>`, `<|ja|>`, and `<|ko|>`.

```text
BAC009S0764W0121 <|zh|>
BAC009S0916W0489 <|zh|>
asr_example_cn_en <|zh|>
ID0012W0014 <|en|>
```

`train_emo.txt`

The emotion labels include `<|HAPPY|>`, `<|SAD|>`, `<|ANGRY|>`, `<|NEUTRAL|>`, `<|FEARFUL|>`, `<|DISGUSTED|>`, and `<|SURPRISED|>`.

```text
BAC009S0764W0121 <|NEUTRAL|>
BAC009S0916W0489 <|NEUTRAL|>
asr_example_cn_en <|NEUTRAL|>
ID0012W0014 <|NEUTRAL|>
```

`train_event.txt`

The event labels include `<|BGM|>`, `<|Speech|>`, `<|Applause|>`, `<|Laughter|>`, `<|Cry|>`, `<|Sneeze|>`, `<|Breath|>`, and `<|Cough|>`.

```text
BAC009S0764W0121 <|Speech|>
BAC009S0916W0489 <|Speech|>
asr_example_cn_en <|Speech|>
ID0012W0014 <|Speech|>
```

Command:

```shell
# Generate train.jsonl (and, analogously, val.jsonl) from train_wav.scp, train_text.txt, train_text_language.txt, train_emo.txt, and train_event.txt
sensevoice2jsonl \
++scp_file_list='["../../../data/list/train_wav.scp", "../../../data/list/train_text.txt", "../../../data/list/train_text_language.txt", "../../../data/list/train_emo.txt", "../../../data/list/train_event.txt"]' \
++data_type_list='["source", "target", "text_language", "emo_target", "event_target"]' \
++jsonl_file_out="../../../data/list/train.jsonl"
```

If `train_text_language.txt`, `train_emo.txt`, and `train_event.txt` are not available, the language, emotion, and event labels are predicted automatically by the SenseVoice model.

```shell
# Generate train.jsonl (and, analogously, val.jsonl) from train_wav.scp and train_text.txt only
sensevoice2jsonl \
++scp_file_list='["../../../data/list/train_wav.scp", "../../../data/list/train_text.txt"]' \
++data_type_list='["source", "target"]' \
++jsonl_file_out="../../../data/list/train.jsonl" \
++model_dir='iic/SenseVoiceSmall'
```

Make sure to change `train_tool` in `finetune.sh` to the absolute path of `funasr/bin/train_ds.py` in the FunASR installation directory you set up earlier.

```shell
bash finetune.sh
```

To launch the WebUI:

```shell
python webui.py
```

- Triton (GPU) Deployment Best Practices: Using Triton + TensorRT, tested with FP32, achieving an acceleration ratio of 526 on a V100 GPU. FP16 support is in progress. Repository

- Sherpa-onnx Deployment Best Practices: Supports using SenseVoice in 10 programming languages: C++, C, Python, C#, Go, Swift, Kotlin, Java, JavaScript, and Dart. Also supports deploying SenseVoice on platforms like iOS, Android, and Raspberry Pi. Repository

If you run into problems, you can open an issue directly on the GitHub page.

You can also scan the following DingTalk group QR code to join the community group for communication and discussion.

| FunAudioLLM | FunASR |
|---|---|