
With FlashRAG and the provided resources, you can easily reproduce existing state-of-the-art (SOTA) work in the RAG domain or implement your own custom RAG processes and components.

-

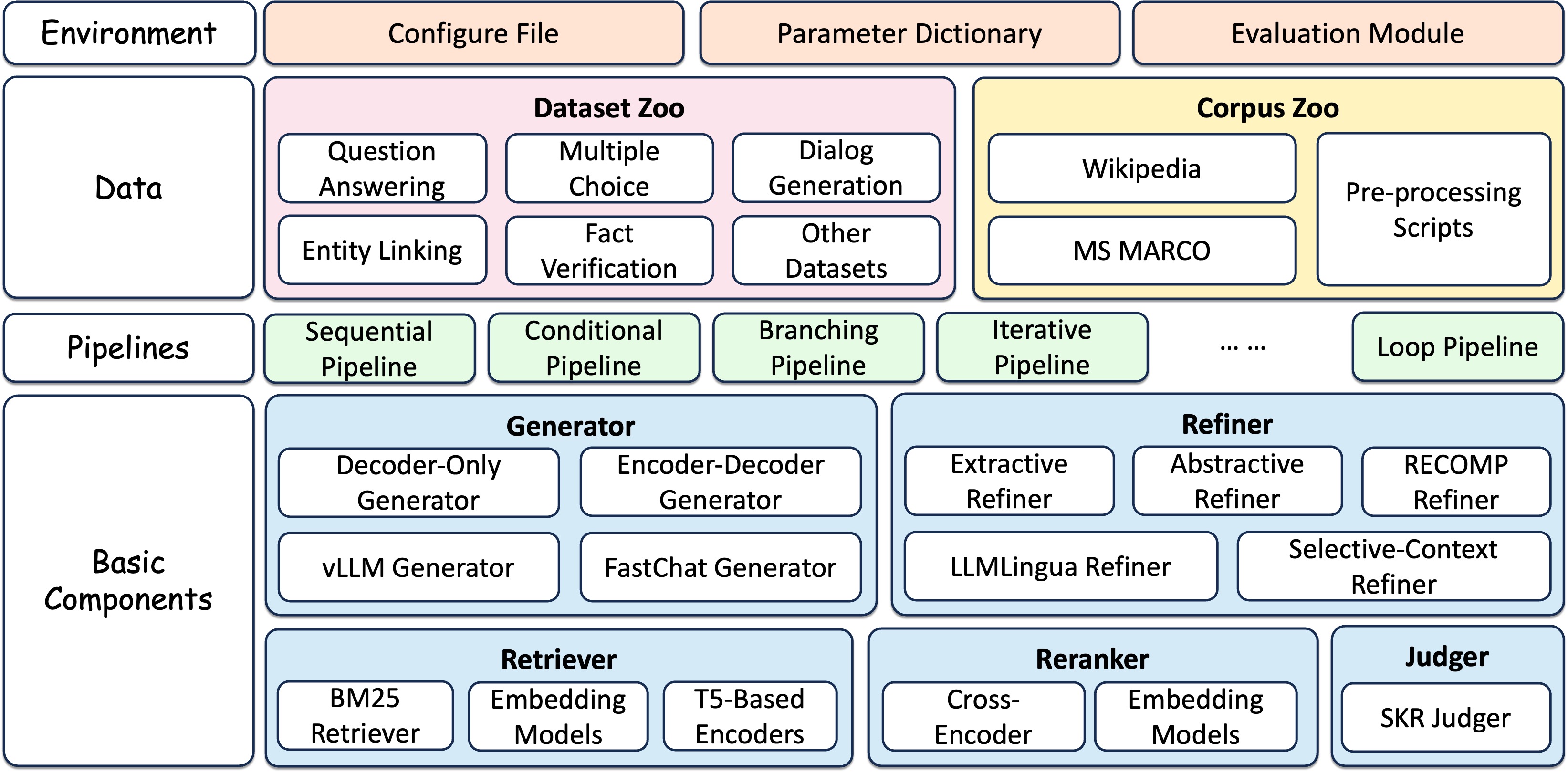

Extensive and Customizable Framework: Includes essential components for RAG scenarios such as retrievers, rerankers, generators, and compressors, allowing for flexible assembly of complex pipelines.

-

Comprehensive Benchmark Datasets: A collection of 32 pre-processed RAG benchmark datasets to test and validate RAG models' performances.

-

Pre-implemented Advanced RAG Algorithms: Features 14 advancing RAG algorithms with reported results, based on our framework. Easily reproducing results under different settings.

-

Efficient Preprocessing Stage: Simplifies the RAG workflow preparation by providing various scripts like corpus processing for retrieval, retrieval index building, and pre-retrieval of documents.

-

Optimized Execution: The library's efficiency is enhanced with tools like vLLM, FastChat for LLM inference acceleration, and Faiss for vector index management.

FlashRAG is still under development, and there are many open issues and much room for improvement. We will continue to update it, and we sincerely welcome contributions to this open-source toolkit. Roadmap:

- Support OpenAI models
- Support Claude and Gemini models
- Provide instructions for each component
- Integrate Sentence Transformers
- Include more RAG approaches
- Add more evaluation metrics (e.g., UniEval, named-entity F1) and benchmarks (e.g., the RGB benchmark)
- Enhance code adaptability and readability

[24/08/02] We added support for a new method, Spring, which significantly improves LLM performance by adding only a few token embeddings. See its results in the result table.

[24/07/17] Due to some unknown issues with Hugging Face, our original dataset link became invalid. We have updated it; please check the new link if you encounter any problems.

[24/07/06] We added support for a new method, Trace, which refines text by constructing a knowledge graph. See its results and details.

[24/06/19] We added support for a new method, IRCoT, and updated the result table.

[24/06/15] We provided a demo that performs the RAG process using our toolkit.

[24/06/11] We integrated Sentence Transformers into the retriever module, making it easier to use the retriever without setting pooling methods.

[24/06/05] We provided detailed documentation for reproducing existing methods (see how to reproduce and baseline details) and for configuration settings.

[24/06/02] We provided an introduction to FlashRAG for beginners; see An Introduction to FlashRAG (Chinese and Korean versions are also available).

[24/05/31] We added support for OpenAI-series models as generators.

To get started with FlashRAG, clone it from GitHub and install it (requires Python 3.9+):

```bash
git clone https://github.com/RUC-NLPIR/FlashRAG.git
cd FlashRAG
pip install -e .
```

Because installing faiss with pip can run into incompatibilities, install it with one of the following conda commands instead:

```bash
# CPU-only version
conda install -c pytorch faiss-cpu=1.8.0

# GPU(+CPU) version
conda install -c pytorch -c nvidia faiss-gpu=1.8.0
```

Note: The latest version of faiss cannot be installed on certain systems.

From the official Faiss repository (source):

- The CPU-only faiss-cpu conda package is currently available on Linux (x86_64 and arm64), OSX (arm64 only), and Windows (x86_64)

- faiss-gpu, containing both CPU and GPU indices, is available on Linux (x86_64 only) for CUDA 11.4 and 12.1
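If you script your setup, the availability list above can be encoded as a small lookup that suggests which conda package to try. This is a hypothetical helper (pick_faiss_package is not part of FlashRAG or Faiss); the platform strings it matches, such as "aarch64" or "AMD64" as reported by Python's platform.machine(), are our assumptions, and the list may change as Faiss publishes new packages.

```python
import platform

# Hypothetical helper (not part of FlashRAG or Faiss). It encodes the conda
# availability list quoted above; the platform.machine() spellings are assumptions.
CPU_PLATFORMS = {
    ("Linux", "x86_64"), ("Linux", "aarch64"), ("Linux", "arm64"),
    ("Darwin", "arm64"),
    ("Windows", "AMD64"), ("Windows", "x86_64"),
}
GPU_PLATFORMS = {("Linux", "x86_64")}
GPU_CUDA_VERSIONS = {"11.4", "12.1"}


def pick_faiss_package(system, machine, cuda_version=None):
    """Suggest 'faiss-gpu', 'faiss-cpu', or None if neither package is listed."""
    key = (system, machine)
    if cuda_version in GPU_CUDA_VERSIONS and key in GPU_PLATFORMS:
        return "faiss-gpu"
    if key in CPU_PLATFORMS:
        return "faiss-cpu"
    return None


if __name__ == "__main__":
    # No CUDA version is passed here, so this never suggests faiss-gpu.
    print(pick_faiss_package(platform.system(), platform.machine()))
```

The CUDA version is taken as an argument rather than detected, since detection depends on the local driver setup.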

For beginners, we provide An Introduction to FlashRAG (Chinese and Korean versions are also available) to help you get familiar with our toolkit. Alternatively, you can refer directly to the code below.

We provide a toy demo that implements a simple RAG process. You can freely change the corpus and models you want to use. The English demo uses general knowledge as the corpus, e5-base-v2 as the retriever, and Llama3-8B-instruct as the generator. The Chinese demo uses data crawled from the official website of Renmin University of China as the corpus, bge-large-zh-v1.5 as the retriever, and Qwen1.5-14B as the generator. Please fill in the corresponding paths in the file.

To run the demo:

```bash
cd examples/quick_start
# copy the config file here; otherwise, streamlit will complain that the file does not exist
cp ../methods/my_config.yaml .
# run the English demo
streamlit run demo_en.py
# run the Chinese demo
streamlit run demo_zh.py
```

We also provide an example of using our framework for pipeline execution.

Run the following code to implement a naive RAG pipeline using the provided toy datasets.

The default retriever is e5-base-v2 and the default generator is Llama3-8B-instruct. You need to fill in the corresponding model paths in the following command. If you wish to use other models, please refer to the detailed instructions below.

```bash
cd examples/quick_start
python simple_pipeline.py \
    --model_path <Llama-3-8B-instruct-PATH> \
    --retriever_path <E5-PATH>
```

After the run completes, you can view the intermediate results and the final evaluation score in the output folder under the corresponding path.

You can use the pipeline classes we have already built (see pipelines) to implement the RAG process. In this case, you only need to set up the config and load the corresponding pipeline.

First, load the config for the entire process, which records the various hyperparameters required by the RAG process. You can pass settings as a yaml file, directly as variables, or both; when the same setting appears in both, the value passed as a variable takes priority over the one in the file.

```python
from flashrag.config import Config

config_dict = {'data_dir': 'dataset/'}
my_config = Config(config_file_path='my_config.yaml',
                   config_dict=config_dict)
```

We provide comprehensive guidance on setting configurations; see our configuration guidance. You can also refer to the basic yaml file we provide to set your own parameters.
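To make the priority rule concrete, here is a minimal sketch of how variable settings could override file settings. It is not FlashRAG's Config implementation: merge_config is hypothetical, the yaml file is shown as an already-parsed dictionary so no yaml library is needed, the keys and values are illustrative, and the recursive handling of nested keys is our assumption about a reasonable behavior rather than a documented one.

```python
from copy import deepcopy


def merge_config(file_config, variable_config):
    """Hypothetical sketch: values passed as variables override file values.

    Nested dictionaries are merged key by key, so overriding one sub-key
    keeps its siblings from the file. Neither input is modified.
    """
    merged = deepcopy(file_config)
    for key, value in variable_config.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Stand-in for the parsed contents of my_config.yaml (illustrative values only).
file_config = {"data_dir": "old_dataset/", "generation_params": {"max_tokens": 32, "do_sample": False}}
config_dict = {"data_dir": "dataset/", "generation_params": {"max_tokens": 64}}

print(merge_config(file_config, config_dict))
# {'data_dir': 'dataset/', 'generation_params': {'max_tokens': 64, 'do_sample': False}}
```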

Next, load the corresponding dataset and initialize the pipeline. The components in the pipeline will be automatically loaded.

```python
from flashrag.utils import get_dataset
from flashrag.pipeline import SequentialPipeline
from flashrag.prompt import PromptTemplate
from flashrag.config import Config

config_dict = {'data_dir': 'dataset/'}
my_config = Config(config_file_path='my_config.yaml',
                   config_dict=config_dict)

all_split = get_dataset(my_config)
test_data = all_split['test']
pipeline = SequentialPipeline(my_config)
```

You can specify your own input prompt using PromptTemplate:

```python
prompt_template = PromptTemplate(
    my_config,
    system_prompt="Answer the question based on the given document. Only give me the answer and do not output any other words.\nThe following are given documents.\n\n{reference}",
    user_prompt="Question: {question}\nAnswer:"
)
pipeline = SequentialPipeline(my_config, prompt_template=prompt_template)
```

Finally, call pipeline.run to obtain the final result.

```python
output_dataset = pipeline.run(test_data, do_eval=True)
```

The output_dataset contains the intermediate results and metric scores for each item in the input dataset.

Meanwhile, the dataset with intermediate results and the overall evaluation score will also be saved to files (if save_intermediate_data and save_metric_score are specified).
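To see what the {reference} and {question} placeholders in the custom prompt above turn into, the sketch below fills them with plain str.format. The helper names and the "Doc i (Title: ...)" layout for retrieved documents are hypothetical choices for illustration; FlashRAG's PromptTemplate may format references differently.

```python
# Hypothetical sketch of filling the {reference} and {question} placeholders.
# The "Doc i (Title: ...)" layout is an assumption, not FlashRAG's exact format.
SYSTEM_PROMPT = (
    "Answer the question based on the given document. Only give me the answer "
    "and do not output any other words.\nThe following are given documents.\n\n{reference}"
)
USER_PROMPT = "Question: {question}\nAnswer:"


def format_reference(docs):
    """Join retrieved docs whose 'contents' field is '{title}\\n{text}'."""
    lines = []
    for i, doc in enumerate(docs, start=1):
        title, _, text = doc["contents"].partition("\n")
        lines.append(f"Doc {i} (Title: {title}) {text}")
    return "\n".join(lines)


def build_messages(question, docs):
    """Return chat-style messages with both placeholders filled in."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT.format(reference=format_reference(docs))},
        {"role": "user", "content": USER_PROMPT.format(question=question)},
    ]


docs = [{"id": "0", "contents": "Paris\nParis is the capital of France."}]
for message in build_messages("What is the capital of France?", docs):
    print(message["role"], "->", message["content"])
```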

Sometimes you may need to implement a more complex RAG process; in that case, you can build your own pipeline.

You only need to inherit from BasicPipeline, initialize the components you need, and implement the run function.

```python
from flashrag.pipeline import BasicPipeline
from flashrag.utils import get_retriever, get_generator

class ToyPipeline(BasicPipeline):
    def __init__(self, config, prompt_template=None):
        # Set up the shared BasicPipeline state (config, prompt template, etc.)
        super().__init__(config, prompt_template)
        # Load your own components here, e.g. with get_retriever / get_generator

    def run(self, dataset, do_eval=True):
        # Complete your own process logic
        # get attributes of the dataset using `.`
        input_query = dataset.question
        ...
        # your logic above should produce pred_answer_list
        # use `update_output` to save intermediate data
        dataset.update_output("pred", pred_answer_list)
        dataset = self.evaluate(dataset, do_eval=do_eval)
        return dataset
```

Please first consult our documentation to understand the input and output formats of the components you need to use.

If you already have your own code and only want to embed our components in it, you can refer to the basic introduction of the components for the input and output formats of each component.
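As a self-contained illustration of the retrieve, prompt, generate, and evaluate shape that a custom run function typically follows, here is a mock pipeline that runs without FlashRAG installed. Every class and function in it (ToyDataset, toy_retrieve, toy_generate, MockPipeline) is hypothetical: the retriever ranks by word overlap, the "generator" simply copies the last word of the top document, and evaluation is a plain exact-match check instead of BasicPipeline.evaluate.

```python
import string


def tokens(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(string.punctuation) for w in text.lower().split()}


class ToyDataset:
    """Hypothetical stand-in for a dataset: attribute access plus update_output."""

    def __init__(self, questions, golden_answers):
        self.question = questions
        self.golden_answers = golden_answers
        self.output = {}

    def update_output(self, key, values):
        self.output[key] = values


def toy_retrieve(query, corpus, topk=1):
    """Rank documents by the number of words shared with the query."""
    return sorted(corpus, key=lambda doc: -len(tokens(query) & tokens(doc)))[:topk]


def toy_generate(prompt):
    """Fake LLM: returns the last word of the first (document) line of the prompt."""
    return prompt.splitlines()[0].split()[-1].strip(string.punctuation)


class MockPipeline:
    def __init__(self, corpus):
        self.corpus = corpus

    def run(self, dataset, do_eval=True):
        retrieval = [toy_retrieve(q, self.corpus) for q in dataset.question]
        dataset.update_output("retrieval_result", retrieval)
        prompts = [f"{docs[0]}\nQuestion: {q}\nAnswer:"
                   for q, docs in zip(dataset.question, retrieval)]
        preds = [toy_generate(p) for p in prompts]
        dataset.update_output("pred", preds)
        if do_eval:
            # Case-insensitive exact match against any golden answer
            dataset.update_output("em", [
                pred.lower() in {a.lower() for a in golds}
                for pred, golds in zip(preds, dataset.golden_answers)
            ])
        return dataset


corpus = ["The capital of France is Paris.", "The capital of Japan is Tokyo."]
data = ToyDataset(["capital of Japan?", "capital of France?"], [["Tokyo"], ["Paris"]])
result = MockPipeline(corpus).run(data)
print(result.output["pred"], result.output["em"])  # ['Tokyo', 'Paris'] [True, True]
```

Keeping intermediate outputs on the dataset object, as update_output does here, is what lets them be inspected or saved after the run.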

In FlashRAG, we have built a series of common RAG components, including retrievers, generators, refiners, and more. Based on these components, we have assembled several pipelines to implement the RAG workflow, while also providing the flexibility to combine these components in custom arrangements to create your own pipeline.

| Type | Module | Description |
|---|---|---|
| Judger | SKR Judger | Judging whether to retrieve using SKR method |
| Retriever | Dense Retriever | Bi-encoder models such as dpr, bge, e5, using faiss for search |
| | BM25 Retriever | Sparse retrieval method based on Lucene |
| Reranker | Bi-Encoder Reranker | Calculate matching score using bi-encoder |
| | Cross-Encoder Reranker | Calculate matching score using cross-encoder |
| Refiner | Extractive Refiner | Refine input by extracting important context |
| | Abstractive Refiner | Refine input through seq2seq model |
| | LLMLingua Refiner | LLMLingua-series prompt compressor |
| | SelectiveContext Refiner | Selective-Context prompt compressor |
| | KG Refiner | Use Trace method to construct a knowledge graph |
| Generator | Encoder-Decoder Generator | Encoder-Decoder model, supporting Fusion-in-Decoder (FiD) |
| | Decoder-only Generator | Native transformers implementation |
| | FastChat Generator | Accelerate with FastChat |
| | vllm Generator | Accelerate with vllm |
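The difference between the two reranker types in the table can be sketched in a few lines. In a bi-encoder, the query and each document are encoded separately and compared by a similarity score, so document vectors can be computed ahead of time; a cross-encoder instead feeds each query-document pair through one model jointly, which is typically more accurate but more expensive. The sketch below shows only the bi-encoder side and, as an assumption for self-containment, replaces the neural encoder with bag-of-words counts; it is not FlashRAG's reranker code.

```python
import math
from collections import Counter


def embed(text):
    """Toy encoder: bag-of-words counts (a real bi-encoder uses a neural model)."""
    return Counter(word.strip(".,?!").lower() for word in text.split())


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * v[word] for word, count in u.items())
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(sum(c * c for c in v.values()))
    return dot / norm if norm else 0.0


def bi_encoder_rerank(query, docs, topk=None):
    """Encode query and documents independently, then sort by similarity."""
    query_vec = embed(query)
    scored = [(cosine(query_vec, embed(doc)), doc) for doc in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:topk] if topk is not None else scored


docs = ["Paris hosts the Louvre.", "Tokyo is the capital of Japan.", "Japan has many islands."]
for score, doc in bi_encoder_rerank("What is the capital of Japan?", docs):
    print(f"{score:.3f}  {doc}")
```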

Referring to a survey on retrieval-augmented generation, we categorized RAG methods into four types based on their inference paths.

- Sequential: Sequential execution of the RAG process, like Query-(pre-retrieval)-retriever-(post-retrieval)-generator
- Conditional: Implements different paths for different types of input queries
- Branching: Executes multiple paths in parallel, merging the responses from each path
- Loop: Iteratively performs retrieval and generation
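The four categories differ mainly in control flow. The sketch below is a hypothetical illustration of that difference, not FlashRAG's pipeline classes: retrieval and generation are passed in as plain functions, the judger is a caller-supplied predicate, and majority voting is just one possible merge rule for the branching case (REPLUG and SuRe each use their own).

```python
from collections import Counter

# Hypothetical control-flow sketches. `retrieve(query)` returns a list of
# documents and `generate(query, docs)` returns an answer string.


def sequential(query, retrieve, generate):
    """Query -> retrieval -> generation, executed once."""
    return generate(query, retrieve(query))


def conditional(query, retrieve, generate, needs_retrieval):
    """A judger decides, per query, whether to retrieve at all."""
    docs = retrieve(query) if needs_retrieval(query) else []
    return generate(query, docs)


def branching(query, retrieve, generate):
    """One generation path per document, merged here by majority vote."""
    answers = [generate(query, [doc]) for doc in retrieve(query)]
    if not answers:
        return generate(query, [])
    return Counter(answers).most_common(1)[0][0]


def loop(query, retrieve, generate, max_iter=3):
    """Alternate retrieval and generation, feeding each answer into the next query."""
    answer = ""
    for _ in range(max_iter):
        docs = retrieve(f"{query} {answer}".strip())
        answer = generate(query, docs)
    return answer


seen_queries = []  # records what the toy retriever was asked


def toy_retrieve(query):
    seen_queries.append(query)
    return ["doc A", "doc B"]


def toy_generate(query, docs):
    return f"used {len(docs)} doc(s)"


print(sequential("q", toy_retrieve, toy_generate))                    # used 2 doc(s)
print(conditional("q", toy_retrieve, toy_generate, lambda q: False))  # used 0 doc(s)
print(branching("q", toy_retrieve, toy_generate))                     # used 1 doc(s)
print(loop("q", toy_retrieve, toy_generate))                          # used 2 doc(s)
print(seen_queries[-3:])  # the loop's later queries include the previous answer
```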

In each category, we have implemented common pipelines, some of which correspond to published papers.

| Type | Module | Description |
|---|---|---|
| Sequential | Sequential Pipeline | Linear execution of query, supporting refiner, reranker |
| Conditional | Conditional Pipeline | With a judger module, distinct execution paths for various query types |
| Branching | REPLUG Pipeline | Generate answer by integrating probabilities in multiple generation paths |
| | SuRe Pipeline | Ranking and merging generated results based on each document |
| Loop | Iterative Pipeline | Alternating retrieval and generation |
| | Self-Ask Pipeline | Decompose complex problems into subproblems using self-ask |
| | Self-RAG Pipeline | Adaptive retrieval, critique, and generation |
| | FLARE Pipeline | Dynamic retrieval during the generation process |
| | IRCoT Pipeline | Integrate retrieval process with CoT |
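The REPLUG pipeline's "integrating probabilities" step can be written as p(y | x) = Σ_d λ(d, x) · p(y | d, x), where λ(d, x) is the softmax of the retrieval scores s(d, x) over the retrieved documents, following the formulation in the REPLUG paper. The sketch below applies that mixture to toy next-token distributions; the scores and probabilities are made-up inputs, and FlashRAG's REPLUG pipeline may differ in details such as score scaling.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of retrieval scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def replug_ensemble(doc_scores, per_doc_token_probs):
    """Mix per-document next-token distributions, weighted by softmaxed scores.

    doc_scores: retrieval score s(d, x) for each retrieved document d.
    per_doc_token_probs: for each document, a dict token -> p(token | d, x).
    """
    weights = softmax(doc_scores)
    mixed = {}
    for weight, probs in zip(weights, per_doc_token_probs):
        for token, p in probs.items():
            mixed[token] = mixed.get(token, 0.0) + weight * p
    return mixed


scores = [2.0, 0.0]  # the first document is judged more relevant
probs = [{"Paris": 0.9, "Lyon": 0.1}, {"Paris": 0.2, "Lyon": 0.8}]
mixed = replug_ensemble(scores, probs)
print(max(mixed, key=mixed.get), mixed)
```

Because the weights sum to 1 and each per-document distribution sums to 1, the mixture is itself a valid distribution, and a highly scored document dominates without fully silencing the others.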

We have implemented 14 methods with the following consistent settings:

- Generator: Llama3-8B-instruct with an input length of 2048
- Retriever: e5-base-v2 as the embedding model, retrieving 5 documents per query
- Prompt: A consistent default prompt; the template can be found in the method details.

For open-source methods, we implemented their processes using our framework. For methods whose authors did not provide source code, we followed the original papers as closely as possible.

Settings and hyperparameters specific to some methods are documented in the "Specific setting" column. For more details, please consult our reproduction guidance and method details.

Note that, to ensure consistency, we use a uniform setting across methods. This setting may differ from each method's original setting, so our results may vary from those reported in the original papers.

| Method | Type | NQ (EM) | TriviaQA (EM) | HotpotQA (F1) | 2Wiki (F1) | PopQA (F1) | WebQA (EM) | Specific setting |
|---|---|---|---|---|---|---|---|---|
| Naive Generation | Sequential | 22.6 | 55.7 | 28.4 | 33.9 | 21.7 | 18.8 | |
| Standard RAG | Sequential | 35.1 | 58.9 | 35.3 | 21.0 | 36.7 | 15.7 | |
| AAR-contriever-kilt | Sequential | 30.1 | 56.8 | 33.4 | 19.8 | 36.1 | 16.1 | |
| LongLLMLingua | Sequential | 32.2 | 59.2 | 37.5 | 25.0 | 38.7 | 17.5 | Compress Ratio=0.5 |
| RECOMP-abstractive | Sequential | 33.1 | 56.4 | 37.5 | 32.4 | 39.9 | 20.2 | |
| Selective-Context | Sequential | 30.5 | 55.6 | 34.4 | 18.5 | 33.5 | 17.3 | Compress Ratio=0.5 |
| Trace | Sequential | 30.7 | 50.2 | 34.0 | 15.5 | 37.4 | 19.9 | |
| Spring | Sequential | 37.9 | 64.6 | 42.6 | 37.3 | 54.8 | 27.7 | Use Llama2-7B-chat with trained embedding table |
| SuRe | Branching | 37.1 | 53.2 | 33.4 | 20.6 | 48.1 | 24.2 | Use provided prompt |
| REPLUG | Branching | 28.9 | 57.7 | 31.2 | 21.1 | 27.8 | 20.2 | |
| SKR | Conditional | 33.2 | 56.0 | 32.4 | 23.4 | 31.7 | 17.0 | Use inference-time training data |
| Ret-Robust | Loop | 42.9 | 68.2 | 35.8 | 43.4 | 57.2 | 33.7 | Use Llama2-13B with trained LoRA |
| Self-RAG | Loop | 36.4 | 38.2 | 29.6 | 25.1 | 32.7 | 21.9 | Use trained selfrag-llama2-7B |
| FLARE | Loop | 22.5 | 55.8 | 28.0 | 33.9 | 20.7 | 20.2 | |
| Iter-Retgen, ITRG | Loop | 36.8 | 60.1 | 38.3 | 21.6 | 37.9 | 18.2 | |
| IRCoT | Loop | 33.3 | 56.9 | 41.5 | 32.4 | 45.6 | 20.7 | |
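The table reports EM for some datasets and F1 for others. For reference, below are the widely used SQuAD-style definitions of both metrics, computed per question and then averaged over a dataset (and typically reported as a percentage). This is a sketch of the common convention, not FlashRAG's evaluator; its normalization rules may differ in detail.

```python
import re
import string
from collections import Counter

PUNCTUATION = set(string.punctuation)


def normalize_answer(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = "".join(ch for ch in text.lower() if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, golden_answers):
    """1.0 if the normalized prediction equals any normalized golden answer."""
    pred = normalize_answer(prediction)
    return float(any(pred == normalize_answer(g) for g in golden_answers))


def token_f1(prediction, golden_answers):
    """Highest token-level F1 between the prediction and any golden answer."""
    pred_tokens = normalize_answer(prediction).split()
    best = 0.0
    for golden in golden_answers:
        gold_tokens = normalize_answer(golden).split()
        overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
        if overlap == 0:
            continue
        precision = overlap / len(pred_tokens)
        recall = overlap / len(gold_tokens)
        best = max(best, 2 * precision * recall / (precision + recall))
    return best


print(exact_match("The Eiffel Tower", ["Eiffel Tower"]))              # 1.0
print(round(token_f1("Eiffel Tower in Paris", ["Eiffel Tower"]), 3))  # 0.667
```

EM gives no credit for a verbose but correct answer, while F1 gives partial credit, which is one reason long-answer methods can look better under F1.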

We have collected and processed 35 datasets widely used in RAG research, pre-processing them into a consistent format for ease of use. For certain datasets (such as WikiASP), we have adapted them to the requirements of RAG tasks following methods commonly used in the community. All datasets are available on Hugging Face Datasets.

For each dataset, we save each split as a jsonl file, and each line is a dict as follows:

```python
{
    'id': str,
    'question': str,
    'golden_answers': List[str],
    'metadata': dict
}
```

Below is the list of datasets along with the corresponding sample sizes:

| Task | Dataset Name | Knowledge Source | # Train | # Dev | # Test |
|---|---|---|---|---|---|
| QA | NQ | wiki | 79,168 | 8,757 | 3,610 |
| QA | TriviaQA | wiki & web | 78,785 | 8,837 | 11,313 |
| QA | PopQA | wiki | / | / | 14,267 |
| QA | SQuAD | wiki | 87,599 | 10,570 | / |
| QA | MSMARCO-QA | web | 808,731 | 101,093 | / |
| QA | NarrativeQA | books and story | 32,747 | 3,461 | 10,557 |
| QA | WikiQA | wiki | 20,360 | 2,733 | 6,165 |
| QA | WebQuestions | Google Freebase | 3,778 | / | 2,032 |
| QA | AmbigQA | wiki | 10,036 | 2,002 | / |
| QA | SIQA | - | 33,410 | 1,954 | / |
| QA | CommonsenseQA | - | 9,741 | 1,221 | / |
| QA | BoolQ | wiki | 9,427 | 3,270 | / |
| QA | PIQA | - | 16,113 | 1,838 | / |
| QA | Fermi | wiki | 8,000 | 1,000 | 1,000 |
| multi-hop QA | HotpotQA | wiki | 90,447 | 7,405 | / |
| multi-hop QA | 2WikiMultiHopQA | wiki | 15,000 | 12,576 | / |
| multi-hop QA | Musique | wiki | 19,938 | 2,417 | / |
| multi-hop QA | Bamboogle | wiki | / | / | 125 |
| Long-form QA | ASQA | wiki | 4,353 | 948 | / |
| Long-form QA | ELI5 | | 272,634 | 1,507 | / |
| Open-Domain Summarization | WikiASP | wiki | 300,636 | 37,046 | 37,368 |
| multiple-choice | MMLU | - | 99,842 | 1,531 | 14,042 |
| multiple-choice | TruthfulQA | wiki | / | 817 | / |
| multiple-choice | HellaSWAG | ActivityNet | 39,905 | 10,042 | / |
| multiple-choice | ARC | - | 3,370 | 869 | 3,548 |
| multiple-choice | OpenBookQA | - | 4,957 | 500 | 500 |
| Fact Verification | FEVER | wiki | 104,966 | 10,444 | / |
| Dialog Generation | WOW | wiki | 63,734 | 3,054 | / |
| Entity Linking | AIDA CoNLL-YAGO | Freebase & wiki | 18,395 | 4,784 | / |
| Entity Linking | WNED | wiki | / | 8,995 | / |
| Slot Filling | T-REx | DBPedia | 2,284,168 | 5,000 | / |
| Slot Filling | Zero-shot RE | wiki | 147,909 | 3,724 | / |
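Because every split shares the per-line format described above, a quick schema check can catch malformed files before a run. The sketch below is a hypothetical helper (load_split is not a FlashRAG function) that parses jsonl lines with the standard json module and checks the four documented fields and their types.

```python
import io
import json

# Field name -> expected Python type, per the per-line format described above.
REQUIRED_FIELDS = {"id": str, "question": str, "golden_answers": list, "metadata": dict}


def load_split(lines):
    """Parse an iterable of jsonl lines and validate each item's fields."""
    items = []
    for line_no, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # skip blank lines
        item = json.loads(line)
        for field, expected_type in REQUIRED_FIELDS.items():
            if not isinstance(item.get(field), expected_type):
                raise ValueError(f"line {line_no}: '{field}' must be {expected_type.__name__}")
        if not all(isinstance(ans, str) for ans in item["golden_answers"]):
            raise ValueError(f"line {line_no}: golden_answers must be a list of strings")
        items.append(item)
    return items


sample = io.StringIO(
    '{"id": "test_0", "question": "who wrote hamlet?", '
    '"golden_answers": ["William Shakespeare"], "metadata": {}}\n'
)
print(load_split(sample))
```

An open file object works the same way, since iterating over it yields one line at a time.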

Our toolkit supports the jsonl format for retrieval document collections, with the following structure:

```
{"id":"0", "contents": "...."}
{"id":"1", "contents": "..."}
```

The contents key is essential for building the index. For documents that include both text and title, we recommend setting the value of contents to {title}\n{text}. The corpus file can also contain other keys to record additional attributes of the documents.
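Following the recommendation above, a corpus file can be produced from title/text records with the standard json module. The to_corpus_lines helper is hypothetical, as are the choices to use the bare text when a document has no title and to copy any extra fields through as additional keys.

```python
import json


def to_corpus_lines(documents):
    """Convert dicts with 'text' (and optionally 'title') into corpus jsonl lines.

    contents is "{title}\\n{text}" when a title exists; other keys are kept
    as extra document attributes.
    """
    lines = []
    for idx, doc in enumerate(documents):
        title, text = doc.get("title", ""), doc["text"]
        record = {"id": str(idx), "contents": f"{title}\n{text}" if title else text}
        for key, value in doc.items():
            if key not in ("id", "title", "text", "contents"):
                record[key] = value
        lines.append(json.dumps(record, ensure_ascii=False))
    return lines


docs = [
    {"title": "Hamlet", "text": "Hamlet is a tragedy by William Shakespeare.", "source": "wiki"},
    {"text": "A passage without a title."},
]
for line in to_corpus_lines(docs):
    print(line)
```

Writing these lines to a file, one per line, yields a corpus in the structure shown above.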

In academic research, Wikipedia and MS MARCO are the most commonly used retrieval document collections. For Wikipedia, we provide a comprehensive script to process any Wikipedia dump into a clean corpus. Various processed versions of the Wikipedia corpus are also available from other works, and we have listed some reference links.

MS MARCO is already processed upon release and can be downloaded directly from its hosting link on Hugging Face.

- How should I set different experimental parameters?

- How to build my own corpus, such as a specific segmented Wikipedia?

- How to index my own corpus?

- How to reproduce supporting methods?

FlashRAG is licensed under the MIT License.

Please cite our paper if it helps your research:

```bibtex
@article{FlashRAG,
  author={Jiajie Jin and
          Yutao Zhu and
          Xinyu Yang and
          Chenghao Zhang and
          Zhicheng Dou},
  title={FlashRAG: A Modular Toolkit for Efficient Retrieval-Augmented Generation Research},
  journal={CoRR},
  volume={abs/2405.13576},
  year={2024},
  url={https://arxiv.org/abs/2405.13576},
  eprinttype={arXiv},
  eprint={2405.13576}
}
```