This GitHub repository contains the code examples for the 1st edition of the Python Machine Learning book. If you are looking for the code examples of the 2nd edition, please refer to this repository instead.

What you can expect are 400 pages rich in useful material, covering just about everything you need to know to get started with machine learning, from theory to the actual code that you can directly put into action! This is not just yet another "this is how scikit-learn works" book. I aim to explain all the underlying concepts, tell you everything you need to know in terms of best practices and caveats, and we will put those concepts into action mainly using NumPy, scikit-learn, and Theano.

Not sure if this book is for you? Please check out the excerpts from the Foreword and Preface, or take a look at the FAQ section for further information.

1st edition, published September 23rd 2015

Paperback: 454 pages

Publisher: Packt Publishing

Language: English

ISBN-10: 1783555130

ISBN-13: 978-1783555130

Kindle ASIN: B00YSILNL0

German ISBN-13: 978-3958454224

Japanese ISBN-13: 978-4844380603

Italian ISBN-13: 978-8850333974

Chinese ISBN-13: 978-9864341405

Korean ISBN-13: 979-1187497035

Russian ISBN-13: 978-5970604090

Simply click on the ipynb/nbviewer links next to the chapter headlines to view the code examples (currently, the internal document links are only supported by the NbViewer version).

Please note that these are just the code examples accompanying the book, which I uploaded for your convenience; be aware that these notebooks may not be useful without the formulae and descriptive text.

- Excerpts from the Foreword and Preface

- Instructions for setting up Python and the Jupyter Notebook

- Machine Learning - Giving Computers the Ability to Learn from Data [dir] [ipynb] [nbviewer]

- Training Machine Learning Algorithms for Classification [dir] [ipynb] [nbviewer]

- A Tour of Machine Learning Classifiers Using Scikit-Learn [dir] [ipynb] [nbviewer]

- Building Good Training Sets – Data Pre-Processing [dir] [ipynb] [nbviewer]

- Compressing Data via Dimensionality Reduction [dir] [ipynb] [nbviewer]

- Learning Best Practices for Model Evaluation and Hyperparameter Optimization [dir] [ipynb] [nbviewer]

- Combining Different Models for Ensemble Learning [dir] [ipynb] [nbviewer]

- Applying Machine Learning to Sentiment Analysis [dir] [ipynb] [nbviewer]

- Embedding a Machine Learning Model into a Web Application [dir] [ipynb] [nbviewer]

- Predicting Continuous Target Variables with Regression Analysis [dir] [ipynb] [nbviewer]

- Working with Unlabeled Data – Clustering Analysis [dir] [ipynb] [nbviewer]

- Training Artificial Neural Networks for Image Recognition [dir] [ipynb] [nbviewer]

- Parallelizing Neural Network Training via Theano [dir] [ipynb] [nbviewer]

A big thanks to Dmitriy Dligach for sharing his slides from his machine learning course that is currently offered at Loyola University Chicago.

Some readers asked about math and NumPy primers, which were not included in the book due to length limitations. I recently put together such resources for another book and made these chapters freely available online in the hope that they also serve as helpful background material for this book:

- Introduction to NumPy [PDF] [EPUB] [Code Notebook]

You are very welcome to re-use the code snippets or other contents from this book in scientific publications and other works; in this case, I would appreciate citations to the original source:

BibTeX:

@Book{raschka2015python,

author = {Raschka, Sebastian},

title = {Python Machine Learning},

publisher = {Packt Publishing},

year = {2015},

address = {Birmingham, UK},

isbn = {1783555130}

}

MLA:

Raschka, Sebastian. Python machine learning. Birmingham, UK: Packt Publishing, 2015. Print.

Sebastian Raschka’s new book, Python Machine Learning, has just been released. I got a chance to read a review copy and it’s just as I expected - really great! It’s well organized, super easy to follow, and it not only offers a good foundation for smart, non-experts, practitioners will get some ideas and learn new tricks here as well.

– Lon Riesberg at Data Elixir

Superb job! Thus far, for me it seems to have hit the right balance of theory and practice…math and code!

– Brian Thomas

I've read (virtually) every Machine Learning title based around Scikit-learn and this is hands-down the best one out there.

– Jason Wolosonovich

The best book I've seen to come out of PACKT Publishing. This is a very well written introduction to machine learning with Python. As others have noted, a perfect mixture of theory and application.

– Josh D.

A book with a blend of qualities that is hard to come by: combines the needed mathematics to control the theory with the applied coding in Python. Also great to see it doesn't waste paper in giving a primer on Python as many other books do just to appeal to the greater audience. You can tell it's been written by knowledgeable writers and not just DIY geeks.

– Amazon Customer

Sebastian Raschka created an amazing machine learning tutorial which combines theory with practice. The book explains machine learning from a theoretical perspective and has tons of coded examples to show how you would actually use the machine learning technique. It can be read by a beginner or advanced programmer.

– William P. Ross, 7 Must Read Python Books

If you need help deciding whether this book is for you, check out some of the "longer" reviews linked below. (If you wrote a review, please let me know, and I'd be happy to add it to the list.)

- Python Machine Learning Review by Patrick Hill at the Chartered Institute for IT

- Book Review: Python Machine Learning by Sebastian Raschka by Alex Turner at WhatPixel

- ebook and paperback at Amazon.com, Amazon.co.uk, Amazon.de

- ebook and paperback from Packt (the publisher)

- at other book stores: Google Books, O'Reilly, Safari, Barnes & Noble, Apple iBooks, ...

- social platforms: Goodreads

- Italian translation via "Apogeo"

- German translation via "mitp Verlag"

- Japanese translation via "Impress Top Gear"

- Chinese translation

- Logistic Regression Implementation [dir] [ipynb] [nbviewer]

- A Basic Pipeline and Grid Search Setup [dir] [ipynb] [nbviewer]

- An Extended Nested Cross-Validation Example [dir] [ipynb] [nbviewer]

- A Simple Barebones Flask Webapp Template [view directory][download as zip-file]

- Reading handwritten digits from MNIST into NumPy arrays [GitHub ipynb] [nbviewer]

- Scikit-learn Model Persistence using JSON [GitHub ipynb] [nbviewer]

- Multinomial logistic regression / softmax regression [GitHub ipynb] [nbviewer]

"Related Content" (not in the book)

- Model evaluation, model selection, and algorithm selection in machine learning - Part I

- Model evaluation, model selection, and algorithm selection in machine learning - Part II

- Model evaluation, model selection, and algorithm selection in machine learning - Part III

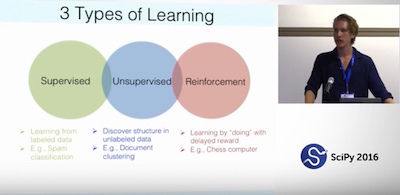

We had such a great time at SciPy 2016 in Austin! It was a real pleasure to meet and chat with so many readers of my book. Thanks so much for all the nice words and feedback! And in case you missed it, Andreas Mueller and I gave an Introduction to Machine Learning with Scikit-learn; if you are interested, the video recordings of Part I and Part II are now online!

I attempted the rather challenging task of introducing scikit-learn & machine learning in just 90 minutes at PyData Chicago 2016. The slides and tutorial material are available at "Learning scikit-learn -- An Introduction to Machine Learning in Python."

Note

I have set up a separate library, mlxtend, containing additional implementations of machine learning (and general "data science") algorithms. I also added implementations from this book (for example, the decision region plot, the artificial neural network, and sequential feature selection algorithms) with additional functionality.

Dear readers,

First of all, I want to thank all of you for the great support! I am really happy about all the great feedback you have sent me so far, and I am glad that the book has been so useful to a broad audience.

Over the last couple of months, I received hundreds of emails, and I tried to answer as many as possible in the time I have available. To make them useful to other readers as well, I collected many of my answers in the FAQ section (below).

In addition, some of you asked me about a platform for readers to discuss the contents of the book. I hope that this would provide an opportunity for you to discuss and share your knowledge with other readers:

(And I will try my best to answer questions myself if time allows! :))

The only thing to do with good advice is to pass it on. It is never of any use to oneself.

— Oscar Wilde

Once again, I have to say (big!) THANKS for all the nice feedback about the book. I've received many emails from readers who put the concepts and examples from this book out into the real world and make good use of them in their projects. In this section, I am starting to gather some of these great applications, and I'd be more than happy to add your project to this list -- just shoot me a quick mail!

- 40 scripts on Optical Character Recognition by Richard Lyman

- Code experiments by Jeremy Nation

- What I Learned Implementing a Classifier from Scratch in Python by Jean-Nicholas Hould

- What are machine learning and data science?

- Why do you and other people sometimes implement machine learning algorithms from scratch?

- What learning path/discipline in data science should I focus on?

- At what point should one start contributing to open source?

- How important do you think having a mentor is to the learning process?

- Where are the best online communities centered around data science/machine learning or python?

- How would you explain machine learning to a software engineer?

- What would your curriculum for a machine learning beginner look like?

- What is the Definition of Data Science?

- How do Data Scientists perform model selection? Is it different from Kaggle?

- How are Artificial Intelligence and Machine Learning related?

- What are some real-world examples of applications of machine learning in the field?

- What are the different fields of study in data mining?

- What are differences in research nature between the two fields: machine learning & data mining?

- How do I know if the problem is solvable through machine learning?

- What are the origins of machine learning?

- How was classification, as a learning machine, developed?

- Which machine learning algorithms can be considered as among the best?

- What are the broad categories of classifiers?

- What is the difference between a classifier and a model?

- What is the difference between a parametric learning algorithm and a nonparametric learning algorithm?

- What is the difference between a cost function and a loss function in machine learning?

- Fitting a model via closed-form equations vs. Gradient Descent vs Stochastic Gradient Descent vs Mini-Batch Learning -- what is the difference?

- How do you derive the Gradient Descent rule for Linear Regression and Adaline?

- How does the random forest model work? How is it different from bagging and boosting in ensemble models?

- What are the disadvantages of using the classic decision tree algorithm for a large dataset?

- Why are implementations of decision tree algorithms usually binary, and what are the advantages of the different impurity metrics?

- Why are we growing decision trees via entropy instead of the classification error?

- When can a random forest perform terribly?

- What is overfitting?

- How can I avoid overfitting?

- Is it always better to have the largest possible number of folds when performing cross validation?

- When training an SVM classifier, is it better to have a large or small number of support vectors?

- How do I evaluate a model?

- What is the best validation metric for multi-class classification?

- What factors should I consider when choosing a predictive model technique?

- What are the best toy datasets to help visualize and understand classifier behavior?

- How do I select SVM kernels?

- Interlude: Comparing and Computing Performance Metrics in Cross-Validation -- Imbalanced Class Problems and 3 Different Ways to Compute the F1 Score

- What is Softmax regression and how is it related to Logistic regression?

- Why is logistic regression considered a linear model?

- What is the probabilistic interpretation of regularized logistic regression?

- Does regularization in logistic regression always result in better fit and better generalization?

- What is the major difference between naive Bayes and logistic regression?

- What exactly is the "softmax and the multinomial logistic loss" in the context of machine learning?

- What is the relation between Logistic Regression and Neural Networks and when to use which?

- Logistic Regression: Why sigmoid function?

- Is there an analytical solution to Logistic Regression similar to the Normal Equation for Linear Regression?

- What is the difference between deep learning and usual machine learning?

- Can you give a visual explanation for the back propagation algorithm for neural networks?

- Why did it take so long for deep networks to be invented?

- What are some good books/papers for learning deep learning?

- Why are there so many deep learning libraries?

- Why do some people hate neural networks/deep learning?

- How can I know if Deep Learning works better for a specific problem than SVM or random forest?

- What is wrong when my neural network's error increases?

- How do I debug an artificial neural network algorithm?

- What is the difference between a Perceptron, Adaline, and neural network model?

- What is the basic idea behind the dropout technique?

- Why do we need to re-use training parameters to transform test data?

- What are the different dimensionality reduction methods in machine learning?

- What is the difference between LDA and PCA for dimensionality reduction?

- When should I apply data normalization/standardization?

- Does mean centering or feature scaling affect a Principal Component Analysis?

- How do you attack a machine learning problem with a large number of features?

- What are some common approaches for dealing with missing data?

- What is the difference between filter, wrapper, and embedded methods for feature selection?

- Should the data preparation/pre-processing step be considered a part of feature engineering? Why or why not?

- Is a bag of words feature representation for text classification considered as a sparse matrix?

- Why is the Naive Bayes Classifier naive?

- What is the decision boundary for Naive Bayes?

- Can I use Naive Bayes classifiers for mixed variable types?

- Is it possible to mix different variable types in Naive Bayes, for example, binary and continuous features?

- What is Euclidean distance in terms of machine learning?

- When should one use median, as opposed to the mean or average?

- Is R used extensively today in data science?

- What is the main difference between TensorFlow and scikit-learn?

- Can I use paragraphs and images from the book in presentations or my blog?

- How is this different from other machine learning books?

- Which version of Python was used in the code examples?

- Which technologies and libraries are being used?

- Which book version/format would you recommend?

- Why did you choose Python for machine learning?

- Why do you use so many leading and trailing underscores in the code examples?

- What is the purpose of the `return self` idioms in your code examples?

- Are there any prerequisites and recommended pre-readings?

- How can I apply SVM to categorical data?

I am happy to answer questions! Just write me an email or consider asking the question on the Google Groups Email List.

If you are interested in keeping in touch, I have quite a lively twitter stream (@rasbt) all about data science and machine learning. I also maintain a blog where I post all of the things I am particularly excited about.