Practical Web DataBase Design Book

This README file contains my hand-work from database concepts discussed in the book.

Also, I Implemented the applications described in the book as an illustrative example.

The Repository is a part of collaborative work production in

a 6-Month Mentorship program under the supervision of Eng.Ahmed Emad.

- An Intro to the content of the book

- Book Example Design and Impl in MySql & PostgresSql

- Ecommerce Application Design

- Some Common Queries, e.g., top-selling products And Daily or Monthly Reports

- MySQL DataBase Performance Tuning

- The Tools which I used In the project

Practical web database design is a great text book that had written by experienced professional engineers.

They made an insightful database work consists of Nine chapters and Appendix.

On Their first words, They gave us a good introduction about database definition,

a short history about how we start thinking for files alternative, what is the DBMS and its models,

and is there a database on the web? if so, how about using it in web application.

After that, The author discussed core database concepts, e.g., Data model, Relational Model and

how it consists of Tables, Rows, Columns, The Domains and data types.

They explained data model constrains and how to use primary keys and foreign keys in a wise DB Model Design.

At the End of Ch.2, He talked about Normalization, Indexs, Data Integrity.

The book explained five normalization forms with good examples, Physical data access methods, e.g.,

sequential access, direct access, indexed access, and data integrity rules.

After that we are seeing the author talking about Sql history, and it's categories.

What is Data Definition Language(DDL), DML, Querying using Select, WHERE, HAVING, and JOIN clauses.

The Book has a good E-commerce example for these concepts started from ch.3 till ch.7.

when The Author intended to talk about Database Design Fundamentals,

He gave us, At first, a solid academic definitions about data modeling, Model Entities,

its attributes, Relationships.

we found a well-described project to explain where to use OneToMany or ManyToMany, its recursive version,

and OneToOne Relationships.

The End of ch.4 is a brilliant explanation about DeNormalization for Performance purposes.

I found chapter five in this book is a legend for every software engineer and business owner.

These insightful papers gave us the guide from A~Z in System design process.

How to start the process with the owner and customer, what are the questions to ask them about,

so you can get your goal obvious.

After Gathering Information, we can step into Identifying System Entities, Attributes, Relationships.

Ending By preparing your conceptual data model.

In the end, the author addresses advanced database features

- Sub-Queries

- Stored Procedures and Triggers

- Database Performance Tuning

- Types of Indexes, and Indexes best practices.

- Managing Concurrency: Locking and Transactions.

Also, Book Authors address two main chapters about Database Security and Maintenance. These topics are more towards System Administration work, but it is good for us to know how to perform good security rules.

As I mentioned earlier, the author discussed in Chapter.5

What is the SOLID steps to follow, so you can result a well-designed project.

The author strongly recommends to analysis system requirements firstly with the business owner

and some possible customers by asking these questions(mentioned inch5)

for example we can ask about the kind of products will be sold? what information

about the products must be maintained in the Database? How should the database store customer orders?

and other many questions, so you can estimate the size of the application and how far should we care in

the design about performance or scalability.

After Gathering system information from the business

we can see that the system must contain products entity.

The Product entity must contain every available information for various different

products which are not in the same category.

Here we can find it's reasonable to have a lot of null in the product entity.

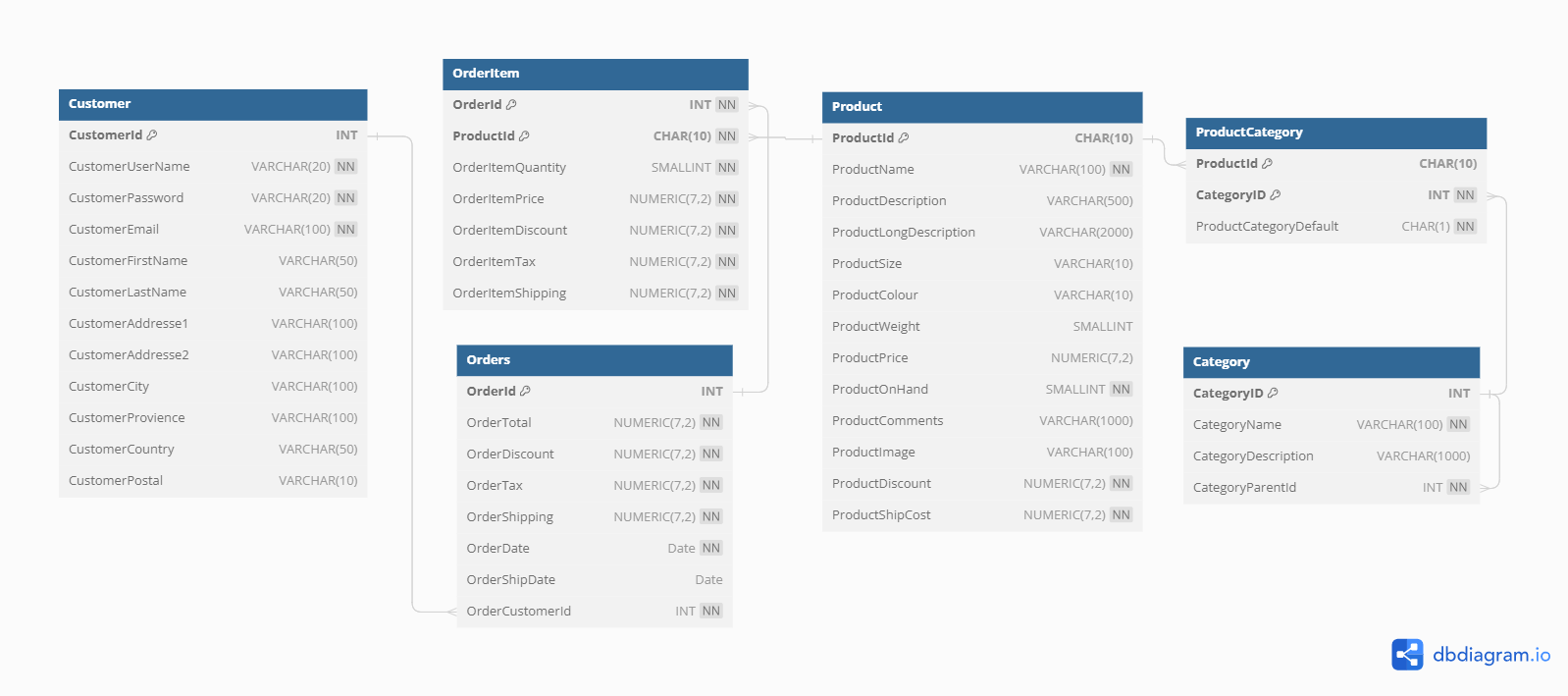

All other rest of system entities are described in the ERD Diagram below and those relationships.

I Implemented these model classes using Java , Spring Data Jpa. Take a look here.

I used postgreSQL to generate and test the Database, as you can access the sql code to create your Own schema from the system from here.

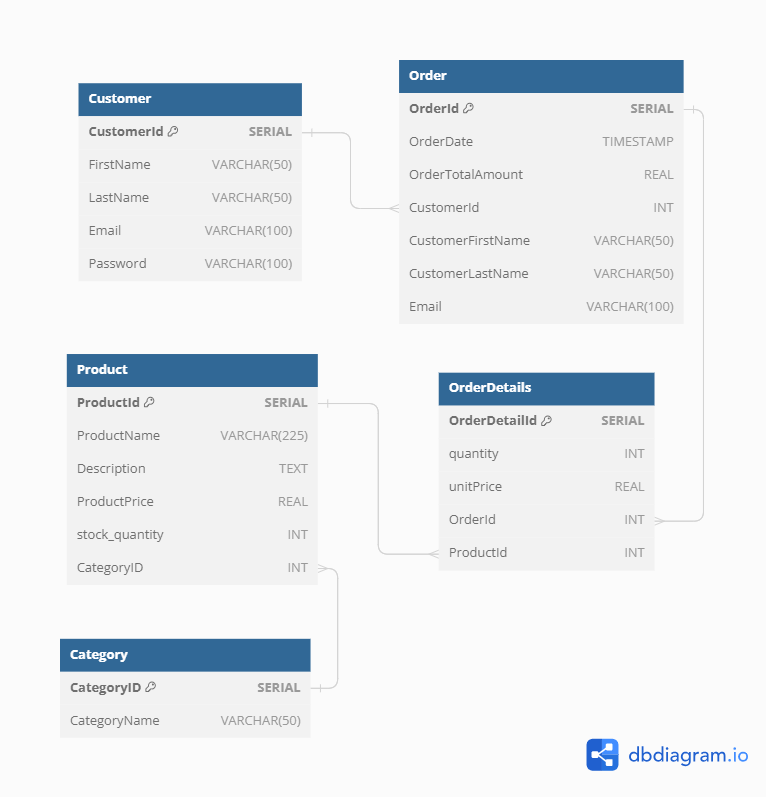

The app is e-commerce that has Order, Product, Category, and Customer Entities.

- Customer can purchase many orders so it will be a

OneToManyRelationship. As a One Customer linked to Many Orders. - Products can be in many orders, also orders can have many products.

So I linked them with

ManyToManyRelationship: Here we have an associative Entity (OrderDetails) - Category always had many products.

So it will be a one category linked to many products as

OneToManyRelation

You can Access a

SQLfile for creating the database schema: DB Schema.

Also, if you need to test the database design and queries listed below, you can use these Fake Data.

Here you can find ERD Normalized Form of the app.

when the customer creat an order,

I need his/her email and full-name to notify him/her with an email containing the order details and shipping information.

we need to Denormalize this design, so it would be easy for the Query to get customer info.

I added user-name and email to Order Entity

Here is Redundant info, but Better Performance.

Business Owner wants a daily report of the total revenue for a specific date.

we can get the total revenue by get the summation of the orderTotalAmount for A specific Order where the date is orderDate

SELECT SUM(ordertotalamount)

FROM "Order" WHERE

DATE(orderdate)='2023-09-09'; -- write specific date you want Business Owner wants a monthly report Of the top-selling products in a given month.

SELECT DATE_FORMAT(orderdate,'%y-%m') AS Months, productId

FROM orderdetails od JOIN 'Order' o ON o.orderId=od.OrderId

(SELECT ProductName FROM product p where p.productId = od.productId, SUM(quantity)) As totalQuantity

WHERE DATE_FORMAT(orderdate,'%y-%m') = '2023-10'

GROUP BY DATE_FORMAT(orderdate,'%y-%m'), productid

ORDER BY totalQuantity DESC

;Business Owner wants list Of customers who have placed orders totaling more than $500 in the past month.

SELECT c. customerid, c. firstname, c. lastname

FROM customer c JOIN "Order" o ON c.customerid = o.customerid

WHERE o.orderdate DATE_TRUNC( 'month' , CURRENT_DATE -INTERVAL '1 month')

AND o.order_date < DATE_TRUNC( 'month' , CURRENT_DATE)

GROUP BY c. customerid, c. firstname, c. lastname

HAVING SUM(o.totalamount > 500) -- put the amount you want

;searching is a fundamental feature for any website now, not only ecommerce, so we should care about how fast it is and accurate for searching results

select productid, productname,productname from product

where productname like 'camera' or productdescription like 'camera';- we need the customer to know what else we have he might be interested in, so when the customer is viewing a product, the system will suggest products from the similar category

SELECT p.productname FROM product AS p

INNER JOIN category AS c ON p.categoryid = c.categoryid

WHERE p.categoryid =

(SELECT categoryid FROM product WHERE productid = 'viewed_product_id')

AND p.productid <> 'viewed_product_id' -- select the viewed product id

ORDER BY

(SELECT COUNT(*) FROM orderdetails AS od WHERE od.productid = p.productid) DESC

LIMIT 5

;when the customer purchases a new order, create a history sale in the database for this customer. The sale history details may be order date, customer full name, products purchased in the order. The trigger should be triggered on the order insertion.

# Create the sale history table if it doesn't exist

CREATE TABLE IF NOT EXISTS sale_history (

sale_id INT AUTO_INCREMENT PRIMARY KEY,

order_date DATE,

customer_full_name VARCHAR(255),

products_purchased TEXT

);

# Create the trigger

DELIMITER $$

CREATE TRIGGER CreateSaleHistory

AFTER INSERT ON orders

FOR EACH ROW

BEGIN

INSERT INTO sale_history (order_date, customer_full_name, products_purchased)

VALUES (NEW.order_date, CONCAT(NEW.customer_first_name, ' ', NEW.customer_last_name),

NEW.products_ordered);

END;

$$

DELIMITER ;write a transaction to lock quantity field with product id = 211 from being updated.

And another transaction to lock the row.

-- Lock the field quantity with product id = 211

begin;

select quantity from product where productid = 211 ;

commit;

-- Lock the row with product_id = 211 for update

begin;

select * from product where productid = 211 for update;

commit;Performance tuning is a critical topic that every developer should care about.

A subsection in ch.8 of the book gives a quick look about database tuning,

so I found it is a fundamental subject, and I need to deep dive into it.

I listened to MySQL Database Tuning course on

YouTube.

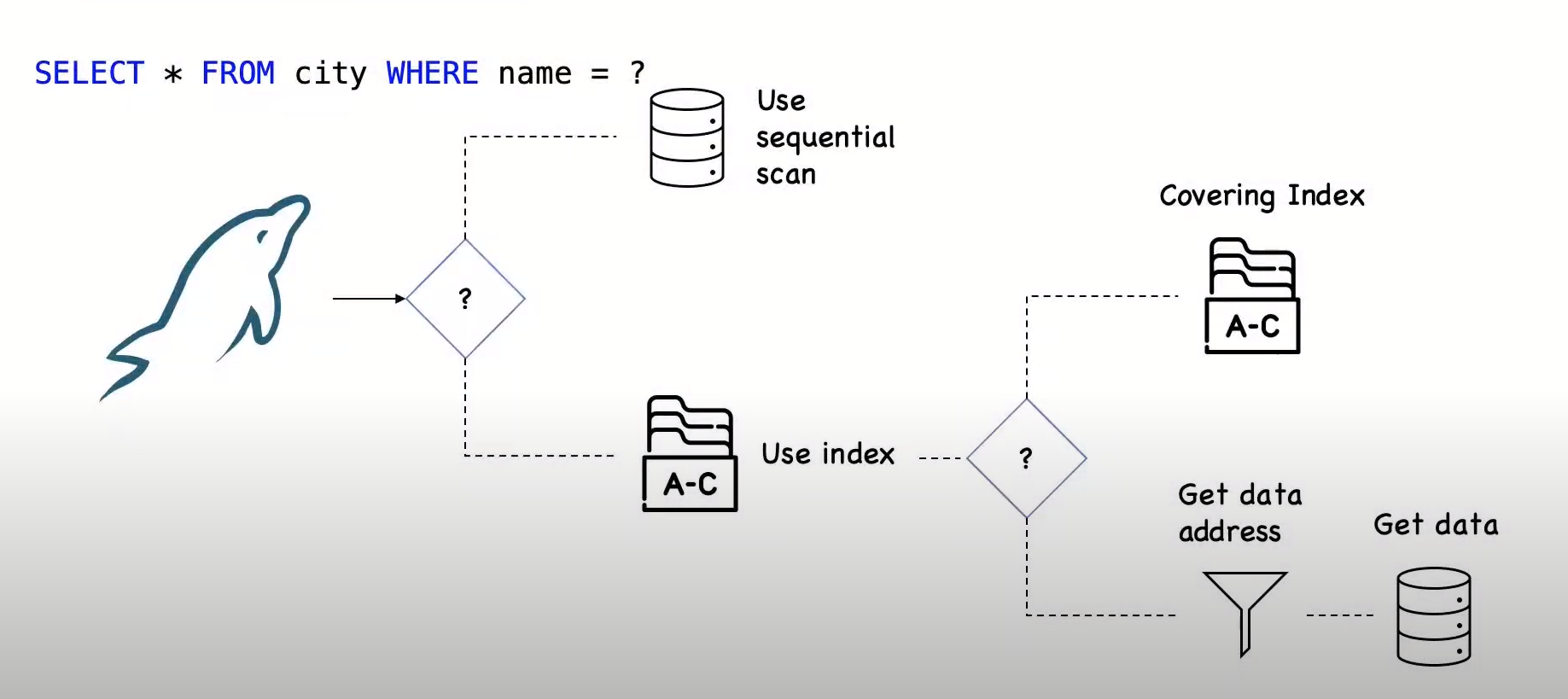

For MySQL database engine, we will talk about :

MySQL creates a query plan before each query

when I have a query to optimize,

I'll Analyize why is it slow? or why it impacts the system too much?

the main tool fo this is explain statement

which provides information about

the query plan chosen by the optimizer

and the optimizer has to make a few decisions before executing each query

If the client wants to fetch data from my database, we have 3 options for doing this:

- Fetch the data directly from a table. This is called full scan, it's normally works best for the small tables. As it fetches all rows from the table and checks against the condition, it's the worst time-response way to use!

- Go to the

indexand stops here, because all required columns are in the index. if it so, we have anindex covering, the index is used to filter outrowsthen access these rows from the table, usually this is the best time-response way to access a table, but this is less often a case exists. - Get the Records location from

index, and go to the table to get the actual data.

If we have a slow query, the first thing to do is running it with explain.

It shows the query plan, or you can say the list things expected to happen when the query is executed.

If I run the query with explain analyze, we will get both the estimation of what the planner expected

along with what happens when the query runs.

explain select * from orders;

-- run with explain analyze

explain analyze select * from orders;

-- show explain in json or tree format

explain format=json select * from product ;

-- show explain in tree format

explain format=tree select * from product ;Tips:

- pay attention to weather the estimates, and the actual cost differs from each other,

if there is a big difference, the optimizer is making poor decisions.

Running

analyzetable cause is a good thing to start. - when analyzing a query, we should care about

cacheit is Hot or Cold as it for sure affects the amount of time for the query.

Conclusion:

explain analyze is a profiling tool for your queries that will reveal where mySQL spends time on the query and why? display the difference between planning vg actual execution

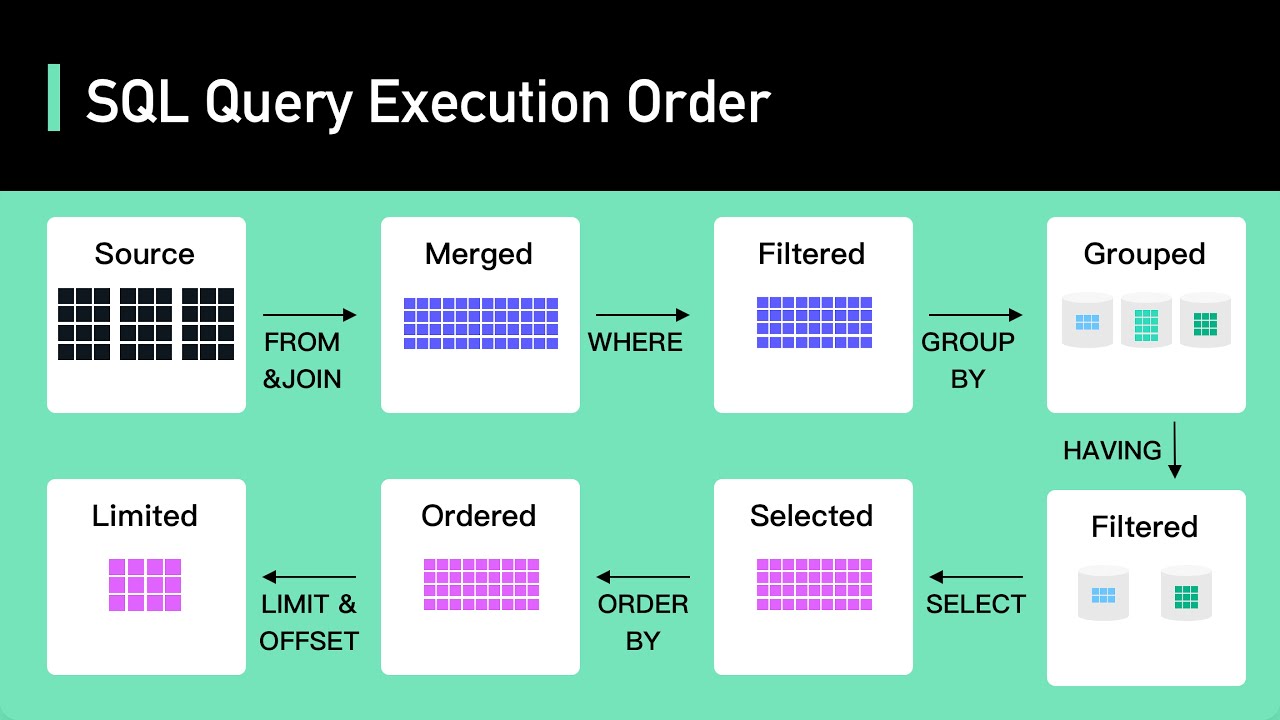

Before any performance steps, It is important to pay attention to the query execution order. As shown below, this is the order of executing a query statements:

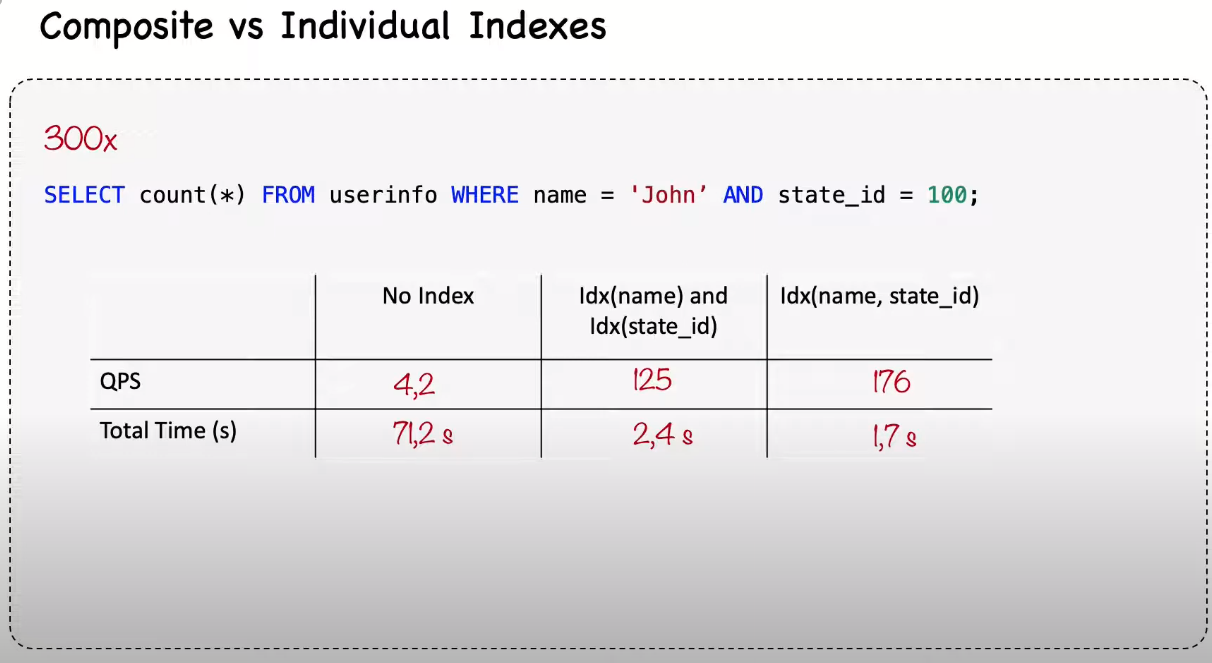

Multi-Column index is known also as a composite index.

when querying data from a table, we can do this

with two conditions, and every column is index individually, Or we can index the two columns in a composite index.

Also, we can query the data with no index!

I'm testing in userinfo table

-- No index so mysql does a full coverage here and took long time response

explain analyze select count(*) from userinfo where name = 'John100' and state_id = 100;

-- create individual index

-- use analyze table and you'll find it's much faster now

alter table userinfo add index name_idx(name);

alter table userinfo add index state_id_idx(state_id);

-- drop the two column indexes

drop index name_idx(name);

drop index state_id_idx(state_id);

-- create the composite index

alter table userinfo add index name_state_idx(name,state_id);The benchmark time result from comparing these indexes' options is :

Redundant indexes are not a good thing to keep in your schema. Use this simple query to know what Redundant you have

select * from sys.schema_redundant_indexes\G;you can access the python script that I used to generate one million fake data. , also another script for benchmark testing.

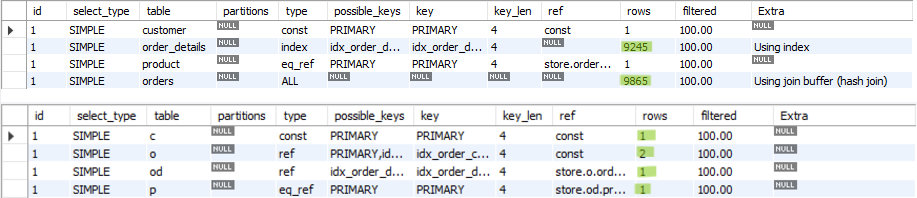

It is a common use case in all ecommerce websites, so I applied it to our example that I mentioned it the top of this page aganist a 10k records and I tried to optimize its performance

select c.customer_id,

c.first_name,

c.last_name,

o.order_id,

o.total_amount,

p.product_id,

p.name

from customer c join

(orders o join

(order_details od join

product p on od.product_id = p.product_id)

)

where c.customer_id=500; -- select the specific customer you want Here in that query,It worked and returned me the desired output.

And for curious purposes I executed the query with explain keyword, and here is the bad news as

I found it's not efficient.

For 10k rows, it covers almost all the row(9250 rows). I can see it is full coverage,

It is very strange because most of the columns are primary and foreign keys, so it's typically indexed.

After thinking about the issue, I tried to reduce the number of joins.

# I have nested JOINs, which can be optimized by breaking them into separate queries.

# This can reduce the complexity and improve readability.

select c.customer_id,

c.first_name,

c.last_name,

o.order_id,

o.total_amount,

p.product_id,

p.name

from customer c

join orders o on c.customer_id = o.customer_id

join order_details od on o.order_id = od.order_id

join product p on od.product_id = p.product_id

where c.customer_id=500;The result has Blown up my mind, it covers only (2) rows for maximum.

using explain we'll get this.so Take a look at the results down here:

- Retrieve the total number of products in each category.

- Find the top customers by total spending.

- List products that have low stock quantities of less than 10 quantities.

- Calculate the revenue generated from each product category.

| The Query | Time | Optimization Technique | Rewrite Query | Time |

|---|---|---|---|---|

select cat.category_name, count(p.product_id) as totalproducts from product p join category cat on cat.category_id=p.category_id GROUP BY cat.category_id ORDER BY totalproducts DESC; |

57 ms | create an index on product for the category id and change the grouping attribute to category_name |

create index idx_category_id on product(category_id); |

41 ms |

select c.first_name, c.last_name, o.order_id,o.total_amount from customer c join orders o on c.customer_id = o.customer_id where o.total_amount = (SELECT MAX(total_amount) from orders); |

1.9 ms | using ORDER BY and LIMIT instead of using MAX and index total_amount column |

SELECT c.first_name, c.last_name, o.order_id, SUM(o.total_amount) as totalAmountFinal FROM customer c JOIN orders o ON c.customer_id = o.customer_id ORDER BY totalAmountFinal DESC LIMIT 1; |

.7 ms |

select p.product_id,p.name,p.stock_quantity from product p where stock_quantity<10; |

1.9 ms | create index of stock_quantity |

create index idx_quantity on product (stock_quantity); |

1.7 ms |

SELECT p.category_id, cat.category_name, SUM(p.price) as total_price FROM product p JOIN category cat ON p.category_id = cat.category_id WHERE cat.category_name = 'category1' GROUP BY p.category_id, cat.category_name; |

1.6 ms | Avoid Unnecessary Columns in GROUP BY |

SELECT p.category_id, cat.category_name, SUM(p.price) as total_price FROM product p JOIN category cat ON p.category_id = cat.category_id WHERE cat.category_name = 'category1' GROUP BY p.category_id; |

1.5 ms |

If you want to access a good recap overview of what I learned in my database journey with the book and my mentor advices, I posted here a one flow chart page containing all of my learning