Step-by-step guide to running a large language model on a Raspberry Pi 5 (it might work on a Pi 4 too, but I haven't tested that yet)

- Prerequisite

- Setup Raspberry Pi

- Option 1: Run LLMs using Ollama

- Option 2: Run LLMs using Llama.cpp

- Extra Resources

Prerequisite

- Raspberry Pi 5, 8GB RAM

- 32GB SD Card
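Once the Pi is set up and you are logged in over SSH (covered in the setup steps), a quick way to confirm that the board matches these prerequisites is to look at the CPU architecture and the total RAM. This is a minimal sketch, assuming a Linux system that exposes /proc/meminfo. The helper names `is_64bit_arm` and `kb_to_gib` are made up for this example.

```shell
#!/bin/sh
# Sketch: report whether this machine is 64-bit ARM and how much RAM it has.
# is_64bit_arm and kb_to_gib are hypothetical helpers, not part of any tool.

# Succeeds when the given machine name (as printed by `uname -m`) is 64-bit ARM.
is_64bit_arm() {
    case "$1" in
        aarch64|arm64) return 0 ;;
        *) return 1 ;;
    esac
}

# Converts a size in kB (the unit used by /proc/meminfo) to whole GiB, rounded down.
kb_to_gib() {
    echo $(( $1 / 1024 / 1024 ))
}

arch=$(uname -m)
# MemTotal is the total usable RAM; fall back to 0 if the file is not available.
mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo 2>/dev/null)
mem_kb=${mem_kb:-0}

if is_64bit_arm "$arch"; then
    echo "Architecture: $arch (64-bit ARM)"
else
    echo "Architecture: $arch (not 64-bit ARM; a 64-bit OS is recommended)"
fi
echo "Total RAM: about $(kb_to_gib "$mem_kb") GiB"
```

Because the value is rounded down and part of the memory is reserved by the system, an 8GB board will normally report a little less than 8 GiB here.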

Setup Raspberry Pi

You can also follow along with this YouTube video instead.

- Connect the SD card to your laptop

- Download Raspberry Pi Imager, the tool that writes Raspberry Pi OS to the SD card: https://www.raspberrypi.com/software/

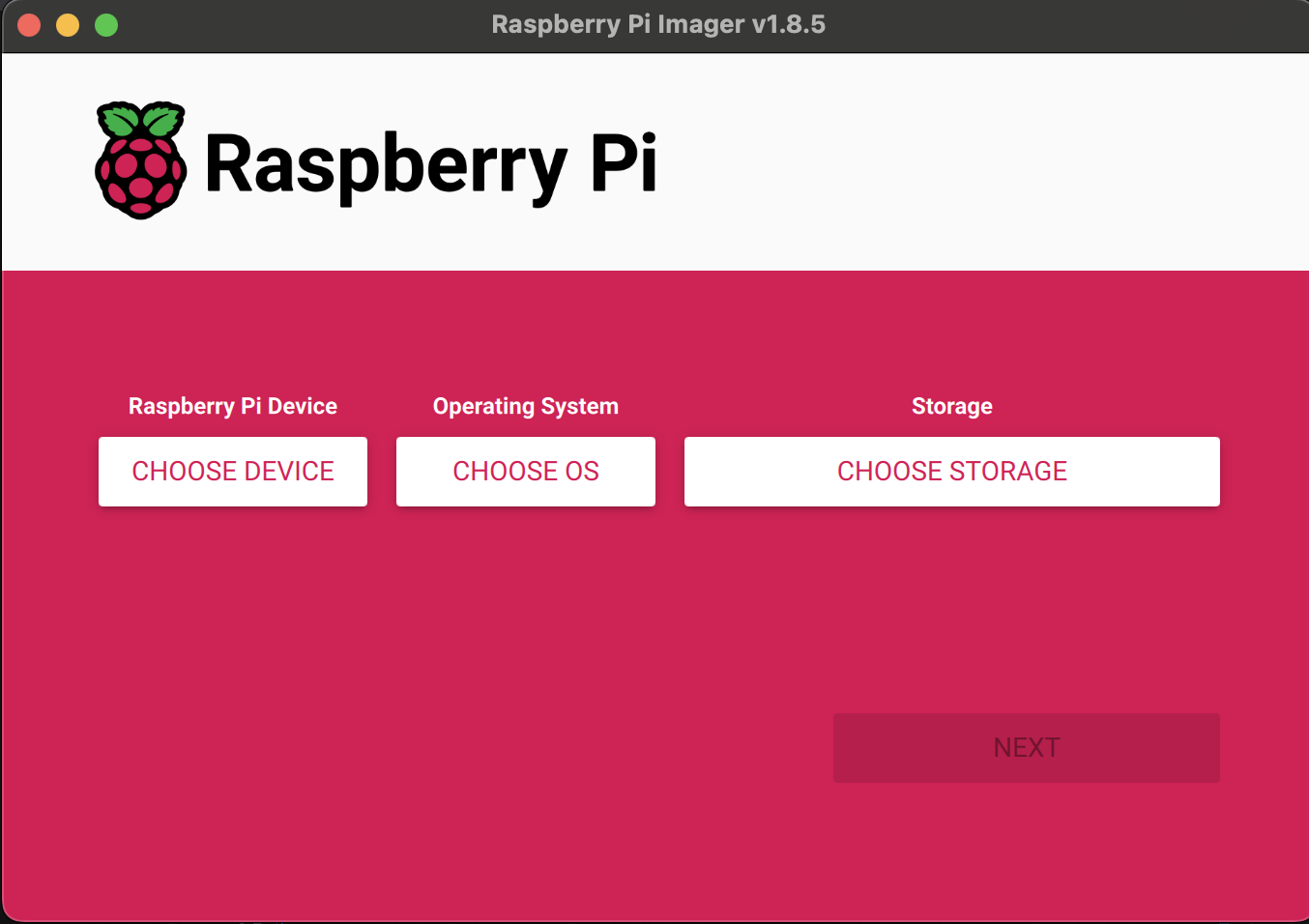

- Run it, and you should see:

- "Choose Device" - choose Raspberry Pi 5

- "Choose OS" - choose the latest Raspberry Pi OS (64-bit is recommended)

- "Choose Storage" - choose the inserted SD card

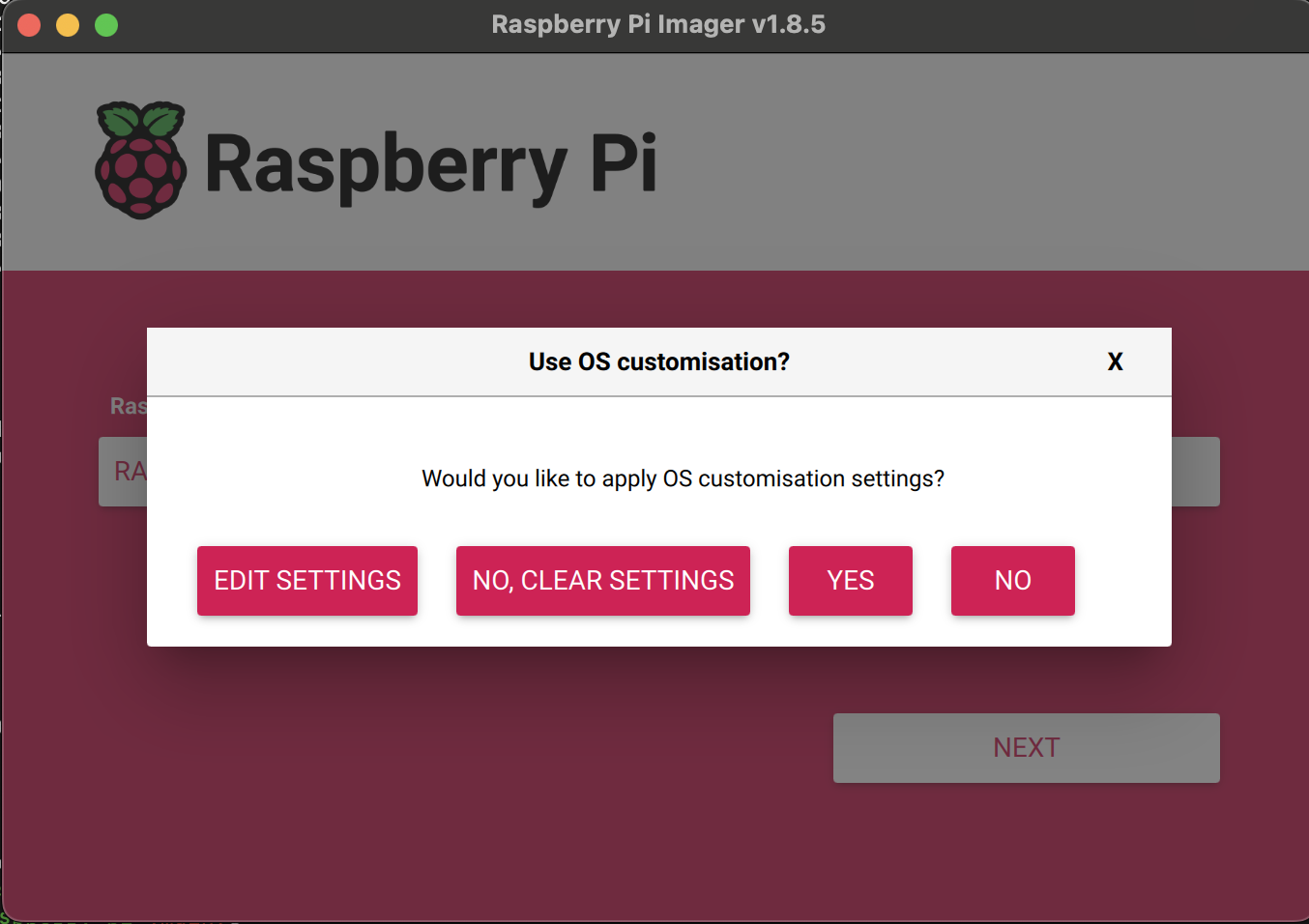

- Now click "Next". When it asks whether you want to edit the settings, click "Edit settings"

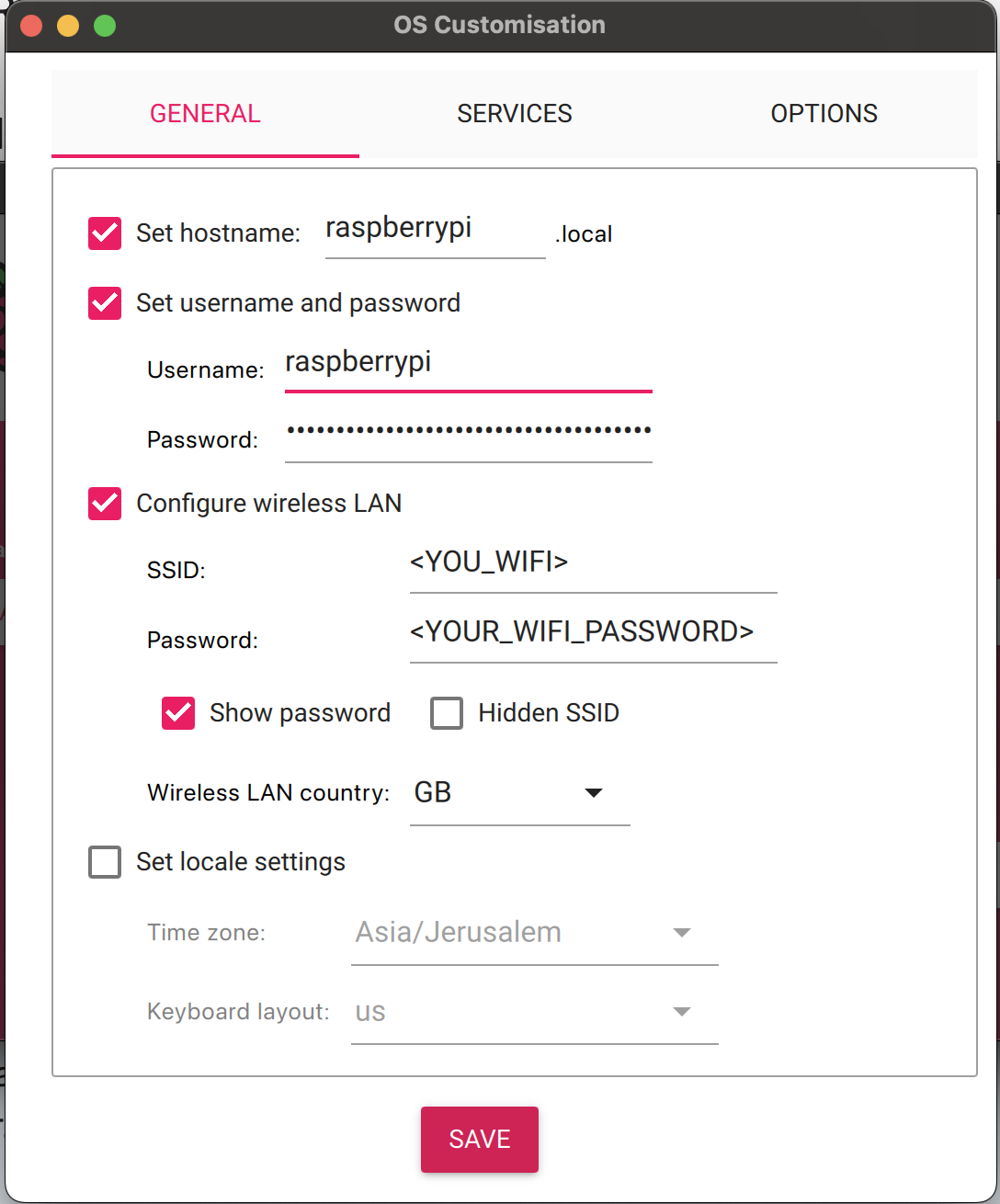

- Configure

- Enable hostname and set it to raspberrypi.local

- Set a username and password you will remember; we will use them shortly

- Enable "Configure Wireless LAN" and add your Wi-Fi name and password

- Click save and continue. It will take a few minutes to write everything to the SD card

- Insert the SD card into your Raspberry Pi and connect the Pi to power

- SSH into the Raspberry Pi:

ssh <YOUR_USERNAME>@raspberrypi.local

Option 1: Run LLMs using Ollama

- Install Ollama:

curl -fsSL https://ollama.com/install.sh | sh

- Download and run the Mistral model:

ollama run mistral

That is it!
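Once `ollama run mistral` works interactively, the same model can also be queried from a script through Ollama's local HTTP API. The sketch below assumes the Ollama server is running on its default port 11434 and that the model has already been downloaded. The helper names `build_payload` and `ask_ollama` are made up for this example, and the payload helper does no JSON escaping, so it only suits simple prompts.

```shell
#!/bin/sh
# Sketch: send a single prompt to a locally running Ollama server.
# build_payload and ask_ollama are hypothetical helpers for illustration.

# Builds the JSON request body for the given model and prompt.
# Assumption: the prompt contains no double quotes, backslashes, or newlines,
# because nothing here escapes them.
build_payload() {
    printf '{"model":"%s","prompt":"%s","stream":false}' "$1" "$2"
}

# Posts the request to the /api/generate endpoint and prints the raw JSON reply.
# With "stream" set to false the server answers with one JSON object.
ask_ollama() {
    curl -s http://localhost:11434/api/generate -d "$(build_payload "$1" "$2")"
}
```

After loading these functions, `ask_ollama mistral "Why is the sky blue?"` prints a JSON object, and the generated text is in its `response` field.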

Option 2: Run LLMs using Llama.cpp

- Install the build dependencies:

sudo apt update && sudo apt install git g++ wget build-essential

- Download the llama.cpp repo:

git clone https://github.com/ggerganov/llama.cpp

cd llama.cpp

- Compile:

make -j

- Download the Mistral model:

cd models

wget https://huggingface.co/TheBloke/Mistral-7B-v0.1-GGUF/resolve/main/mistral-7b-v0.1.Q4_K_S.gguf

- Go back to the repo root folder and run:

cd ..

./main -m models/mistral-7b-v0.1.Q4_K_S.gguf -p "Whatsup?" -n 400 -e

That is it!
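The commands above match the llama.cpp version this guide was written against. Newer checkouts build with CMake instead of `make` and name the command-line binary `llama-cli` instead of `main`, so if `make -j` or `./main` fails, something like the sketch below may help. It assumes `cmake` is installed (for example via `sudo apt install cmake`) and that you are in the repo root. The helper names `build_with_cmake` and `find_llama_binary` are made up for this example.

```shell
#!/bin/sh
# Sketch: build llama.cpp with CMake and locate whichever CLI binary exists.
# build_with_cmake and find_llama_binary are hypothetical helpers.

# Configures and builds the project into ./build (run from the repo root).
# -j 4 uses four parallel jobs, one per core on a Raspberry Pi 5.
build_with_cmake() {
    cmake -B build &&
    cmake --build build --config Release -j 4
}

# Prints the first executable CLI binary found under the given repo root.
# Checks the CMake output path first, then the older Makefile-era names.
find_llama_binary() {
    for candidate in "$1/build/bin/llama-cli" "$1/llama-cli" "$1/main"; do
        if [ -x "$candidate" ]; then
            echo "$candidate"
            return 0
        fi
    done
    return 1
}
```

After running `build_with_cmake`, the model can be started the same way as before, for example `"$(find_llama_binary .)" -m models/mistral-7b-v0.1.Q4_K_S.gguf -p "Whatsup?" -n 400 -e`.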