⭐ If ProPainter is helpful to your projects, please help star this repo. Thanks! 🤗

📖 For more visual results, check out our project page

- 2023.09.21: Add features for memory-efficient inference. Check our GPU memory requirements. 🚀

- 2023.09.07: Our code and model are publicly available. 🐳

- 2023.09.01: This repo is created.

- Make an interactive Gradio demo.

- Make a Colab demo.

- Update features for memory-efficient inference.


- Clone Repo

git clone https://github.com/sczhou/ProPainter.git

- Create Conda Environment and Install Dependencies

conda env create -f environment.yaml

conda activate propainter

- Python >= 3.7

- PyTorch >= 1.6.0

- CUDA >= 9.2

- mmcv-full (refer to the command table to install v1.4.8)

Download our pretrained models from Releases V0.1.0 to the weights folder. (All pretrained models can also be automatically downloaded during the first inference.)

The directory structure will be arranged as:

weights

|- ProPainter.pth

|- recurrent_flow_completion.pth

|- raft-things.pth

|- i3d_rgb_imagenet.pt (for evaluating VFID metric)

|- README.md
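As a quick sanity check before the first run, the listing above can be turned into a small script. This is only a sketch: the file names come from the listing, but the helper name `missing_weights` is hypothetical, and treating the three non-VFID files as the ones needed for inference is an assumption rather than something the repository states.

```python
from pathlib import Path

# File names taken from the directory listing above.
# Assumption: these three are the files used at inference time.
REQUIRED_WEIGHTS = [
    "ProPainter.pth",
    "recurrent_flow_completion.pth",
    "raft-things.pth",
]
# Listed above as needed only for evaluating the VFID metric.
OPTIONAL_WEIGHTS = ["i3d_rgb_imagenet.pt"]


def missing_weights(weights_dir="weights", include_optional=False):
    """Return the expected weight files that are absent from weights_dir."""
    names = REQUIRED_WEIGHTS + (OPTIONAL_WEIGHTS if include_optional else [])
    root = Path(weights_dir)
    return [name for name in names if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_weights()
    if missing:
        print("Not found in weights/:", ", ".join(missing))
    else:
        print("All expected inference weights are present.")
```

Missing files are not necessarily a problem, since the pretrained models can also be downloaded automatically during the first inference.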

We provide some examples in the inputs folder.

Run the following commands to try it out:

# The first example (object removal)

python inference_propainter.py --video inputs/object_removal/bmx-trees --mask inputs/object_removal/bmx-trees_mask

# The second example (watermark removal)

python inference_propainter.py --video inputs/watermark_removal/running_car.mp4 --mask inputs/watermark_removal/mask.png

The results will be saved in the results folder.

To test your own videos, please prepare the input mp4 video (or split frames) and frame-wise mask(s).
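When the input is given as split frames, it can help to confirm that the masks line up with the frames before starting a long run. The sketch below assumes that "frame-wise" means one mask image per frame, and that a single mask file applies to every frame, as the watermark example suggests. Both are assumptions, and the helper names are hypothetical.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def count_images(folder):
    """Count image files directly inside folder."""
    return sum(1 for p in Path(folder).iterdir() if p.suffix.lower() in IMAGE_SUFFIXES)


def masks_match_frames(frames_dir, mask_path):
    """True if mask_path is a single file or a folder with one mask per frame."""
    mask_path = Path(mask_path)
    if mask_path.is_file():
        # Assumption: one mask file is reused for every frame.
        return True
    return count_images(mask_path) == count_images(frames_dir)
```

For the first example above, this would be called as `masks_match_frames("inputs/object_removal/bmx-trees", "inputs/object_removal/bmx-trees_mask")`.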

If you want to specify the video resolution for processing or avoid running out of memory, you can set the video size of --width and --height:

# process a 576x320 video; set --fp16 to use fp16 (half precision) during inference.

python inference_propainter.py --video inputs/watermark_removal/running_car.mp4 --mask inputs/watermark_removal/mask.png --height 320 --width 576 --fp16

Video inpainting typically requires a significant amount of GPU memory. We provide several features for memory-efficient inference that help avoid out-of-memory (OOM) errors. You can use the following options to reduce memory usage further:

- Reduce the number of local neighbors by decreasing --neighbor_length (default 10).

- Reduce the number of global references by increasing --ref_stride (default 10).

- Set --resize_ratio (default 1.0) to resize the processing video.

- Set a smaller video size by specifying --width and --height.

- Set --fp16 to use fp16 (half precision) during inference.

- Reduce the number of frames per sub-video with --subvideo_length (default 80), which effectively decouples GPU memory cost from video length.
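The options above can be combined in a single call. As a rough sketch, the helper below assembles the command as an argument list so it can be passed to `subprocess.run`. The flag names come from the list above, while the function name `build_inference_command` and its keyword arguments are hypothetical.

```python
import shlex


def build_inference_command(video, mask, neighbor_length=None, ref_stride=None,
                            resize_ratio=None, width=None, height=None,
                            subvideo_length=None, fp16=False):
    """Assemble an inference_propainter.py command as an argument list."""
    cmd = ["python", "inference_propainter.py", "--video", video, "--mask", mask]
    # Options left as None are omitted so the script's own defaults apply.
    valued_options = {
        "--neighbor_length": neighbor_length,
        "--ref_stride": ref_stride,
        "--resize_ratio": resize_ratio,
        "--width": width,
        "--height": height,
        "--subvideo_length": subvideo_length,
    }
    for flag, value in valued_options.items():
        if value is not None:
            cmd += [flag, str(value)]
    if fp16:
        cmd.append("--fp16")
    return cmd


if __name__ == "__main__":
    cmd = build_inference_command(
        "inputs/watermark_removal/running_car.mp4",
        "inputs/watermark_removal/mask.png",
        subvideo_length=50,
        fp16=True,
    )
    print(shlex.join(cmd))  # inspect the command; pass cmd to subprocess.run to execute
```

Building a list rather than a single string avoids quoting problems when paths contain spaces.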

The table below shows the estimated GPU memory requirements for different sub-video lengths with fp32 / fp16 precision:

| Resolution | 50 frames | 80 frames |

|---|---|---|

| 1280 x 720 | 28G / 19G | OOM / 25G |

| 720 x 480 | 11G / 7G | 13G / 8G |

| 640 x 480 | 10G / 6G | 12G / 7G |

| 320 x 240 | 3G / 2G | 4G / 3G |
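One way to use these estimates is to pick the longest tabulated sub-video length that fits a given memory budget. The sketch below copies the numbers from the table and treats the OOM entry as "does not fit". The function name `pick_subvideo_length` is hypothetical, and the figures are estimates rather than guarantees, so leave some headroom in practice.

```python
# Estimated GPU memory in GB, copied from the table above.
# Keys: (width, height). Values: {sub-video length: (fp32, fp16)}; None marks OOM.
MEMORY_GB = {
    (1280, 720): {50: (28, 19), 80: (None, 25)},
    (720, 480): {50: (11, 7), 80: (13, 8)},
    (640, 480): {50: (10, 6), 80: (12, 7)},
    (320, 240): {50: (3, 2), 80: (4, 3)},
}


def pick_subvideo_length(width, height, available_gb, fp16=False):
    """Return the longest tabulated sub-video length that fits, or None."""
    estimates = MEMORY_GB.get((width, height))
    if estimates is None:
        raise KeyError(f"no estimate tabulated for {width}x{height}")
    fitting = []
    for length, (fp32_gb, fp16_gb) in estimates.items():
        needed = fp16_gb if fp16 else fp32_gb
        if needed is not None and needed <= available_gb:
            fitting.append(length)
    return max(fitting) if fitting else None
```

For example, with a 24 GB budget at 1280 x 720, neither tabulated length fits in fp32, while 50 frames fits with fp16.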

| Dataset | YouTube-VOS | DAVIS |

|---|---|---|

| Description | For training (3,471) and evaluation (508) | For evaluation (50 in 90) |

| Images | [Official Link] (Download train and test all frames) | [Official Link] (2017, 480p, TrainVal) |

| Masks | [Google Drive] [Baidu Disk] (For reproducing paper results; provided in ProPainter paper) | |

The training and test split files are provided in datasets/<dataset_name>. For each dataset, place JPEGImages in datasets/<dataset_name>. Resize all video frames to 432x240 for training. Unzip the downloaded mask files to datasets.

The datasets directory structure will be arranged as: (Note: please check it carefully)

datasets
   |- davis
      |- JPEGImages_432_240
         |- <video_name>
            |- 00000.jpg
            |- 00001.jpg
      |- test_masks
         |- <video_name>
            |- 00000.png
            |- 00001.png
      |- train.json
      |- test.json
   |- youtube-vos
      |- JPEGImages_432_240
         |- <video_name>
            |- 00000.jpg
            |- 00001.jpg
      |- test_masks
         |- <video_name>
            |- 00000.png
            |- 00001.png
      |- train.json
      |- test.json
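Since the layout needs to be checked carefully, a short script can do a first pass. This is a minimal sketch that only checks for the folder and file names shown in the tree above. The function name `check_dataset_layout` is hypothetical, and it does not verify frame sizes or the contents of the JSON split files.

```python
from pathlib import Path


def check_dataset_layout(root, dataset_name):
    """Return a list of problems found under root/dataset_name (empty if none)."""
    base = Path(root) / dataset_name
    problems = []
    # Split files expected directly under the dataset folder.
    for split_file in ("train.json", "test.json"):
        if not (base / split_file).is_file():
            problems.append(f"missing file: {base / split_file}")
    # Each per-video subfolder should contain at least one image of the right type.
    for folder, suffix in (("JPEGImages_432_240", ".jpg"), ("test_masks", ".png")):
        folder_path = base / folder
        if not folder_path.is_dir():
            problems.append(f"missing folder: {folder_path}")
            continue
        for video_dir in sorted(p for p in folder_path.iterdir() if p.is_dir()):
            if not any(f.suffix == suffix for f in video_dir.iterdir()):
                problems.append(f"no {suffix} files in: {video_dir}")
    return problems


if __name__ == "__main__":
    for name in ("davis", "youtube-vos"):
        for problem in check_dataset_layout("datasets", name):
            print(problem)
```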

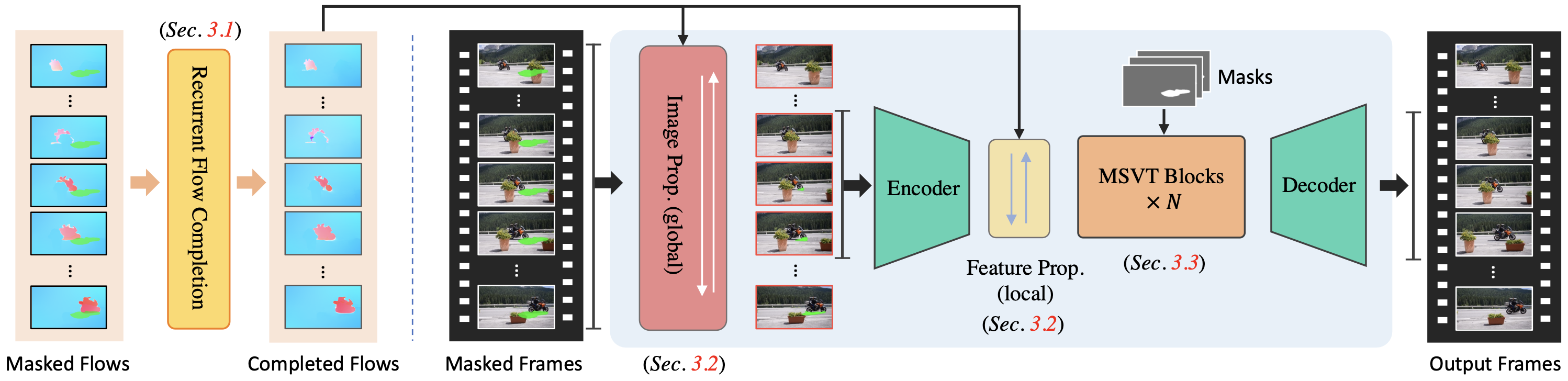

Our training configurations are provided in train_flowcomp.json (for the Recurrent Flow Completion Network) and train_propainter.json (for ProPainter).

Run one of the following commands for training:

# For training Recurrent Flow Completion Network

python train.py -c configs/train_flowcomp.json

# For training ProPainter

python train.py -c configs/train_propainter.json

You can run the same command to resume your training.

To speed up the training process, you can precompute optical flow for the training dataset using the following command:

# Compute optical flow for training dataset

python scripts/compute_flow.py --root_path <dataset_root> --save_path <save_flow_root> --height 240 --width 432

Run one of the following commands for evaluation:

# For evaluating flow completion model

python scripts/evaluate_flow_completion.py --dataset <dataset_name> --video_root <video_root> --mask_root <mask_root> --save_results

# For evaluating ProPainter model

python scripts/evaluate_propainter.py --dataset <dataset_name> --video_root <video_root> --mask_root <mask_root> --save_results

The scores and results will also be saved in the results_eval folder.

Please add --save_results if you want to further evaluate the temporal warping error.

If you find our repo useful for your research, please consider citing our paper:

@inproceedings{zhou2023propainter,

title={{ProPainter}: Improving Propagation and Transformer for Video Inpainting},

author={Zhou, Shangchen and Li, Chongyi and Chan, Kelvin C.K and Loy, Chen Change},

booktitle={Proceedings of IEEE International Conference on Computer Vision (ICCV)},

year={2023}

}

This project is licensed under NTU S-Lab License 1.0. Redistribution and use should follow this license.

If you have any questions, please feel free to reach out to me at shangchenzhou@gmail.com.

This code is based on E2FGVI and STTN. Some code is borrowed from BasicVSR++. Thanks for their awesome work.