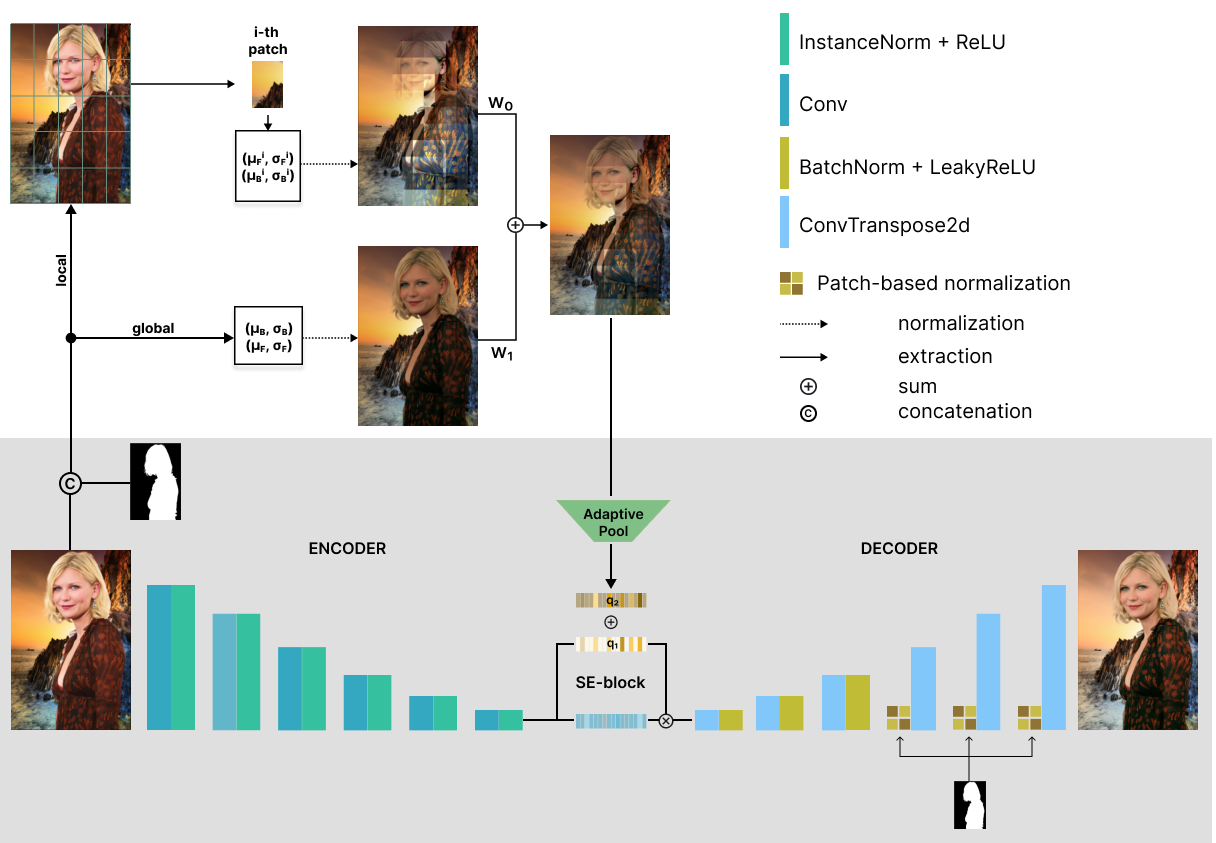

We present a patch-based harmonization network consisting of novel Patch-based Normalization (PN) blocks and a feature extractor based on statistical color transfer. We evaluate our approach on available image harmonization datasets. Extensive experiments demonstrate the network's high generalization capability across different domains. Additionally, we collected a new dataset focused on portrait harmonization. Our network achieves state-of-the-art results on iHarmony4 and obtains the best metrics on the synthetic portrait dataset.
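For readers unfamiliar with the term, the general idea behind statistical color transfer can be sketched as matching per-channel mean and standard deviation between two sets of pixels. The snippet below is only an illustration of that generic idea under simplifying assumptions (plain RGB tuples, global statistics); it is not the PHNet feature extractor, and the function names `transfer_channel` and `transfer_colors` are hypothetical.

```python
from statistics import fmean, pstdev


def transfer_channel(source, reference, eps=1e-8):
    """Shift and scale `source` so its mean/std match those of `reference`."""
    src_mean, src_std = fmean(source), pstdev(source)
    ref_mean, ref_std = fmean(reference), pstdev(reference)
    # eps guards against division by zero for a constant source channel.
    scale = ref_std / (src_std + eps)
    return [(value - src_mean) * scale + ref_mean for value in source]


def transfer_colors(foreground, background):
    """Apply the per-channel transfer to lists of (r, g, b) pixels."""
    fg_channels = list(zip(*foreground))  # three tuples: all R, all G, all B
    bg_channels = list(zip(*background))
    moved = [transfer_channel(f, b) for f, b in zip(fg_channels, bg_channels)]
    return [tuple(pixel) for pixel in zip(*moved)]


if __name__ == "__main__":
    fg = [(0.2, 0.4, 0.6), (0.4, 0.6, 0.8)]
    bg = [(0.5, 0.5, 0.5), (0.7, 0.9, 0.3)]
    print(transfer_colors(fg, bg))
```

After the transfer, each foreground channel has (up to `eps`) the same mean and standard deviation as the corresponding background channel, while the relative ordering of foreground values is preserved.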

For more information see our paper PHNet: Patch-based Normalization for Image Harmonization.

Clone the repository and install the required Python packages:

    git clone https://github.com/befozg/PHNet.git
    cd PHNet
    # Create virtual env by conda from env.yml file
    conda env create -f env.yml
    conda activate phnet

We present Flickr-Faces-HQ-Harmonization (FFHQH), a new dataset for portrait harmonization based on the FFHQ. It contains real images, foreground masks, and synthesized composites.

- Download link: FFHQH

We also provide pre-trained PHNet models for demo usage.

| State file | Size | Where to place | Download |
|---|---|---|---|
| Trained on iHarmony4 | 512x512 | checkpoints/ | iharmony512.pth |
| Trained on FFHQH | 1024x1024 | checkpoints/ | ffhqh1024.pth |
| Trained on FFHQH | 512x512 | checkpoints/ | ffhqh512.pth |
| Trained on FFHQH | 256x256 | checkpoints/ | ffhqh256.pth |
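
Before running the demo, it can help to confirm that the downloaded state files ended up where the table says they should. The following is a minimal sketch using only the file names and the `checkpoints/` directory listed above; the helper `missing_checkpoints` is hypothetical and not part of the repository.

```python
from pathlib import Path

# File names and target directory are taken from the table above.
EXPECTED_CHECKPOINTS = (
    "iharmony512.pth",
    "ffhqh1024.pth",
    "ffhqh512.pth",
    "ffhqh256.pth",
)


def missing_checkpoints(root="checkpoints"):
    """Return the expected state files that are not present under `root`."""
    root = Path(root)
    return [name for name in EXPECTED_CHECKPOINTS if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing state files:", ", ".join(missing))
    else:
        print("All listed state files are in place.")
```

Only the checkpoints you intend to use need to be present, so a non-empty result is not necessarily an error.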

You can use the downloaded pre-trained models, or select a baseline and parameters and train your own. To train the model, execute the following command:

    python train.py <train-config-path>

where `train-config-path` refers to the appropriate configuration file.

You should pass your own config path for customized experiments. Refer to our config/train.yaml for training details.

To test the model, execute the following command:

    python test.py <test-config-path>

where `test-config-path` refers to the appropriate configuration file. Refer to our config/test_FFHQH.yaml for inference details using the FFHQH checkpoints and to config/test_iHarmony4.yaml for the model trained on iHarmony4.
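
If you want to launch training or testing from another Python script (for example, to loop over several configs), the two commands above can be wrapped as in the sketch below. It assumes it is run from the repository root; the helpers `build_command` and `run` are hypothetical, and only the `python train.py <config>` and `python test.py <config>` invocations come from this README.

```python
import subprocess
import sys

# The two entry points documented in this README.
ALLOWED_SCRIPTS = ("train.py", "test.py")


def build_command(script, config_path):
    """Build the argument list for `python <script> <config_path>`."""
    if script not in ALLOWED_SCRIPTS:
        raise ValueError(f"unexpected script: {script!r}")
    # sys.executable keeps the call inside the currently active environment.
    return [sys.executable, script, str(config_path)]


def run(script, config_path):
    """Run the script and raise CalledProcessError on a non-zero exit code."""
    return subprocess.run(build_command(script, config_path), check=True)
```

For instance, `run("test.py", "config/test_FFHQH.yaml")` would start inference with the FFHQH config mentioned above.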

You can cite the paper using the following BibTeX entry:

    @misc{efremyan2024phnet,
          title={PHNet: Patch-based Normalization for Portrait Harmonization},
          author={Karen Efremyan and Elizaveta Petrova and Evgeny Kaskov and Alexander Kapitanov},
          year={2024},
          eprint={2402.17561},
          archivePrefix={arXiv},
          primaryClass={cs.CV}
    }

This work is licensed under a variant of the Creative Commons Attribution-ShareAlike 4.0 International License.

Please see the specific license.