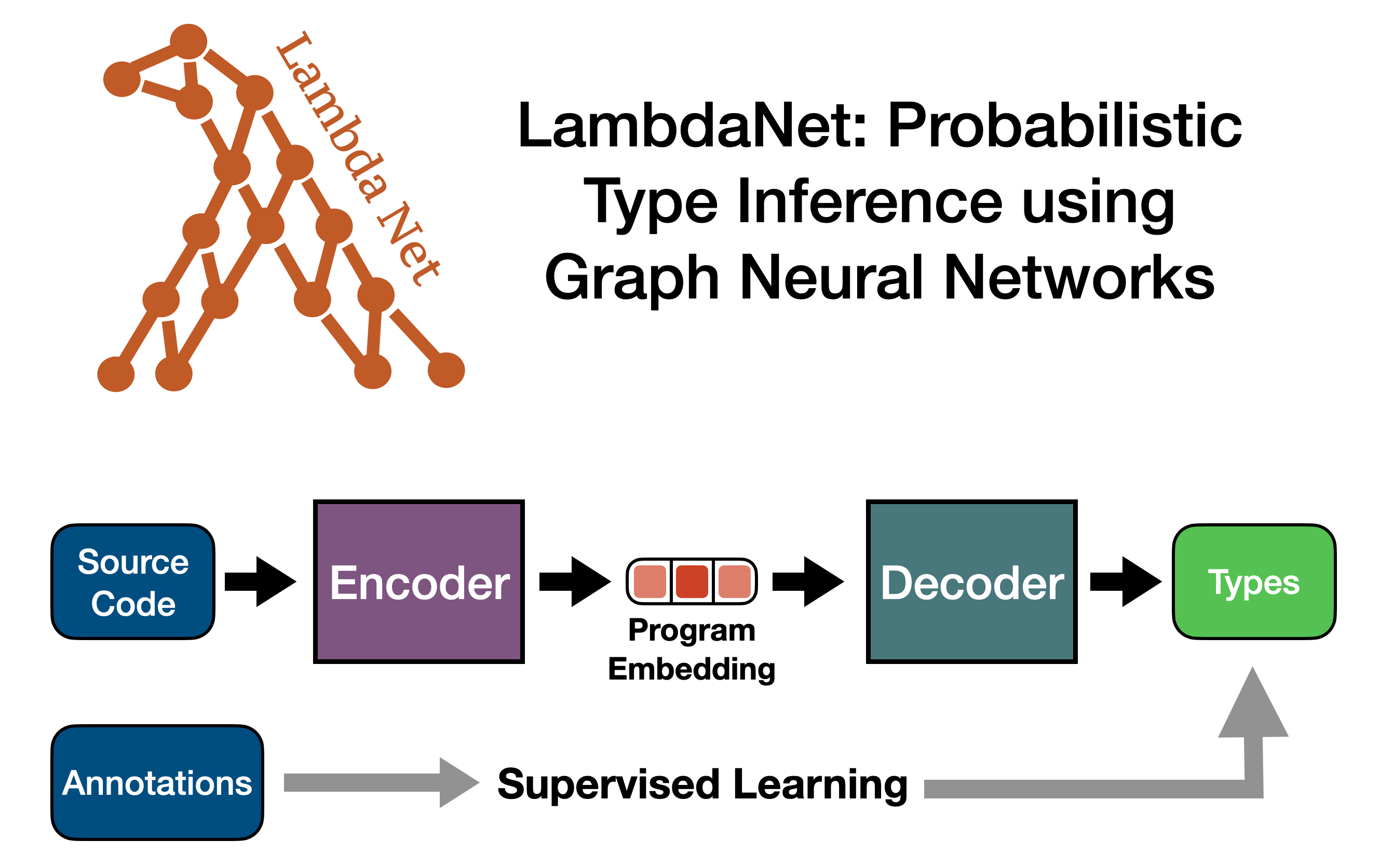

This is the source code repo for the ICLR paper LambdaNet: Probabilistic Type Inference using Graph Neural Networks. For an overview of how LambdaNet works, see our video from ICLR 2020.

This branch contains the latest improvements and features. To reproduce the results presented in the paper, please see the `ICLR20` branch.

After cloning this repo, here are the steps to reproduce our experimental results:

- Install all the dependencies (Java, sbt, TypeScript, etc.). See the "Using Docker" section below.
    - If you are not using Docker, you will need to either set the environment variable `OMP_NUM_THREADS=1` or prefix all the commands in the following steps with `export OMP_NUM_THREADS=1;`.

- To run the pre-trained model:
    - Download the model using this link (predicts user-defined types) and unzip the file.
    - To run the model in interactive mode, which outputs `(source code position, predicted type)` pairs for the specified files:
        - If it does not exist, create the file `<project root>/configs/modelPath.txt` and write the location of the model directory into it. The location should be the directory that directly contains the `model.serialized` file.
        - Under the project root, run `sbt "runMain lambdanet.TypeInferenceService"`.
        - After it finishes loading the model into memory, enter the path of the directory that contains the TypeScript files of interest. Note that in this version of LambdaNet, the model takes any existing user type annotations in the source files as part of its input and only predicts types for the locations where a type annotation is missing.
        - Note that LambdaNet currently only works with TypeScript files and assumes the files follow a TypeScript project structure, so if you want to run it on JavaScript files, you will need to change the file extensions to `.ts`.
    - Alternatively, to run the model in batched mode, which outputs human-readable HTML files and accuracy statistics, use the code from the `ICLR20` Git branch.

- To train LambdaNet from scratch:
    - Download the TypeScript projects used in our experiments and prepare them in a serialized format. This can be done using the `main` function defined in `src/main/scala/lambdanet/PrepareRepos.scala`. Check the source code and make sure you uncomment all the steps (some steps may have been commented out), then run it using `sbt "runMain lambdanet.PrepareRepos"`.
    - Check the `main` function defined in `src/main/scala/lambdanet/train/Training.scala` to adjust the training configuration if necessary, then run it using `sbt "runMain lambdanet.train.Training"`.
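The interactive-mode setup steps above can be scripted. The following is a minimal POSIX shell sketch, not part of the LambdaNet repo: the helper names `write_model_path` and `rename_js_to_ts` and the file name `lambdanet_setup.sh` are hypothetical, and the sketch assumes that LambdaNet accepts an absolute path in `configs/modelPath.txt` and that renaming `.js` files to `.ts` is enough for your project to be processed.

```shell
#!/bin/sh
# Hypothetical setup helpers for LambdaNet's interactive mode (not part of the repo).
# Source this file from the LambdaNet project root.

# Write the absolute path of the unzipped model directory into
# configs/modelPath.txt. The directory must directly contain model.serialized.
write_model_path() {
  model_dir="$1"
  if [ ! -f "$model_dir/model.serialized" ]; then
    echo "error: '$model_dir' does not directly contain model.serialized" >&2
    return 1
  fi
  abs_dir="$(cd "$model_dir" && pwd)" || return 1
  mkdir -p configs
  # Written without a trailing newline in case the path is read verbatim (an assumption).
  printf '%s' "$abs_dir" > configs/modelPath.txt
}

# LambdaNet only works with TypeScript files, so rename every *.js file under
# the given directory to *.ts in place. An existing .ts file with the same
# name is overwritten, so run this on a copy of your project.
rename_js_to_ts() {
  find "$1" -type f -name '*.js' -exec sh -c '
    for f in "$@"; do
      mv "$f" "${f%.js}.ts"
    done
  ' sh {} +
}

# Example usage (paths are placeholders):
#   . ./lambdanet_setup.sh
#   write_model_path /path/to/unzipped/model
#   rename_js_to_ts /path/to/copy/of/js-project
#   export OMP_NUM_THREADS=1
#   sbt "runMain lambdanet.TypeInferenceService"
```

Using `find ... -exec sh -c '...' sh {} +` keeps file names containing spaces intact while staying within POSIX `sh`, so the helpers do not depend on Bash.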

The TypeScript files used for the manual comparison with JSNice are located in the `data/comparison/` directory.

We also provide a Dockerfile that automatically downloads and installs all the dependencies. (If you are on Linux, you can also install the dependencies directly on your system by manually running the commands defined in the `Dockerfile`.) Here are the steps to run the pre-trained LambdaNet model inside a Docker container:

- First, make sure you have installed Docker.

- Put the pre-trained model weights somewhere accessible to the Docker container (e.g., inside the LambdaNet project root). Then configure the model path following the `modelPath.txt` instruction above.

- Under the project root, run `docker build -t lambdanet:v1 . && docker run --name lambdanet --memory 14g -t -i lambdanet:v1`. (Make sure the machine you are using has enough memory for the `docker run` command.)

- After the Docker container has successfully started, follow the steps described above.
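The memory requirement of the `docker run` command above can be checked before the image is built. Below is a minimal sketch, not part of the LambdaNet repo: the function `has_enough_memory` is hypothetical, it only works on Linux (it reads `MemTotal` from `/proc/meminfo`), and it compares against the machine's total memory rather than the memory that is currently free.

```shell
#!/bin/sh
# Hypothetical pre-flight check (not part of the LambdaNet repo).
# Succeeds if the host's total RAM is at least REQUIRED_GIB gibibytes.
# Linux only: MemTotal in /proc/meminfo is reported in KiB.
# Usage: has_enough_memory REQUIRED_GIB
has_enough_memory() {
  required_kib=$(( $1 * 1024 * 1024 ))
  total_kib="$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo 2>/dev/null)" || return 1
  [ -n "$total_kib" ] && [ "$total_kib" -ge "$required_kib" ]
}

# Example: only build and start the container if the host has at least
# 14 GiB of RAM (mirroring the --memory 14g limit).
#   has_enough_memory 14 &&
#     docker build -t lambdanet:v1 . &&
#     docker run --name lambdanet --memory 14g -t -i lambdanet:v1
```

If you care about free rather than installed memory, the same pattern works with the `MemAvailable:` field, which recent Linux kernels also report in `/proc/meminfo`.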