This is an example project showing how you could use Amazon SageMaker with PyTorch Lightning, from getting started to model training. A detailed discussion about SageMaker and PyTorch Lightning can be found in the article Neural Network on Amazon SageMaker with PyTorch Lightning.

The super cool PyTorch Lightning framework, built to simplify machine learning model development.

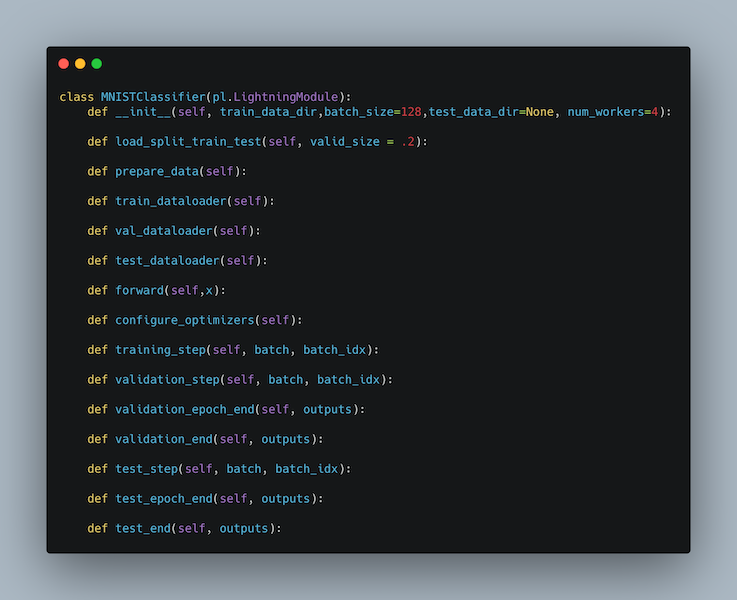

PyTorch Lightning (PL) supports a wide range of advanced features for ML researchers developing models. It is also useful when managing multiple projects, because it imposes a well-defined structure:

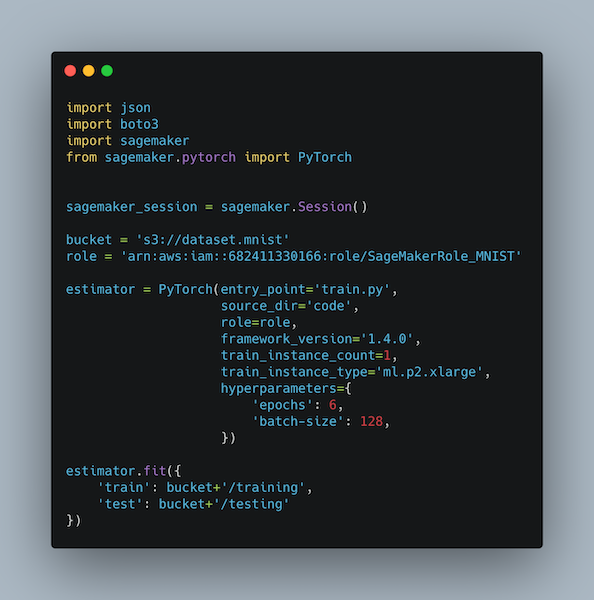

Amazon SageMaker supports model training and instance management through a number of features exposed to developers and researchers. One of the most interesting features is its ability to manage GPU instances on your behalf, driven from Python through the SageMaker Python SDK.
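A minimal sketch of launching a GPU training job from Python, assuming the SageMaker Python SDK (v2) is installed and AWS credentials are configured. The entry point `train.py`, the role ARN, the S3 URI, the `ml.p3.2xlarge` instance type, the framework/Python versions, and the hyperparameters are all illustrative assumptions, not values taken from this repository. The SDK import is deferred so that the configuration helper can be used without it.

```python
from typing import Any, Dict, Optional


def estimator_config(
    entry_point: str,
    role: str,
    instance_type: str = "ml.p3.2xlarge",  # assumed GPU instance type
    instance_count: int = 1,
    source_dir: Optional[str] = None,  # hypothetical folder with train.py (+ requirements.txt)
    hyperparameters: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Build keyword arguments for sagemaker.pytorch.PyTorch (assumed values)."""
    return {
        "entry_point": entry_point,
        "source_dir": source_dir,
        "role": role,
        "framework_version": "1.12",  # assumed PyTorch container version
        "py_version": "py38",
        "instance_count": instance_count,
        "instance_type": instance_type,
        # Passed to the entry point as command-line arguments (--epochs 10 ...).
        "hyperparameters": hyperparameters or {"epochs": 10, "batch-size": 64},
    }


def launch_training(config: Dict[str, Any], inputs: Dict[str, str]):
    """Start a training job; SageMaker provisions the instances and releases them when it ends."""
    from sagemaker.pytorch import PyTorch  # requires the SageMaker Python SDK

    estimator = PyTorch(**config)
    # Blocks until the job finishes; each key in `inputs` becomes an input channel.
    estimator.fit(inputs)
    return estimator
```

Usage might look like `launch_training(estimator_config("train.py", "arn:aws:iam::123456789012:role/MySageMakerRole"), {"training": "s3://my-bucket/data"})`, where both the ARN and the bucket are placeholders. PyTorch Lightning is not necessarily preinstalled in the PyTorch container, so a `requirements.txt` in `source_dir` is one common way to add it.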

The code contained in this repo can be run either from a SageMaker Notebook Instance or from a standard Python project. I personally prefer the latter approach because it does not require a Jupyter Notebook instance to be set up and configured, and it can create computing resources when they are needed and destroy them after use, in a serverless fashion.
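When the code runs as a standard Python project on SageMaker-managed instances, the training script receives its hyperparameters as command-line arguments and discovers its paths through environment variables such as `SM_MODEL_DIR`, `SM_CHANNEL_TRAINING` (for an input channel named `training`), and `SM_NUM_GPUS`. The sketch below shows how an entry point could read them with `argparse`; the argument names and local fallbacks are assumptions, so the script also runs outside SageMaker.

```python
import argparse
import os
from typing import Mapping, Optional, Sequence


def parse_args(
    argv: Optional[Sequence[str]] = None,
    env: Optional[Mapping[str, str]] = None,
) -> argparse.Namespace:
    """Parse hyperparameters and SageMaker paths (hypothetical argument names)."""
    env = os.environ if env is None else env
    parser = argparse.ArgumentParser()
    # Hyperparameters arrive as "--epochs 10 --batch-size 64" style arguments.
    parser.add_argument("--epochs", type=int, default=10)
    parser.add_argument("--batch-size", type=int, default=64)
    # SageMaker sets these variables inside the container; fall back to local dirs.
    parser.add_argument("--model-dir", default=env.get("SM_MODEL_DIR", "./model"))
    parser.add_argument("--train-dir", default=env.get("SM_CHANNEL_TRAINING", "./data"))
    parser.add_argument("--gpus", type=int, default=int(env.get("SM_NUM_GPUS", "0")))
    return parser.parse_args(argv)


if __name__ == "__main__":
    args = parse_args()
    # A real script would build the LightningModule and a Trainer here,
    # then save the trained model under args.model_dir.
    print(args)
```

Anything written to the model directory is uploaded by SageMaker when the job completes, after which the training instances are shut down.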

To run this project on Amazon SageMaker, spin up your Amazon SageMaker Notebook Instance, attach this GitHub repository, then run `notebook/sagemaker_deploy.ipynb`.

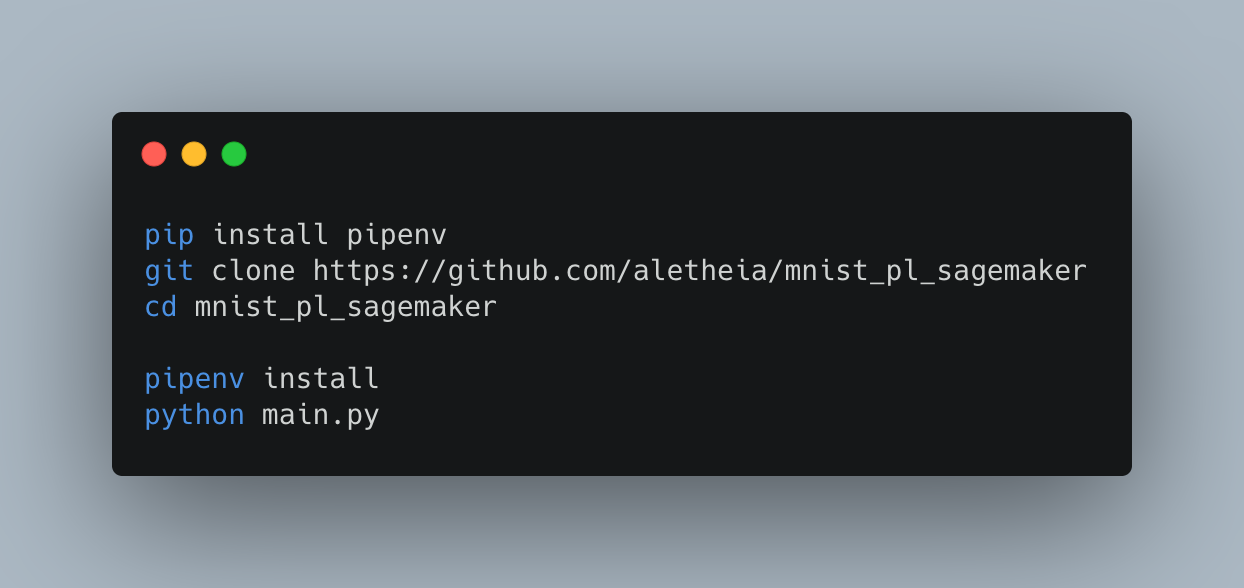

To run the project using Python

This project uses the SageMaker execution role creation script by Márcio Dos Santos.
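For context, a SageMaker execution role is an IAM role that the SageMaker service is allowed to assume. The sketch below builds the corresponding trust policy and, optionally, creates the role with `boto3`. It is not the referenced script: the role name is hypothetical, attaching the `AmazonSageMakerFullAccess` managed policy is an assumption (broad, and best narrowed for real use), and `boto3` is imported only when the role is actually created.

```python
import json
from typing import Any, Dict


def sagemaker_trust_policy() -> Dict[str, Any]:
    """Trust policy letting the SageMaker service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "sagemaker.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def create_execution_role(role_name: str = "MySageMakerExecutionRole") -> str:
    """Create the role and return its ARN (hypothetical role name)."""
    import boto3  # requires AWS credentials with IAM permissions

    iam = boto3.client("iam")
    role = iam.create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(sagemaker_trust_policy()),
    )
    # Assumed permissions policy; restrict it for production use.
    iam.attach_role_policy(
        RoleName=role_name,
        PolicyArn="arn:aws:iam::aws:policy/AmazonSageMakerFullAccess",
    )
    return role["Role"]["Arn"]
```

The returned ARN is what a training job expects as its `role` argument.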

This project is released under the MIT license. Feel free to download, modify, and distribute the code published here.