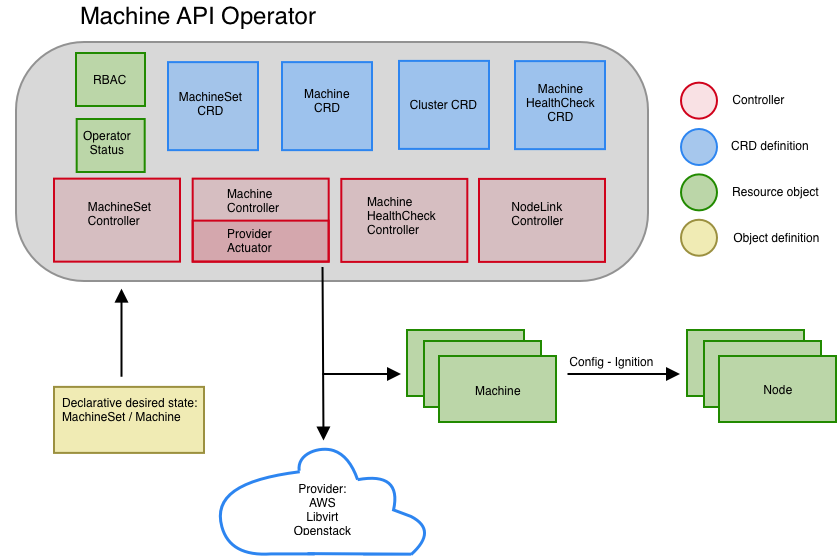

Machine API Operator

The Machine API Operator manages the lifecycle of specific-purpose CRDs, controllers and RBAC objects that extend the Kubernetes API. This allows the desired state of machines in a cluster to be conveyed declaratively.

Architecture

CRDs

- MachineSet

- Machine

- Cluster

- MachineHealthCheck

Controllers

Cluster API controllers

- MachineSet controller

  - Reconciles desired state for MachineSets by ensuring the presence of the specified number of replicas and config for a set of machines.

- Machine controller

  - Reconciles desired state for Machines by ensuring that instances with a desired config exist in a given cloud provider. Currently we support:

    - cluster-api-provider-azure. Coming soon.

    - cluster-api-provider-openstack. Coming soon.

    - cluster-api-provider-baremetal. Under development in Metal3.

- Node controller

  - Reconciles desired state of machines by matching IP addresses of machine objects with IP addresses of node objects. It annotates each node with a special label containing the machine name; the cluster-api node controller interprets that label and sets the corresponding nodeRef field of each relevant machine.
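The replica reconciliation described above for MachineSets can be pictured with a small sketch. This is illustrative Python, not the controller's actual Go code: the function name `replica_delta`, its inputs, and the choice of which surplus machines to delete are all assumptions made for the example.

```python
def replica_delta(desired_replicas, current_machines):
    """Return (number_to_create, machines_to_delete) for one reconcile pass.

    desired_replicas: replica count requested by a MachineSet (int >= 0).
    current_machines: names of machines currently owned by that MachineSet.

    Hypothetical helper: the real controller's deletion policy is not
    described in this document, so surplus machines are simply taken
    from the end of the list.
    """
    if desired_replicas < 0:
        raise ValueError("desired_replicas must be non-negative")

    missing = desired_replicas - len(current_machines)
    if missing > 0:
        # Too few machines: create the difference, delete nothing.
        return missing, []

    # Enough or too many machines: delete everything past the desired count.
    return 0, list(current_machines[desired_replicas:])
```

Calling `replica_delta(3, ["a"])` asks for two new machines, while `replica_delta(1, ["a", "b", "c"])` marks `"b"` and `"c"` for deletion.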

Nodelink controller

- Reconciles desired state of machines by matching IP addresses of machine objects with IP addresses of node objects and annotates nodes with a special machine annotation containing the machine name. The cluster-api node controller interprets the annotation and sets the corresponding nodeRef field of each relevant machine.

- Build:

  $ make nodelink-controller
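The IP-matching step can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the nodelink controller's Go implementation: machines and nodes are modeled as plain dictionaries from name to a list of IP addresses, and the function name `link_nodes_to_machines` is hypothetical. The sketch covers only the matching; writing the annotation onto the node is left out.

```python
def link_nodes_to_machines(machines, nodes):
    """Map node name -> machine name for nodes that share an IP with a machine.

    machines: dict of machine name -> iterable of IP address strings.
    nodes:    dict of node name    -> iterable of IP address strings.
    """
    # Index machines by IP so each node lookup is a dictionary hit.
    ip_to_machine = {}
    for machine_name, addresses in machines.items():
        for ip in addresses:
            ip_to_machine[ip] = machine_name

    links = {}
    for node_name, addresses in nodes.items():
        for ip in addresses:
            if ip in ip_to_machine:
                # First matching address wins; unmatched nodes are skipped.
                links[node_name] = ip_to_machine[ip]
                break
    return links
```

A node with no address in common with any machine is simply absent from the result, which mirrors the idea that only matched nodes receive the machine annotation.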

Machine healthcheck controller

- Reconciles desired state for MachineHealthChecks by ensuring that machines targeted by machineHealthCheck objects are healthy or remediated otherwise.

- Build:

  $ make machine-healthcheck

- How to test it:

  - Create a machineset and locate its selector. Assume the selector corresponds to the following list of match labels:

        machine.openshift.io/cluster-api-cluster: cluster
        machine.openshift.io/cluster-api-machine-role: worker
        machine.openshift.io/cluster-api-machine-type: worker
        machine.openshift.io/cluster-api-machineset: cluster-worker-us-east-1a

  - Define a MachineHealthCheck manifest that watches all machines of the machineset based on its match labels:

        apiVersion: healthchecking.openshift.io/v1alpha1
        kind: MachineHealthCheck
        metadata:
          name: example
          namespace: default
        spec:
          selector:
            matchLabels:
              machine.openshift.io/cluster-api-cluster: cluster
              machine.openshift.io/cluster-api-machine-role: worker
              machine.openshift.io/cluster-api-machine-type: worker
              machine.openshift.io/cluster-api-machineset: cluster-worker-us-east-1a

  - By default, the machine health check controller recognizes only the NotReady condition and will remove an unhealthy machine after 5 minutes. If you want to customize the unhealthy conditions, you can create a node-unhealthy-conditions config map, for example:

        apiVersion: v1
        kind: ConfigMap
        metadata:
          name: node-unhealthy-conditions
          namespace: openshift-machine-api
        data:
          conditions: |
            items:
            - name: NetworkUnavailable
              timeout: 5m
              status: True

  - Pick a node that is managed by one of the machineset's machines.

  - SSH into the node, then disable and stop the kubelet service:

        # systemctl disable kubelet
        # systemctl stop kubelet

  - After some time the node will transition into the NotReady state.

  - Watch the machine-healthcheck controller logs to see how it notices a node in the NotReady state and starts to reconcile the node.

  - After some time the current node instance is terminated and a new instance is created, followed by a new node joining the cluster and becoming Ready.
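The two decisions the health check makes in the steps above (does a machine fall under the selector, and has an unhealthy condition lasted past its timeout) can be sketched in Python. This is an illustration under stated assumptions, not the controller's Go code: the function names, the dictionary shapes for conditions and rules, and the field name `last_transition` are hypothetical. The rule used in the usage note mirrors the NetworkUnavailable example from the config map.

```python
from datetime import datetime, timedelta


def selector_matches(match_labels, machine_labels):
    """True if every matchLabels entry is present on the machine with the same value."""
    return all(machine_labels.get(key) == value for key, value in match_labels.items())


def needs_remediation(conditions, unhealthy_rules, now):
    """True if any node condition has matched an unhealthy rule for at least its timeout.

    conditions:      list of {"type": str, "status": str, "last_transition": datetime}
    unhealthy_rules: list of {"name": str, "status": str, "timeout": timedelta}
    now:             current time as a datetime
    """
    for rule in unhealthy_rules:
        for condition in conditions:
            same_condition = (
                condition["type"] == rule["name"]
                and condition["status"] == rule["status"]
            )
            if same_condition and now - condition["last_transition"] >= rule["timeout"]:
                return True
    return False
```

For example, with a rule `{"name": "NetworkUnavailable", "status": "True", "timeout": timedelta(minutes=5)}`, a node whose NetworkUnavailable condition has been True for six minutes would be flagged, while one that flipped a minute ago would not.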

Creating machines

You can create a new machine by applying a manifest representing an instance of the machine CRD.

The machine.openshift.io/cluster-api-cluster label will be used by the controllers to look up the right cloud instance.

You can set other labels to provide a convenient way for users and consumers to retrieve groups of machines:

machine.openshift.io/cluster-api-machine-role: worker

machine.openshift.io/cluster-api-machine-type: worker
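As a rough illustration of where these labels sit in a machine manifest, the following Python sketch builds one as a dictionary and prints it as JSON. Several details are assumptions rather than facts taken from this document: the `machine.openshift.io/v1beta1` API version, the `openshift-machine-api` default namespace, the helper name `machine_manifest`, and the empty `providerSpec`, which in practice must carry provider-specific configuration.

```python
import json


def machine_manifest(name, cluster, role="worker", machine_type="worker",
                     namespace="openshift-machine-api"):
    """Build a skeleton Machine manifest carrying the labels discussed above."""
    return {
        "apiVersion": "machine.openshift.io/v1beta1",  # assumed API group/version
        "kind": "Machine",
        "metadata": {
            "name": name,
            "namespace": namespace,  # assumed namespace
            "labels": {
                # Used by the controllers to look up the right cloud instance.
                "machine.openshift.io/cluster-api-cluster": cluster,
                # Optional grouping labels for users and consumers.
                "machine.openshift.io/cluster-api-machine-role": role,
                "machine.openshift.io/cluster-api-machine-type": machine_type,
            },
        },
        "spec": {
            # Placeholder: real manifests need provider-specific settings here.
            "providerSpec": {"value": {}},
        },
    }


if __name__ == "__main__":
    print(json.dumps(machine_manifest("example-worker", "cluster"), indent=2))
```

Because kubectl accepts JSON as well as YAML, output of this shape could be piped to `kubectl apply -f -` once the provider-specific section is filled in.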

Dev

- Generate code (if needed):

  $ make generate

- Build:

  $ make build

- Run:

  $ ./bin/machine-api-operator start --kubeconfig ${HOME}/.kube/config --images-json=pkg/operator/fixtures/images.json

- Image:

  $ make image

The Machine API Operator is designed to work in conjunction with the Cluster Version Operator. You can see it in action by running an OpenShift Cluster deployed by the Installer.

However, you can run it in a vanilla Kubernetes cluster by pre-creating some assets:

- Create an openshift-machine-api-operator namespace

- Create a CRD Status definition

- Create a CRD Machine definition

- Create a CRD MachineSet definition

- Create an Installer config

- Then you can run it as a deployment

- You should then be able to deploy a machineSet object

Machine API operator with Kubemark over Kubernetes

INFO: For development and testing purposes only

- Deploy MAO over Kubernetes:

  $ kustomize build | kubectl apply -f -

- Deploy Kubemark actuator prerequisites:

  $ kustomize build config | kubectl apply -f -

- Create the cluster infrastructure.config.openshift.io resource to tell the MAO to deploy the kubemark provider:

      apiVersion: apiextensions.k8s.io/v1beta1
      kind: CustomResourceDefinition
      metadata:
        name: infrastructures.config.openshift.io
      spec:
        group: config.openshift.io
        names:
          kind: Infrastructure
          listKind: InfrastructureList
          plural: infrastructures
          singular: infrastructure
        scope: Cluster
        versions:
        - name: v1
          served: true
          storage: true
      ---
      apiVersion: config.openshift.io/v1
      kind: Infrastructure
      metadata:
        name: cluster
      status:
        platform: kubemark

  The file is already present under config/kubemark-config-infra.yaml, so it's sufficient to run:

  $ kubectl apply -f config/kubemark-config-infra.yaml

CI & tests

Run unit tests:

$ make test

Run e2e-aws-operator tests. These tests assume that a cluster deployed by the Installer is up and running and that a KUBECONFIG environment variable is set:

$ make test-e2e

Tests are located in the machine-api-operator repository and executed in the prow CI system. A link to failing tests is published as a comment on the PR by @openshift-ci-robot. The current test status for all OpenShift components can be found at https://deck-ci.svc.ci.openshift.org.

CI configuration is stored in the openshift/release repository and is split into 4 files:

- cluster/ci/config/prow/plugins.yaml - says which prow plugins are available and where job config is stored

- ci-operator/config/openshift/machine-api-operator/master.yaml - configuration for machine-api-operator component repository

- ci-operator/jobs/openshift/machine-api-operator/openshift-machine-api-operator-master-presubmits.yaml - prow jobs configuration for presubmits

- ci-operator/jobs/openshift/machine-api-operator/openshift-machine-api-operator-master-postsubmits.yaml - prow jobs configuration for postsubmits

More information about those files can be found in the ci-operator onboarding file.