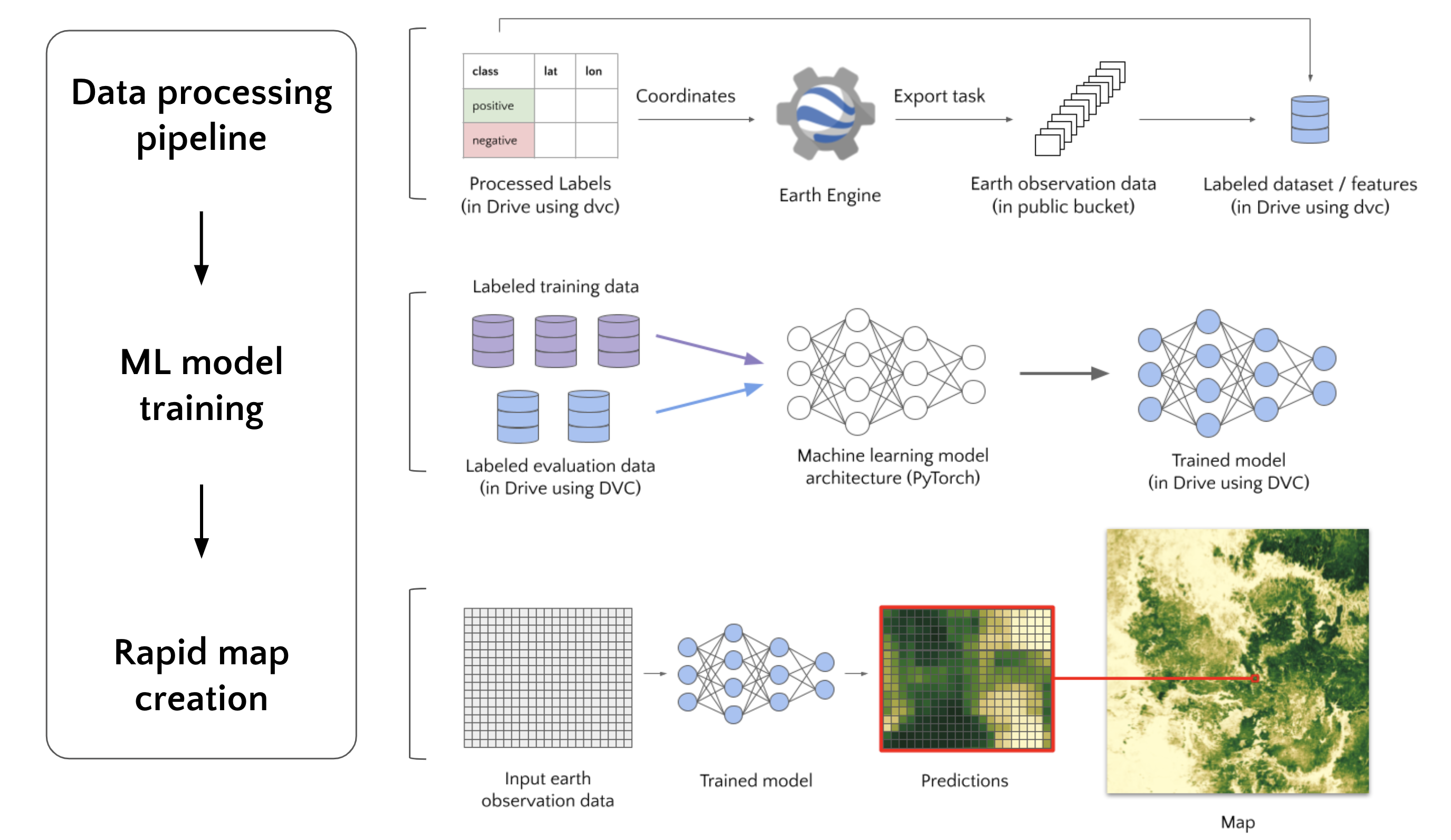

Rapid map creation with machine learning and earth observation data.

Example projects: Cropland, Buildings, Maize

Colab notebook tutorial demonstrating data exploration, model training, and inference over a small region. (video)

Prerequisites:

- Github access token (obtained here)

- Forked OpenMapFlow repository

- Basic Python knowledge

To create your own maps with OpenMapFlow, you need to:

- Generate your own OpenMapFlow project. This will allow you to:

  - Add your own labeled data,

  - Train a model using that labeled data, and

  - Create a map using the trained model.

A project can be generated by either following the below documentation OR running the above Colab notebook.

Prerequisites:

- Github repository - where your project will be stored

- Google/Gmail based account - for accessing Google Drive and Google Cloud

- Google Cloud Project (create) - for accessing Cloud resources for creating a map (additional info)

- Google Cloud Service Account Key (generate) - for deploying Cloud resources from Github Actions

Once all prerequisites are satisfied, inside your Github repository run:

pip install openmapflow

openmapflow generate

The command will prompt for project configuration such as project name and Google Cloud Project ID. Several prompts will have defaults shown in square brackets. These will be used if nothing is entered.
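The default behaviour described above can be illustrated with a minimal sketch. This is not OpenMapFlow's actual implementation; `prompt_with_default`, the `reader` parameter, and the example values are hypothetical names used only to show how a bracketed default is applied when nothing is entered.

```python
from typing import Callable


def prompt_with_default(
    question: str, default: str, reader: Callable[[str], str] = input
) -> str:
    """Ask a question, showing the default in square brackets.

    Hypothetical helper for illustration; not OpenMapFlow's actual code.
    """
    answer = reader(f"{question} [{default}]: ").strip()
    # An empty answer (just pressing Enter) falls back to the default
    return answer if answer else default


# Simulate pressing Enter for the first prompt and typing a value for the second
project_name = prompt_with_default("Project name", "my-project", reader=lambda _: "")
gcloud_id = prompt_with_default(
    "Google Cloud Project ID", "none", reader=lambda _: "my-gcp-id"
)
print(project_name, gcloud_id)
```

Passing `reader` explicitly keeps the sketch testable without a terminal; with the default `input`, the same function would prompt interactively.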

After all configuration is set, the following project structure will be generated:

<YOUR PROJECT NAME>

│   README.md
│   datasets.py             # Dataset definitions (how labels should be processed)
│   evaluate.py             # Template script for evaluating a model
│   openmapflow.yaml        # Project configuration file
│   train.py                # Template script for training a model
│
└─── .dvc/                  # https://dvc.org/doc/user-guide/what-is-dvc
│
└─── .github
│   │
│   └─── workflows          # Github actions
│       │   deploy.yaml     # Automated Google Cloud deployment of trained models
│       │   test.yaml       # Automated integration tests of labeled data
│
└─── data
    │   raw_labels/         # User added labels
    │   datasets/           # ML ready datasets (labels + earth observation data)
    │   models/             # Models trained using datasets
    │   raw_labels.dvc      # Reference to a version of raw_labels/
    │   datasets.dvc        # Reference to a version of datasets/
    │   models.dvc          # Reference to a version of models/
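As a quick sanity check after generation, a short script can confirm that the files listed above exist. This is a sketch, not part of OpenMapFlow: `EXPECTED_PATHS` and `missing_project_paths` are hypothetical names, and the path list is copied from the structure above (the `.dvc` reference files under `data` are left out because they may only appear once data has been added).

```python
from pathlib import Path
from typing import List

# Paths taken from the generated project structure listed above
EXPECTED_PATHS = [
    "README.md",
    "datasets.py",
    "evaluate.py",
    "openmapflow.yaml",
    "train.py",
    ".dvc",
    ".github/workflows/deploy.yaml",
    ".github/workflows/test.yaml",
    "data",
]


def missing_project_paths(project_root: Path) -> List[str]:
    """Return the expected paths that do not exist under project_root."""
    return [p for p in EXPECTED_PATHS if not (project_root / p).exists()]


if __name__ == "__main__":
    # Run from inside the generated project directory
    missing = missing_project_paths(Path.cwd())
    print("Missing:", missing if missing else "nothing")
```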

Github Actions Secrets: Being able to pull and deploy data inside Github Actions requires access to Google Cloud. To allow the Github action to access Google Cloud, add a new repository secret (instructions).

- In step 5 of the instructions, name the secret: GCP_SA_KEY

- In step 6, enter your Google Cloud Service Account Key

After this, the Github actions should run successfully.
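A malformed key is a common reason for a secret not to work, so it can help to check the key file before pasting it into the secret. The sketch below is an illustration only, not part of OpenMapFlow: `key_problems` and `REQUIRED_FIELDS` are hypothetical names, and the check covers only a few fields that standard Google Cloud service account key files contain.

```python
import json
from typing import List

# A few fields present in a standard Google Cloud service account key file
REQUIRED_FIELDS = ["type", "project_id", "private_key", "client_email"]


def key_problems(key_json: str) -> List[str]:
    """Return a list of problems found in a service account key string."""
    try:
        key = json.loads(key_json)
    except json.JSONDecodeError as error:
        return [f"not valid JSON: {error.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value is not an object"]
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in key]
    # A missing "type" is already reported above; only flag a wrong value here
    if key.get("type") not in (None, "service_account"):
        problems.append("type is not 'service_account'")
    return problems


# Placeholder key with fake values, for demonstration only
fake_key = json.dumps(
    {
        "type": "service_account",
        "project_id": "demo",
        "private_key": "placeholder",
        "client_email": "demo@demo.iam.gserviceaccount.com",
    }
)
print(key_problems(fake_key))
```

An empty list means none of the checked problems were found; it does not prove the key has the permissions the deployment needs.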

GCloud Bucket: A Google Cloud bucket must be created for the labeled earth observation files. Assuming gcloud is installed, run:

gcloud auth login

gsutil mb -l <YOUR_OPENMAPFLOW_YAML_GCLOUD_LOCATION> gs://<YOUR_OPENMAPFLOW_YAML_BUCKET_LABELED_EO>

Prerequisites:

Add a reference to an already existing dataset in your datasets.py:

from openmapflow.datasets import GeowikiLandcover2017, TogoCrop2019

datasets = [GeowikiLandcover2017(), TogoCrop2019()]

Download and push datasets:

openmapflow create-datasets # Download datasets

dvc commit && dvc push # Push data to version control

git add .

git commit -m'Created new dataset'

git push

Data can be added by either following the below documentation OR running the above Colab notebook.

Prerequisites:

- Generated OpenMapFlow project

- EarthEngine account - for accessing Earth Engine and pulling satellite data

- Raw labels - a file (csv/shp/zip/txt) containing a list of labels and their coordinates (latitude, longitude)

- Move raw label files into project's data/raw_labels folder

- Convert raw labels to a standardized dataframe using the LabeledDataset class in datasets.py, for example:

label_col = "is_crop"

class TogoCrop2019(LabeledDataset):
    def load_labels(self) -> pd.DataFrame:
        # Read in raw label file
        df = pd.read_csv(PROJECT_ROOT / DataPaths.RAW_LABELS / "Togo_2019.csv")

        # Rename coordinate columns to be used for getting Earth observation data
        df.rename(columns={"latitude": LAT, "longitude": LON}, inplace=True)

        # Set start and end date for Earth observation data
        df[START], df[END] = date(2019, 1, 1), date(2020, 12, 31)

        # Set consistent label column
        df[label_col] = df["crop"].astype(float)

        # Split labels into train, validation, and test sets
        df[SUBSET] = train_val_test_split(index=df.index, val=0.2, test=0.2)

        # Set country column for later analysis
        df[COUNTRY] = "Togo"

        return df

datasets: List[LabeledDataset] = [TogoCrop2019(), ...]

Run dataset creation:

earthengine authenticate # For getting new earth observation data

gcloud auth login # For getting cached earth observation data

openmapflow create-datasets # Initiates or checks progress of dataset creation

dvc commit && dvc push # Push new data to data version control

git add .

git commit -m'Created new dataset'

git push

A model can be trained by either following the below documentation OR running the above Colab notebook.

Prerequisites:

# Pull in latest data

dvc pull

# Set model name, train model, record test metrics

export MODEL_NAME=<YOUR MODEL NAME>

python train.py --model_name $MODEL_NAME

python evaluate.py --model_name $MODEL_NAME

# Push new models to data version control

dvc commit

dvc push

# Make a Pull Request to the repository

git checkout -b "$MODEL_NAME"

git add .

git commit -m "$MODEL_NAME"

git push --set-upstream origin "$MODEL_NAME"

After merging the pull request, the model will be deployed to Google Cloud.

Prerequisites:

Only available through the above Colab notebook. The cloud architecture must be deployed using the deploy.yaml Github Action.

from openmapflow.datasets import TogoCrop2019

df = TogoCrop2019().load_df(to_np=True)

x = df.iloc[0]["eo_data"]

y = df.iloc[0]["class_prob"]
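The snippet above reads one example's Earth observation data and its label from the dataframe. As a hedged illustration of consuming float labels such as `class_prob`, the sketch below maps them to binary classes. The helper name `to_binary_labels` and the 0.5 threshold are assumptions made for this example, not part of OpenMapFlow.

```python
from typing import List


def to_binary_labels(class_probs: List[float], threshold: float = 0.5) -> List[int]:
    """Map float labels to 0/1 (hypothetical helper; the threshold is an assumption)."""
    return [1 if prob >= threshold else 0 for prob in class_probs]


# Example float labels, invented for illustration
print(to_binary_labels([0.0, 1.0, 0.75, 0.25]))  # [0, 1, 1, 0]
```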